AI Meeting Context vs. Your Notion Workspace: Do Teams Actually Need Both in 2026?

Jan 13, 2026


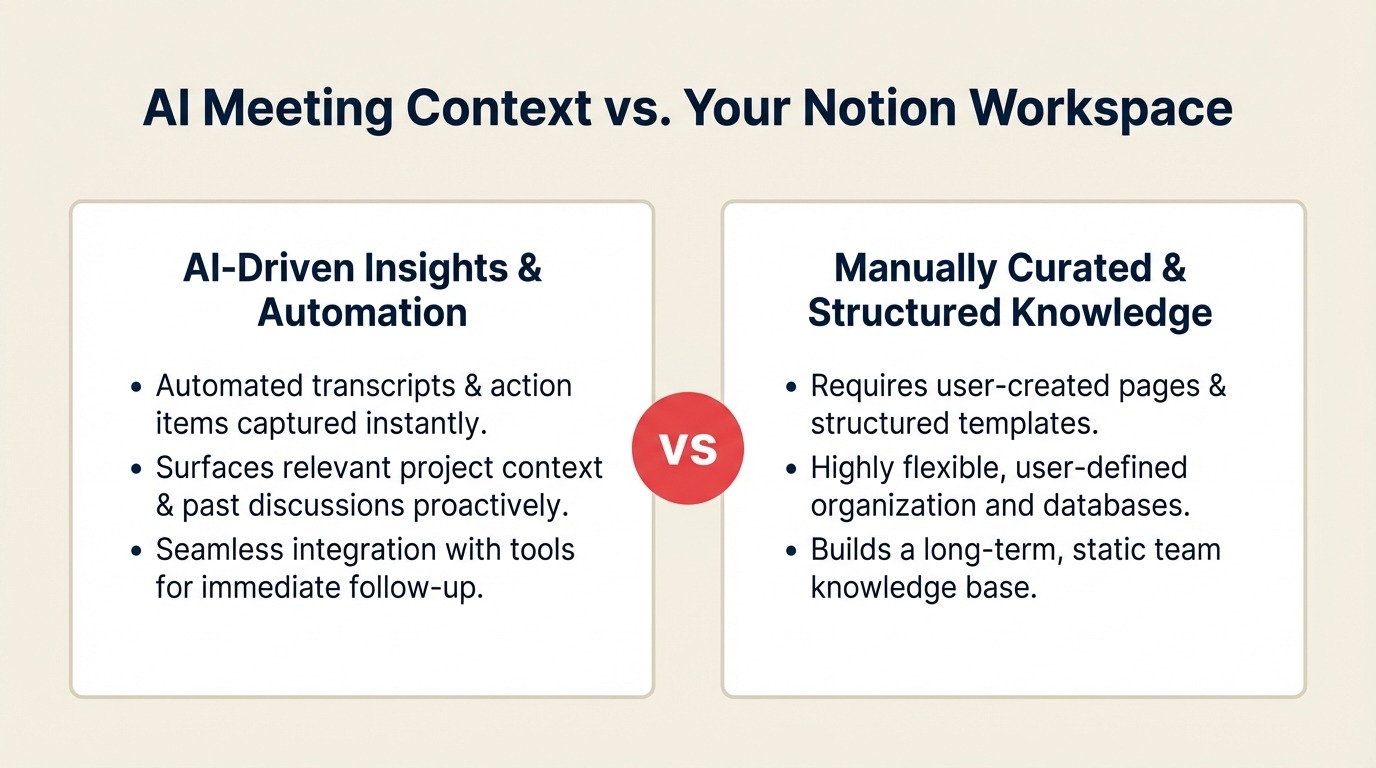

Every team lead managing a remote or hybrid team has some version of the same stack: a notetaker app that captures what happened in meetings, and a knowledge base where the team keeps the stuff that needs to outlive the meeting. It's not elegant, but it works. The question is whether it will still work now that tools like Granola are trying to do both.

According to TechCrunch's coverage of Granola's Series C funding, the AI meeting notetaker raised $125 million in March 2026, pushing its valuation to $1.5 billion. The funding announcement came alongside a significant product expansion: Granola launched Spaces, a team workspace feature with folder structures and access controls that now competes directly with tools like Notion and Confluence for institutional knowledge management. The Next Web confirmed the shift, describing it as Granola expanding from a personal productivity tool to an enterprise AI application.

Granola also released a personal API and an enterprise API, letting organizations pipe meeting context into their own AI workflows. These follow a Model Context Protocol (MCP) server that launched in February 2026. Enterprise customers already using the product include Vanta, Gusto, Thumbtack, Asana, Cursor, and Mistral AI.

For team leads, the funding round is less interesting than what Spaces represents. It's a direct challenge to the assumption that meeting context and persistent knowledge are different enough to warrant different tools. If you haven't audited how much of your current meeting load is actually generating useful knowledge versus just consuming time, a structured meeting audit is worth running before you add another tool to the mix.

Three Workflows Where This Actually Changes Something

Abstract comparisons between categories don't help much when you're the person deciding what the team uses on Monday morning. Here's how the lines shift across three common team scenarios:

Scenario 1: Weekly team syncs that generate decisions and follow-ups. This is where AI meeting context tools genuinely shine. A notetaker like Granola captures what was discussed, who committed to what, and which questions were left open, all in context and searchable, so you can pull up what was said three weeks ago. Traditional knowledge bases don't handle this well because someone has to translate each meeting outcome into a document, and that step gets skipped under time pressure. If your team runs structured weekly syncs where decisions need to be traceable, an AI context tool delivers value that a Notion page can't replicate without significant manual effort. A lightweight decision log practice can bridge the gap while you evaluate whether a full AI context tool is worth the cost.

Scenario 2: Onboarding new team members with institutional knowledge. This is where traditional knowledge bases still clearly win. Onboarding documentation, process guides, team norms, and role-specific playbooks need to be authored, maintained, and structured for someone who wasn't in any of the original meetings. Meeting transcripts and contextual summaries aren't a substitute. A new hire can't reconstruct the "how we do annual planning" document from three years of Granola search results. The knowledge base exists to make institutional knowledge transferable and navigable. That's a different job than capturing what happened last Tuesday. If onboarding consistency is the core problem you're solving, a manager's onboarding checklist addresses the documentation gap more directly than switching knowledge tools.

Scenario 3: Cross-functional projects with multiple handoffs. This is the gray area where the stack decision gets genuinely complicated. If your team is running a project that involves regular syncs with engineering, finance, and a customer, and each of those meetings generates context that informs the next phase, a meeting context tool keeps the timeline coherent in a way a static doc can't. But the final outputs (specs, sign-off documents, go-live criteria) need to live somewhere structured and version-controlled that everyone can find without remembering which meeting produced them. Right now, this typically requires both tools. Granola Spaces is betting it can close that gap, but the feature is still early.

A Simple Framework for the Stack Decision

Instead of choosing based on feature lists, think about two dimensions: the type of knowledge involved, and who needs to access it. It's also worth understanding what AI meeting tools actually do well before selecting one, because the category has real capability differences that aren't obvious from vendor marketing.

Episodic knowledge (what happened in a specific meeting, what was decided on a specific date, who said what during a negotiation) has a temporal structure that AI context tools handle well. The search is conversational ("what did we decide about the Q2 budget in February?"), not hierarchical. Meeting notes live naturally here.

Evergreen knowledge (how we run quarterly planning, what our escalation process is, how new hires get onboarded, what the product positioning is) doesn't have a timestamp that matters. It needs to be findable by someone who wasn't in any of the founding meetings, and it needs to be maintained as the team's practices evolve. Knowledge bases are built for this.

The audience dimension matters too. Personal and small-team use tolerate more fragmentation. A team lead who keeps their own Granola notes alongside a shared Notion wiki can manage the context-switching. Broader organizational use (onboarding, compliance, cross-functional documentation) needs the kind of structure and accessibility that a dedicated knowledge base provides.

If most of your knowledge is episodic and most of your team already lives in meetings, a tool like Granola may carry more of the load than you'd expect. If your team runs on documented processes and you're responsible for bringing new people up to speed quickly, the knowledge base isn't going away.
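The two dimensions above can be written down as a small decision rule and run against an inventory of what your team actually keeps. This is an illustrative sketch, not a feature of Granola or Notion: `KnowledgeItem`, `suggested_home`, and the audience labels are hypothetical names, and the rule that anything evergreen or organization-wide belongs in the knowledge base is one reading of the framework, not a hard threshold.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Literal

# Hypothetical labels for the two dimensions of the framework.
Kind = Literal["episodic", "evergreen"]
Audience = Literal["self", "small_team", "organization"]


@dataclass(frozen=True)
class KnowledgeItem:
    name: str
    kind: Kind
    audience: Audience


def suggested_home(item: KnowledgeItem) -> str:
    """Suggest where one piece of team knowledge should live (assumed rule)."""
    if item.kind == "evergreen" or item.audience == "organization":
        # Must be findable and maintained by people who weren't in the meeting.
        return "knowledge base"
    # Episodic knowledge used by one person or a small team can stay in meeting context.
    return "meeting context tool"


def summarize(items: list[KnowledgeItem]) -> Counter:
    """Count how many items in an inventory land in each tool."""
    return Counter(suggested_home(item) for item in items)


# Made-up inventory for illustration only.
example_items = [
    KnowledgeItem("Q2 budget decision from the February sync", "episodic", "small_team"),
    KnowledgeItem("How we run quarterly planning", "evergreen", "organization"),
    KnowledgeItem("Escalation process", "evergreen", "small_team"),
    KnowledgeItem("My notes from a vendor negotiation", "episodic", "self"),
]

print(summarize(example_items))
```

The value is less in the rule itself than in forcing each item to be labeled on both dimensions; an inventory that skews heavily toward one tool is the signal the paragraph above describes.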

What to Test with Your Team This Quarter

Before making any stack change based on a product launch, run a controlled test. Here's a practical approach:

Pick one project, not the whole team. Choose a cross-functional initiative that involves three or more people and runs for at least six weeks. This gives you enough scope and duration to see how meeting context compounds over time.

Use Granola (or your current notetaker) for all sync capture. Don't manually write meeting notes for this project. Let the AI context tool handle it entirely. At the six-week mark, have a team member who wasn't in every meeting try to reconstruct the key decisions and rationale using only the tool's search and context features.
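One way to make the six-week check less subjective is to have someone who attended most meetings list the project's key decisions, then score how many the non-attendee recovers. The sketch assumes a reviewer has already normalized both lists into short, matching labels; `reconstruction_recall` is a hypothetical helper, and the decisions are invented examples.

```python
def reconstruction_recall(key_decisions: set[str], reconstructed: set[str]) -> float:
    """Share of the project's key decisions that a non-attendee recovered."""
    if not key_decisions:
        raise ValueError("key_decisions must not be empty")
    return len(key_decisions & reconstructed) / len(key_decisions)


# Ground truth written by someone who attended most syncs (invented examples).
key_decisions = {
    "ship v1 without SSO",
    "finance approves budget in March",
    "customer pilot starts in week 4",
    "engineering owns the data migration",
}
# What the non-attendee found using only the meeting tool's search.
reconstructed = {
    "ship v1 without SSO",
    "customer pilot starts in week 4",
    "engineering owns the data migration",
    "weekly sync moved to Thursday",  # found, but not a key decision, so not counted
}

recall = reconstruction_recall(key_decisions, reconstructed)
missed = sorted(key_decisions - reconstructed)
print(f"Recovered {recall:.0%} of key decisions; missed: {missed}")
```

The list of misses is often more useful than the percentage, because it shows which kinds of decisions never made it into searchable meeting context.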

Separately, audit your Notion (or equivalent) usage for the same project. How many pages were actually created and maintained? Which ones got referenced by more than one person? Which ones were created once and never touched again?
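The three audit questions can be tallied from whatever page history you can export or collect by hand. The sketch below does not call the Notion API. `PageRecord` is a hypothetical structure you would fill in yourself, and "never touched" is read here as no edits after creation and no readers other than the author.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PageRecord:
    """One project page, filled in from an export or by hand (hypothetical shape)."""
    title: str
    author: str
    edits_after_creation: list[date] = field(default_factory=list)
    referenced_by: set[str] = field(default_factory=set)  # people who opened or linked it


def audit(pages: list[PageRecord]) -> dict[str, int]:
    """Answer the three audit questions for one project's pages."""
    return {
        "created": len(pages),
        "maintained": sum(1 for p in pages if p.edits_after_creation),
        "referenced_by_2_plus": sum(1 for p in pages if len(p.referenced_by) > 1),
        "never_touched": sum(
            1
            for p in pages
            # No later edits, and nobody but the author ever opened or linked it.
            if not p.edits_after_creation and not (p.referenced_by - {p.author})
        ),
    }


# Invented example pages for a six-week project.
pages = [
    PageRecord("Project brief", "ana", [date(2026, 1, 20), date(2026, 2, 3)], {"ana", "ben", "chen"}),
    PageRecord("Kickoff notes", "ben", [], {"ben"}),
    PageRecord("Vendor comparison", "chen", [], {"chen", "dev"}),
]

print(audit(pages))
```

A high `never_touched` count relative to `created` is the kind of signal the comparison in the next step looks for.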

Compare where information actually lived. If the critical decisions and context were consistently findable in the meeting tool, and the Notion pages were mostly unused, that's a signal that your team's knowledge is more episodic than you assumed. If the Notion pages were the reference people actually used, the knowledge base is doing real work.

Granola's expansion into Spaces doesn't automatically make your current stack wrong. But it does mean the "one tool for meetings, one tool for knowledge" assumption deserves a fresh look, especially if you're already paying for tools that overlap more than they should. If you're evaluating Notion specifically as part of that review, the autonomous AI agent features Notion released in early 2026 change the knowledge base value equation in ways that are directly relevant to this comparison.

Learn More

- Meeting Notes Hit a $1.5B Valuation: Enterprise AI Context Race — The CEO-level signal behind Granola's Series C and what it means for knowledge infrastructure

- Your Meetings Are Now a Programmable Data Source — How Granola's MCP server turns meeting context into a live data source for AI agents

- You Can Now Give Notion a 20-Minute Task and Walk Away — How autonomous Notion agents compare as an alternative approach to the same knowledge problem

- AI Onboarding Checklist 2026 — Integrating meeting intelligence into how new team members build institutional context

Co-Founder & CMO, Rework