Jan 16, 2026
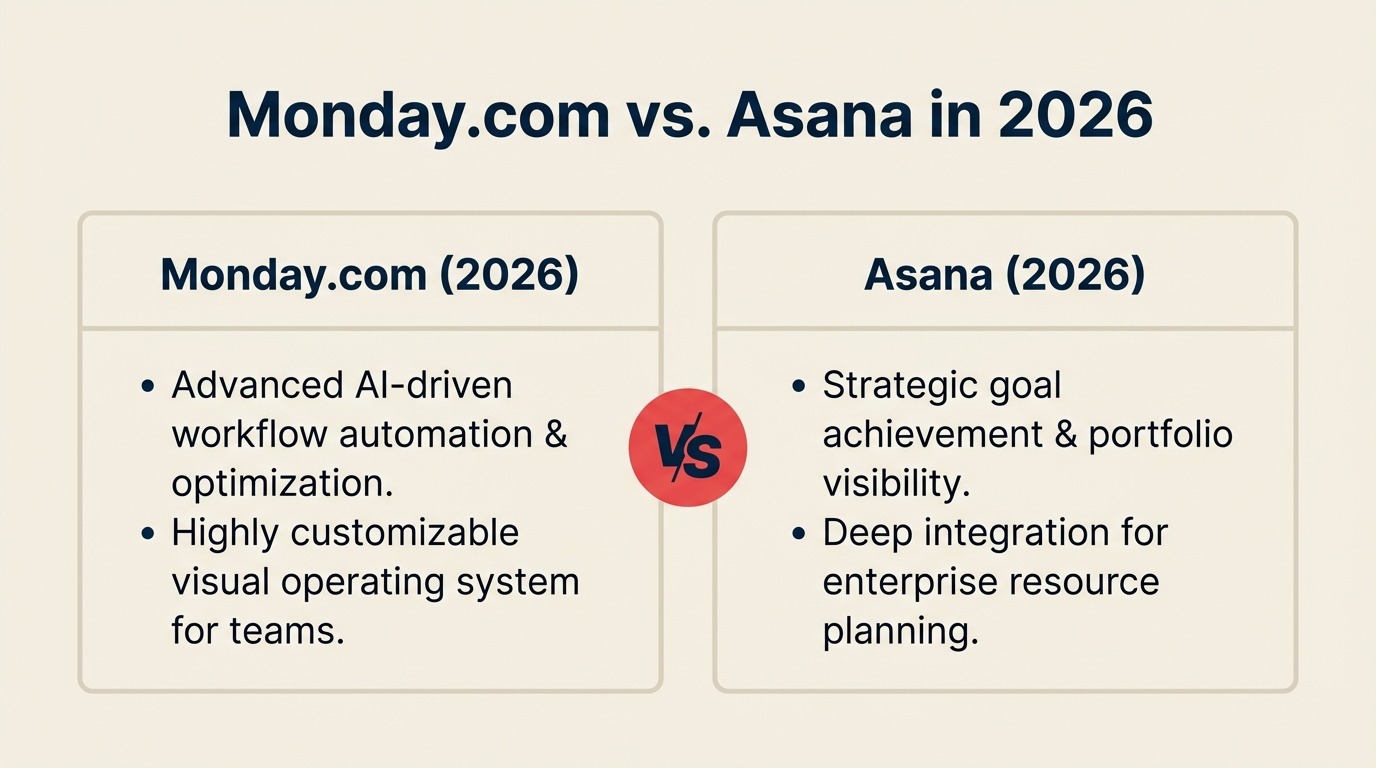

Monday.com vs. Asana in 2026: The AI Architecture Decision That Should Drive Your Platform Choice

Most platform comparisons between Monday.com and Asana focus on the obvious variables: UI preference, integration count, price per seat, template library, reporting flexibility. Those are legitimate factors. But in 2026, there's an architectural difference between the two platforms that deserves more weight than it typically gets in comparison articles, and it has direct implications for operations teams building automated workflows on top of AI.

Monday.com runs its AI features on a credit system. Asana does not. That difference in design philosophy affects how each platform behaves when your team's AI automation usage scales up.

According to Monday.com's own AI report and analysis from Prism News's 2026 feature comparison, Monday.com's AI credits can be exhausted, which pauses AI features until the credit balance resets or additional credits are purchased. Asana, by contrast, offers unlimited AI usage on paid plans. No credit system, no pause risk. For a team that treats AI automation as part of daily operations rather than an occasional add-on, that's not a minor pricing detail. It's a workflow reliability question.

How Each Platform Is Building AI

The two companies are investing heavily in AI, but they've made meaningfully different architectural choices about what AI in work management should actually do.

Monday.com's approach is built around three components. Sidekick functions as a central AI assistant embedded across the workspace. Vibe allows teams to build custom applications without technical expertise. And AI Blocks are pre-built AI task management capabilities that attach directly to workflows and require no code to activate. They cover data categorization, content summarization, and information extraction.

On top of that, Monday.com has introduced what it calls Digital Workers: autonomous agents designed to function like persistent team members. Two current examples are the Project Analyzer, which continuously audits workflow health, and the Monday.com Expert, which helps build and refine reporting structures. These agents operate continuously and learn from interactions over time, a design that suits teams that want AI running in the background without explicit task-by-task triggering.

Asana's approach centers on AI Teammates, agentic workflows powered by what Asana calls its Work Graph, a structural model of how teams, goals, projects, and task dependencies relate to each other. Because Asana's AI draws on that relational context from the start, its agents have more native understanding of how a specific team's work is structured. The trade-off is that building that context requires investment: the Work Graph is meaningful only if your team has been disciplined about how it sets up projects, goals, and dependencies in Asana.

Asana AI Studio, launched in late 2025, adds a low-code environment for building custom AI agents with specific business rules. An operations manager can define an approval workflow with conditional routing without writing code, but the output inherits Asana's structure-first design philosophy.

Critically, as noted in financial analysis of Asana's 2026 direction, every AI action in Asana is logged and auditable. That's a design choice, not just a feature. It means the team can see exactly what the AI did, when, and why, which matters for operations teams with compliance requirements, post-incident reviews, or managers who want to understand AI behavior before trusting it more broadly.
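To make "what the AI did, when, and why" concrete, here is a minimal sketch of what an audit record and a post-incident query could look like. This is not Asana's schema or API: the `AIActionRecord` fields, the agent names, and the sample entries are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AIActionRecord:
    """One logged AI action. Every field name here is hypothetical."""
    timestamp: datetime  # when the action ran
    agent: str           # which AI agent acted
    action: str          # what it did
    target: str          # the task or project it touched
    reason: str          # why: the rule or trigger behind the action


def actions_between(log, start, end):
    """Return records with start <= timestamp < end, oldest first."""
    window = [r for r in log if start <= r.timestamp < end]
    return sorted(window, key=lambda r: r.timestamp)


# Invented sample log for illustration only.
log = [
    AIActionRecord(datetime(2026, 1, 12, 14, 30), "triage-agent", "closed",
                   "TASK-087", "duplicate of TASK-101"),
    AIActionRecord(datetime(2026, 1, 12, 9, 5), "triage-agent", "reassigned",
                   "TASK-101", "owner out of office"),
    AIActionRecord(datetime(2026, 1, 13, 8, 0), "report-agent", "summarized",
                   "Q1 launch", "weekly schedule"),
]

# Reconstruct everything the AI did on the day of a (hypothetical) incident.
incident_day = actions_between(log, datetime(2026, 1, 12), datetime(2026, 1, 13))
for r in incident_day:
    print(f"{r.timestamp:%Y-%m-%d %H:%M} {r.agent} {r.action} {r.target} ({r.reason})")
```

The point of the sketch is the shape of the question, not the tooling: if a platform cannot answer "show me every AI action in this window, with the reason for each," a post-incident review has to rely on inference.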

The Credit Model: A Closer Look

The credit-based model isn't necessarily a dealbreaker. For teams that use AI features only occasionally (drafting a summary here, categorizing a batch of items there), credits are unlikely to be a constraint in practice. Many teams run well within credit limits and never experience a pause.

But consider two scenarios where the credit model creates operational risk:

The first is volume spikes. If your operations team has a quarterly close process, a product launch cycle, or any workflow that generates a burst of AI-automated activity, there's a real chance of exhausting credits during the period when you need AI automation most. Unlike a metered API where you simply pay more, a paused AI feature can break workflows that assume continuous operation.
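A rough way to check for this is to line up estimated monthly usage against the credit allotment and see which months fall short. The sketch below assumes one credit per AI-automated step and a fixed allotment that resets monthly. Both are simplifications, and every number is invented for illustration rather than taken from Monday.com's pricing.

```python
def months_over_allotment(monthly_usage, monthly_credits):
    """Map each month whose usage exceeds the allotment to its shortfall."""
    return {
        month: used - monthly_credits
        for month, used in monthly_usage.items()
        if used > monthly_credits
    }


# Hypothetical estimates of AI-automated steps per month.
# March stands in for a quarterly close or launch cycle.
usage = {"Jan": 3200, "Feb": 3100, "Mar": 6400, "Apr": 3300}

shortfalls = months_over_allotment(usage, monthly_credits=5000)
print(shortfalls)  # {'Mar': 1400}
```

In this made-up case the average month sits comfortably under the allotment, and the only shortfall lands in the busiest month, which is exactly when a pause is most disruptive.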

The second is growth. Teams building on top of AI automation tend to automate more over time, not less. A credit allocation that works comfortably today may not cover usage in 18 months, and the budget conversation to expand credits adds procurement overhead that unlimited-model customers don't face.
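The growth scenario is easy to put numbers on with a compound-growth projection. This is a sketch under stated assumptions: the starting usage, the 3% monthly growth rate, and the 5,000-credit allotment are all hypothetical, and real usage rarely grows this smoothly.

```python
def first_month_over(current_usage, monthly_growth, allotment, horizon=36):
    """Return the first month (1-based) in which projected usage exceeds
    the allotment, or None if it stays within it for the whole horizon."""
    usage = current_usage
    for month in range(1, horizon + 1):
        usage *= 1 + monthly_growth  # compound growth, month over month
        if usage > allotment:
            return month
    return None


# Hypothetical inputs: 3,000 steps today, 3% monthly growth, 5,000 credits.
month = first_month_over(current_usage=3000, monthly_growth=0.03, allotment=5000)
print(month)  # 18
```

With these illustrative inputs, an allotment with 40% headroom today is exhausted in month 18. Swapping in your own growth estimate shows how soon the procurement conversation would need to start.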

Neither scenario is catastrophic. Both are worth modeling before you commit to a platform whose AI architecture you'll be living with for several years.

The Accountability Question

The audit trail differentiator is undervalued in most platform comparisons. Asana's choice to log every AI action is a design bet that operations teams, and the compliance and finance functions they work with, will eventually want to know what AI did on their behalf. It's the same instinct behind AI security and compliance frameworks that mature operations teams are now building into their tool evaluations.

Monday.com's Digital Workers are built on a different premise: that a continuously operating agent creates more value than one you have to inspect at every step. The always-on model is genuinely useful for workflows where you want background monitoring without constant configuration. But it trades off against the ability to reconstruct exactly what happened when something goes wrong.

For COOs whose teams handle sensitive data, operate in regulated industries, or simply have managers who are skeptical of AI systems they can't audit, the Asana model removes a friction point. For teams that want AI to be invisible and persistent, Monday.com's approach fits the use case better.

Neither is objectively correct. They reflect different values about how much visibility teams want into AI behavior, and that's a cultural question as much as a technical one.

A Platform Decision Framework for COOs

Use these questions to make the AI architecture dimension concrete for your specific situation:

1. How high is your expected AI automation volume, and is it consistent or bursty? Estimate how many AI-powered workflow steps your team would run in a heavy month. If your peak months are significantly higher than average, model whether Monday.com's credit system would require purchasing additional credits during those periods, and what that costs.

2. Do you have compliance or audit requirements that touch your workflow management tool? If audit trails are required (for SOC 2, ISO, or internal governance), Asana's logged AI actions remove a documentation burden. If your compliance requirements don't extend into your project management layer, this differentiator matters less.

3. How much operational structure has your team already built in each platform? Asana's Work Graph AI is most powerful when the team has invested in clean project and goal structures. If you're migrating from a looser setup, the AI capability won't perform well initially. Monday.com's AI Blocks work with less structural prerequisite.

4. What does your team's technical capability look like for building custom automation? Both platforms offer no-code automation builders. But Monday.com's AI Blocks are faster to deploy for straightforward operational use cases. Asana AI Studio is more flexible for complex conditional logic but requires more investment to configure well.

5. How important is manager visibility into AI behavior during the adoption phase? For teams in early AI adoption, the ability to explain what AI did, and correct it when it's wrong, builds the trust that sustains adoption. Asana's audit trail supports that. Monday.com's approach may work better for teams with higher existing AI comfort.

6. Where are your current contracts, and what's the cost of switching at renewal? Platform decisions that ignore contract timing create unnecessary switching costs. If you're 18 months into a Monday.com enterprise agreement, the AI architecture question matters less than your renewal window.
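One way to turn the six questions above into something comparable is a simple weighted scorecard. The sketch below is illustrative only: the weights, the 1-to-5 fit scores, and the placeholder platforms `platform_a` and `platform_b` are assumptions to replace with your own judgments, not ratings of Monday.com or Asana.

```python
# Relative importance of each question (sums to 1.0). Illustrative values.
WEIGHTS = {
    "ai_volume": 0.25,
    "compliance": 0.20,
    "existing_structure": 0.15,
    "technical_capability": 0.10,
    "manager_visibility": 0.15,
    "contract_timing": 0.15,
}


def weighted_score(scores):
    """Combine per-question fit scores (1 = poor fit, 5 = strong fit)
    into a single weighted total."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[q] * scores[q] for q in WEIGHTS)


# Hypothetical fit scores for two unnamed candidate platforms.
platform_a = {"ai_volume": 3, "compliance": 2, "existing_structure": 4,
              "technical_capability": 4, "manager_visibility": 3,
              "contract_timing": 5}
platform_b = {"ai_volume": 5, "compliance": 5, "existing_structure": 2,
              "technical_capability": 3, "manager_visibility": 5,
              "contract_timing": 2}

for name, scores in [("platform_a", platform_a), ("platform_b", platform_b)]:
    print(f"{name}: {weighted_score(scores):.2f}")
```

The total matters less than the exercise of setting the weights. A team that puts most of its weight on contract timing will reach a different answer than one that puts it on compliance, which is the point of question 6.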

What to Do This Week

Pull your team's current project workflow data and estimate the number of AI automation steps you'd use in a typical month and in your heaviest month. Map that against Monday.com's credit tiers for your team size. If the math is close to the limit during peak periods, that's a real constraint worth factoring into your platform evaluation. If you're tracking the broader platform consolidation story in parallel, ClickUp's everything-app bet raises similar questions about AI architecture trade-offs and is worth reading alongside this piece.
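The mapping exercise can be sketched as picking the smallest credit tier that covers a given month with some headroom. The tier names and sizes below are hypothetical placeholders, not Monday.com's actual tiers, and the 20% headroom is an arbitrary safety margin.

```python
def smallest_tier_covering(usage, tiers, headroom=0.2):
    """Pick the smallest tier whose credits cover usage plus headroom.
    Returns (name, credits), or None if no tier is large enough."""
    needed = usage * (1 + headroom)
    for name, credits in sorted(tiers.items(), key=lambda t: t[1]):
        if credits >= needed:
            return name, credits
    return None


# Hypothetical tiers and usage estimates (AI steps per month).
tiers = {"small": 2500, "medium": 5000, "large": 10000}
typical, peak = 3200, 6400

choice_typical = smallest_tier_covering(typical, tiers)
choice_peak = smallest_tier_covering(peak, tiers)
print(choice_typical)  # ('medium', 5000)
print(choice_peak)     # ('large', 10000)
```

When the typical month and the heaviest month land in different tiers, as they do with these invented numbers, sizing the plan on the average would leave the peak uncovered.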

If you're in active evaluation between the two platforms, ask each vendor specifically: what happens to automated workflows when AI credits or usage limits are exhausted, and what's the lead time to restore service? The quality of that answer will tell you as much as the official documentation.

Learn More

- ClickUp Wants to Replace Your PM Tool, Your Slack, and Your Zoom — The everything-app bet as a third option in the productivity platform decision

- Monday.com Lost 19% Over AI Agents: SaaS Pricing Signal — What Monday's stock reaction reveals about the business model risk behind AI credits

- AI Powered Workflows for Operations — Building workflows that maintain continuity regardless of vendor credit model

- Measuring AI Adoption ROI — How to evaluate platform decisions based on workflow impact, not feature lists