Fit vs. Intent Scoring: Which Signal Actually Predicts a Won Deal

You have two leads sitting in the queue.

Lead A: VP of Operations at a 200-person software company. Matches your ICP in every dimension: industry, size, tech stack, role. Has been on your email list for 8 months. Never visited pricing. No product pages. Opened two emails last quarter.

Lead B: Marketing Manager at a 40-person services company, smaller than your ideal. Not in your core industry. But they requested a demo yesterday. Visited pricing three times this week. Searched your category on G2 and clicked through to your profile.

Your scoring model says Lead A has 78 points. Lead B has 61 points.

Which one do you call first?

If your instinct says Lead B, because they're in an active buying window right now, you're probably right. And if your scoring model ranks Lead A higher, you have a calibration problem that's silently hurting your pipeline.

This is the fit vs. intent question. And getting it wrong in either direction costs revenue. It's also the central tension in any joint lead scoring conversation: the weights you assign to fit vs. intent signals are where alignment either holds or breaks.

Defining Fit Scoring

Fit score measures whether a lead belongs to the kind of company you sell to and holds the kind of role that buys from you.

What fit captures:

- Company size (employees, revenue)

- Industry or vertical

- Geography (if you serve specific markets)

- Technology stack (tools they use that integrate with or are replaced by your product)

- Job title, function, and seniority

- Stage of business (startup vs. growth vs. enterprise)

What fit does not capture:

- Whether they're actively looking for a solution right now

- Whether they have budget available this quarter

- Whether there's an active project or procurement cycle underway

Fit is largely static. A company's size doesn't change month to month. Its industry doesn't shift. Contacts' titles change slowly, if at all. This stability is both a strength and a limitation.

Strength: Fit scores don't decay quickly. A high-fit lead from six months ago is still a high-fit lead today.

Limitation: Fit doesn't tell you when. A company could be a perfect fit and have zero urgency for the next 18 months.

Where fit data comes from:

- Form fields (self-reported company size, title, industry)

- CRM enrichment tools (Clearbit, ZoomInfo, Apollo)

- Third-party firmographic databases

- LinkedIn data (for title and seniority)

Form data is self-reported and often inaccurate (people lowball company size, inflate titles). Enrichment data is more reliable but has coverage gaps for smaller companies. For SMB targets especially, plan on some percentage of leads with incomplete or inaccurate fit data. The shared ICP framework is where the fit criteria live, and the scoring weights should mirror whatever the two teams agreed on there.
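One way to live with incomplete fit data is to score only the criteria you actually have and report coverage alongside the score. The sketch below is a minimal illustration, not a reference implementation: the ICP values, the three criteria, and the names `Lead` and `fit_score` are all hypothetical placeholders for whatever your shared ICP framework defines.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical ICP criteria; replace with the ones your teams agreed on.
ICP_INDUSTRIES = {"software", "fintech"}
ICP_MIN_EMPLOYEES, ICP_MAX_EMPLOYEES = 100, 1000
ICP_SENIORITIES = {"vp", "director", "c-level"}
TOTAL_CRITERIA = 3


@dataclass
class Lead:
    industry: Optional[str] = None
    employees: Optional[int] = None
    seniority: Optional[str] = None


def fit_score(lead: Lead) -> tuple[float, float]:
    """Return (fit score 0-100, coverage 0-1).

    Missing fields are skipped rather than scored as a mismatch, and
    coverage reports what fraction of the criteria had data at all.
    """
    checks = []
    if lead.industry is not None:
        checks.append(lead.industry.lower() in ICP_INDUSTRIES)
    if lead.employees is not None:
        checks.append(ICP_MIN_EMPLOYEES <= lead.employees <= ICP_MAX_EMPLOYEES)
    if lead.seniority is not None:
        checks.append(lead.seniority.lower() in ICP_SENIORITIES)
    if not checks:
        return 0.0, 0.0
    score = 100.0 * sum(checks) / len(checks)
    return score, len(checks) / TOTAL_CRITERIA
```

A lead with a high score but low coverage is a candidate for an enrichment refresh before routing, rather than a confident high-fit lead.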

Key Facts: Fit and Intent Signal Performance

- Leads with high intent signals convert to opportunities at 3-5x the rate of leads with high fit scores alone, according to Bombora and SiriusDecisions research on intent data adoption.

- 70% of the B2B buying journey happens before a buyer contacts a vendor, meaning intent signals often appear before leads enter your CRM, per Forrester's buying behavior research.

- Over-weighting engagement behavior in lead scoring inflates MQL counts by an average of 40% without improving pipeline quality, per Demand Gen Report data on scoring accuracy.

Defining Intent Scoring

Intent score measures whether a lead is actively researching a solution in your category right now. Gartner's B2B intent data guide defines intent signals as behavioral evidence that a company is actively evaluating software in your category. That's a useful operational definition for deciding what your model should actually measure.

What intent captures:

- Are they in a buying window?

- Are they evaluating options in your category?

- Have they shown commercial interest, not just educational curiosity?

First-party intent signals come from your own properties. These are the strongest intent signals because you directly observed the behavior.

| Signal | Intent strength | Why |
|---|---|---|
| Demo request | Very high | Explicit request to evaluate for purchase |
| Pricing page (3+ visits) | High | Active commercial evaluation |
| ROI calculator used | High | Commercial math in progress |
| Competitor comparison page | High | Vendor evaluation mode |
| Free trial started | High | Product evaluation underway |
| Pricing page (1 visit) | Medium | Research curiosity, not necessarily evaluation |
| Feature page deep dive | Medium | Understanding product, may be evaluating |
| Blog content (educational) | Low | Research but not commercial intent |

Third-party intent signals come from data providers who aggregate research behavior across multiple websites, review platforms, and content networks. Forrester's analysis of B2B self-service buying found that 68% of B2B buyers prefer to research on their own before engaging a vendor. That means third-party intent signals capture active evaluation that never appears in your own analytics.

| Source | What it tracks | Reliability |
|---|---|---|
| Bombora | Topic surges (companies researching specific categories) | High for enterprise, lower for SMB |
| G2 Buyer Intent | Views of competitor profiles and your category on G2 | High; these are active evaluations |
| TechTarget | Downloads and research on TechTarget properties | Category-specific, good for tech buyers |
| LinkedIn Lead Gen | Form completions on LinkedIn | Strong intent for role-targeted campaigns |

Third-party intent data significantly improves scoring precision but requires budget (Bombora starts at $1,500+/month) and MAP integration work. If you don't have the budget for intent data providers yet, you can build proxy signals from first-party data alone.

How Each Predicts Different Outcomes

The Fit/Intent Response Matrix

The Fit/Intent Response Matrix maps leads into four quadrants based on two independent axes: ICP fit (who they are) and buying intent (whether they're actively evaluating now). Each quadrant requires a distinct response playbook. The matrix is the decision tool; the scoring model is just the input that places a lead into the right cell.

| | Low Intent | High Intent |
|---|---|---|
| High Fit | Nurture for timing: structured, patient, wait for intent trigger | Call immediately: best lead, speed is the variable |
| Low Fit | Suppress or deprioritize: low-investment nurture only | Handle carefully: qualify quickly, don't route to senior AEs |

The matrix makes explicit what a single composite score hides: a score of 85 could be high-fit/low-intent (future pipeline) or low-fit/high-intent (fragile opportunity). The right action is completely different.

Figure: The four quadrants of the fit/intent matrix (fit on one axis, intent on the other) each call for a different response.

High fit + high intent: Call immediately. This is your best lead. They match your ICP and they're actively looking. This is who the sales team should be calling within the hour. All other work stops when this quadrant lights up.

The priority is speed. The data consistently shows that high-fit/high-intent leads contacted within the first hour convert at dramatically higher rates than those contacted 24 hours later. The buying window is open now. Your job is to be in it. A five-minute response SLA for inbound demo requests is specifically built for this quadrant.

High fit + low intent: Nurture for timing. This is a valuable lead in a dormant window. They'll eventually need what you sell, but probably not today. Pushing this lead to sales creates wasted calls and erodes trust in the scoring model. Keep this person in structured nurture: quarterly check-in content, relevant industry updates, a case study that matches their situation. When their intent score rises (a pricing page visit, a demo request), that's the trigger to move to sales.

This quadrant is where most of your MQL database lives. Treat it as future pipeline, not current pipeline. The lead lifecycle stages framework shows how these dormant-but-fit contacts should flow through nurture programs before they're ready for the SQL handoff.

Low fit + high intent: Handle carefully. This lead is doing active research but doesn't match your ICP. There are a few scenarios that produce this pattern, and they're handled differently:

Scenario A: They're a faster-growing company than your data shows. Enrichment data can be 6-12 months stale. A company that looked too small six months ago might now be at 80 employees. Run a quick enrichment update before dismissing.

Scenario B: They're genuinely outside your ICP. They need a solution but you're not the right fit for them. Route to a quick qualifier call or an inbound SDR. Don't route to your AE team. These leads occasionally close but at lower rates and often with higher churn. Don't build pipeline on a pattern that creates churn.

Scenario C: The fit data is wrong. Title doesn't reflect actual role (a "Coordinator" who is actually running the budget for a major project). Ask directly in the qualifier to verify before dismissing.

Low fit + low intent: Suppress or deprioritize. Don't pass this to sales. Don't invest in heavy nurture. If this lead is on your list, they might convert eventually, but at a rate so low that the opportunity cost of focusing on them is high. A simple nurture sequence (monthly newsletter) is appropriate. Remove from active scoring.
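The quadrant logic above can be sketched as a small routing function. This is an illustration under stated assumptions: both sub-scores are on a 0-100 scale, and the 60-point cutoffs and action labels are hypothetical and should come from your own calibration.

```python
def quadrant_action(
    fit: float,
    intent: float,
    fit_threshold: float = 60.0,     # hypothetical cutoff for "high fit"
    intent_threshold: float = 60.0,  # hypothetical cutoff for "high intent"
) -> str:
    """Map separate fit and intent scores to one of the four playbooks."""
    high_fit = fit >= fit_threshold
    high_intent = intent >= intent_threshold
    if high_fit and high_intent:
        return "call_immediately"
    if high_fit:
        return "nurture_for_timing"
    if high_intent:
        return "handle_carefully"
    return "suppress_or_deprioritize"
```

Keeping fit and intent as two inputs, instead of summing them first, is the point: a lead scoring 90 on fit and 20 on intent lands in nurture, while a lead scoring 30 on fit and 85 on intent goes to a quick qualifier, even though a composite number might rank them similarly.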

Quotable: High-fit B2B leads that lack intent signals take an average of 6-9 months longer to close than high-fit/high-intent leads, according to CEB (now Gartner) research on B2B buying cycle length. Routing fit-only leads to sales is not just inefficient; it actively distorts pipeline velocity forecasts.

The Scoring Trap: Over-Weighting Behavior

The most common scoring mistake is giving too much weight to behavioral engagement signals that feel like intent but aren't. Forrester's research on intent data mistakes lists conflating engagement with buying intent as one of the top errors teams make, inflating MQL counts without improving pipeline quality.

Email opens. Blog posts read. Webinar registrations. Social clicks. These signals indicate someone is engaged with your content, but engagement isn't intent.

The "engaged but never buys" persona is real in every B2B content library. They consume everything. They open every email. They attend every webinar. They never buy. Often because they're practitioners who use your content for education and idea generation, but they're either not the budget holder, not in an active project, or not in a company that would ever be a customer.

If your model gives 5 points for every email open and 10 points for every blog visit, this persona scores extremely high, potentially higher than a perfect-fit lead who visited pricing once. That's a model malfunction.

How to fix the behavior weight problem:

- Cap the total points any behavior signal category can contribute (e.g., behavior score caps at 30 points total regardless of how many emails they open)

- Apply aggressive decay to engagement signals (14-day half-life on email opens, 30-day on blog visits). The lead scoring model decay article covers the mechanics.

- Require at least one intent signal above a minimum threshold before a lead can reach MQL status, regardless of behavior score

- Validate by checking whether your highest-scoring "behavior only" leads actually convert. Most won't.
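Two of the fixes above, the behavior cap and the intent gate, are easy to express in code. The sketch below reuses the 5-point and 10-point values and the 30-point cap from the text; the MQL threshold of 70, the minimum intent of 20, and the function names are hypothetical.

```python
BEHAVIOR_POINTS = {"email_open": 5, "blog_visit": 10}  # values from the example above
BEHAVIOR_CAP = 30    # cap on the whole behavior category, as suggested in the text
MQL_THRESHOLD = 70   # hypothetical composite threshold
MIN_INTENT = 20      # hypothetical minimum intent score required for MQL


def behavior_score(events: dict[str, int]) -> int:
    """Sum behavior points per event type, then cap the category total."""
    raw = sum(BEHAVIOR_POINTS.get(name, 0) * count for name, count in events.items())
    return min(raw, BEHAVIOR_CAP)


def is_mql(fit: int, intent: int, events: dict[str, int]) -> bool:
    """A lead cannot reach MQL on fit and behavior alone."""
    if intent < MIN_INTENT:
        return False
    return fit + intent + behavior_score(events) >= MQL_THRESHOLD
```

With these rules, a contact with 40 email opens and 12 blog visits contributes 30 behavior points instead of 320, and stays below MQL until a genuine intent signal appears.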

Setting Relative Weights by Sales Motion

The right balance between fit and intent depends on how you acquire customers.

Inbound-led motion: When most of your leads come from content and inbound search, you're already pre-filtering for intent: someone who sought you out. Fit becomes the primary differentiator because you want to route only ICP-fit inbound leads to sales. Weight: Fit 50%, Intent 35%, Behavior 15%. The MQL-to-SQL score thresholds for this motion will reflect that fit-heavy weighting.

ABM motion: When you're proactively targeting specific accounts, fit is already fixed (you chose the accounts). Intent becomes the trigger signal: when is a target account in an active buying window? Weight: Fit 25% (already screened), Intent 60%, Behavior 15%.

PLG (product-led growth) motion: Product behavior is your strongest signal: trial usage, feature adoption, usage volume. Fit matters but product engagement often predicts conversion better than either traditional intent or fit. Weight: Fit 25%, Intent 25%, Product behavior 50%.

Outbound-assisted motion: Sales is driving outreach to accounts regardless of inbound signals. Fit determines targeting. Intent signals tell you which accounts to prioritize in the sequence. Weight: Fit 60%, Intent 40%, Behavior 0% (behavior is minimal because you're reaching out cold).
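As a rough illustration, the four weightings above can be written as a lookup table applied to sub-scores. The sketch assumes each sub-score is already normalized to 0-100; the motion keys and the function name are hypothetical, and for PLG the "behavior" slot stands for product behavior.

```python
# Weights taken from the four motions described above.
MOTION_WEIGHTS = {
    "inbound": {"fit": 0.50, "intent": 0.35, "behavior": 0.15},
    "abm": {"fit": 0.25, "intent": 0.60, "behavior": 0.15},
    "plg": {"fit": 0.25, "intent": 0.25, "behavior": 0.50},  # product behavior
    "outbound": {"fit": 0.60, "intent": 0.40, "behavior": 0.00},
}


def composite_score(motion: str, fit: float, intent: float, behavior: float) -> float:
    """Weighted composite of 0-100 sub-scores for the given sales motion."""
    weights = MOTION_WEIGHTS[motion]
    return (
        weights["fit"] * fit
        + weights["intent"] * intent
        + weights["behavior"] * behavior
    )
```

The same lead (fit 90, intent 20, behavior 40) comes out at about 58 under the inbound weights and about 40.5 under the ABM weights, which is the intended effect: ABM discounts fit because the account was already screened.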

Practical Implementation Without Expensive Tools

Third-party intent data providers improve scoring accuracy significantly. But you don't need them to build a working fit/intent model.

Proxy signals for intent when you don't have intent data vendors:

| Real intent signal | Proxy you can build |
|---|---|
| Bombora topic surge | Content engagement spike: 4+ high-value page views in 30 days |
| G2 category research | Direct traffic to your comparison or alternatives pages |
| Competitor research | Visits to your "[competitor] vs. [you]" content |
| Active procurement cycle | Form field: "How soon are you looking to implement?" |
| Budget available | Form field: "What's your approximate budget range?" |

The weakest proxies are form fields, because people lie, skip, or give aspirational answers. The strongest proxies are actual behavioral signals from your own properties: pricing page visits, comparison page visits, ROI calculator completions.

Even without third-party data, a model that separates fit from intent and requires both before reaching MQL status outperforms a single-dimension model. Once you have both signals, the real question is how much weight to give each one.
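The first proxy in the table, a content engagement spike of 4+ high-value page views in 30 days, can be checked directly against your own page-view log. A minimal sketch, assuming page views are available as (timestamp, path) pairs; the URL prefixes and function name are hypothetical.

```python
from datetime import datetime, timedelta

# Hypothetical URL prefixes that count as "high-value" pages.
HIGH_VALUE_PATHS = ("/pricing", "/compare", "/roi-calculator")


def has_engagement_spike(
    page_views: list[tuple[datetime, str]],
    now: datetime,
    min_views: int = 4,
    window_days: int = 30,
) -> bool:
    """True if the lead hit high-value pages at least min_views times in the window."""
    cutoff = now - timedelta(days=window_days)
    recent = [
        path
        for timestamp, path in page_views
        if cutoff <= timestamp <= now and path.startswith(HIGH_VALUE_PATHS)
    ]
    return len(recent) >= min_views
```

Blog and other educational paths are deliberately excluded, so heavy content consumption alone does not trip the proxy.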

Quotable: Over-weighting engagement behavior in lead scoring (email opens, page views, newsletter clicks) inflates MQL counts by an average of 40% without improving pipeline quality, according to Demand Gen Report data on scoring accuracy across B2B marketing teams.

Rework Analysis: The Fit/Intent Response Matrix clarifies the most expensive scoring mistake teams make: routing high-intent/low-fit leads to senior AEs. These leads convert occasionally, but they close slower, churn faster, and consume disproportionate sales time. The matrix forces an explicit routing decision by quadrant, not just a score threshold. Build the quadrant logic into your CRM view so reps see which cell a lead falls into, not just a composite number.

Calibrating Weights Against Closed-Won Data

The best way to set weights is to look backward at what actually predicted a won deal. The pipeline vs. forecast data shows you which lead characteristics consistently produced deals that closed vs. leads that stalled.

The analysis:

- Pull your last 90-180 days of closed-won opportunities

- For each, check their lead record: What was their fit score at the time of SQL? What was their intent score?

- Build the same dataset for closed-lost

- Compare the distribution of fit and intent scores between won and lost

If closed-won deals cluster at high fit AND high intent, your model is roughly right. If closed-won deals cluster at high fit but medium intent, you're over-weighting intent. If you see a cluster of low-fit/high-intent leads in your closed-won set, you may have a broader ICP than your model assumes.

This analysis doesn't require a data scientist. A spreadsheet with two columns (fit score and intent score at time of SQL) for your last 50 won and 50 lost deals will show you the pattern.
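If you prefer a script to a spreadsheet, the same comparison takes a few lines. This is a sketch, assuming each deal is exported as a (fit score, intent score) pair captured at time of SQL; the function names are hypothetical.

```python
from statistics import mean


def summarize(deals: list[tuple[float, float]]) -> dict[str, float]:
    """deals: (fit score, intent score) at time of SQL for each opportunity."""
    fits = [fit for fit, _ in deals]
    intents = [intent for _, intent in deals]
    return {"n": len(deals), "mean_fit": mean(fits), "mean_intent": mean(intents)}


def compare_won_lost(
    won: list[tuple[float, float]],
    lost: list[tuple[float, float]],
) -> dict[str, float]:
    """Gap in average fit and intent between closed-won and closed-lost."""
    won_summary, lost_summary = summarize(won), summarize(lost)
    return {
        "fit_gap": won_summary["mean_fit"] - lost_summary["mean_fit"],
        "intent_gap": won_summary["mean_intent"] - lost_summary["mean_intent"],
    }
```

A dimension with a large positive gap is separating wins from losses; a dimension with a gap near zero is contributing little and is a candidate for a lower weight. Averages hide clusters, so it is still worth plotting or eyeballing the raw pairs.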

Run this calibration every six months. Markets shift. Buyer behavior changes. A model that was accurate 18 months ago may be off by now without anyone noticing.

When Intent Signals Decay

Intent is time-sensitive in a way that fit isn't. A company that was researching your category three months ago may have bought a competitor, paused the project, or deprioritized it entirely. A high intent score from last quarter is not the same as a high intent score today.

Signal half-life estimates:

| Signal | Half-life | Logic |
|---|---|---|
| Demo request | 48-72 hours | Active evaluation window closes fast |
| Pricing page (3+) | 7-10 days | Commercial evaluation is urgent |
| G2 profile view | 2-3 weeks | Category research window |
| Competitor comparison | 3-4 weeks | Evaluation process in flight |
| Topic surge (Bombora) | 3-4 weeks | Company research cycle |
| ROI calculator | 7-10 days | Commercial math in active project |
| Webinar attendance | 30-60 days | Interest signal, not urgency signal |
| Blog content | 60-90 days | Educational, non-commercial |

High-urgency intent signals like demo requests should trigger immediate routing to sales, not batch processing. The intent score will be meaningless if you're reviewing it in a weekly batch when the signal fired on Tuesday. The lead routing rules need to account for this: hot intent signals should have a dedicated fast-path, separate from the normal weekly MQL batch.

For signals with 2-4 week half-lives, build decay into your MAP automation so scores automatically recalculate. A lead who visited pricing three times two months ago and hasn't been back is not currently in a buying window.
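Half-life decay is a one-line formula: the points a signal contributes halve every half-life. The sketch below is a generic exponential decay, not any particular MAP's implementation; the function name and the 40-point starting value in the example are hypothetical.

```python
def decayed_points(points: float, age_days: float, half_life_days: float) -> float:
    """Points remaining after age_days, halving once per half_life_days."""
    return points * 0.5 ** (age_days / half_life_days)
```

Take the pricing-page example: if three pricing visits were worth 40 points and you use a 10-day half-life (the upper end of the range in the table), then after 60 days six half-lives have passed and `decayed_points(40, 60, 10)` leaves 0.625 points. The signal has effectively disappeared from the score, which matches the claim that this lead is no longer in a buying window.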

The lead scoring model decay article covers the technical implementation of decay rules in detail.

The Bottom Line

Fit without intent is patience. You have the right kind of company, but they're not ready to buy. Keep them in nurture, let the model trigger when intent rises.

Intent without fit is noise. Someone is actively researching your category, but they're not a company you can actually win. Handle carefully: qualify quickly, don't route to senior AEs, stay honest about conversion probability.

Both together is pipeline. High-fit + high-intent is your best lead, and your response speed to those leads matters more than almost any other operational variable.

The model that gets this balance right, and stays calibrated against real closed-won data, is what separates revenue teams that trust their lead score from teams where sales ignores the number and works their own list.

Build for accuracy first. Joint ownership second. And validate it against what actually closed.

Frequently Asked Questions

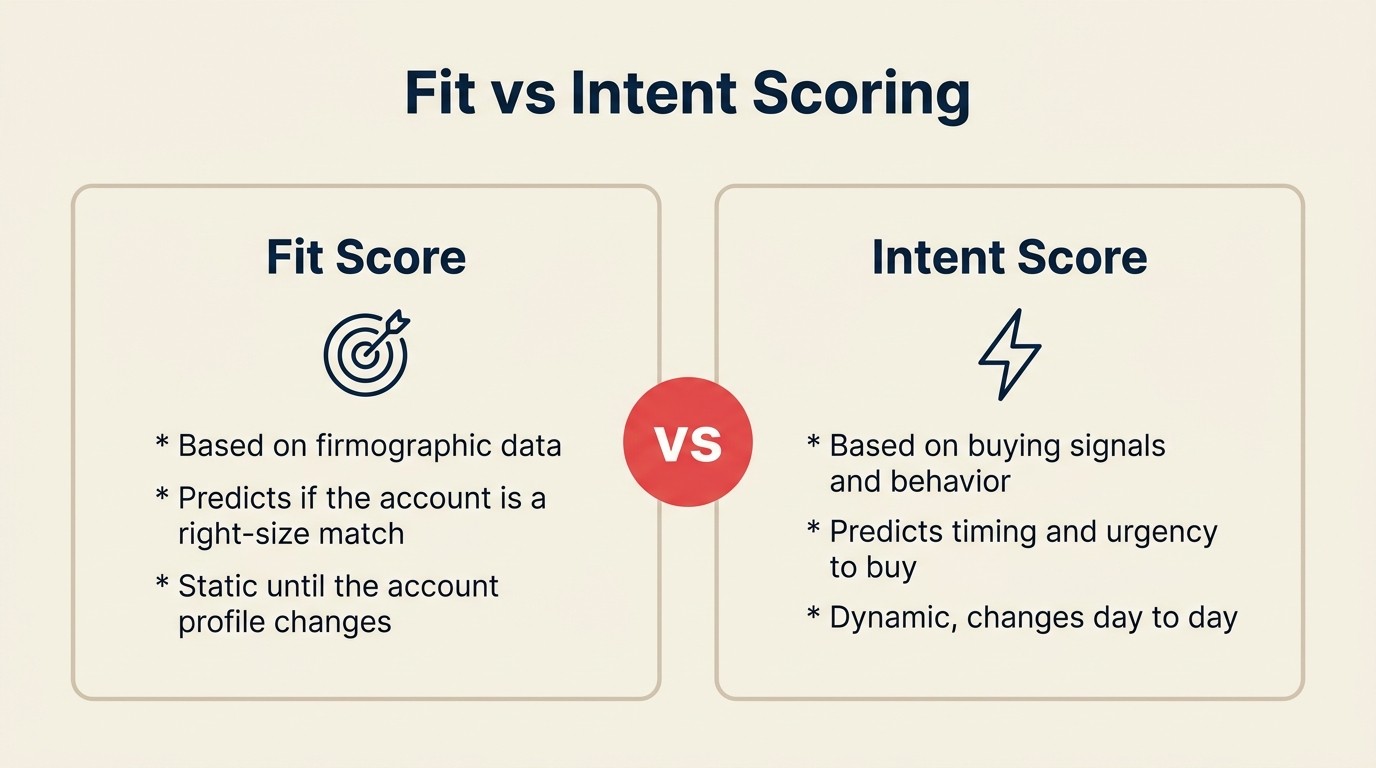

What is the difference between fit scoring and intent scoring?

Fit scoring measures whether a lead belongs to the kind of company you sell to and holds the kind of role that buys from you. It's based on firmographic and role signals that are largely static. Intent scoring measures whether a lead is actively researching a solution in your category right now. It's based on behavioral signals (pricing visits, demo requests, G2 category research) that are time-sensitive and decay within days to weeks.

Which matters more: fit or intent?

It depends on your sales motion, but when both are present, a high-intent/high-fit lead is almost always your best opportunity. If forced to prioritize one: for inbound-led motions, fit differentiates because inbound already pre-filters for some intent; for ABM motions, intent triggers because you've pre-screened on fit. Neither signal is reliable alone. Intent without fit is noise, and fit without intent is patience.

When does fit matter most?

Fit matters most as a gate. In ABM and outbound-assisted motions you target accounts proactively, so fit is screened up front (you chose the accounts) and intent becomes the trigger. Fit also matters at scale: when you have high inbound volume, fit is the fastest filter to separate ICP leads from non-ICP leads before investing in intent analysis.

What happens with intent signals but no fit match?

Low-fit/high-intent leads should be handled carefully, not routed to senior AEs. These leads are doing active research but don't match your ICP: they might be the wrong size, wrong industry, or wrong role. Route to a quick qualifier call or an inbound SDR. Don't invest heavy sales resources. These leads occasionally close but typically at lower rates and higher churn.

How long does an intent signal remain valid?

Intent signals are time-sensitive and decay fast. Demo requests: 48-72 hours. Pricing page visits (3+): 7-10 days. G2 profile views: 2-3 weeks. Competitor comparison visits: 3-4 weeks. Bombora topic surges: 3-4 weeks. A high intent score from last month is not the same as a high intent score today. Build MAP automation to recalculate scores with decay applied, not just at batch-processing intervals.

What is the most common lead scoring mistake?

Over-weighting behavioral engagement signals (email opens, blog visits, webinar registrations) as if they indicate buying intent. They don't. They indicate content interest. The "engaged but never buys" persona scores extremely high on behavior and rarely converts. Fix: cap the total behavioral contribution to a lead's composite score, apply aggressive decay to engagement signals, and require at least one genuine intent signal before a lead can reach MQL status regardless of behavior score.
