Marketing-Sales Alignment Maturity Diagnostic: Score Your Team Across 5 Levels

Most alignment initiatives fail for the same reason: teams apply solutions that belong at Tier 4 to problems that actually exist at Tier 1. They buy revenue intelligence software when they haven't yet agreed on what an MQL is. They run joint planning sessions when the CRM is still a fiction. They hire a RevOps lead before establishing a single shared dashboard.

The five-tier structure here draws on the logic of maturity models, a well-established framework used across engineering and operations to describe how reliably an organization's processes produce intended outcomes.

Diagnosis before prescription. That's the premise of this article.

The diagnostic below takes about 10 minutes. It's designed to be completed independently by the marketing lead and the sales lead, then compared. If you score the same question differently, that gap (not the score itself) is the most important thing in the room.

Research from SiriusDecisions and HubSpot consistently shows strong alignment correlates with significantly faster revenue growth, yet only a small fraction of companies describe their teams as truly aligned. That gap between aspiration and reality is the problem this diagnostic is designed to measure.

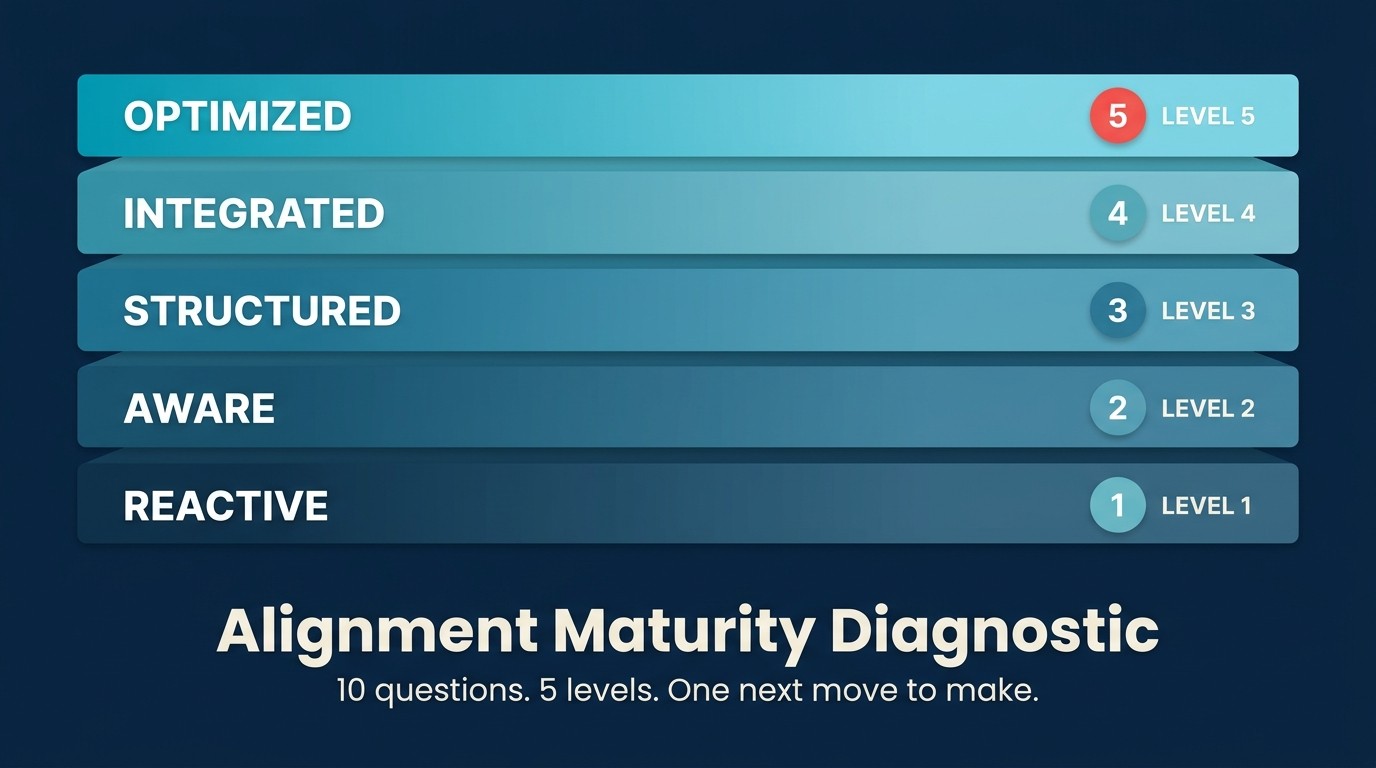

The Marketing-Sales Alignment Maturity Diagnostic is a 10-question, 20-point self-scoring instrument that places a revenue team at one of five maturity tiers (Siloed, Reactive, Functional, Integrated, or Predictive) and identifies the single highest-leverage next move. Unlike generic alignment surveys, this diagnostic is designed to be completed independently by marketing and sales leads simultaneously; divergent scores on the same question reveal structural misalignment more reliably than any aggregate score.

The Named Framework: The Marketing-Sales Alignment Maturity Diagnostic

This article formalizes the Marketing-Sales Alignment Maturity Diagnostic: a five-tier scoring model across 10 structural process areas that determine whether a revenue team's alignment is durable or personality-dependent. The five tiers (Siloed, Reactive, Functional, Integrated, Predictive) map to measurable revenue outcomes, not cultural impressions. The diagnostic is designed to surface the gap between perceived alignment and operational alignment, which Forrester has identified as the primary reason most alignment initiatives fail to last beyond one quarter.

The 10 questions map to three root-cause categories: shared definitions (Q1, Q5), process infrastructure (Q2, Q3, Q6), and feedback loops (Q4, Q7, Q8, Q9, Q10). A pattern of zeros in any single category reveals the structural layer that needs work first.
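If you collect scores electronically, the category breakdown is easy to automate. The Python sketch below is a hypothetical helper, not part of the diagnostic itself. It assumes each respondent's answers are stored as a dict keyed "Q1" to "Q10", totals each root-cause category, and lists the categories that contain zeros, most zeros first. The tie-breaking order is our assumption.

```python
# Hypothetical helper: the category mapping comes from the diagnostic;
# the function names and data shape (dict keyed "Q1".."Q10") are assumptions.
CATEGORIES = {
    "shared definitions": ["Q1", "Q5"],
    "process infrastructure": ["Q2", "Q3", "Q6"],
    "feedback loops": ["Q4", "Q7", "Q8", "Q9", "Q10"],
}


def category_report(scores: dict[str, int]) -> dict[str, dict]:
    """Points earned, points possible, and zero-scored questions per category."""
    report = {}
    for category, questions in CATEGORIES.items():
        report[category] = {
            "earned": sum(scores[q] for q in questions),
            "possible": 2 * len(questions),
            "zeros": [q for q in questions if scores[q] == 0],
        }
    return report


def layers_with_zeros(scores: dict[str, int]) -> list[str]:
    """Categories containing at least one zero, most zeros first.

    sorted() is stable (also with reverse=True), so ties keep the order
    definitions -> infrastructure -> feedback loops (an assumed tie-break).
    """
    report = category_report(scores)
    flagged = [c for c in report if report[c]["zeros"]]
    return sorted(flagged, key=lambda c: len(report[c]["zeros"]), reverse=True)


# Example: one respondent's answers.
answers = {"Q1": 0, "Q2": 1, "Q3": 2, "Q4": 1, "Q5": 0,
           "Q6": 1, "Q7": 0, "Q8": 1, "Q9": 2, "Q10": 1}
print(layers_with_zeros(answers))  # ['shared definitions', 'feedback loops']
```

Here the two zeros in shared definitions point to that layer as the first fix, even though feedback loops also contains a zero.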

Key Facts: The Cost of Misalignment

- B2B companies with strong marketing-sales alignment achieve 24% faster three-year revenue growth and 27% faster three-year profit growth, according to SiriusDecisions research.

- Only 8% of companies describe their marketing and sales teams as tightly aligned, per HubSpot's State of Marketing report.

- When both marketing and sales agree on the ICP, conversion rates from MQL to closed-won improve by up to 45%, according to Forrester.

How to Use This Diagnostic

Who should complete it: The head of marketing and the head of sales, independently and simultaneously. If you have a RevOps lead, they complete a third copy as an observer. Do not share scores before each party has finished.

Scoring scale: Each question is scored 0, 1, or 2.

- 0 = not in place; the practice is absent or exists only informally

- 1 = partial or inconsistent; it exists on paper but execution is unreliable

- 2 = fully in place and working; both teams would agree this is true

Total: 10 questions × 2 points = 20 points maximum.
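If the two leads submit answers through a form or spreadsheet, a quick validation step catches blank or out-of-range answers before anyone compares totals. A minimal Python sketch, assuming answers are stored as a dict keyed "Q1" through "Q10" (a hypothetical format, not something the diagnostic prescribes):

```python
QUESTIONS = [f"Q{i}" for i in range(1, 11)]  # Q1..Q10
ALLOWED_SCORES = {0, 1, 2}                   # the 0/1/2 scale above


def total_score(scores: dict[str, int]) -> int:
    """Check one respondent's answers and return their total (0-20)."""
    missing = [q for q in QUESTIONS if q not in scores]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    unknown = sorted(set(scores) - set(QUESTIONS))
    if unknown:
        raise ValueError(f"unknown questions: {unknown}")
    for q in QUESTIONS:
        if scores[q] not in ALLOWED_SCORES:
            raise ValueError(f"{q} must be scored 0, 1, or 2, got {scores[q]!r}")
    return sum(scores[q] for q in QUESTIONS)


print(total_score({q: 2 for q in QUESTIONS}))  # 20, the maximum
```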

The comparison step matters. Once both parties finish, reveal scores question by question. Any question where marketing and sales differ by 2 points is a structural gap dressed up as a perception problem. Those are the questions to discuss first, not the ones where you agree you're weak.
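The question-by-question reveal is simple enough to script if scores are collected electronically. This Python sketch uses hypothetical names and the same assumed dict format keyed "Q1" to "Q10". It lists each question side by side and pulls out the full 2-point disagreements (one lead scored 0, the other 2) to discuss first.

```python
QUESTIONS = [f"Q{i}" for i in range(1, 11)]


def compare(marketing: dict[str, int], sales: dict[str, int]) -> list[tuple[str, int, int, int]]:
    """One row per question: (question, marketing score, sales score, absolute gap)."""
    return [(q, marketing[q], sales[q], abs(marketing[q] - sales[q])) for q in QUESTIONS]


def structural_gaps(marketing: dict[str, int], sales: dict[str, int]) -> list[str]:
    """Questions where the two leads differ by the full 2 points: discuss these first."""
    return [q for q, _, _, gap in compare(marketing, sales) if gap == 2]


base = {q: 1 for q in QUESTIONS}
marketing = {**base, "Q8": 2}          # marketing says structured feedback exists
sales = {**base, "Q8": 0, "Q3": 2}     # sales says it never lands; Q3 differs by only 1

for q, m, s, gap in compare(marketing, sales):
    print(f"{q:>3}  marketing={m}  sales={s}  gap={gap}")
print(structural_gaps(marketing, sales))  # ['Q8']
```

A 1-point gap, like Q3 here, is worth a conversation, but only the 0-versus-2 split on Q8 is flagged as a structural gap.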

Cadence: Run this quarterly. Progress across tiers tends to be slower than teams expect: moving from Tier 2 to Tier 3 typically takes 60-90 days of consistent execution, not two all-hands sessions. A Forrester study on B2B alignment found that most leaders believe they're aligned while their teams are not. That gap between perception and reality is exactly what this exercise surfaces.

The 10 Diagnostic Questions

Score each question 0, 1, or 2 using the scale above.

Q1. Do marketing and sales share a written, agreed definition of MQL and SQL, reviewed in the last 6 months?

Score 2 if: A document exists, both teams signed off, and it was reviewed (and updated if needed) within the past 6 months. Score 1 if: A definition exists but hasn't been revisited in over 6 months, or one team has a definition the other team doesn't fully endorse. Score 0 if: No written definition exists, or each team operates from a different informal understanding.

See: joint MQL definition process and SQL acceptance criteria.

Q2. Is there a documented handoff SLA (for example, a 5-minute response window for inbound MQLs) that both teams signed off on?

Score 2 if: The SLA is written, both teams agreed to it, and response time is tracked in a shared dashboard. Score 1 if: An SLA exists verbally or in one team's process doc but isn't monitored or enforced. Score 0 if: No SLA exists; response time depends on whoever checks the queue first.

See: five-minute response SLA design.

Q3. Is your CRM the single source of truth for pipeline data, rather than spreadsheets or siloed dashboards?

Score 2 if: All pipeline reporting comes from the CRM; neither team maintains a shadow spreadsheet that contradicts it. Score 1 if: CRM is the primary source but some team members run their own numbers that don't always match. Score 0 if: Marketing and sales pull pipeline data from different sources and regularly arrive at different numbers.

See: CRM as single source of truth.

Q4. Do marketing and sales meet weekly (or at least bi-weekly) specifically to review lead quality and pipeline, not just report status?

Score 2 if: A recurring meeting exists with a structured agenda covering MQL quality, conversion rates, and pipeline health, and it is rarely cancelled. Score 1 if: A meeting exists but is often skipped, replaced by status reports, or dominated by reporting rather than decision-making. Score 0 if: No regular joint meeting covers this topic.

See: joint pipeline review cadence and weekly lead-quality call.

Q5. Do you have a shared ICP definition that sales reps can articulate without looking it up?

Score 2 if: A written ICP exists with firmographic, behavioural, and situational criteria. If you asked three AEs to describe your ideal customer, their answers would substantially overlap. Score 1 if: An ICP document exists but AEs aren't using it to qualify; marketing targets one segment while sales gravitates toward another. Score 0 if: No written ICP exists, or the ICP lives only in the VP of Sales's head.

See: shared ICP framework.

Q6. Is there a documented lead-rejection and recycling workflow that both teams follow consistently?

Score 2 if: When a sales rep rejects an MQL, a defined process kicks in: rejection reason codes in the CRM, a recycling path back to marketing, and a feedback loop that marketing uses to adjust scoring. Score 1 if: A process exists on paper but rejection reason codes are rarely filled in, or rejected leads disappear rather than getting recycled. Score 0 if: No formal process; rejected leads just sit in the CRM with no follow-up.

See: lead rejection and recycling workflows and MQL-rejection feedback loop.

Q7. Can you report on marketing-sourced vs. marketing-influenced pipeline in a way both teams agree is accurate?

Score 2 if: Both numbers exist in the CRM, both teams agree on the definitions used to calculate them, and neither team disputes the methodology during pipeline reviews. Score 1 if: One or both numbers exist, but there's ongoing disagreement about how they're calculated or what they include. Score 0 if: Attribution is disputed; marketing and sales regularly present conflicting pipeline contribution numbers to leadership.

See: attribution models both teams trust.

Q8. Does marketing receive structured feedback from sales (win/loss themes, objection patterns, or competitive intel) at least monthly?

Score 2 if: A formal feedback mechanism exists (a monthly call, a shared doc, a CRM field) and marketing actively uses it to update messaging and content. Score 1 if: Feedback happens ad hoc or depends on individual relationships between specific people on each team. Score 0 if: Marketing learns what sales thinks only when something goes wrong; no structured feedback channel.

See: win/loss feedback to marketing.

Q9. Is there a joint content process where sales nominates topics and marketing produces assets, reviewed for field usage?

Score 2 if: Marketing has a documented process for collecting topic requests from sales, and there's a mechanism to track whether produced assets are actually used in deals. Score 1 if: Marketing occasionally asks sales what they need, but the process is informal and there's no way to know if content gets used. Score 0 if: Marketing creates content based on its own judgment; sales creates its own materials because marketing output doesn't match field needs.

See: sales enablement content vs field needs.

Q10. Does leadership review a shared revenue dashboard, not separate marketing and sales scorecards, at least monthly?

Score 2 if: There is one revenue dashboard that both the CMO and CRO (or their equivalents) review together; it covers marketing pipeline contribution, conversion rates, and sales performance in a single view. Score 1 if: Leadership sees combined data occasionally, but the standard reporting cadence uses separate scorecards for each team. Score 0 if: Marketing and sales each present their own scorecards to leadership; no shared view exists.

See: 8 shared dashboards revenue teams.

Scoring Rubric

| Total Score | Maturity Tier |
|---|---|
| 0-6 | Tier 1: Siloed |
| 7-10 | Tier 2: Reactive |
| 11-14 | Tier 3: Functional |
| 15-17 | Tier 4: Integrated |
| 18-20 | Tier 5: Predictive |
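The rubric translates directly into a lookup. In this minimal Python sketch, the score bands come from the table above; the function name and error handling are our own assumptions.

```python
# Upper bound of each tier's score band, from the rubric table.
TIER_BANDS = [
    (6, "Tier 1: Siloed"),
    (10, "Tier 2: Reactive"),
    (14, "Tier 3: Functional"),
    (17, "Tier 4: Integrated"),
    (20, "Tier 5: Predictive"),
]


def tier_for(total: int) -> str:
    """Map a 0-20 total to its maturity tier."""
    if not isinstance(total, int) or not 0 <= total <= 20:
        raise ValueError(f"total must be an integer from 0 to 20, got {total!r}")
    for upper, tier in TIER_BANDS:
        if total <= upper:
            return tier
    raise AssertionError("unreachable: the bands cover 0-20")


# Look up each lead's total separately; do not average the two.
print(tier_for(12))  # Tier 3: Functional (e.g. marketing's total)
print(tier_for(8))   # Tier 2: Reactive (e.g. sales' total)
```

Running the lookup on each lead's total, rather than on an average, keeps a Tier 3 marketing view and a Tier 2 sales view visible as two separate results. That disagreement is what the comparison step is meant to surface.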

The 5 Maturity Tiers Defined

For a detailed description of each tier (how it looks, how it feels to work in it, and what the typical revenue impact is), see the Alignment Maturity Model. This section gives you enough to act; that article gives you the full picture.

Tier 1: Siloed (Score 0-6)

Marketing and sales operate as separate businesses that happen to share a company. There are no shared definitions, no recurring joint cadences, and no agreed attribution model. When pipeline misses happen, the default response is to blame the other team. MQL volume is the primary marketing metric; quota attainment is the only sales metric; neither connects to the other.

The characteristic sound of a Tier 1 org: "those leads are garbage" from sales and "they never follow up" from marketing, both repeated every quarter.

Tier 2: Reactive (Score 7-10)

Some shared processes exist, but they're held together by individual relationships. The CMO and CRO get along well, so alignment is fine. Until one of them leaves. MQL and SQL definitions were written at some point but haven't been revisited. Joint meetings happen sporadically, usually after a bad month. Attribution is fought over but never formally resolved.

Tier 2 teams often feel more aligned than they are because the interpersonal chemistry masks the structural gaps.

Tier 3: Functional (Score 11-14)

Documented processes exist across most of the 10 diagnostic areas. Meetings run consistently. MQL definitions are agreed and in writing. But execution is uneven: some weeks the handoff SLA is respected, some weeks it isn't. Attribution is agreed in principle but disputed in practice. CRM data quality is "good enough" rather than trustworthy.

Tier 3 is where most mid-market revenue teams sit. The processes are there. The discipline to maintain them isn't fully baked yet.

Tier 4: Integrated (Score 15-17)

CRM is genuinely the source of truth. Attribution is agreed and neither team disputes the numbers during pipeline reviews. The handoff SLA is tracked and visible to both teams. Win/loss feedback flows from sales to marketing monthly, and marketing uses it. Joint pipeline review is the rhythm of the revenue org, not a one-off event.

Gaps at Tier 4 are known. Leadership can name them, and they're being actively addressed rather than avoided.

Tier 5: Predictive (Score 18-20)

Alignment is structural, not personality-dependent. Revenue intelligence tools feed conversation data into marketing planning. Intent signals inform both outbound sequences and campaign targeting. Marketing and sales forecast together, not in separate slides. The feedback loop between field activity and content production closes in days, not quarters.

Very few mid-market companies are at Tier 5. The ones that are typically have a strong RevOps function and leadership that treats alignment as an operating system, not a cultural value. Gartner predicts that 75% of the highest-growth companies will deploy a RevOps model, the structural backbone that makes Tier 5 achievable at scale.

Your Score: What to Do Next

Tier 1-2: Start with definitions, not tools

Don't buy software. Don't run a two-day offsite. Start with three things: (1) a joint session to write your MQL and SQL definitions, (2) a shared ICP document, and (3) one recurring weekly meeting. These three moves will do more than any tool purchase.

Your highest-leverage questions from the diagnostic: Q1 (MQL/SQL), Q5 (ICP), Q4 (joint meeting).

See: MQL Definition Framework and Shared ICP Framework.

Tier 2-3: Formalise the handoff and close the loop

You have definitions. Now make the handoff reliable. Build a 5-minute SLA for inbound MQLs. Add rejection reason codes to the CRM. Build a closed-loop report both teams can see. These mechanics turn a personality-dependent process into a structural one.

Your highest-leverage questions: Q2 (SLA), Q6 (rejection workflow), Q3 (CRM as source of truth).

See: Closed-Loop Reporting Explained.

Tier 3-4: Fix attribution and start a win/loss program

Your processes run. But data trust is still the gap. This is where joint attribution work pays off. Agree on sourced vs. influenced definitions. Build the shared Monday dashboard. Start a structured win/loss program so marketing hears from the field consistently. This tier is also where conversion rate analysis starts producing reliable data for both teams.

Your highest-leverage questions: Q7 (attribution), Q8 (structured feedback), Q10 (shared dashboard).

Tier 4-5: Layer in revenue intelligence

At Tier 4, the processes are solid. The next step is to make data flow faster. Revenue intelligence platforms give marketing access to deal conversations. Intent data allows both teams to identify in-market accounts before they raise their hand. Joint forecasting sessions replace separate planning cycles.

Your highest-leverage questions: Q7, Q9, Q10. Focus on the quality of those processes, not just whether they exist.

Questions That Reveal the Biggest Gaps

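Not all questions are equal. Three of the ten (Q1, Q3, and Q5) are structural prerequisites: if they score 0, other improvements won't hold. A fourth pattern, involving Q7 and Q10, exposes a data-trust problem that a working dashboard can hide.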

If Q3 (CRM as source of truth) scores 0: Stop and fix this before anything else. Every other alignment initiative relies on shared data. Two teams working from different numbers will argue past each other forever. Pipeline coverage analysis becomes meaningless without a trustworthy single source.

If Q1 (MQL/SQL) and Q5 (ICP) both score 0: Alignment work can't meaningfully start. You're trying to optimize a process that has no shared inputs. These definitions are the foundation.

If Q7 (attribution) scores 0 but Q10 (shared dashboard) scores 2: You have a data trust problem disguised as a reporting problem. The dashboard exists, but one team doesn't believe the numbers on it. Attribution alignment is the fix.
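These checks can run automatically on each lead's answers before the discussion starts. In this minimal Python sketch, the rule text paraphrases this section, while the function name and the dict format (keyed "Q1" to "Q10") are assumptions.

```python
def prerequisite_flags(scores: dict[str, int]) -> list[str]:
    """Return the gap patterns from this section that apply to one respondent's scores."""
    flags = []
    if scores["Q3"] == 0:
        flags.append("Q3 = 0: fix the CRM as single source of truth before anything else")
    if scores["Q1"] == 0 and scores["Q5"] == 0:
        flags.append("Q1 and Q5 = 0: agree MQL/SQL and ICP definitions first")
    if scores["Q7"] == 0 and scores["Q10"] == 2:
        flags.append("Q7 = 0 with Q10 = 2: data-trust problem; fix attribution")
    return flags


sales_view = {"Q1": 0, "Q2": 1, "Q3": 2, "Q4": 1, "Q5": 0,
              "Q6": 1, "Q7": 0, "Q8": 0, "Q9": 1, "Q10": 2}
for flag in prerequisite_flags(sales_view):
    print(flag)  # prints the Q1/Q5 flag, then the Q7/Q10 flag
```

Run it on both leads' scores. If a flag appears for only one side, that disagreement deserves the same attention as a 2-point gap.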

Running the Diagnostic as a Team Exercise

The full protocol, step by step:

- Both the marketing lead and sales lead complete the diagnostic independently, without discussing scores first. If you have a RevOps lead, they complete it as a third observer.

- Reveal scores question by question, not total scores first. Use Q-by-Q comparison to surface specific disagreements.

- For each question where scores differ by 2 points: spend time here. This is a structural misalignment, not a scoring error.

- Agree on the top 3 questions with the lowest combined score that aren't already being addressed.

- Assign a named owner and a 30-day action for each of the top 3.

- Set a calendar reminder to re-run the diagnostic in 90 days.
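For teams that track the exercise in a script rather than a whiteboard, steps four to six of the protocol can be sketched in Python. Everything here is an assumption layered on the protocol: the function names, the dict format, the owner names, and the tie-break. When two questions have the same combined score, the sketch ranks the larger marketing/sales disagreement first and then falls back to question order. The protocol itself leaves ties to the team.

```python
from datetime import date, timedelta

QUESTIONS = [f"Q{i}" for i in range(1, 11)]


def top_three(marketing, sales, already_addressed=frozenset()):
    """Three lowest combined-score questions not already being worked on.

    Assumed tie-break: larger disagreement first, then question order
    (sorted() is stable).
    """
    candidates = [q for q in QUESTIONS if q not in already_addressed]
    return sorted(
        candidates,
        key=lambda q: (marketing[q] + sales[q], -abs(marketing[q] - sales[q])),
    )[:3]


def action_plan(priorities, owners, session_day):
    """Named owner and a 30-day due date per priority, plus the 90-day re-run date."""
    items = [
        {"question": q, "owner": owners[q], "due": session_day + timedelta(days=30)}
        for q in priorities
    ]
    return items, session_day + timedelta(days=90)


marketing = {"Q1": 0, "Q2": 1, "Q3": 2, "Q4": 1, "Q5": 0,
             "Q6": 1, "Q7": 2, "Q8": 2, "Q9": 1, "Q10": 1}
sales = {"Q1": 1, "Q2": 1, "Q3": 2, "Q4": 1, "Q5": 0,
         "Q6": 0, "Q7": 0, "Q8": 0, "Q9": 1, "Q10": 2}

priorities = top_three(marketing, sales, already_addressed={"Q5"})
print(priorities)  # ['Q1', 'Q6', 'Q7']
owners = {"Q1": "Head of Marketing", "Q6": "RevOps lead", "Q7": "Head of Sales"}  # hypothetical owners
items, rerun = action_plan(priorities, owners, date(2024, 1, 15))
print(rerun)  # 2024-04-14
```

Q5 has the lowest combined score, but it is excluded because the team is already working on it. Q7 then edges out Q2, Q4, and Q9 (all combined score 2) because marketing and sales split 2 versus 0 on it.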

What not to do: Don't average your scores together into a single number and move on. The gaps between perspectives are the point. If marketing scores Q8 a 2 (structured feedback exists) and sales scores it a 0 (they never give feedback that lands), that's a broken process, not a misread question.

Question-to-Article Mapping

Use this table to route each diagnostic gap to the right deep-dive resource in this library.

| Question | Topic Area | Go-to Article |
|---|---|---|
| Q1: MQL/SQL definitions | Shared definitions | MQL Definition Framework |
| Q2: Handoff SLA | Response time | Five-Minute Response SLA |
| Q3: CRM as source of truth | Data infrastructure | CRM as Single Source of Truth |
| Q4: Joint meeting cadence | Operating rhythm | Joint Pipeline Review Cadence |
| Q5: Shared ICP | Targeting | Shared ICP Framework |
| Q6: Lead rejection/recycling | Handoff process | Lead Rejection and Recycling |
| Q7: Attribution agreement | Measurement | Attribution Models Both Teams Trust |
| Q8: Sales feedback to marketing | Feedback loops | Win/Loss Feedback to Marketing |
| Q9: Joint content process | Enablement | Sales Enablement Content vs Field Needs |
| Q10: Shared dashboard | Visibility | 8 Shared Dashboards Revenue Teams |

Rework Analysis: Across mid-market revenue teams, the most common failure pattern is a Tier 3 score on the diagnostic combined with Tier 1 behavior in practice. Teams have documented their MQL definitions, run joint meetings, and built a CRM-based dashboard, but the execution is inconsistent enough that outcomes look like Tier 1. The diagnostic gap between Q3 (CRM as source of truth) and Q7 (attribution agreement) is the single most reliable indicator of this pattern: if Q3 scores 2 and Q7 scores 0, the data exists but isn't trusted, which means every downstream decision is made with contested evidence. The fix is a joint attribution working session before the next quarter starts, not another pipeline review.

The One Question That Matters

A diagnostic is only useful if it drives a decision. After you complete this, before you walk out of the room, answer one question together:

Which single gap, if fixed in the next 30 days, would most improve our pipeline reliability?

Not the gap that's easiest to fix. Not the one that makes marketing look better or sales look better. The one that, if resolved, would change the reliability of pipeline from uncertain to predictable.

That's where you start.

For patterns that keep showing up despite fixes, see Common Alignment Failures and Fixes. For the full five-tier model with revenue benchmarks, see Alignment Maturity Model. If your Tier 4-5 priority is forecasting accuracy, forecasting fundamentals covers the mechanics that make joint forecasting possible.

Frequently Asked Questions

How do we score the diagnostic if marketing and sales give different answers?

Score them separately. That's the point. When marketing scores a question 2 and sales scores it 0, you've identified a structural misalignment, not a scoring error. Don't average the scores. Hold a working session on every question where the two scores differ by 2 points. The gap between perspectives is the most actionable output of the exercise.

Who should take this diagnostic?

The head of marketing and the head of sales complete it independently and simultaneously. If you have a RevOps lead, they complete a third copy as an observer. Do not share answers until both parties finish. For companies without a formal marketing leader, the person who owns demand generation makes the best marketing proxy. The exercise loses most of its value if the same person completes both sides.

How often should we run this diagnostic?

Quarterly is the recommended cadence. Moving between tiers takes 60-90 days of consistent execution, so quarterly runs show meaningful progress without asking teams to score themselves too often. Book next quarter's session at the same time you close the current one. Alignment initiatives without a follow-up date on the calendar rarely get done.

What if our scores disagree significantly across most questions?

Having more than three questions with a 2-point gap between marketing and sales signals a Tier 1 or low Tier 2 organization, regardless of the individual scores. Prioritize the three questions where the disagreement is largest and the combined score is lowest; those are the process gaps creating the most pipeline risk. Don't try to fix all of them simultaneously: pick one and address it structurally in the next 30 days.

Can we use this diagnostic with a new CMO or CRO?

Yes, and it's especially valuable for onboarding a new revenue leader. Running the diagnostic in the first 30 days gives an incoming CMO or CRO a structured map of where alignment stands rather than relying on anecdotal impressions from either team. The question-by-question comparison also surfaces where the predecessor's team believed processes were working versus where they actually were.

What's the minimum viable first step for a Tier 1 team?

Three things, in order: agree on a written MQL/SQL definition (Q1), write down a shared ICP (Q5), and schedule a weekly joint pipeline meeting (Q4). These three moves cost nothing except time and will do more for alignment than any software purchase. Don't add tooling until these three are running consistently for at least 60 days.

How do we prevent the diagnostic from becoming a blame session?

Frame it explicitly before the session starts: the goal is to find structural gaps, not to prove one team is performing better than the other. Both marketing and sales leaders score independently and reveal scores simultaneously, question by question. RevOps (or the facilitator) presents the comparison as process data, not performance data. Questions that show a 2-point gap go into a "fix-it list" with named owners and 30-day actions. They don't become a retrospective about who failed.


Senior Operations & Growth Strategist
