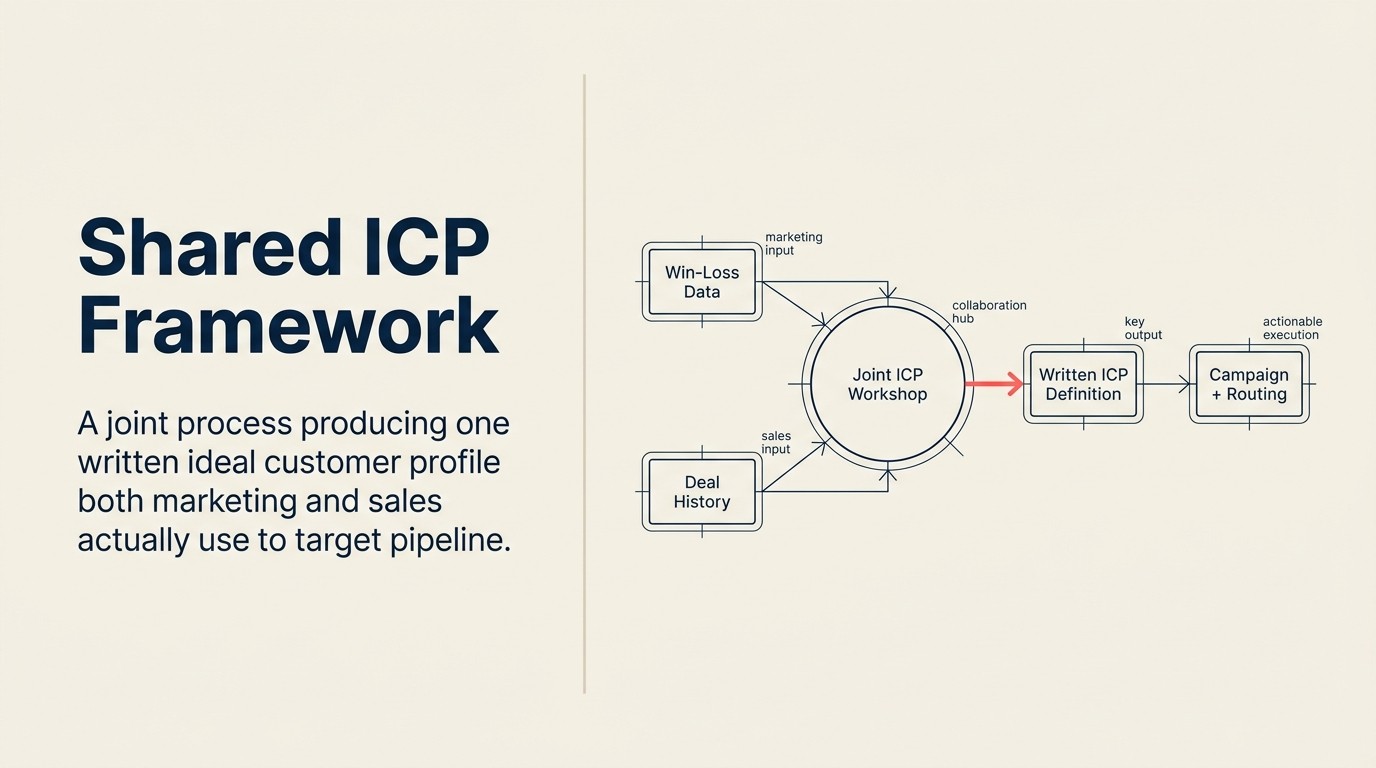

Shared ICP Framework: How Marketing and Sales Build One Ideal Customer Profile Together

Ask your CMO to describe your ideal customer. Then ask your CRO. You'll get two different companies.

The CMO will describe the segment their campaigns convert best. The CRO will describe the deals their top reps keep closing. Both profiles are real, and both are partial. Marketing's ICP reflects campaign performance signals; sales' ICP reflects deal experience and recency bias. Neither team has the full picture, and neither definition is built from the other team's data.

This gap is expensive. When marketing targets their version of the ICP, they attract leads that sales can't close. When sales chases their version, they pursue accounts marketing never warmed up. The pipeline looks active but converts poorly, and both teams point at the other. For the vocabulary around ICP, persona, and scoring terms, the marketing-sales alignment glossary is the canonical reference.

The fix isn't choosing one team's version. It's building one definition together, from both datasets, and writing it down. Gartner defines the ICP as the firmographic, environmental, and behavioral attributes of accounts most likely to become a company's highest-value customers, a standard applicable regardless of company size.

Why ICPs Drift Apart

Even teams that start with a shared definition often end up with two separate versions within 12 to 18 months. The reasons are structural.

Marketing optimizes for volume and reach. Campaign performance pressure pushes marketers toward larger target audiences. The adjacent segment that looks like the ICP (same industry, slightly smaller company) gets included because it improves campaign metrics. Over time, the ICP definition inflates quietly. This directly drives up MQL rejection rates when those fringe leads reach the sales queue.

Sales remembers its biggest wins. Reps anchor on the deals that felt good, often the largest, the fastest, or the most technically interesting. Those outliers become the mental model for "ideal customer," even when they're not representative of the highest-conversion segment.

Neither team shares the full signal set. Marketing sees top-of-funnel conversion data, campaign engagement, and content consumption. Sales sees qualification calls, objection patterns, and closed-won context. The data that would correct each team's blind spot lives in the other team's workflow, and it rarely flows back.

The result: two sincere but divergent definitions, both partially right, neither complete. The next section shows what needs to be in a shared ICP to fix that.

Key Facts: ICP Quality and Pipeline Impact

- Companies that define their ICP and align it across marketing and sales report 68% higher account engagement rates compared to those operating without a shared definition, per ITSMA research on account-based strategies.

- 74% of B2B companies either have no documented ICP or have one that only one team actually uses, according to a SiriusDecisions survey on go-to-market alignment.

- Sales teams who work a well-defined ICP convert at 2.4x the rate of teams pursuing a broad or undefined target market, per Gartner analysis of enterprise sales performance.

- Organizations that update their ICP quarterly (rather than annually or ad hoc) see 23% better MQL-to-SQL conversion rates, per Forrester's State of Revenue Operations report.

- The top reason for MQL rejection, cited in 58% of alignment audits, is "wrong ICP fit," which is a definition failure rather than a lead quality failure, according to SiriusDecisions research.

The 2.4x conversion gap compounds. Over a full fiscal year, across a 20-rep team, it is a pipeline difference measured in millions, not percentage points.

The 74% figure is not a knowledge problem. Companies generally know what their ideal customer looks like. The definition lives in one team's data, and the other team never sees it.

Quarterly review doesn't mean rewriting from scratch. It means a 30-minute check against closed-won and churn data to confirm the definition still fits the current market.

What a Shared ICP Contains: Six Dimensions

A durable ICP isn't a one-liner. It answers six distinct questions, and both marketing and sales must agree on the answers.

The 6-Dimension ICP is the framework for building a durable, jointly owned ideal customer profile. Each dimension addresses a distinct failure mode: companies that skip firmographic specificity attract leads marketing can't score; companies that skip exclusion criteria waste sales cycles on accounts that look ideal but consistently churn. All six dimensions (Firmographic, Technographic, Behavioral, Economic, Strategic, and Exclusion) must be present for the ICP to function as an operational filter rather than a marketing slide.

1. Firmographic Fit

The observable, static characteristics of the target company. Industry vertical, company size (employee count and/or revenue range), geography, funding stage, business model (SaaS, services, manufacturing, etc.).

Be specific on the ranges. "Mid-market" means different things to marketing and sales. Write the actual numbers: 50-500 employees, $5M-$100M ARR, US-based, with a B2B customer base.

Firmographic fit is the filter. You should be able to run a company through the firmographic criteria and know whether it's in-ICP or out before any conversation happens.
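As a rough illustration of "run a company through the criteria," the example ranges above can be written as a single yes/no check. This is a minimal sketch: the `Company` fields and the function name are hypothetical, and the thresholds are just the sample numbers from this section, not a recommendation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Company:
    # Hypothetical record shape; map these to whatever your CRM exports.
    name: str
    employees: int
    arr_usd: float
    country: str
    sells_b2b: bool


def in_firmographic_icp(c: Company) -> bool:
    """Return True if the company passes every firmographic criterion.

    Ranges mirror the example in the text: 50-500 employees,
    $5M-$100M ARR, US-based, B2B customer base.
    """
    return (
        50 <= c.employees <= 500
        and 5_000_000 <= c.arr_usd <= 100_000_000
        and c.country == "US"
        and c.sells_b2b
    )


if __name__ == "__main__":
    acme = Company("Acme", employees=120, arr_usd=12_000_000, country="US", sells_b2b=True)
    print(acme.name, "in ICP:", in_firmographic_icp(acme))
```

Writing the criteria as explicit numeric bounds is the point: both teams can read the function and see the same definition of "mid-market."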

2. Technographic Fit

What technology the target company is already using, particularly anything your product integrates with, competes with, or requires. A SaaS product that integrates with Salesforce may only work for Salesforce shops. A tool that replaces a specific workflow may not work for companies that outsource that workflow.

Technographic fit is often overlooked in early ICP definitions. It becomes critical once you start scoring leads for fit and intent, because a company with the right firmographic profile but the wrong tech stack may not convert.

3. Behavioral Fit

How the target company's buyers research, evaluate, and make decisions. What does a typical buying cycle look like? How many people are typically involved? How long does evaluation take? Do they respond to direct outreach or prefer self-serve research?

This dimension comes primarily from sales experience and win/loss analysis. It's not observable from campaign data. It's where sales' input is irreplaceable.

4. Economic Fit

Does the target company have the budget for your product and the decision-making process to approve it? A company with the right firmographics but the wrong economic structure (heavily cost-constrained, no discretionary budget, overly complex procurement) may be ICP-adjacent but not truly ICP.

Economic fit also includes willingness to pay, not just ability to pay. Some industries price your product category much lower than others. Knowing which segments undervalue the category helps you avoid costly deal cycles that die on price.

5. Strategic Fit

Why does this type of company buy your product? What problem do they prioritize that your product solves? What's the urgency driver: regulatory pressure, growth friction, competitive threat, operational failure?

Strategic fit is the "why now" dimension. Companies that fit firmographically but don't feel the pain your product addresses may convert slowly or not at all. Understanding the strategic conditions that make a company ready to buy helps both teams prioritize timing.

6. Exclusion Criteria

Who is never a good fit, regardless of how well they match everything else? This is the most underused dimension of an ICP.

Exclusion criteria come from closed-lost analysis and churn data. What types of companies consistently fail to get value from your product? What objections always kill deals regardless of champion strength? Which company profiles look ideal on paper but land in churn within 12 months? Running a structured win-loss review is the most reliable way to surface these patterns. HBR research on sales wins and losses confirms that a focused post-deal retrospective produces insights that improve future win rates across both sales and marketing.

Naming negative personas explicitly, and building them into scoring, prevents both teams from pursuing leads that waste time and inflate vanity metrics.

The Joint ICP Workshop: Building It Together

A 90-minute session with the right inputs and a simple facilitation structure is enough to produce a shared ICP draft both teams can work from.

Before the Session: Gather Three Inputs

Input 1: Marketing's top-converting segments. Pull the last 12 months of campaign performance by firmographic segment. Which industries, company sizes, and sources converted to MQL, SAL, and SQL at the highest rates? Bring that data to the session.

Input 2: Sales' top 20 closed-won deals. Ask sales leadership to identify the 20 deals from the last 12 months they'd most want to replicate. For each deal, pull: industry, company size, revenue, tech stack, buying cycle length, deal size, and deal champion title. Bring that profile to the session. Your lead qualification frameworks will reflect where these closed-won deals cleared the bar, and where they didn't.

Input 3: CS's lowest-churn cohort. If you have customer success data, identify the cohort of customers with the lowest churn rate over 24 months. What do they have in common? This is the most reliable ICP signal available: these are the customers for whom your product created lasting value.

The Session: Convergence Exercise

- Display all three datasets side by side.

- Ask: where do they overlap? What company type shows up in all three: high campaign conversion, high closed-won representation, and low churn?

- Mark the agreements explicitly. "We agree that companies with 50-200 employees convert and retain at the highest rate." Write it down.

- Name the disagreements. "Marketing's data shows healthcare converting well. Sales' data shows healthcare deals taking 9 months and dying on compliance requirements." That disagreement is a negative persona candidate.

- Draft the six-dimension profile together, using the areas of convergence as the foundation.

The convergence exercise works because it forces both teams to look at the same data at the same time. It's harder to defend a partial view when the full picture is visible.
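If the three inputs are prepared as lists of segment labels, the overlap step can be sketched as a set intersection. The segment labels and the `convergence` function below are illustrative assumptions; real inputs would come from your campaign, CRM, and customer success exports.

```python
def convergence(datasets: dict[str, set[str]]) -> tuple[set[str], dict[str, list[str]]]:
    """Split segments into agreed (present in every dataset) and disputed.

    For each disputed segment, list which datasets support it, so the
    room can see exactly who is (and is not) vouching for that segment.
    """
    all_segments = set().union(*datasets.values())
    agreed = set.intersection(*datasets.values())
    disputed = {
        seg: sorted(name for name, segs in datasets.items() if seg in segs)
        for seg in sorted(all_segments - agreed)
    }
    return agreed, disputed


if __name__ == "__main__":
    # Hypothetical segment labels in "industry/employee-range" form.
    inputs = {
        "marketing": {"saas/50-200", "healthcare/50-200", "fintech/201-500"},
        "sales": {"saas/50-200", "fintech/201-500"},
        "cs": {"saas/50-200", "saas/201-500"},
    }
    agreed, disputed = convergence(inputs)
    print("Agreed:", agreed)
    for segment, supporters in disputed.items():
        print(f"Disputed: {segment} (supported by {', '.join(supporters)})")
```

Segments supported by only one dataset, like the healthcare example above, are the ones to discuss as negative persona candidates.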

Documenting the ICP: Format That Works Operationally

A shared ICP only creates value if both teams use it day-to-day. That means the format has to be operational, not archival.

One-page written definition. Fits on a single page. Readable in under two minutes. If it requires a presentation to explain, it won't get used.

Scoring rubric. A simple table that lets any marketer or rep rate a new lead against ICP criteria: firmographic fit (0-50 points), technographic fit (0-20 points), and behavioral indicators (0-30 points), for a maximum of 100. The total score maps to an ICP tier (A, B, C, or out). The joint lead scoring model shows how to wire this into your MAP.

Named negative personas. Three to five company types that look ICP-adjacent but consistently fail. "Agencies under 50 employees" or "companies without a dedicated ops function," whatever your data shows as no-fit regardless of surface-level match.

The rubric gets wired into your MAP as the fit score component of your lead scoring model. The negative personas become disqualification criteria. The one-page definition goes into new hire onboarding for both teams. See the Marketing-Sales Alignment Glossary for canonical definitions of ICP, buyer persona, and scoring terms.
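A minimal sketch of the rubric as code, assuming the point caps given above (50, 20, and 30). The tier cutoffs (80, 60, 40) are placeholders invented for illustration, since the article does not specify them; your own cutoffs should come out of the joint session.

```python
# Point caps come from the rubric described above.
MAX_POINTS = {"firmographic": 50, "technographic": 20, "behavioral": 30}

# ASSUMPTION: these cutoffs are hypothetical, not from the article.
TIER_CUTOFFS = [(80, "A"), (60, "B"), (40, "C")]


def icp_tier(scores: dict[str, int], matches_negative_persona: bool = False) -> str:
    """Map per-dimension scores to an ICP tier: "A", "B", "C", or "out".

    A negative-persona match disqualifies the account outright,
    regardless of how many points it would otherwise earn.
    """
    if matches_negative_persona:
        return "out"
    total = 0
    for dimension, cap in MAX_POINTS.items():
        points = scores.get(dimension, 0)
        if not 0 <= points <= cap:
            raise ValueError(f"{dimension} score must be between 0 and {cap}")
        total += points
    for cutoff, tier in TIER_CUTOFFS:
        if total >= cutoff:
            return tier
    return "out"


if __name__ == "__main__":
    lead = {"firmographic": 45, "technographic": 15, "behavioral": 25}
    print("Tier:", icp_tier(lead))
    print("Tier with negative persona:", icp_tier(lead, matches_negative_persona=True))
```

Checking the negative persona first keeps disqualification independent of the point total, which matches how the named negative personas are meant to work.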

ICP vs. Buyer Persona vs. Deal Persona

These three documents are related but distinct. Conflating them creates confusion about who each team is targeting at each stage.

ICP is company-level. It describes the organization, not the individual. It answers: "Is this the right company?"

Buyer Persona is contact-level. It describes the individual who researches and enters the funnel. It answers: "Is this the right person to generate awareness and intent with?"

Deal Persona is contact-level at the economic buyer level. It describes the person (or committee) who has budget authority and approval power. It answers: "Who do I need to get in front of to close this?"

The ICP is the entry gate. Buyer and deal personas describe the individuals you'll encounter inside the gate, at different stages of the funnel, serving different purposes.

See Buyer Persona vs Deal Persona for the full distinction and how the two persona types should be maintained separately.

Keeping the ICP Current: Quarterly Review

An ICP that doesn't get updated becomes fiction over time. Markets shift. Your product adds features that expand the addressable segment. A new competitor emerges that changes who's in-market. Your pricing changes who can afford you. One reliable update trigger: when your MQL-to-SQL conversion rate drops, check whether the ICP has drifted before assuming the scoring model is broken.

Review the ICP quarterly. The trigger questions:

- Has the closed-won cohort from the last 90 days shifted in any of the six dimensions?

- Are there company types appearing in churn data that weren't there 12 months ago?

- Has the campaign conversion data shifted toward or away from the current ICP profile?

- Did sales win or lose a deal type that felt anomalous?

Quarterly review doesn't mean rewriting the ICP every quarter. It means checking whether the current definition still fits. Most quarters, it does. Some quarters, one dimension needs adjustment. Occasionally, a full re-workshop is warranted.
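The first trigger question can be approximated with a simple comparison, assuming each closed-won deal has already been marked as matching or not matching the ICP on each dimension. Everything here is a sketch: the record shape, the 15-point tolerance, and the function names are assumptions, and for the exclusion dimension "match" is taken to mean the account hit none of the exclusion criteria.

```python
DIMENSIONS = (
    "firmographic", "technographic", "behavioral",
    "economic", "strategic", "exclusion",
)


def match_rate(deals: list[dict[str, bool]], dimension: str) -> float:
    """Share of deals that matched the ICP on one dimension (0.0 if no deals)."""
    if not deals:
        return 0.0
    return sum(1 for d in deals if d.get(dimension, False)) / len(deals)


def drifted_dimensions(
    baseline: list[dict[str, bool]],
    recent: list[dict[str, bool]],
    tolerance: float = 0.15,  # ASSUMPTION: hypothetical threshold
) -> list[str]:
    """Return dimensions where the recent match rate fell by more than tolerance."""
    return [
        dim for dim in DIMENSIONS
        if match_rate(baseline, dim) - match_rate(recent, dim) > tolerance
    ]


if __name__ == "__main__":
    all_match = {dim: True for dim in DIMENSIONS}
    off_firmographic = {**all_match, "firmographic": False}
    baseline = [all_match] * 4
    last_90_days = [all_match, all_match, off_firmographic, off_firmographic]
    print("Review these dimensions:", drifted_dimensions(baseline, last_90_days))
```

A flagged dimension is a prompt for the 30-minute conversation, not an automatic rewrite of the ICP.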

The Common Failure: ICP in a Deck Nobody Opens

The most common ICP failure isn't a poorly constructed profile. It's a well-constructed one that never gets used.

The ICP lives in a strategy deck from the planning session six months ago. The CMO knows it exists. The new sales rep has never seen it. The scoring model in the MAP is using last year's criteria. The CRM has no ICP tier field.

An ICP only creates alignment when it's operationalized. That means:

- Wired into the MAP as fit score criteria

- Available as a field in the CRM that reps can reference during qualification

- Visible in the lead routing rules (ICP tier A gets immediate routing, ICP tier C goes to nurture)

- Included in new hire onboarding for both marketing and sales

- Referenced in joint pipeline reviews as a filter ("of these 40 MQLs, how many are ICP tier A?")

If you have an ICP definition that nobody references in weekly operations, you have an adoption problem, not a quality problem.
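The routing and pipeline-review items in the list above can be sketched in a few lines. Tier A and tier C routing follow the text; the tier B destination and the action names are assumptions added for illustration, and a real implementation would live in your MAP or CRM routing rules rather than in a script.

```python
from collections import Counter

# Tier A and C destinations follow the text above.
# ASSUMPTION: the tier B destination and all action names are hypothetical.
ROUTES = {
    "A": "route_to_rep_now",
    "B": "sdr_review",
    "C": "nurture",
}


def route(tier: str) -> str:
    """Return the routing action for an ICP tier; unknown tiers are disqualified."""
    return ROUTES.get(tier, "disqualify")


def tier_breakdown(mql_tiers: list[str]) -> Counter:
    """Count MQLs per ICP tier, for use as a filter in joint pipeline reviews."""
    return Counter(mql_tiers)


if __name__ == "__main__":
    mqls = ["A", "C", "A", "B", "out", "C", "A"]
    counts = tier_breakdown(mqls)
    print(f"Of these {len(mqls)} MQLs, {counts['A']} are ICP tier A")
    for tier in ("A", "B", "C", "out"):
        print(tier, "->", route(tier))
```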

Running the First Joint ICP Session in 90 Minutes

Here's a practical agenda for a team that hasn't done this before:

Minutes 0-15: Each team presents their current ICP definition (even if informal). No debate, just display. Marketing shows their campaign segments. Sales shows their closed-won profile.

Minutes 15-45: Convergence exercise. Identify company attributes that appear in all three datasets (campaign conversion, closed-won, low-churn). Document agreements in real time.

Minutes 45-65: Name the disagreements and decide. For each point where marketing and sales data conflict, decide whether it's a disagreement about definition or a genuine question requiring more data. If the latter, assign a data owner to return with an answer by next week.

Minutes 65-80: Draft the six-dimension profile together. Assign one person to write the one-page version before end of week.

Minutes 80-90: Agree on who owns the ICP going forward, the review cadence, and how it gets wired into scoring and routing.

You won't have a perfect ICP after 90 minutes. But you'll have a shared starting point both teams negotiated, which is already better than two separate definitions nobody discusses.

Rework Analysis: Where ICP Definitions Break Down

In practice, most ICP failures are failures of specificity in two dimensions: firmographic (ranges are too broad) and exclusion (no one wrote down who is never a good fit). Teams that build ICP definitions in planning sessions tend to write aspirational profiles, the company they hope to attract, rather than empirical ones built from closed-won and churn data. The fix is a standing rule: every ICP update must reference at least 20 closed-won deals and 10 churned accounts from the most recent 12 months. An ICP built from actual data, not wishful targeting, produces measurably better MQL acceptance rates and shorter sales cycles, because reps spend their time on accounts that actually convert.
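The standing rule is easy to enforce mechanically before anyone edits the ICP document. The sketch below assumes you can export close dates and churn dates as plain dates; the function name and parameters are hypothetical, while the minimums (20 closed-won, 10 churned, 12 months) come from the rule above.

```python
from datetime import date, timedelta


def evidence_is_sufficient(
    closed_won_dates: list[date],
    churn_dates: list[date],
    today: date,
    min_won: int = 20,
    min_churned: int = 10,
    window_days: int = 365,
) -> bool:
    """Check that an ICP update cites enough recent closed-won and churned accounts."""
    cutoff = today - timedelta(days=window_days)
    recent_won = sum(1 for d in closed_won_dates if cutoff <= d <= today)
    recent_churned = sum(1 for d in churn_dates if cutoff <= d <= today)
    return recent_won >= min_won and recent_churned >= min_churned


if __name__ == "__main__":
    today = date(2025, 1, 1)
    won = [today - timedelta(days=10 * i) for i in range(25)]
    churned = [today - timedelta(days=30 * i) for i in range(10)]
    print("Enough evidence to update the ICP:", evidence_is_sufficient(won, churned, today))
```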

Frequently Asked Questions

What is the difference between a sales ICP and a marketing ICP?

Sales teams build their ICP from closed-won deal patterns, specifically the company attributes that correlated with the fastest close times and the highest deal values. Marketing teams build their ICP from campaign conversion data, the segments that converted to MQL at the highest rates. Both are partial views of the same question. A shared ICP is built from both datasets simultaneously, using the convergence zone (company types that appear in both sales wins and marketing conversions) as the core definition.

How often should we refresh our ICP?

The right default cadence is quarterly. A quarterly review doesn't mean rewriting the ICP. It means a 30-minute check-in against four questions: Has the closed-won cohort from the last 90 days shifted in any of the six dimensions? Are new company types appearing in churn data? Has campaign conversion shifted toward or away from the current profile? Did we win or lose a deal type that felt anomalous? Most quarters the answers will confirm the current definition. When they don't, you have an early signal to update before drift compounds.

What should be in the exclusion criteria section of an ICP?

Exclusion criteria should name the company types that consistently fail despite appearing to fit on surface-level firmographic criteria. These come from two sources: churned customer cohorts (what company types exit early?) and rejected deal patterns (what company profiles always die at a specific stage?). Typical entries look like "companies without a dedicated ops function" or "agencies under 50 employees." Exclusion criteria are as operationally important as inclusion criteria. They prevent both teams from investing time in deals that won't close.

Can a company have more than one ICP?

Yes, and at enterprise scale, multiple ICPs are often necessary. A second product line, a new market segment, or a move up-market (SMB to mid-market, for example) typically requires a separate ICP rather than a revision of the existing one. The practical signal that you need a second ICP: sales is regularly winning deals that don't fit the current ICP definition, or marketing is generating strong MQLs in a segment the current ICP doesn't describe. When this happens, fork the ICP rather than inflate the existing definition to cover both segments.

What is the biggest mistake teams make when building a shared ICP?

The most common mistake is building the ICP in a planning session without referencing actual data. Teams describe their ideal customer from memory, which is usually the biggest recent win, not the most representative profile of their best customers. The session then produces an aspirational definition that over-indexes on outlier deals. The fix is a hard rule: bring the closed-won data, the churn data, and the campaign conversion data to the session before writing a single word. Without those three datasets, the ICP will drift back toward aspiration within 90 days.

How does the ICP connect to lead scoring?

The ICP's firmographic and technographic dimensions map directly to the "fit score" component of your lead scoring model. A company that clears the ICP threshold on firmographic criteria gets a high fit score; a company that fails the ICP gate gets a low one regardless of behavioral signals. The ICP also defines the negative personas that map to disqualifying criteria in the scoring model. Contacts from companies below the minimum size or outside target industries should not reach MQL threshold no matter how engaged they are.
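The gating behavior described above, where engagement cannot rescue a poor-fit account, can be sketched as follows. The threshold of 70 and the parameter names are assumptions for illustration; in practice this logic is configured inside your MAP's scoring rules.

```python
def reaches_mql(
    fit_score: int,
    engagement_score: int,
    passes_icp_gate: bool,
    mql_threshold: int = 70,  # ASSUMPTION: hypothetical threshold
) -> bool:
    """Decide whether a contact reaches MQL status.

    A company that fails the ICP gate (for example, below the minimum
    size or outside the target industries) never reaches MQL, no matter
    how high the engagement score is.
    """
    if not passes_icp_gate:
        return False
    return fit_score + engagement_score >= mql_threshold


if __name__ == "__main__":
    # Highly engaged contact at a company that fails the ICP gate.
    print("Out-of-ICP, very engaged:", reaches_mql(10, 95, passes_icp_gate=False))
    # Moderately engaged contact at a strong-fit company.
    print("In-ICP, moderately engaged:", reaches_mql(45, 30, passes_icp_gate=True))
```

Checking the gate before adding scores is the design choice that matters: if fit and engagement were simply summed, a very active contact at a no-fit company could still cross the threshold.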
