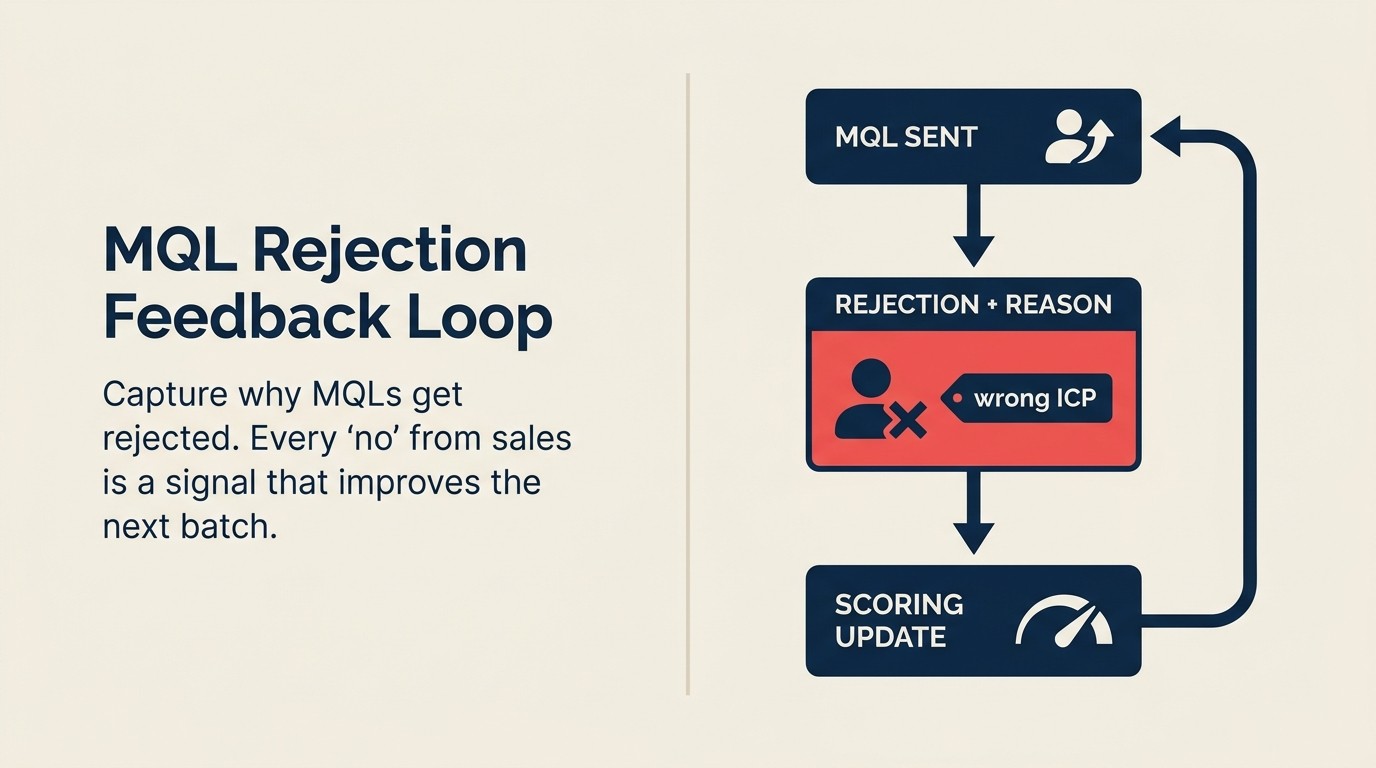

The MQL Rejection Feedback Loop: Turning Sales Rejects Into Marketing Intelligence

Most companies track MQL volume. They count how many leads marketing passed to sales, compare it to target, and move on. Almost none track why MQLs get rejected.

That gap is where alignment dies.

When a rep rejects an MQL without logging the reason, that rejection is invisible. Marketing doesn't know whether it was a fit problem, a timing problem, or a data problem. It can't fix what it can't see. So it keeps producing the same leads, sales keeps rejecting a portion of them, and both teams blame each other for the quarter's shortfall.

The MQL rejection feedback loop fixes this by turning every "no" into a data point and routing that data point to the action it actually requires. Gartner's MQL definition frames an MQL as a lead the marketing team has reviewed and deemed ready for sales. Rejection signals a breakdown at that review threshold, not a random failure.

Why Rejections Are Your Best Lead Quality Signal

Acceptances confirm what's working. Rejections reveal what's broken.

When a rep accepts an MQL, they're telling marketing: "this meets the bar." That's useful confirmation. But it doesn't tell you which dimension of quality they're assessing, and it says nothing about the leads they turn back.

Rejections are where the intelligence lives. A 35% rejection rate with no categorized reasons is just noise: a number that makes both teams feel bad without telling either team what to do. The same 35% rate, with categorized reasons, is a roadmap.

If 60% of rejections are "not ICP," marketing has an audience targeting problem. Fix the campaign segmentation. Your shared ICP framework is the reference point for what "ICP" actually means to both teams.

If 40% of rejections are "low intent," marketing has a scoring problem. The model is promoting leads before they've shown buying behavior.

If 25% of rejections are "bad data," marketing has a data quality problem, or a form problem. Reps are bouncing leads because there's no valid phone number or the company name is "test."

These are three completely different problems with three completely different fixes. Without categorized rejection reasons, you treat them as one undifferentiated "lead quality issue" and guess at solutions that may not match the actual root cause. The next question is: what are the actual root causes, and which one is yours?

Key Facts: MQL Rejection and Lead Quality

- The average B2B MQL rejection rate is 44%, meaning nearly half of all leads passed to sales are turned back, according to Marketing Sherpa's B2B Benchmark Report.

- Only 23% of companies systematically capture and categorize MQL rejection reasons in their CRM, leaving the majority without actionable data on why leads fail, per Demand Gen Report.

- When rejection reasons are categorized and actioned, companies reduce their rejection rate by an average of 31% within two quarters of implementing a structured feedback loop, based on SiriusDecisions research.

The Three Root Causes of MQL Rejection

Every MQL rejection traces back to one of three root causes. Conflating them is the fastest way to waste both teams' time.

Root Cause 1: Wrong fit. The lead has attributes that don't match your ICP: wrong company size, wrong industry, wrong job title, wrong geography. The lead may be a real person at a real company who is genuinely interested, but they're not the buyer your business can serve profitably. Fit failures also distort measurement: marketing attribution research makes clear that if wrong-fit leads are mixed into your closed-won pool, every channel looks more effective than it is. Fit rejections signal a problem with audience targeting, content channel selection, or the ICP definition itself.

Root Cause 2: Wrong timing. The lead has the right attributes but the wrong behavior signals. They're a genuine ICP match who's in early research mode, not actively evaluating solutions. Or they downloaded a whitepaper six months ago and the nurture sequence promoted them before they re-engaged. Timing rejections signal a problem with scoring model thresholds, lead decay rules, or the nurture sequence triggering premature promotion.

Root Cause 3: Wrong data. The lead record is incomplete or inaccurate. No phone number. Company name entered as "N/A." Job title field says "asdf." Personal email address with no company association. The lead may actually be a great fit with real buying intent. You just can't tell because the record is broken. Data rejections signal a problem with form validation, data enrichment configuration, or CRM hygiene.

Each root cause requires a different fix. Fit problems require campaign changes. Timing problems require scoring model changes. Data problems require enrichment and form field changes. Building your rejection taxonomy around these three causes is what makes the feedback loop operational rather than decorative. Which means the taxonomy design itself matters more than most teams realize.

Building a Rejection Taxonomy

The rejection taxonomy is a dropdown in your CRM: five to seven options that cover 90% of why reps reject MQLs. It should be short enough to be self-explanatory and specific enough to be actionable.

| Category | Root Cause | What It Signals |
|---|---|---|
| Not ICP: attribute mismatch | Wrong fit | Targeting, segmentation, or ICP definition issue |
| Low intent: no buying signal | Wrong timing | Scoring weights, behavioral thresholds, or lead decay |
| No contact info / bad data | Wrong data | Form validation, enrichment, or CRM hygiene |
| Duplicate / already in pipeline | Wrong data | CRM deduplication or routing logic |
| Too early: nurture candidate | Wrong timing | Premature promotion, nurture sequence timing |
| Too late: already evaluated competitor | Wrong timing | Lead decay or timing of re-engagement |
| Other | (none) | Use sparingly; flag for quarterly review |
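The table above can be held as a small lookup so that every dropdown value resolves to exactly one root cause. This is a minimal sketch in Python, not a CRM configuration: the names `RootCause`, `REJECTION_TAXONOMY`, and `root_cause_for` are hypothetical, and only the category labels and their root causes come from the table.

```python
from enum import Enum
from typing import Optional


class RootCause(Enum):
    WRONG_FIT = "wrong fit"
    WRONG_TIMING = "wrong timing"
    WRONG_DATA = "wrong data"


# Dropdown value -> root cause, copied from the taxonomy table.
# "Other" maps to None: it has no root cause and is flagged for quarterly review.
REJECTION_TAXONOMY = {
    "Not ICP: attribute mismatch": RootCause.WRONG_FIT,
    "Low intent: no buying signal": RootCause.WRONG_TIMING,
    "No contact info / bad data": RootCause.WRONG_DATA,
    "Duplicate / already in pipeline": RootCause.WRONG_DATA,
    "Too early: nurture candidate": RootCause.WRONG_TIMING,
    "Too late: already evaluated competitor": RootCause.WRONG_TIMING,
    "Other": None,
}


def root_cause_for(reason: str) -> Optional[RootCause]:
    """Return the root cause for a dropdown value; refuse anything off-taxonomy."""
    if reason not in REJECTION_TAXONOMY:
        raise ValueError(f"Unknown rejection reason: {reason!r}")
    return REJECTION_TAXONOMY[reason]
```

Rejecting unknown values outright mirrors the dropdown-only rule: a reason that is not in the taxonomy should never reach the aggregation step.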

The rule for "Other" is strict: no more than 10% of rejections should land here. If "Other" is consistently above 10%, either the taxonomy is missing a category or reps are using it as a lazy default. Review it quarterly and add a category if a pattern is emerging in the free-text notes.
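The 10% cap on "Other" is easy to check mechanically. The sketch below assumes rejections arrive as a plain list of reason strings; the function names and the `OTHER_CAP` constant are illustrative, and only the 10% figure comes from the rule above.

```python
from collections import Counter

OTHER_CAP = 0.10  # from the rule above: no more than 10% of rejections in "Other"


def other_share(reasons):
    """Fraction of rejections coded as "Other" (0.0 for an empty list)."""
    if not reasons:
        return 0.0
    return Counter(reasons)["Other"] / len(reasons)


def other_needs_review(reasons, cap=OTHER_CAP):
    """True when "Other" is above the cap and the taxonomy deserves a look."""
    return other_share(reasons) > cap


# Example: 3 of 20 rejections are "Other", which is 15% and above the cap.
sample = ["Other"] * 3 + ["Not ICP: attribute mismatch"] * 17
print(other_needs_review(sample))
```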

Free-text rejection reasons are not allowed as the primary field. At 50 rejections a month, free text is analyzable. At 500, it becomes an unmanageable pile of unique sentences that no one can aggregate. Dropdowns are non-negotiable for a feedback loop that needs to scale.

The Rejection-to-Intelligence Loop: A 4-Step Framework

The Rejection-to-Intelligence Loop is the operational framework that converts raw MQL rejection data into three types of actionable marketing intelligence: audience targeting adjustments, scoring model calibrations, and data quality fixes. The four steps run sequentially for each rejected lead, with aggregate analysis happening at weekly and monthly intervals.

Step 1: Capture with taxonomy. The rep selects one of five to seven required rejection reason codes when marking a lead as rejected. No free text as primary input: dropdown only. This enforces consistent categorization that's aggregable across hundreds or thousands of rejections.

Step 2: Route by root cause. Each rejection category maps to a predefined next action: wrong-fit leads are disqualified, wrong-timing leads re-enter nurture with a category-specific sequence, and bad-data leads go to a data enrichment queue. Routing is automated by the reason code. No human judgment required for individual lead disposition.

Step 3: Aggregate at weekly cadence. At the weekly lead quality call, demand gen reviews the rejection category breakdown and identifies the numerically dominant pattern. Is it the same category as last week? Is it trending up? Did a specific campaign produce an outsized proportion of a particular rejection type? Pattern visibility turns individual data points into system signals.

Step 4: Escalate when thresholds breach. Each rejection category has a predefined threshold: if "not ICP" exceeds 20% of total rejections for four consecutive weeks, the ICP definition review is automatically triggered. Thresholds replace the ambiguous question of "when should we be concerned?" with a pre-agreed answer that removes the need for a judgment call.

Rework Analysis: The difference between a rejection feedback loop that improves lead quality and one that doesn't comes down to Step 2: whether routing is automated by reason code or handled manually. Manual routing introduces lag (rejected leads sit unactioned for days), inconsistency (different people make different routing decisions for the same reason code), and volume failure (at 200+ rejections per month, manual routing breaks down entirely). Automated routing by taxonomy category is what makes the loop scalable. The taxonomy decision, specifically which five to seven reason codes to use, is more consequential than any tooling choice.
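Routing by reason code can be as simple as two lookups. The following is a sketch under stated assumptions rather than a workflow for any particular CRM: the queue names are hypothetical placeholders, duplicates follow the "wrong data" route because that is how the taxonomy classifies them, and sending "Other" to manual review is an assumption the article does not specify.

```python
# Reason code -> root cause, following the taxonomy table in this article.
ROOT_CAUSE_BY_REASON = {
    "Not ICP: attribute mismatch": "wrong fit",
    "Low intent: no buying signal": "wrong timing",
    "Too early: nurture candidate": "wrong timing",
    "Too late: already evaluated competitor": "wrong timing",
    "No contact info / bad data": "wrong data",
    "Duplicate / already in pipeline": "wrong data",
}

# Root cause -> next action. Queue names are hypothetical placeholders.
QUEUE_BY_ROOT_CAUSE = {
    "wrong fit": "disqualified",
    "wrong timing": "nurture",
    "wrong data": "enrichment_queue",
}


def route(lead_id: str, reason: str) -> dict:
    """Pick the next action for a rejected lead from its reason code alone."""
    if not reason:
        # Mirrors the required-field rule: no reason code, no rejection.
        raise ValueError("A rejection reason code is required")
    if reason == "Other":
        # Assumption: "Other" has no root cause, so a person looks at it.
        queue = "manual_review"
    elif reason in ROOT_CAUSE_BY_REASON:
        queue = QUEUE_BY_ROOT_CAUSE[ROOT_CAUSE_BY_REASON[reason]]
    else:
        raise ValueError(f"Unknown rejection reason: {reason!r}")
    return {"lead_id": lead_id, "reason": reason, "queue": queue}
```

Because the function depends only on the reason code, two people (or two automations) cannot route the same rejection differently, which is the inconsistency that manual routing introduces.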

The Feedback Loop in Practice

The loop has four steps, each happening in sequence.

Step 1: Rep selects rejection reason. When a rep marks an MQL as rejected in the CRM, the rejection reason dropdown is required. Ten seconds, one click. The requirement is enforced as a field validation: the lead status cannot move to "Rejected" without a reason code. This is not optional. Optional fields get filled in 30-40% of the time. Required fields get filled in 95%+ of the time.

Step 2: Rejected lead auto-routes to correct next action. Each rejection category triggers a different workflow. "Not ICP" routes the lead to a disqualification queue (it exits the active pipeline but stays in the database). "Too early" routes the lead to the appropriate nurture track with a 90-day re-engagement sequence. "No contact info" routes to a data enrichment queue for a quick automated pass against your enrichment tool. The routing is automated. No one has to manually decide where each rejected lead goes. The reason code makes the decision.

Step 3: Marketing reviews rejection reasons weekly. At the weekly lead quality call, demand gen pulls the week's rejection breakdown by category. Which category was largest? Is it trending up or down compared to the previous four weeks? Was there a specific campaign or source that produced an outsized proportion of rejections? This review is what converts the taxonomy data into a conversation.

Step 4: Monthly rollup triggers scoring or ICP review if a category exceeds threshold. The weekly review catches tactical issues. The monthly rollup catches structural drift. Forrester's revenue operations research identifies systematic measurement of lead quality, including rejection patterns, as one of the core practices that separates high-growth B2B firms from the rest. When a rejection category has been elevated for four or more consecutive weeks, it's not a bad batch. It's a broken system that needs a process-level change, not a campaign tweak. At that point, your MQL definition framework needs to be reopened and renegotiated.
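The weekly review in Step 3 comes down to two questions: which category was largest, and did one campaign produce an outsized share of a rejection type. A minimal sketch, assuming each rejection is exported as a dict with hypothetical `reason` and `campaign` keys (shortened reason labels are used here for readability):

```python
from collections import Counter, defaultdict


def category_shares(rejections):
    """Share of the week's rejections per reason, largest first."""
    counts = Counter(r["reason"] for r in rejections)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {reason: n / total for reason, n in counts.most_common()}


def top_reason_by_campaign(rejections):
    """For each campaign, the most common rejection reason and its count."""
    per_campaign = defaultdict(Counter)
    for r in rejections:
        per_campaign[r["campaign"]][r["reason"]] += 1
    return {c: counts.most_common(1)[0] for c, counts in per_campaign.items()}


week = [
    {"campaign": "webinar", "reason": "Not ICP"},
    {"campaign": "webinar", "reason": "Not ICP"},
    {"campaign": "webinar", "reason": "Low intent"},
    {"campaign": "ebook", "reason": "Bad data"},
]
print(category_shares(week))
print(top_reason_by_campaign(week))
```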

Thresholds That Trigger Action

Thresholds give the feedback loop teeth. Without them, rejection data accumulates but no one knows when to escalate from "track and watch" to "change something."

| Rejection Category | Action Threshold | Action Triggered |
|---|---|---|
| Not ICP: attribute mismatch | >20% of total rejections for 4+ weeks | Revisit ICP definition or campaign targeting |
| Low intent: no buying signal | >30% of total rejections | Review scoring weights for behavioral signals |
| No contact info / bad data | >10% of total rejections | Data enrichment audit or form field validation |
| Duplicate / already in pipeline | >5% of total rejections | CRM deduplication rule or routing logic review |
| Too early: nurture candidate | >25% of total rejections | Lead scoring decay rules or nurture promotion criteria |

These are starting thresholds. Calibrate them to your business after 60 days of data. A company with a 90-day sales cycle will have different baseline timing rejection rates than one with a 14-day cycle. The absolute numbers matter less than the trend and the relative composition.

The "action triggered" column is as important as the threshold. Without a defined action, breaching a threshold just produces a conversation about whether to do something. The pre-defined action removes that ambiguity: when "not ICP" stays above 20% of rejections for four consecutive weeks, the ICP definition review is triggered, regardless of whether the marketing team feels ready to have that conversation.
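The threshold table translates directly into a check that runs on weekly category shares. This is a sketch, not a definitive rule engine: the table only gives a duration for "not ICP" (four weeks), so the one-week window used for the other categories is an assumption, and the names `THRESHOLDS`, `breached`, and `triggered_actions` are illustrative.

```python
# reason -> (share threshold, consecutive weeks required, action triggered).
# Shares and actions come from the threshold table; a 1-week window for rows
# without a stated duration is an assumption.
THRESHOLDS = {
    "Not ICP: attribute mismatch": (0.20, 4, "Revisit ICP definition or campaign targeting"),
    "Low intent: no buying signal": (0.30, 1, "Review scoring weights for behavioral signals"),
    "No contact info / bad data": (0.10, 1, "Data enrichment audit or form field validation"),
    "Duplicate / already in pipeline": (0.05, 1, "CRM deduplication rule or routing logic review"),
    "Too early: nurture candidate": (0.25, 1, "Lead scoring decay rules or nurture promotion criteria"),
}


def breached(weekly_shares, threshold, weeks_required):
    """True if each of the most recent `weeks_required` weeks is above threshold."""
    if len(weekly_shares) < weeks_required:
        return False
    return all(share > threshold for share in weekly_shares[-weeks_required:])


def triggered_actions(history):
    """history maps reason -> list of weekly shares, oldest first."""
    actions = []
    for reason, (threshold, weeks, action) in THRESHOLDS.items():
        if breached(history.get(reason, []), threshold, weeks):
            actions.append((reason, action))
    return actions


history = {
    "Not ICP: attribute mismatch": [0.18, 0.22, 0.25, 0.21, 0.24],
    "Low intent: no buying signal": [0.28],
}
print(triggered_actions(history))
```

In this example "not ICP" has been above 20% for the last four weeks, so its action fires, while "low intent" at 28% stays below its 30% threshold.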

What Happens to Rejected Leads

Rejected leads don't disappear. They route to one of three paths based on their rejection reason.

Recycle path. Leads rejected for timing reasons (too early, low intent) go back into nurture with a different sequence. The sequence should be calibrated to the rejection reason: "too early" leads get educational content with no CTA pressure for 60 days; "low intent" leads get mid-funnel content focused on building a business case. They re-enter the MQL consideration pool when they hit the scoring threshold again, but this time with decay rules that account for the fact that they've already been through one cycle. The lead rejection and recycling guide covers the full re-entry criteria.

Disqualify path. Leads rejected for fit reasons (not ICP) exit the active pipeline. They stay in the database, since you may need them for market analysis or for future campaigns as your ICP evolves, but they're removed from nurture sequences and won't be promoted to MQL again unless the ICP definition changes. Understanding the difference between types of leads (warm, cold, and product-qualified) helps calibrate which disqualified leads might be worth re-engaging later.

Escalate path. Leads rejected with unexpected patterns (a well-known brand from your core ICP that keeps getting rejected, a segment you've historically won that's suddenly failing) get flagged for human review. Someone needs to look at these individually. Is the ICP changing? Is a competitor eating your best segment? Are there product changes that affect fit? These are strategic questions the feedback loop surfaces but can't answer automatically.

Closing the Loop: Reporting Back to Marketing

The rejection feedback loop is only complete when marketing receives a structured view of what the data shows.

Monthly rejection summary report. Each month, RevOps or demand gen pulls the rejection breakdown by category, compares it to the prior month, and flags any categories that breached threshold. The report goes to the demand gen lead, the content lead, and the SDR/BDR lead. It's not a multi-slide deck. A one-page summary with a trend table and three bullets covering the most significant pattern is enough.

Quarterly review. Every quarter, marketing and sales ops sit together to ask: does the rejection data match the current MQL definition? If "not ICP" has been the top rejection reason for three consecutive months, the MQL definition may be allowing leads through that sales has already told marketing are outside ICP. The quarterly review is the checkpoint for updating the MQL scoring model, not a place to re-litigate the ICP from scratch.

Annual MQL-SQL agreement update. The annual MQL definition review should include the full year of rejection history. What did we learn? Which categories trended up? Which improvements worked? The rejection log is the evidence base for renegotiating the MQL-SQL agreement. It replaces "I feel like" with "here's what the data showed."
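The trend table in the monthly summary is a comparison of category shares between two months. A minimal sketch, assuming each month has already been reduced to a dict of reason to share; `month_over_month` is a hypothetical helper and the figures are made up for illustration.

```python
def month_over_month(prev, curr):
    """Rows of (reason, prior share, current share, change), biggest movers first."""
    rows = []
    for reason in sorted(set(prev) | set(curr)):
        before = prev.get(reason, 0.0)
        after = curr.get(reason, 0.0)
        rows.append((reason, before, after, round(after - before, 4)))
    return sorted(rows, key=lambda row: abs(row[3]), reverse=True)


# Illustrative numbers only.
last_month = {"Not ICP": 0.30, "Low intent": 0.40}
this_month = {"Not ICP": 0.45, "Low intent": 0.35}
for reason, before, after, change in month_over_month(last_month, this_month):
    print(f"{reason}: {before:.0%} -> {after:.0%} ({change:+.0%})")
```

Sorting by the size of the change puts the most significant pattern at the top, which is what the three summary bullets should be written from.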

Organizations that correlate rejection data to lead source see 40% better ROI on demand gen spend because they stop investing in channels that produce structurally non-closeable leads, according to Forrester's B2B Marketing Measurement Study. The feedback loop doesn't just improve quality. It reallocates budget toward channels that actually produce revenue.

What Good Looks Like

Three metrics mark a mature feedback loop.

Rejection rate under 25% with 90%+ categorized reasons. A 25% rejection rate means 75% of what you send is worth a rep's time. It's not perfect, but it's a functional pipeline. The 90% categorization rate means the loop has data quality: at most 10% of rejections are left unexplained.

Top rejection category shifts quarter-over-quarter. If "not ICP" is your top rejection reason in Q1 and you addressed it, it should drop by Q2 and something else should surface. If the same category stays at the top for three consecutive quarters, your fix isn't working, or you're not making the fix.

Marketing can predict next quarter's acceptance rate from current rejection trends. This is the maturity indicator. When marketing can look at the current rejection distribution and project acceptance rate trends, the feedback loop has become a forecasting tool, not just a diagnostic one. You're no longer reacting to bad lead quality. You're anticipating where it's heading and adjusting upstream before the quarter starts. Forecasting together with marketing influence takes that predictive view one layer further into pipeline.
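The first of these metrics can be computed from two inputs: how many MQLs were sent and the list of rejection reasons. The sketch below treats a rejection as "categorized" when it has a reason code other than "Other"; that reading, along with the names `loop_health` and `meets_first_benchmark`, is an assumption rather than a definition from the article.

```python
def loop_health(mqls_sent, reasons):
    """Rejection rate and categorization rate for one period."""
    rejected = len(reasons)
    # Assumption: blank codes and "Other" both count as uncategorized.
    categorized = sum(1 for r in reasons if r and r != "Other")
    return {
        "rejection_rate": rejected / mqls_sent if mqls_sent else 0.0,
        "categorized_rate": categorized / rejected if rejected else 0.0,
    }


def meets_first_benchmark(health):
    """Under 25% rejected, with at least 90% of rejections categorized."""
    return health["rejection_rate"] < 0.25 and health["categorized_rate"] >= 0.90


# Illustrative quarter: 200 MQLs sent, 40 rejected, 2 of them coded "Other".
reasons = ["Not ICP"] * 30 + ["Low intent"] * 8 + ["Other"] * 2
health = loop_health(200, reasons)
print(health, meets_first_benchmark(health))
```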

Frequently Asked Questions

What is an MQL rejection feedback loop?

An MQL rejection feedback loop is a system that captures why sales reps reject marketing-qualified leads, categorizes those reasons into a standardized taxonomy, routes each rejected lead to the appropriate next action based on its rejection reason, and surfaces aggregate rejection patterns to marketing for corrective action. It turns individual "no" decisions by sales reps into systematic intelligence that improves the quality of future leads.

How do you operationalize the MQL rejection feedback loop?

Start with a required rejection reason dropdown in your CRM: five to seven options covering fit, timing, and data problems. Make it a field validation: the lead status cannot move to "Rejected" without a reason code. Then configure automated routing: wrong-timing rejections re-enter nurture, wrong-fit rejections are disqualified, bad-data rejections go to an enrichment queue. Finally, set a recurring weekly data pull reviewed at the lead quality call. The entire system can be built in two to three weeks without custom development.

What does sales need to provide for this system to work?

Sales needs to provide one piece of information per rejected lead: a rejection reason code from a standardized dropdown. That's 10 seconds per rejection. The requirement must be enforced as a field validation rather than a reminder. Optional fields get filled in 30-40% of the time; required fields get filled in 95%+ of the time. Reps don't need to write paragraphs, investigate root causes, or attend additional meetings. The system does the aggregation work; reps supply the raw data point.

What's the right rejection taxonomy to use?

The taxonomy should have five to seven categories that map to the three root causes: wrong fit (not ICP, attribute mismatch), wrong timing (low intent, too early, too late), and wrong data (no contact info, duplicate). The "Other" category should exist but be capped. If "Other" exceeds 10% of rejections, the taxonomy is missing a category. Review and update the taxonomy quarterly. Starting taxonomy: Not ICP, Low Intent, No Contact Info, Duplicate, Too Early, Too Late/Already Evaluated, Other.

How do you prevent sales from gaming the rejection taxonomy?

Two mechanisms help. First, make the reason codes specific enough to be meaningful but short enough to be self-explanatory. Vague codes invite lazy defaults. Second, review the "Other" category distribution quarterly: if 25% of rejections land in "Other," reps are avoiding the taxonomy, which usually means the options don't reflect what they're actually seeing. Adding a category based on real rejection patterns fixes gaming faster than any enforcement mechanism.

When should the MQL definition be reopened after reviewing rejection data?

When a single rejection category has exceeded its action threshold for four or more consecutive weeks and the associated fix has been implemented. If "not ICP" stays above 20% after two rounds of campaign targeting adjustments, the problem isn't targeting. It's the ICP definition itself. Persistent elevated rejection rates despite repeated fixes are the signal that the definition needs to be renegotiated, not tweaked. The quarterly review is the right forum for that conversation.
