Feb 12, 2026
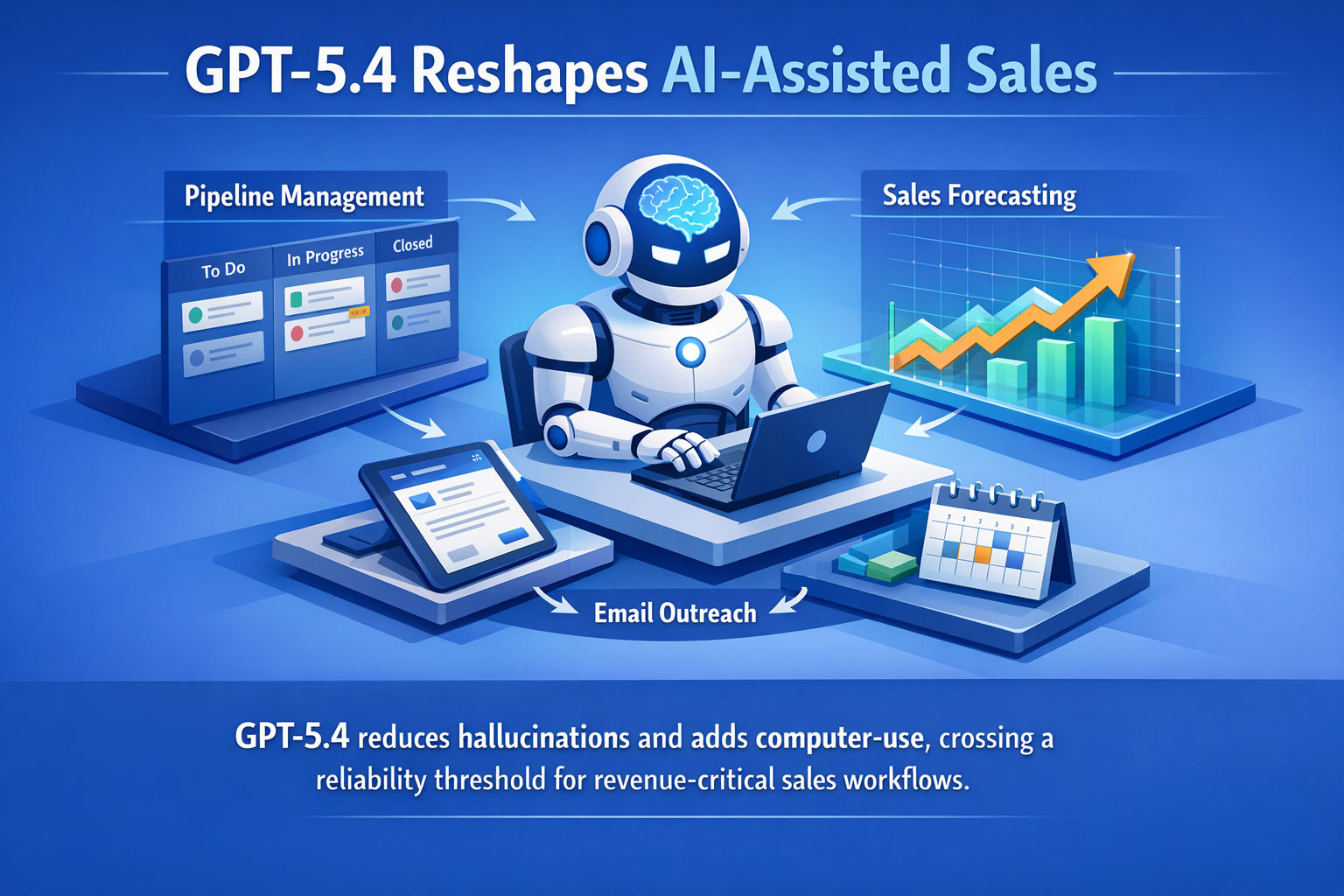

More Accurate, More Autonomous: How GPT-5.4 Changes What's Possible in AI-Assisted Sales

The conversation in most sales organizations about AI has been stuck on a single question: can we trust it? Pipeline summaries that invent numbers, outreach drafts that get the prospect's company name wrong, forecast roll-ups that sound confident but aren't. These aren't theoretical concerns. They're the experiences that made reps skeptical and CROs cautious about expanding AI's role in revenue-critical workflows.

GPT-5.4, released in early March 2026 and covered in detail by TechCrunch, shifts that conversation. The model makes measurably fewer false claims: individual statements are 33% less likely to be incorrect than with the previous generation, and full responses are 18% less likely to contain errors. It also ships with the ability to autonomously navigate software applications, browsers, and desktop environments. Together, these two changes (better accuracy and the ability to actually operate tools) bring certain sales workflows across a practical reliability threshold.

The question for CROs isn't whether to use AI in the sales process anymore. It's about which workflows are ready to hand off, in what order, and based on what criteria.

Where Accuracy Gains Matter Most in Sales

Not all sales tasks carry the same cost of error. A slightly imperfect email subject line is a missed opportunity. An inaccurate pipeline summary shown to the board is a credibility problem. Understanding where the 33% drop in false claims actually matters helps prioritize where to use the new model.

Pipeline summaries and forecast commentary are the clearest beneficiaries. These outputs directly influence executive decisions and investor conversations. Errors here erode trust in the whole AI stack, not just the individual output. With a meaningfully lower false-claim rate, AI-generated pipeline summaries become more viable as a first draft that managers review and approve, rather than a rough output that requires line-by-line verification. This matters most if lead status data in your CRM feeds those summaries: the quality of the underlying CRM data still determines the ceiling.

Automated prospect research is another high-impact area. When a rep asks AI to summarize a target company's recent news, product changes, or leadership shifts, errors in that summary can show up in the conversation with the prospect. Reduced hallucination rates make this output more reliable, but it still needs human review before it reaches a live conversation.

Outreach personalization drafts benefit at the margin. The accuracy improvement here helps with factual claims about the prospect or their company included in the message. But the quality of the resulting outreach still depends heavily on the prompt quality and the rep's judgment about what's relevant. AI can draft faster and more accurately; the strategic layer still belongs to the human.

Where accuracy improvements don't change much: completely unstructured creative work where there's no ground truth to be accurate about, or tasks where the bottleneck is judgment rather than information retrieval. A model whose claims are 33% less likely to be wrong is still not a replacement for a rep who understands the political dynamics inside the prospect's organization.

The Computer-Use Angle for Sales Ops

The autonomous software navigation capability in GPT-5.4 (the ability to operate applications without a human at the keyboard) opens a different kind of opportunity, one that's less about what reps experience directly and more about what operations teams can build.

Think about the tasks that currently require a human to sit at a screen and do mechanical work: extracting contact information from a prospect's website, updating CRM records after a call, navigating between a LinkedIn profile and an opportunity record to check whether the right stakeholders are logged, pulling deal data from a system that doesn't have a clean API. These are high-frequency, low-judgment tasks that eat rep time precisely because they require manual software interaction. Many of the same workflows that lead data enrichment teams currently do manually are exactly where computer-use automation is worth testing first.

With computer-use capability, agents can handle this navigation autonomously. A workflow that previously required a rep to open five browser tabs, copy information between them, and update a CRM record can in principle be automated end-to-end. The accuracy improvements matter here too: an agent that navigates your CRM and extracts prospect data needs to get the data right.

The realistic near-term use case isn't full autonomy over the entire sales workflow. It's targeted automation of specific, well-defined mechanical tasks: the ones where the steps are predictable, the success criteria are clear, and the cost of an error is recoverable. Those are the workflows worth testing first.
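One way to keep errors recoverable in a task like contact extraction is to check whatever the agent pulled before it touches the CRM. The sketch below is illustrative only: the `ExtractedContact` fields, the four checks, and the two route names are assumptions made for this example, not part of GPT-5.4 or any CRM's API.

```python
import re
from dataclasses import dataclass, field

# Deliberately loose pattern: it only catches obviously malformed addresses.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class ExtractedContact:
    """Hypothetical shape of what an agent returns after reading a web page."""
    full_name: str
    email: str
    company: str
    source_url: str


@dataclass
class ValidationResult:
    contact: ExtractedContact
    problems: list = field(default_factory=list)

    @property
    def ok(self) -> bool:
        return not self.problems


def validate(contact: ExtractedContact, expected_company: str) -> ValidationResult:
    """Run cheap, objective checks before any CRM write is attempted."""
    problems = []
    if not contact.full_name.strip():
        problems.append("missing name")
    if not EMAIL_PATTERN.match(contact.email):
        problems.append("email does not look valid")
    if contact.company.strip().lower() != expected_company.strip().lower():
        problems.append("company does not match the opportunity record")
    if not contact.source_url.startswith("https://"):
        problems.append("no verifiable source URL")
    return ValidationResult(contact, problems)


def route(result: ValidationResult) -> str:
    """Clean records go to the update queue; anything else goes to a person."""
    return "queue_crm_update" if result.ok else "send_to_human_review"


if __name__ == "__main__":
    contact = ExtractedContact(
        "Ana Ruiz", "ana@example.com", "Example Corp", "https://example.com/team"
    )
    result = validate(contact, expected_company="example corp")
    print(route(result), result.problems)
```

Checks like these do not prove the extracted data is right. They only filter out the failures that are cheap to detect, which is why the fallback route is a human review queue rather than a retry.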

A Prioritization Framework for CROs

When deciding which AI workflows to roll out or expand with GPT-5.4, five questions help frame the decision.

What's the cost of a hallucination here? If an error in an AI-generated output reaches a prospect or an executive, the cost is high. Start with workflows where AI output stays internal and gets reviewed before anyone acts on it. As you build trust in accuracy, expand to workflows where the output is more visible.

Is the task high-frequency and low-judgment? These are the best candidates for automation. High-frequency means the time savings compound. Low-judgment means errors are easier to catch because the right answer is more objective.

Do we have clean structured data to work from? AI accuracy improves when it's working from clear, structured inputs. If the underlying CRM data or prospect information is messy, the accuracy improvement in the model matters less. Prioritize workflows where your data foundation is solid. The Microsoft Copilot Wave 1 readiness framework covers the same data quality prerequisite from a slightly different angle — agents and AI-assisted workflows share the same dependency on clean CRM records.

Can we measure the output quality? Automation without measurement is hard to improve. Build in a way to evaluate whether AI outputs are accurate and useful before you scale them. Even a simple spot-check process (sampling 10% of AI-generated outputs weekly) gives you the feedback loop you need.

Is there a rollback path? For any workflow where AI starts taking actions autonomously (updating records, sending follow-ups, changing lead status), make sure there's a way to reverse those actions if something goes wrong. Start with read-only and draft workflows before moving to write workflows.
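The five questions can be turned into a simple triage rule. The sketch below is one possible encoding, not a prescribed method: the `Workflow` fields, the `Stage` names, and the order in which the checks are applied are all assumptions made for illustration, and a real rollout would weigh these answers with more nuance than five booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    HOLD = "hold"            # fix data or measurement before automating
    READ_ONLY = "read-only"  # AI reads and summarizes for internal use
    DRAFT = "draft"          # AI drafts, a human approves before anything is saved or sent
    WRITE = "write"          # AI may act, because its actions can be reversed


@dataclass
class Workflow:
    name: str
    error_stays_internal: bool     # Q1: does a hallucination stay internal and reviewed?
    high_freq_low_judgment: bool   # Q2: frequent task with an objective right answer?
    clean_structured_data: bool    # Q3: is the underlying data solid?
    quality_measurable: bool       # Q4: can output quality be spot-checked?
    rollback_path: bool            # Q5: can autonomous actions be reversed?


def recommend_stage(w: Workflow) -> Stage:
    """Assumed rule order: data and measurement first, then fit, then risk."""
    if not (w.clean_structured_data and w.quality_measurable):
        return Stage.HOLD
    if not w.high_freq_low_judgment:
        return Stage.READ_ONLY
    if not (w.error_stays_internal and w.rollback_path):
        return Stage.DRAFT
    return Stage.WRITE


if __name__ == "__main__":
    candidates = [
        Workflow("CRM field cleanup", True, True, True, True, True),
        Workflow("Follow-up emails", False, True, True, True, False),
        Workflow("Board forecast commentary", False, False, True, True, False),
        Workflow("Legacy deal export", True, True, False, False, True),
    ]
    for w in candidates:
        print(f"{w.name}: {recommend_stage(w).value}")
```

The ordering reflects the advice above: no workflow reaches the write stage unless errors stay internal and a rollback path exists, and nothing is automated at all until the data and the measurement loop are in place.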

Limitations Worth Acknowledging

The 33% per-claim improvement is real and meaningful. But a 33% reduction from an already non-trivial error rate still leaves errors. GPT-5.4 is not suitable for workflows where every output needs to be factually correct without human review.
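A short calculation makes the point concrete. Only the 33% reduction comes from the reported figures. The 5% baseline per-claim error rate and the 40 claims per summary are assumed values chosen for illustration, so substitute your own measured baseline.

```python
# Illustrative arithmetic only: the baseline rate and claim count below are
# assumptions, not measurements of any model.
RELATIVE_REDUCTION = 0.33  # reported per-claim reduction in false statements


def residual_error_rate(baseline_error_rate: float) -> float:
    """Per-claim error rate that remains after the relative reduction."""
    return baseline_error_rate * (1 - RELATIVE_REDUCTION)


def expected_wrong_claims(error_rate: float, claims: int) -> float:
    """Expected number of incorrect claims in an output with `claims` statements."""
    return error_rate * claims


baseline_error_rate = 0.05  # assumption: 1 in 20 claims was wrong before
claims_per_summary = 40     # assumption: statements in one pipeline summary

new_rate = residual_error_rate(baseline_error_rate)
before = expected_wrong_claims(baseline_error_rate, claims_per_summary)
after = expected_wrong_claims(new_rate, claims_per_summary)

print(f"per-claim error rate: {baseline_error_rate:.2%} -> {new_rate:.2%}")
print(f"expected wrong claims per summary: {before:.2f} -> {after:.2f}")
```

Under these assumptions, a 40-claim summary goes from about two wrong claims to a little over one. That is a real improvement, and it is still more than zero.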

The computer-use capability is genuinely powerful, but it's also genuinely new. Enterprise deployments at scale will surface edge cases, failure modes, and security considerations that aren't fully visible in early use. The right posture for most sales organizations in the near term is careful testing with well-scoped workflows, not full deployment.

AI in sales is still a tool that augments rep judgment, not one that replaces it. The workflows that benefit most are the ones where the bottleneck is time and mechanics, not insight and relationship. Keep that lens when evaluating where GPT-5.4 fits.

What to Do This Week

Two evaluation actions are worth taking soon.

First, identify your three highest-frequency, lowest-judgment sales workflow steps. These are the tasks that reps do repeatedly, could describe clearly in a procedure, and would happily automate if the output were reliable. Document them. These are your near-term test candidates.

Second, if you're using any AI-generated outputs in current sales workflows (pipeline summaries, outreach drafts, prospect research), spot-check the accuracy of a sample. Get a baseline on current error rates. That baseline makes it concrete whether GPT-5.4's accuracy improvements would actually change your confidence in those outputs.
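A baseline spot-check can be as small as the sketch below: draw a random sample, have a reviewer mark each output as containing an error or not, then report the observed rate with a rough uncertainty range. The `ReviewedOutput` record, the 10% default, and the choice of a Wilson score interval are assumptions for this example. Any sound sampling and estimation method would serve.

```python
import math
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewedOutput:
    output_id: str
    has_error: bool  # filled in by the human reviewer


def draw_sample(output_ids: list, fraction: float = 0.10,
                seed: Optional[int] = None) -> list:
    """Pick a random subset of outputs for manual review (at least one)."""
    if not output_ids:
        return []
    k = max(1, round(len(output_ids) * fraction))
    return random.Random(seed).sample(output_ids, k)


def error_rate_with_interval(reviews: list, z: float = 1.96) -> tuple:
    """Observed error rate plus a Wilson score interval (z=1.96 is about 95%)."""
    n = len(reviews)
    if n == 0:
        raise ValueError("no reviewed outputs")
    p = sum(r.has_error for r in reviews) / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)


if __name__ == "__main__":
    ids = [f"summary-{i}" for i in range(200)]   # hypothetical output IDs
    sample = draw_sample(ids, seed=7)
    # Stand-in for the reviewer's verdicts: pretend the first 3 had errors.
    reviews = [ReviewedOutput(oid, i < 3) for i, oid in enumerate(sample)]
    rate, low, high = error_rate_with_interval(reviews)
    print(f"reviewed {len(reviews)}: error rate {rate:.1%} "
          f"(95% interval {low:.1%} to {high:.1%})")
```

With a small sample the interval will be wide. That is useful in itself: it shows how many outputs need review before a before-and-after comparison between models means anything.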

The shift toward more autonomous, more accurate AI in sales is real. The organizations that will benefit most are the ones that approach it as a disciplined operational rollout, starting with the right workflows, measuring carefully, and expanding from there.

Learn More

- Microsoft Copilot Wave 1 Sales Agent Readiness — What CROs need to prepare before AI sales agents go live

- AI Copilots vs. AI Agents — Understanding the distinction before you decide which to deploy

- Sales Team AI Readiness Audit — Framework for assessing which sales workflows are ready for automation

- Pipeline Stages That Match Your Selling — Structuring your pipeline to get the most from AI-generated forecasts