Jan 27, 2026

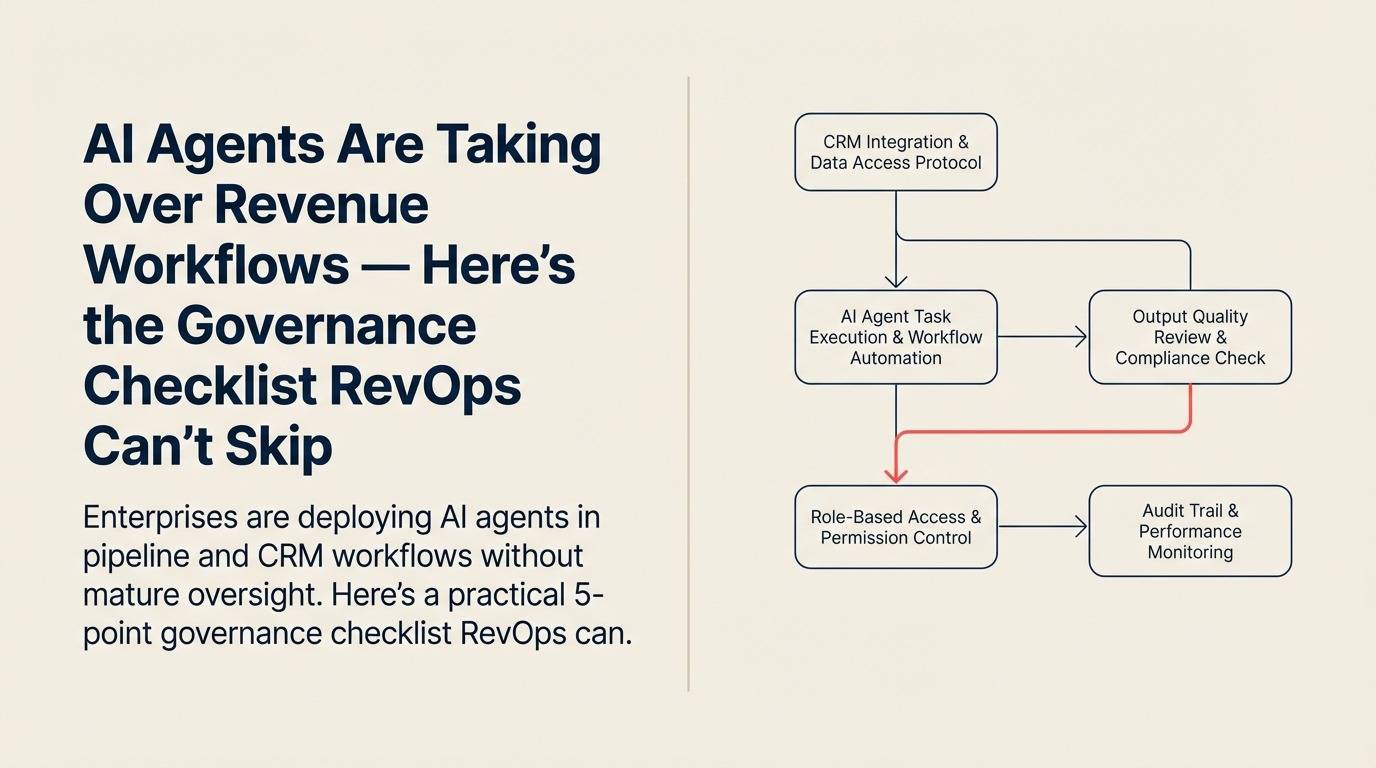

AI Agents Are Taking Over Revenue Workflows — Here's the Governance Checklist RevOps Can't Skip

The data on enterprise AI adoption isn't subtle anymore. Research aggregated by Joget from publicly available Gartner and IDC studies puts 40% of enterprise applications on track to include task-specific AI agents by the end of 2026. The Deloitte State of AI in the Enterprise report finds that 64% of organizations already use AI in active operations, and that 86% plan to increase spend this year.

For RevOps, that trend has a very specific implication. AI agents aren't just being deployed in IT or product functions. They're landing directly in pipeline management, lead scoring, forecasting, and CRM data enrichment: the exact workflows that RevOps owns. And the governance problem is urgent: only one in five companies has a mature oversight framework for those autonomous agents, according to the same research.

That's not an abstract compliance risk. It means AI systems are touching your revenue data without formal audit trails.

Three Governance Failure Modes in Revenue Operations

Before building a checklist, it helps to name what you're actually preventing. In the context of AI agents operating in revenue workflows, there are three distinct failure modes that can compound quietly before anyone notices.

Failure Mode 1: Data Integrity Drift

AI agents that update CRM records (enriching contact data, modifying deal stages, reassigning ownership) can introduce errors that propagate through your entire pipeline model. This is especially acute when CRM data model design wasn't built with agent-driven writes in mind. Unlike a human error that leaves a clear log, agent-driven updates often blend into the activity stream. If your forecasting model is trained on CRM data, an agent that consistently misclassifies deal signals will skew your forecast without an obvious point of failure to investigate.

Failure Mode 2: Unconstrained Action Scope

Agents that were deployed for one function tend to accumulate adjacent tasks over time, not through deliberate expansion, but through prompt drift and workflow integration. A lead qualification agent that starts generating outbound emails. A pipeline review agent that begins setting follow-up tasks in reps' queues. When agent action scope isn't formally defined and enforced, you end up with autonomous systems taking consequential actions in workflows where no one explicitly authorized them.

Failure Mode 3: Audit Trail Gaps

Regulatory frameworks, including the EU AI Act (whose obligations are being phased in, with some already applicable), increasingly require documentation of how AI-assisted decisions were made. If a lead was disqualified by an agent, or a contract term was populated by an AI workflow, you need to be able to reconstruct that decision path. The governance gap in AI at work also runs deeper than compliance: it affects how confidently RevOps teams can rely on agent-generated data for forecasting. Companies without agent-level audit logs are building a compliance debt that will surface during their first serious review.

The 5-Point RevOps AI Governance Checklist

This checklist is designed to be implementable in 30 days without a major infrastructure project. It focuses on what RevOps can do with existing tooling and authority.

1. Map every AI agent touching revenue data.

Pull a current list of all AI-powered workflows that read from or write to your CRM, forecasting system, lead database, or sales engagement platform. Include both officially sanctioned tools and anything individual reps or managers may have added. Shadow AI adoption in sales teams is significant: the actual count is usually higher than IT's records suggest. This map is the foundation for everything else.

2. Define a consequence classification for each agent.

Label every agent as low, medium, or high consequence based on the autonomy of its actions. An agent that reads pipeline data and surfaces a weekly report: low. An agent that only drafts record updates or emails for a rep to approve before anything changes: arguably medium. An agent that modifies deal stage, reassigns leads, or sends communications on behalf of a rep without review: high. Consequence classification determines how much governance overhead is justified.
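As a rough illustration, the classification can be written down as a small rule over the agent inventory. This is a minimal sketch, not a standard: the field names (`writes_revenue_data`, `sends_external_comms`, `human_review_before_action`) and the rule that reviewed actions count as medium are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Consequence(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AgentRecord:
    """One row of the agent inventory (all field names are hypothetical)."""
    name: str
    writes_revenue_data: bool         # modifies CRM, forecast, or lead records
    sends_external_comms: bool        # emails or messages prospects and customers
    human_review_before_action: bool  # a person approves each action first


def classify(agent: AgentRecord) -> Consequence:
    """Assumed rule: read-only is low, reviewed actions are medium,
    unreviewed actions are high."""
    takes_action = agent.writes_revenue_data or agent.sends_external_comms
    if not takes_action:
        return Consequence.LOW
    if agent.human_review_before_action:
        return Consequence.MEDIUM
    return Consequence.HIGH


inventory = [
    AgentRecord("weekly-pipeline-report", False, False, False),
    AgentRecord("email-drafter", False, True, True),
    AgentRecord("deal-stage-updater", True, False, False),
]

# Map each agent name to its consequence label.
labels = {agent.name: classify(agent).value for agent in inventory}
print(labels)
```

Keeping the rule in one function means the quarterly review can change the thresholds in a single place and re-label the whole inventory.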

3. Document the authority envelope for each high-consequence agent.

For every agent classified as high consequence, write down in plain language what it's allowed to do without human approval, what requires review before action, and what it's explicitly prohibited from doing. This doesn't need to be a lengthy document. A single page per agent is sufficient. But it needs to exist, and the relevant team leads need to have signed off on it.
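The one-page document can also be mirrored in a machine-checkable form. The sketch below assumes a hypothetical `AuthorityEnvelope` structure and illustrative action names; treating any unlisted action as denied is a design choice for this example, intended to guard against quiet scope expansion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthorityEnvelope:
    """Plain-language envelope for one agent (structure is hypothetical)."""
    agent: str
    allowed: frozenset          # actions permitted without human approval
    needs_review: frozenset     # actions that require review before execution
    prohibited: frozenset       # actions the agent must never take
    approved_by: tuple = ()     # team leads who signed off


def decide(envelope: AuthorityEnvelope, action: str) -> str:
    """Return 'allow', 'review', or 'deny' for a requested action."""
    if action in envelope.prohibited:
        return "deny"
    if action in envelope.allowed:
        return "allow"
    if action in envelope.needs_review:
        return "review"
    # Assumption: anything not written down is out of scope.
    return "deny"


envelope = AuthorityEnvelope(
    agent="lead-qualifier",
    allowed=frozenset({"score_lead", "add_note"}),
    needs_review=frozenset({"disqualify_lead"}),
    prohibited=frozenset({"send_email", "change_deal_stage"}),
    approved_by=("RevOps lead", "Sales lead"),
)

for action in ("score_lead", "disqualify_lead", "send_email", "create_task"):
    print(action, "->", decide(envelope, action))
```

Here `create_task` is not listed anywhere, so it is denied rather than silently permitted, which is the behavior you would want from a lead qualification agent that starts drifting into task creation.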

4. Establish a change log for agent-driven CRM updates.

Configure your CRM to tag records modified by AI agents with a distinct identifier. Most major CRMs support custom field tagging or activity attribution. This creates the audit trail you'll need for compliance reporting and makes it dramatically easier to investigate data integrity issues when they emerge.
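To make the idea concrete, here is a minimal sketch of agent attribution using plain dictionaries instead of any particular CRM's API. The `last_modified_by_agent` field, the `agent:` identifier prefix, and the log entry shape are assumptions for illustration; in practice these would map to whatever custom fields or activity attribution your CRM provides.

```python
from datetime import datetime, timezone


def apply_agent_update(record: dict, changes: dict, agent_id: str, log: list) -> dict:
    """Return an updated copy of `record`, tagged with the agent identifier,
    and append one change-log entry per field that actually changed."""
    timestamp = datetime.now(timezone.utc).isoformat()
    updated = dict(record)
    changed = False
    for field_name, new_value in changes.items():
        old_value = record.get(field_name)
        if old_value == new_value:
            continue  # skip no-op writes so the log only shows real changes
        updated[field_name] = new_value
        changed = True
        log.append({
            "record_id": record.get("id"),
            "field": field_name,
            "old": old_value,
            "new": new_value,
            "agent_id": agent_id,
            "timestamp": timestamp,
        })
    if changed:
        # Hypothetical custom field that marks the record as agent-modified.
        updated["last_modified_by_agent"] = agent_id
    return updated


change_log = []
deal = {"id": "D-1001", "stage": "Discovery", "owner": "rep_a"}
deal = apply_agent_update(
    deal,
    {"stage": "Proposal", "owner": "rep_a"},
    agent_id="agent:pipeline-review",
    log=change_log,
)
print(deal)
print(len(change_log), "change(s) logged")
```

Recording the old and new values alongside the agent identifier is what lets you reconstruct a decision path later, rather than only knowing that an agent touched the record.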

5. Schedule a quarterly agent review.

Set a standing quarterly calendar item with RevOps, Sales leadership, and IT to review active agents, their classification, any incidents, and any scope changes that have occurred. This meeting doesn't need to be long. Its value is in creating a forcing function for governance to stay current as the agent landscape changes. AI deployments evolve faster than annual review cycles can track.

What Changes With Mature Governance

The goal of this framework isn't to slow AI adoption in your revenue operations. It's to make the adoption durable.

Teams that are running AI agents with proper governance in place can do things that ungoverned teams can't. They can expand agent authority with confidence, because they know the boundaries. They can investigate anomalies systematically, because they have the audit trail. And they can respond to compliance questions quickly, because the documentation already exists. A RevOps maturity model that doesn't include AI governance as a dimension is already out of date.

The productivity gains are real. Teams deploying agents in support and operations functions are reporting savings of 40 or more hours per month in case studies cited by the research. RevOps organizations that combine that efficiency with a governance layer will be able to sustain and scale it. Those without the governance layer are building pipeline risk alongside pipeline productivity.

What to Document Before Your Next Audit

If you're heading into a compliance review (or anticipating one), here's the minimum documentation RevOps should be able to produce on demand:

- A complete inventory of AI agents with access to revenue data

- The consequence classification of each

- The documented authority envelope for any high-consequence agent

- A sample of CRM activity logs showing agent-attributed modifications

- Records of any incidents where agent behavior deviated from expected scope, and the resolution
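A quick self-check against the list above can be scripted before a reviewer asks. This is a sketch under stated assumptions: the inventory is a list of dictionaries with hypothetical keys (`name`, `consequence`, `writes_crm`, `envelope_doc`), and log entries carry an `agent_id`. Incident records are not checked, because having no incidents is not in itself a gap.

```python
VALID_LEVELS = {"low", "medium", "high"}


def audit_gaps(agents: list, agent_log_entries: list) -> list:
    """Return a list of human-readable documentation gaps."""
    gaps = []
    if not agents:
        gaps.append("no agent inventory")
    logged_agents = {entry.get("agent_id") for entry in agent_log_entries}
    for agent in agents:
        name = agent["name"]
        level = agent.get("consequence")
        if level not in VALID_LEVELS:
            gaps.append(f"{name}: missing consequence classification")
        if level == "high" and not agent.get("envelope_doc"):
            gaps.append(f"{name}: missing authority envelope")
        if agent.get("writes_crm") and name not in logged_agents:
            gaps.append(f"{name}: no agent-attributed log sample")
    return gaps


agents = [
    {"name": "weekly-report", "consequence": "low", "writes_crm": False},
    {"name": "deal-stage-updater", "consequence": "high", "writes_crm": True, "envelope_doc": ""},
    {"name": "enricher", "writes_crm": True},
]
log_entries = [{"agent_id": "enricher", "field": "industry"}]

gaps = audit_gaps(agents, log_entries)
for gap in gaps:
    print(gap)
```

An empty result does not prove compliance. It only shows that each item on the minimum documentation list has something behind it.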

The difference between organizations that pass AI-related compliance reviews and those that don't usually isn't the technology they're running. It's whether they can show the reviewer that someone was watching. For the board-level framing of this same issue, see how CEOs should be thinking about AI governance oversight.

Learn More

- EU AI Act RevOps Compliance Checklist — Specific documentation requirements for AI lead scoring and forecasting tools

- CRM Data Model Design — Building the data structure that supports agent audit trails

- RevOps Maturity Model — How AI governance fits into a mature revenue operations function

- AI Governance Policy for Departments — The practical framework for defining what agents can and can't do

Statistics in this article are drawn from research aggregated by Joget from Gartner and IDC public summaries and the Deloitte State of AI in the Enterprise report.