Designing Pipeline Stages That Match How Customers Actually Buy

A VP of Sales at a mid-market SaaS company once pulled her pipeline report before a board meeting and found 22 deals in "Proposal Sent." She walked into the room confident. The board asked about budget confirmation on each deal. She called her reps. Turns out 7 of those deals had never had a real budget conversation. The proposals were out, but the buyers weren't anywhere near a decision. Her pipeline was full of activity, not progress.

"Demo Scheduled" is not a pipeline stage. It's a calendar entry. "Proposal Sent" is not a pipeline stage. It's a task completion. Yet most CRMs are loaded with activity-based stages that describe what the sales rep did, not where the buyer actually is. The result is a pipeline that looks healthy and forecasts that are off by 30-40%.

The fix requires rebuilding stages from the outside in, using observable buyer milestones instead of internal sales activities. Research from Gartner shows that B2B buyers now spend only 17% of their purchase journey actually talking to potential suppliers. The rest happens internally without you. A McKinsey survey of B2B decision-makers found that buyers increasingly prefer self-service research over sales rep interaction, making it even more critical that your pipeline stages reflect buyer-defined milestones rather than seller-initiated activities. This guide gives you the steps to do that.

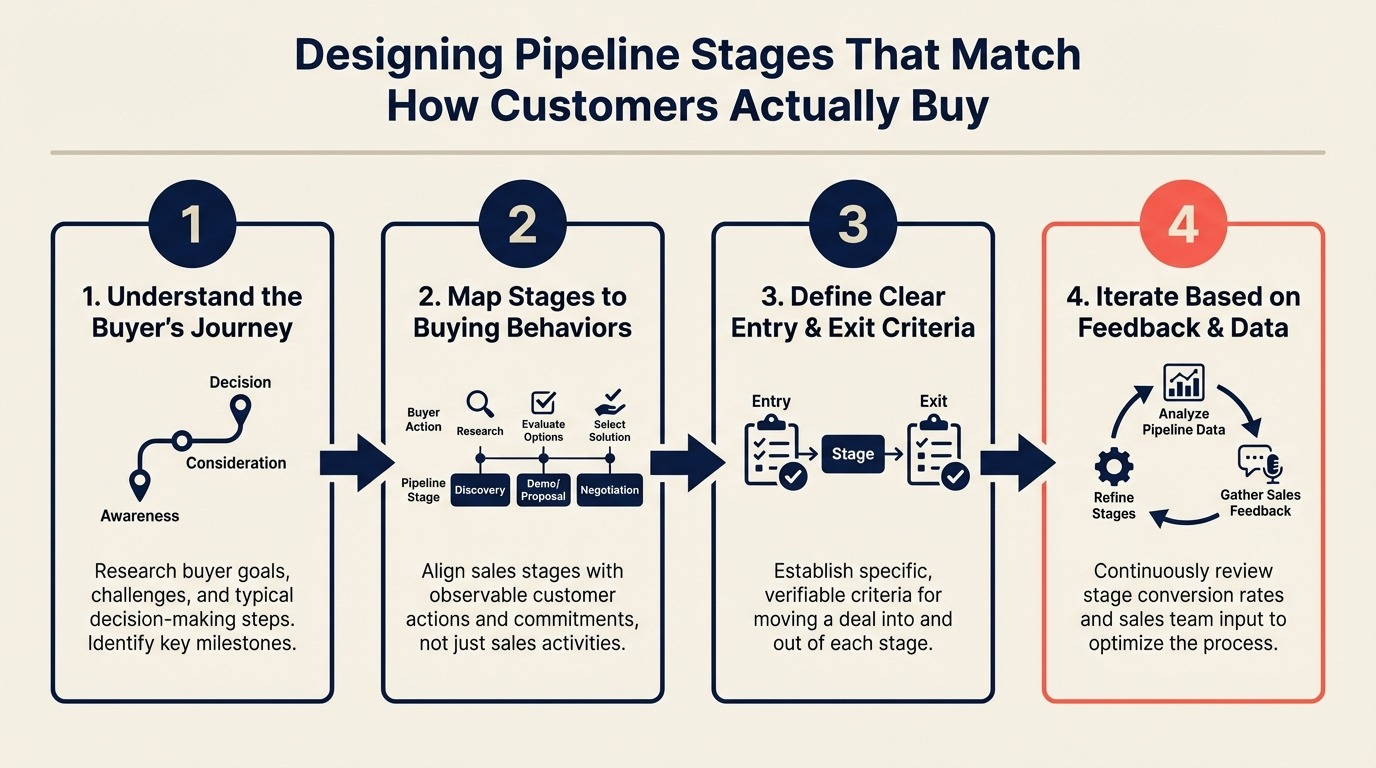

Step 1: Map Your Buyer's Decision Journey, Not Your Sales Process

Start by ignoring your current pipeline entirely. Pull out a whiteboard or a spreadsheet and map what your buyer actually goes through before they sign a contract.

Think about the last 10 deals you closed. What did the buyer actually do at each phase?

A useful worksheet has three columns:

| Buyer Action | Observable Signal | Potential Stage Gate |
|---|---|---|
| Acknowledges a problem worth solving | Booked a discovery call, answered discovery questions with specific pain | Problem Confirmed |
| Decides to evaluate solutions | Agreed to product demo, looped in a second stakeholder | Actively Evaluating |
| Validates with technical team | IT or security team joined a call | Technical Evaluation |
| Builds internal business case | Asked for pricing, ROI template, or a legal review of contract | Business Case Active |
| Decision-making process begins | Procurement involved, legal markups received, verbal yes from economic buyer | Decision In Progress |
| Contract under review | MSA in flight, legal redlines being exchanged | Contract Review |

The stages that emerge from this exercise look different from "Discovery," "Demo," "Proposal," "Negotiation." They're grounded in what buyers actually do, and you can verify each one.

Step 2: Write Entry Criteria as Observable Facts

Here's the most important rule in pipeline design: every stage entry criterion must be a fact that two different reps would agree on, not an interpretation.

"Exec buy-in gained" is not observable. "CFO joined a 30-minute call on March 10th" is. "Strong interest" is not a criterion. "Buyer requested a custom demo for their specific use case" is.

When you're writing entry criteria, ask: could I look at the deal notes and CRM activity log and confirm this happened? If the answer requires judgment or inference, rewrite it.

Good entry criteria examples:

- "Contact confirmed budget exists and is available this fiscal year"

- "At least two stakeholders have participated in a live session"

- "Buyer has asked for a contract or pricing proposal (not just a ballpark)"

- "Economic buyer (person with signature authority) has been identified by name"

Weak entry criteria examples:

- "Prospect shows genuine interest"

- "We believe they have budget"

- "Good fit confirmed"

The gap between these isn't just semantic. Weak criteria mean different reps will put the same deal in different stages, which means your pipeline report is fiction.
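One way to keep criteria honest is to write them as yes/no checks against logged facts. The sketch below is illustrative only: the `DealFacts` fields and the `unmet_criteria` helper are hypothetical names, not fields from any particular CRM, and the four checks mirror the good examples listed above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DealFacts:
    """Facts a reviewer could confirm from deal notes or the activity log.

    Field names are hypothetical; map them to whatever your CRM records.
    """
    budget_confirmed_this_fiscal_year: bool = False
    stakeholders_in_live_sessions: int = 0
    formal_pricing_requested: bool = False
    economic_buyer_name: Optional[str] = None


# Each criterion is a yes/no check on a logged fact, never a rep's impression.
ENTRY_CRITERIA = {
    "Budget confirmed for this fiscal year":
        lambda d: d.budget_confirmed_this_fiscal_year,
    "At least two stakeholders joined a live session":
        lambda d: d.stakeholders_in_live_sessions >= 2,
    "Buyer asked for a contract or pricing proposal":
        lambda d: d.formal_pricing_requested,
    "Economic buyer identified by name":
        lambda d: bool(d.economic_buyer_name),
}


def unmet_criteria(deal: DealFacts) -> list[str]:
    """Return the criteria this deal does not yet satisfy."""
    return [name for name, check in ENTRY_CRITERIA.items() if not check(deal)]


deal = DealFacts(budget_confirmed_this_fiscal_year=True,
                 stakeholders_in_live_sessions=1)
for gap in unmet_criteria(deal):
    print("Missing:", gap)
```

If a criterion can't be written as a check like this, that's usually a sign it still depends on interpretation.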

Step 3: Set Exit Criteria Using the Two-Rep Test

Every stage also needs exit criteria: the minimum conditions that must be true before a deal advances.

The test: would two different reps, looking at the same deal, agree that it belongs in the next stage? If the answer depends on who's asking, your criteria aren't specific enough. Apply the same bar when you build qualification frameworks into your discovery calls: observable facts that any manager can verify, not interpretations that vary by rep.

A concrete example. If your stage is "Verbal Commitment" and the exit criterion is "buyer has expressed strong intent to purchase," you'll have reps advancing deals based on optimism rather than facts. Rewrite it as: "Economic buyer has said 'yes, we want to move forward' in a recorded call, email, or CRM note, and has agreed to a specific decision date."

Now anyone reviewing that deal can confirm whether it meets the bar.

This two-rep test catches the most common pipeline disease: stage inflation from reps who advance deals to show momentum rather than actual progress.
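You can run the two-rep test as a literal exercise: have two reps stage the same set of deals independently, then measure how often they agree. This is a minimal sketch under that assumption; the function names and the sample deals are made up for illustration.

```python
def two_rep_agreement(rep_a: dict[str, str], rep_b: dict[str, str]) -> float:
    """Share of deals that both reps placed in the same stage."""
    shared = rep_a.keys() & rep_b.keys()
    if not shared:
        raise ValueError("no deals were staged by both reps")
    matches = sum(1 for deal in shared if rep_a[deal] == rep_b[deal])
    return matches / len(shared)


def disputed_deals(rep_a: dict[str, str], rep_b: dict[str, str]) -> list[str]:
    """Deals where the two reps chose different stages."""
    shared = rep_a.keys() & rep_b.keys()
    return sorted(deal for deal in shared if rep_a[deal] != rep_b[deal])


# Hypothetical stage assignments made independently by two reps.
rep_a = {
    "Deal A": "Business Case Active",
    "Deal B": "Decision In Progress",
    "Deal C": "Technical Evaluation",
    "Deal D": "Problem Confirmed",
}
rep_b = {
    "Deal A": "Business Case Active",
    "Deal B": "Business Case Active",
    "Deal C": "Technical Evaluation",
    "Deal D": "Problem Confirmed",
}

print(f"Agreement: {two_rep_agreement(rep_a, rep_b):.0%}")
print("Review these:", disputed_deals(rep_a, rep_b))
```

The disputed deals are the useful output. Each one points at a criterion that still leaves room for interpretation.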

Step 4: Calibrate Stage Count to Average Deal Length

The number of stages you need depends on how long your sales cycle runs. If you're also in the middle of evaluating or implementing a CRM to house this pipeline, the stage count decision affects how you configure deal records and automation rules from day one.

For cycles under 30 days (typical in SMB or product-led sales), 4-5 stages are enough. More stages than that create administrative friction without adding forecast value. A deal moving from first contact to close in 3 weeks doesn't need 8 checkpoints.

For cycles of 30 to 90 days (mid-market), 5-7 stages usually work. You have enough time for distinct phases: initial qualification, technical evaluation, business case development, and procurement and legal.

For cycles over 90 days (enterprise), 7-10 stages can make sense, but only if each stage represents a meaningful change in buyer commitment. Don't add stages just because time has passed. A deal sitting in "Technical Evaluation" for 45 days isn't in multiple stages. It's stuck in one stage with a problem.

A useful check: if a deal can legitimately skip a stage, that stage probably doesn't belong in your pipeline. Every stage should be something every deal passes through.
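The ranges above can be written down as a simple lookup, with a second helper that shows how often a rep would be changing stages. Treat this as a sketch of the rule of thumb in this step, not a formula; the function names are illustrative, and the exact 30-day and 90-day boundaries are assigned to the mid-market band by assumption.

```python
def suggested_stage_range(avg_cycle_days: float) -> tuple[int, int]:
    """Return the (min, max) stage count suggested for a given cycle length."""
    if avg_cycle_days <= 0:
        raise ValueError("average cycle length must be positive")
    if avg_cycle_days < 30:       # SMB or product-led
        return (4, 5)
    if avg_cycle_days <= 90:      # mid-market
        return (5, 7)
    return (7, 10)                # enterprise


def days_per_stage(avg_cycle_days: float, stage_count: int) -> float:
    """Average time a deal spends in each stage."""
    return avg_cycle_days / stage_count


print(suggested_stage_range(21))   # short cycle
print(suggested_stage_range(60))   # mid-market cycle
print(days_per_stage(18, 8))       # an 18-day cycle spread across 8 stages
```

When the days-per-stage figure drops to two or three, the stage updates are mostly admin work.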

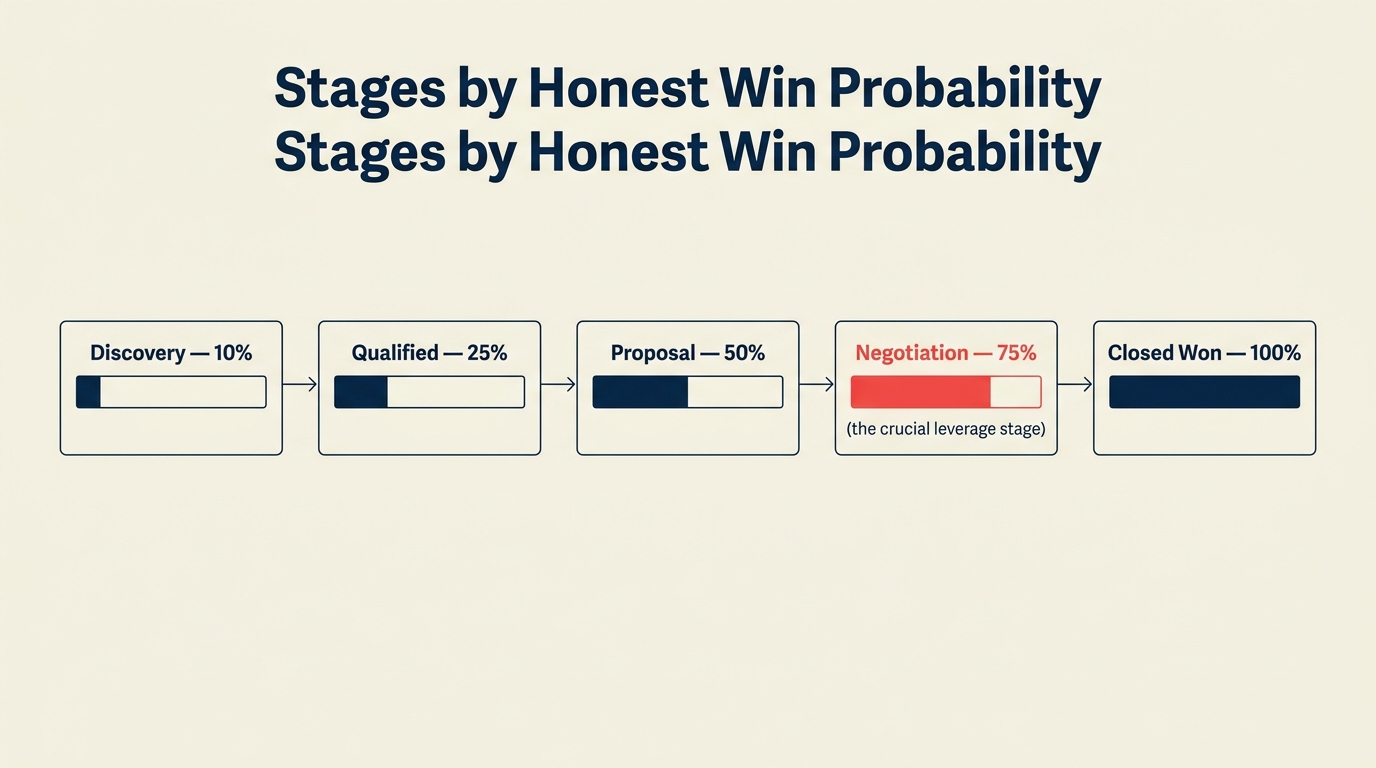

Step 5: Connect Stages to Win Probability Honestly

Here's where most pipelines go wrong a second time. After the stage design is done, someone assigns win probabilities, and they do it based on gut feeling or convention rather than data. That choice carries straight into your forecast cadence: a pipeline with honest win probabilities supports accurate forecasting, while one with inflated probabilities makes every forecast call a guess.

The result: "Proposal Sent" gets assigned 50% probability across the board. But if you actually look at your historical data, deals in "Proposal Sent" might close at 25% or at 70%, depending on whether budget was confirmed before the proposal went out.

Win probability should come from your historical close rates by stage, not from intuition. Harvard Business Review analysis of B2B pipeline management found that win rates varied significantly depending on whether specific buyer actions had occurred before a proposal was sent — in many cases, deals lacking multi-stakeholder engagement before proposal closed at less than half the rate of well-qualified deals. Pull your last 50 closed-won and closed-lost deals and calculate:

- Of all deals that reached Stage X, what percentage closed?

- Does that change based on deal size, industry, or rep?

If "Proposal Sent" closes at 28% in your data, set it at 28%. Not 50%. Inflated probabilities make your weighted pipeline look better than it is, which makes forecasting pointless.
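The calculation is simple enough to run from an export. The sketch below assumes each closed deal is recorded as the list of stages it reached plus a won/lost flag. That data shape, the function names, and the sample numbers are all hypothetical.

```python
from collections import defaultdict


def close_rate_by_stage(closed_deals):
    """Of all deals that reached each stage, what fraction closed-won?

    closed_deals: iterable of (stages_reached, won) pairs.
    """
    reached = defaultdict(int)
    won_count = defaultdict(int)
    for stages_reached, won in closed_deals:
        for stage in set(stages_reached):   # count each stage once per deal
            reached[stage] += 1
            if won:
                won_count[stage] += 1
    return {stage: won_count[stage] / reached[stage] for stage in reached}


def weighted_pipeline(open_deals, rates):
    """Sum of deal amount times the historical close rate for its stage."""
    return sum(amount * rates[stage] for amount, stage in open_deals)


# Hypothetical history: four closed deals.
history = [
    (["Problem Confirmed", "Actively Evaluating", "Business Case Active"], True),
    (["Problem Confirmed", "Actively Evaluating"], False),
    (["Problem Confirmed", "Actively Evaluating", "Business Case Active"], False),
    (["Problem Confirmed"], False),
]
rates = close_rate_by_stage(history)

# Hypothetical open pipeline: (amount, current stage).
open_deals = [(40_000, "Business Case Active"), (20_000, "Problem Confirmed")]
print(rates)
print(weighted_pipeline(open_deals, rates))
```

To see what inflation costs you, take $1M of deals in "Proposal Sent." At 50% it shows up as $500K of weighted pipeline. At a 28% historical close rate it's $280K.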

For newer teams without enough history, start conservative. It's easier to raise probabilities as you build evidence than to explain to your CEO why the forecast was systematically high.

Step 6: Test New Stages Against 20 Historical Deals

Before you launch any new pipeline design, run it through 20 historical closed-won and closed-lost deals.

Pull the deal notes, emails, and CRM activity logs. Apply your new stage criteria and ask: at what point would this deal have advanced to each stage? Where would it have stalled? Where would it have been disqualified?

This retrospective audit does three things:

It reveals whether your entry criteria actually match how deals move in practice. If 12 of your 20 historical deals never produced an "economic buyer identified by name" signal, you may need to adjust that criterion or your qualifying process. Teams using MEDDIC will find this audit more straightforward because the MEDDIC framework explicitly requires economic buyer identification as a named element, making it easy to map against deal records.

It surfaces closed-lost patterns. If 8 of your 10 lost deals stalled at the same stage and for similar reasons, that's a process failure at that stage, not just a run of bad luck.

It gives you a before/after comparison. Take your current pipeline and reclassify every active deal using the new criteria. How many deals move backward? If 40% of your "Proposal" deals drop to an earlier stage, your pipeline was inflated. Now you know.

A useful audit template:

| Deal | Close Result | Entered Stage 3 When? | Entry Criterion Met? | Stall Point | Lost Reason |
|---|---|---|---|---|---|
| Deal A | Won | Week 3 | Yes | N/A | N/A |
| Deal B | Lost | Week 2 | Partial | Stage 4 | Champion left |
| Deal C | Lost | Never | No | Stage 2 | Budget unconfirmed |
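
The before/after comparison can be scripted once each active deal has a stage under both the old and new criteria. This sketch assumes you've already mapped the old stage names onto the new stage list, so the two assignments are comparable. The stage order comes from the Step 1 worksheet, and the deals are invented.

```python
NEW_STAGES = [
    "Problem Confirmed",
    "Actively Evaluating",
    "Technical Evaluation",
    "Business Case Active",
    "Decision In Progress",
    "Contract Review",
]


def moved_backward(before, after, stage_order=NEW_STAGES):
    """Deals whose stage under the new criteria is earlier than before."""
    rank = {stage: position for position, stage in enumerate(stage_order)}
    return sorted(
        deal for deal in before if rank[after[deal]] < rank[before[deal]]
    )


# Hypothetical active deals: where they sit today vs. under the new criteria.
before = {
    "Deal A": "Business Case Active",
    "Deal B": "Business Case Active",
    "Deal C": "Decision In Progress",
    "Deal D": "Business Case Active",
    "Deal E": "Technical Evaluation",
}
after = {
    "Deal A": "Business Case Active",
    "Deal B": "Problem Confirmed",
    "Deal C": "Decision In Progress",
    "Deal D": "Actively Evaluating",
    "Deal E": "Technical Evaluation",
}

dropped = moved_backward(before, after)
share = len(dropped) / len(before)
print(dropped, f"{share:.0%} of active deals moved backward")
```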

Step 7: Get Rep Sign-Off Before Launch

The best pipeline design in the world fails if reps don't use it consistently. The worst outcome isn't resistance. It's quiet non-compliance, where reps advance deals to the stages that look best rather than the stages that are accurate.

Before you launch, run a 30-minute working session with your sales team. Don't present the new stages as a finished product. Bring them as a near-final draft and ask for input.

The questions that surface the most valuable feedback:

- "Is there any scenario where a deal would legitimately skip Stage X?"

- "Is the entry criterion for Stage Y something you could verify in 30 seconds?"

- "Do you disagree with any of the win probability assignments? If so, what does your experience suggest?"

Reps who helped shape the pipeline design enforce it differently than reps who had it handed to them. That 30-minute conversation pays off in data quality for the next 12 months.

Common Pitfalls

Stages that require rep interpretation. Any entry criterion with words like "clearly," "strong," or "genuine" will be interpreted differently by every rep. Replace judgment calls with verifiable facts.

Skipping the closed-lost taxonomy. Pipeline stages need a corresponding closed-lost reason taxonomy. If your lost reasons are "lost to competitor," "no budget," and "timing," you can't learn anything. Build lost reasons that correspond to where in the pipeline you lost control. If you're not running structured lost deal reviews yet, the taxonomy you build here is exactly what makes those reviews useful.

Stages named after internal documents. "Contract Out" and "MSA Sent" describe your process, not the buyer's. Rename them to buyer states: "Legal Review In Progress" or "Contract Under Mutual Review." Harvard Business Review's writing on sales process design makes the case that the best-performing sales organizations define their pipeline around buyer decisions, not seller activities. That principle has only become more important as buyers complete more of their evaluation journey before engaging a rep.

Too many stages for a short sales cycle. If your average deal closes in 18 days, 8 stages means reps are changing the stage every two to three days. That's overhead, not signal.

No stage for "active nurture" or "slow-moving qualified." Deals that are qualified but on a longer buyer timeline shouldn't sit in an active stage eating up forecast. Build a parking stage for deals you're nurturing toward a future quarter. Teams that have mapped lead lifecycle stages before building their pipeline often already have a natural holding stage for these deals.

What to Do Next

Pilot the redesigned pipeline with one team or one deal segment for one full sales cycle before rolling it out org-wide. Track three metrics:

- Forecast accuracy: Did your predicted close for the period match actual close within 15%?

- Stage advancement disagreements: How often did managers push back on a rep's stage assignment?

- Closed-lost clarity: Are you able to identify where in the pipeline each lost deal actually fell apart?
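
The first two metrics reduce to simple ratios. The sketch below is one way to compute them, with one assumption worth flagging: forecast error is measured relative to actual closed revenue, which the 15% target above doesn't specify. The function names and figures are illustrative.

```python
def forecast_error(predicted: float, actual: float) -> float:
    """Absolute forecast miss as a fraction of actual closed revenue."""
    if actual == 0:
        raise ValueError("actual closed revenue must be non-zero")
    return abs(predicted - actual) / actual


def within_tolerance(predicted: float, actual: float,
                     tolerance: float = 0.15) -> bool:
    """True when the forecast landed within the tolerance (default 15%)."""
    return forecast_error(predicted, actual) <= tolerance


def pushback_rate(stage_reviews: int, manager_pushbacks: int) -> float:
    """Share of reviewed stage assignments that a manager challenged."""
    if stage_reviews == 0:
        raise ValueError("no stage assignments were reviewed")
    return manager_pushbacks / stage_reviews


# Hypothetical pilot numbers.
print(within_tolerance(predicted=500_000, actual=420_000))  # miss of about 19%
print(within_tolerance(predicted=450_000, actual=420_000))  # miss of about 7%
print(f"{pushback_rate(stage_reviews=40, manager_pushbacks=6):.0%}")
```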

If the pilot shows improvement on all three, expand. If forecast accuracy didn't improve, look at your entry criteria and win probability assignments before redesigning again. Usually the criteria were right but the probabilities were still optimistic.

One full cycle gives you enough data to validate. Two cycles give you enough to calibrate. Don't change the criteria mid-cycle or you'll lose the ability to compare.

Co-Founder & CMO, Rework
