More in

AI at Work Insights

The Coordination Tax: The Hidden Cost That Kills Operational Velocity

Apr 13, 2026

Measuring AI ROI Beyond 'Time Saved'

Mar 17, 2026

The Governance Gap: What Leaders Get Wrong About AI at Work

Mar 5, 2026 · Currently reading

AI Agents in the Sales Pipeline: Hype, Reality, and What's Actually Working

Jan 22, 2026

AI Copilots vs. AI Agents: Understanding the Difference Matters

Jan 21, 2026

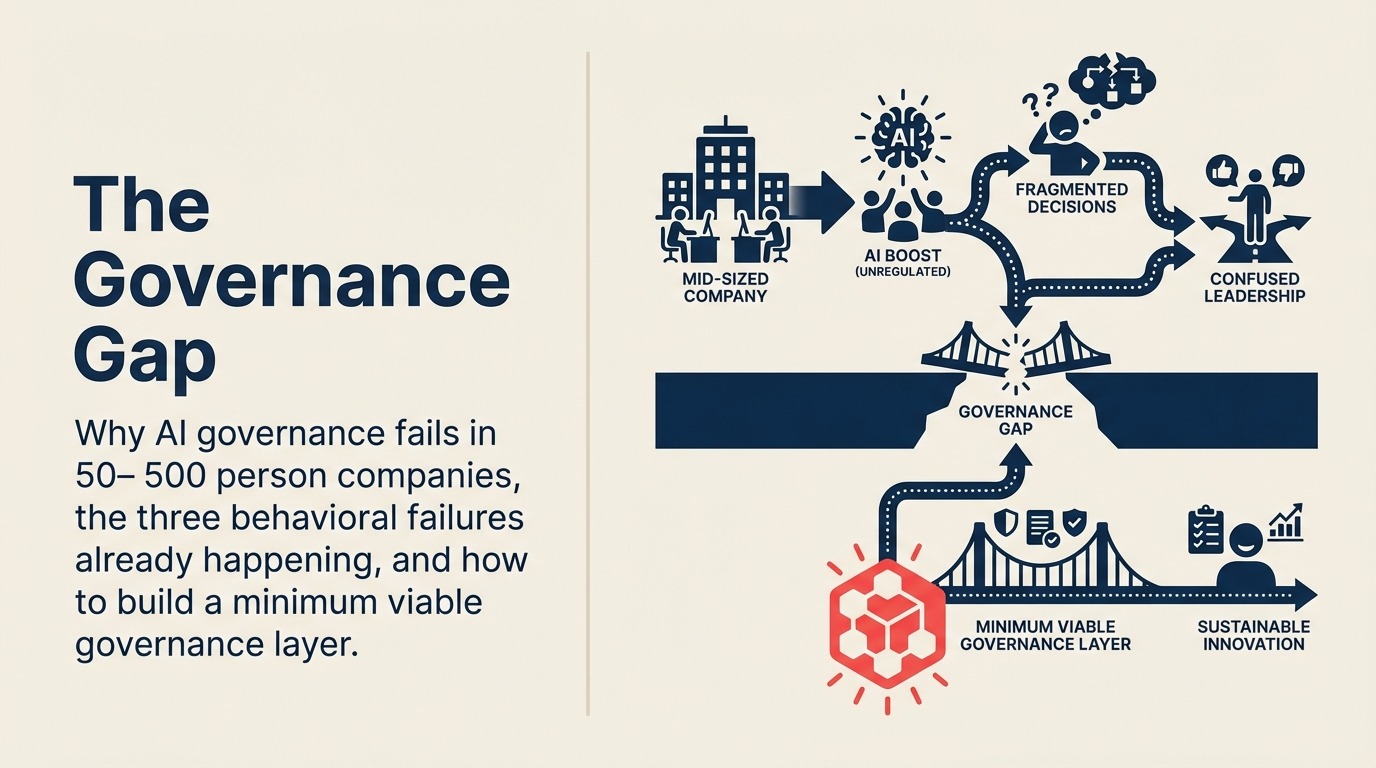

The Governance Gap: What Leaders Get Wrong About AI at Work

Most AI governance conversations happen at the wrong level. Boards want a policy. Legal wants a review process. But the actual governance failures in AI-at-work aren't happening in boardrooms. They're happening when a rep pastes a customer's deal history into ChatGPT, when a manager uses AI-generated performance analysis without telling the person being evaluated, and when nobody has decided which AI decisions require a human to sign off.

The gap isn't a policy gap. It's a behavioral and structural one. And the companies building real governance systems have figured out something that the policy-first crowd hasn't: you can't govern behavior you're pretending isn't happening.

Three failures already happening at your company

Before building any governance structure, it helps to be honest about the failures that are already underway. In companies that haven't explicitly addressed AI governance, these three show up almost universally.

Failure 1: Data leakage through consumer AI tools. The most common and least discussed problem. Employees are using ChatGPT, Claude, Gemini, and a dozen other consumer-grade AI tools to do their jobs. Some of this is innocuous productivity. Some of it involves pasting customer data, internal financial projections, contract terms, or employee information into tools whose data handling and retention policies are governed by consumer terms of service, not enterprise data agreements. Microsoft's 2024 Work Trend Index found that 78% of AI users are bringing their own AI tools to work. These are tools their employer didn't provide and, in many cases, never assessed for data risk.

A rep who pastes three years of a customer's deal history into ChatGPT to get a negotiation strategy isn't being careless on purpose. They're solving a real problem with the most convenient tool available. The failure is structural: the company never told them what was acceptable, never provided an approved alternative, and never created a reason to think twice. The risks also go beyond reputation. Consumer-grade tools create real legal exposure when used with enterprise customer data, as the article on AI ethics and data privacy explains.

Salesforce Einstein and HubSpot AI operate under enterprise data agreements that most employees don't know exist. The distinction between "AI feature inside your contracted CRM" and "AI tool in a consumer browser tab" is real, but it's invisible to the person doing the work unless someone makes it visible.

Failure 2: Authority confusion between AI output and AI decision. This one creates the most expensive downstream problems. AI output is information. An AI decision is an action taken or a recommendation accepted without independent review. Most organizations haven't defined which decisions require human judgment, which can be accelerated by AI output, and which can be made autonomously by AI within defined parameters.

When a manager uses AI-generated performance scores from a productivity tool to make a compensation or promotion decision without disclosing that AI contributed to the evaluation, that's not just a policy gap. It's a potential employment law exposure in several jurisdictions and a trust problem with your team when it surfaces. And it will surface. Stanford HAI's research on AI in the workplace documents the growing gap between how rapidly employees are adopting AI tools and how slowly organizations are establishing governance frameworks to manage that adoption. Regulation is starting to close that gap from the outside. The EU AI Act classifies AI used for promotion decisions and performance evaluation as high-risk, and it attaches transparency, human-oversight, and record-keeping obligations to exactly this type of decision. The CEO strategy implications of the EU AI Act are worth reviewing before your next performance cycle.

The authority confusion problem is compounded by how AI features get embedded in existing tools. HubSpot AI can score a prospect's readiness without a rep realizing the score is AI-generated. When reps act on those scores as if they're facts, they've outsourced a judgment call to a model they've never evaluated. That's a decision, not just assistance.

Failure 3: The invisible adoption problem. Leadership teams consistently underestimate how extensively AI is already embedded in daily workflows. In most companies, by the time a formal AI policy discussion happens at the executive level, 40–60% of employees are already using AI tools daily, many of them tools the company hasn't approved or even catalogued.

This creates a governance bind: you can't build effective guardrails around behavior you don't know exists. And a policy that arrives after the fact, banning or restricting tools people have already built into their workflows, creates resentment rather than compliance.

The productive version of the invisible adoption audit isn't a threat. It's a survey and a conversation. "What AI tools are you using, what are you using them for, and what would you need from the company to feel confident using them appropriately?" That conversation generates the actual map of your governance challenge.
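The survey itself is low-tech, but turning the answers into a usable map is easier when every response is captured in the same shape. The following is a minimal Python sketch. It assumes a hypothetical `SurveyResponse` record with one field per audit question and a hypothetical set of tools the company has already catalogued. It groups answers by tool and flags anything nobody has catalogued yet.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyResponse:
    """One answer to the three audit questions (hypothetical schema)."""
    respondent: str
    tool: str      # What AI tools are you using?
    use_case: str  # What are you using them for?
    need: str      # What would you need from the company to use them confidently?


def build_adoption_map(responses, catalogued_tools):
    """Group answers by tool and flag tools the company hasn't catalogued."""
    grouped = defaultdict(lambda: {"respondents": set(), "use_cases": set(), "needs": set()})
    for r in responses:
        entry = grouped[r.tool]
        entry["respondents"].add(r.respondent)
        entry["use_cases"].add(r.use_case)
        entry["needs"].add(r.need)

    adoption_map = {
        tool: {
            "users": len(entry["respondents"]),
            "use_cases": sorted(entry["use_cases"]),
            "needs": sorted(entry["needs"]),
            "catalogued": tool in catalogued_tools,
        }
        for tool, entry in grouped.items()
    }
    # Most widely used tools first; ties keep their first-seen order.
    return dict(sorted(adoption_map.items(), key=lambda kv: kv[1]["users"], reverse=True))


if __name__ == "__main__":
    # Hypothetical responses for illustration only.
    responses = [
        SurveyResponse("rep-1", "consumer-chatbot", "negotiation prep", "an approved tool for deal data"),
        SurveyResponse("rep-2", "consumer-chatbot", "email drafts", "clear rules on customer data"),
        SurveyResponse("mgr-1", "crm-assistant", "pipeline review", "training"),
    ]
    for tool, info in build_adoption_map(responses, catalogued_tools={"crm-assistant"}).items():
        print(tool, info)
```

Sorting by number of distinct users puts the most widely adopted tools at the top. This is a sensible order in which to decide what to approve, restrict, or replace.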

Why AI policies fail

The standard organizational response to an AI governance challenge is to write a policy. The policy gets legal review, gets approved by the executive team, gets posted to the intranet, and then has no measurable effect on behavior.

Policies fail because they describe acceptable and unacceptable behavior in abstract terms but don't change the conditions that produce the behavior. A policy that says "don't paste customer data into external AI tools" doesn't solve the problem if there's no approved internal AI tool for the task the employee is trying to accomplish. The rep will paste the data anyway, now with a slightly lower chance of doing it in front of a manager.

A governance system is different from a policy document in four ways:

It provides approved alternatives, not just prohibitions. If pasting customer data into ChatGPT is off-limits, there needs to be an approved way to accomplish the underlying task. Otherwise the prohibition is a friction-adder, not a problem-solver.

It defines decision rights explicitly. Who can approve a new AI tool for team use? Who decides which AI decisions require human review? Who owns the AI governance function? Without clear decision rights, governance is advisory.

It creates lightweight accountability without bureaucracy. Good governance systems have a way to flag concerns and report ambiguous situations without requiring a formal incident report. A Slack channel that a designated person monitors is more effective than a compliance portal.

It evolves as the tools evolve. An AI governance document from 18 months ago is almost certainly missing coverage for capabilities that now exist in the tools your team is using. Quarterly review is a minimum cadence.

The human-in-the-loop question

The most technically complex governance question isn't about data. It's about decision authority: which AI decisions require a human to review, approve, or override?

The honest answer depends on stakes and reversibility. A decision is high-stakes if getting it wrong causes material harm: financial loss, legal exposure, relationship damage, safety impact. A decision is irreversible if correcting it after the fact is expensive or impossible.

High-stakes, irreversible decisions should always have a human in the loop. Automatically declining a credit application, terminating a customer contract, sending a large-scale outreach campaign, dismissing an employee based on AI-generated productivity data: all of these require human review before action, not just after. Anthropic's guidance on responsible AI deployment frames this as a core principle: AI systems operating in high-stakes domains require human oversight architectures proportionate to the severity of potential errors.
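The stakes/reversibility test boils down to two yes/no questions, and writing it out shows how little machinery it needs. The following Python sketch is a hypothetical illustration. Only the rule for high-stakes, irreversible decisions comes from the text above. The handling of the other three combinations borrows the three tiers named under Failure 2 (human judgment, AI-accelerated, AI-autonomous within defined parameters) and is an assumption. So is the low-stakes example "re-rank this week's call list".

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    HUMAN_BEFORE_ACTION = "human review required before action"
    HUMAN_DECIDES_WITH_AI_INPUT = "AI output informs; a human makes the call"
    AI_WITHIN_LIMITS = "AI may act autonomously within defined parameters"


@dataclass(frozen=True)
class Decision:
    name: str
    high_stakes: bool   # a wrong call causes material harm: financial, legal, relationship, safety
    irreversible: bool  # correcting it afterwards is expensive or impossible


def required_oversight(decision: Decision) -> Oversight:
    if decision.high_stakes and decision.irreversible:
        # The one rule the article states outright.
        return Oversight.HUMAN_BEFORE_ACTION
    if decision.high_stakes or decision.irreversible:
        # Assumption: mixed cases keep a human as the decision-maker.
        return Oversight.HUMAN_DECIDES_WITH_AI_INPUT
    # Assumption: low-stakes, reversible work can be delegated within set limits.
    return Oversight.AI_WITHIN_LIMITS


if __name__ == "__main__":
    examples = [
        Decision("Terminate a customer contract", high_stakes=True, irreversible=True),
        Decision("Send a large-scale outreach campaign", high_stakes=True, irreversible=True),
        Decision("Re-rank this week's call list", high_stakes=False, irreversible=False),  # hypothetical
    ]
    for d in examples:
        print(f"{d.name}: {required_oversight(d).value}")
```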

But here's where most governance frameworks fail: they define the need for human review without making the review easy to do. If reviewing an AI recommendation takes 15 minutes and requires navigating three systems, people will skip the review and rubber-stamp the output. Effective governance design makes the human override the path of least resistance, not the bureaucratic detour.

For CRM-embedded AI specifically (Salesforce Einstein's deal scoring, HubSpot's predictive contact features, Zoho Zia's pipeline recommendations), the governance question is: are your sales leaders treating these outputs as data points or as decisions? The answer should always be "data points," but the answer in practice depends on whether that distinction has been made explicit to the people using the tools daily. A pipeline hygiene culture, where reps and managers treat model outputs skeptically, is the behavioral foundation that makes the governance layer actually work.

The minimum viable governance layer

A 200-person SaaS company doesn't need a governance committee, an ethics review board, or a dedicated AI policy officer. What it needs is a set of operating agreements, a clear owner, and a light accountability structure.

Here's what that actually looks like:

An AI tool registry. A simple list of approved AI tools, their approved use cases, and their data handling tier (can handle customer data / can't handle customer data / needs case-by-case approval). This takes about two hours to build and reduces the invisible adoption problem significantly. When employees know which tools are approved and for what, they make better decisions. An AI security and compliance reference is useful input when classifying tools by data tier, since it covers the questions to ask vendors about data residency and retention before a tool goes into your registry.

Decision rights mapping. One page that answers: which AI outputs require human review before action? Who approves new AI tools for team use? Who owns the governance process? This doesn't need to be comprehensive on day one. It needs to be specific enough that the most common ambiguous situations get resolved without escalation.

Disclosure norms. An agreement that when AI contributed meaningfully to a decision (a hiring recommendation, a performance evaluation, a major proposal), that contribution is disclosed. Not because it invalidates the decision, but because it changes how the recipient can evaluate it. This is a behavioral agreement, not a policy document, and it has to be modeled by leadership first.

A lightweight escalation path. One person (typically an operations or people leader) who is the designated point of contact for AI governance questions. Not a formal committee. Just a person with the authority to make a call when something ambiguous comes up, and the expectation that they'll do it quickly.
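The registry and the escalation path can live in a spreadsheet, but writing them as data makes the lookup logic explicit. The following Python sketch is illustrative only. The tool names, use cases, review dates, and escalation contact are all hypothetical, and the three tiers mirror the registry's "can handle / can't handle / case-by-case" classification. The 90-day staleness check is an assumption that stands in for a quarterly review cadence.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class DataTier(Enum):
    CUSTOMER_DATA_OK = "can handle customer data"
    NO_CUSTOMER_DATA = "can't handle customer data"
    CASE_BY_CASE = "needs case-by-case approval"


@dataclass(frozen=True)
class RegistryEntry:
    approved_use_cases: frozenset
    tier: DataTier
    last_reviewed: date


# Hypothetical entries: real tiers depend on each vendor's data agreement.
REGISTRY = {
    "crm-embedded-assistant": RegistryEntry(
        frozenset({"deal summaries", "email drafts"}), DataTier.CUSTOMER_DATA_OK, date(2026, 1, 15)),
    "consumer-chatbot": RegistryEntry(
        frozenset({"email drafts", "research"}), DataTier.NO_CUSTOMER_DATA, date(2026, 2, 1)),
    "meeting-notes-tool": RegistryEntry(
        frozenset({"call summaries"}), DataTier.CASE_BY_CASE, date(2026, 3, 10)),
}

# Hypothetical designated point of contact from the decision-rights map.
ESCALATION_OWNER = "ops-lead@example.com"


def check_use(tool: str, use_case: str, involves_customer_data: bool) -> str:
    """Answer 'can I use this tool for this task?' from the registry."""
    entry = REGISTRY.get(tool)
    if entry is None:
        return f"not in registry: ask {ESCALATION_OWNER}"
    if use_case not in entry.approved_use_cases:
        return f"use case not approved: ask {ESCALATION_OWNER}"
    if not involves_customer_data or entry.tier is DataTier.CUSTOMER_DATA_OK:
        return "approved"
    if entry.tier is DataTier.CASE_BY_CASE:
        return f"needs case-by-case approval: ask {ESCALATION_OWNER}"
    return "not approved: this tool can't handle customer data"


def overdue_for_review(today: date, max_age: timedelta = timedelta(days=90)) -> list:
    """Tools whose registry entry is older than roughly one quarter."""
    return sorted(tool for tool, e in REGISTRY.items() if today - e.last_reviewed > max_age)


if __name__ == "__main__":
    print(check_use("consumer-chatbot", "email drafts", involves_customer_data=True))
    print(overdue_for_review(date(2026, 4, 20)))
```

Every "no" answer routes to a named person rather than a dead end. This matches the intent of the escalation path: ambiguous cases get a quick call instead of a silent workaround.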

The AI Governance Readiness Scorecard

Rate your organization 1–5 on each of these five operational dimensions to identify where the governance gaps are largest:

Data handling clarity (1–5). Do employees know which categories of data can be used with external AI tools? Is there a documented list of approved tools and their data tier?

Decision accountability (1–5). Have you mapped which AI-assisted decisions require human review? Are decision rights documented and known to the people making those decisions?

Employee awareness (1–5). Do employees know what the AI governance norms are? Have they been trained, not just notified?

Override design (1–5). Is it easy for employees to challenge or override an AI output? Does the workflow make review the default, not the exception?

Audit trail (1–5). Can you reconstruct which AI tools were used in a decision if you needed to? Is there logging sufficient to address a compliance inquiry?

A score of 20–25 indicates a mature governance foundation. Scores of 16–19 sit between the bands; the dimensions you rated lowest are where to start. A score of 10–15 points to the gaps most likely to cause a material problem in the next 12 months. Below 10 means the invisible adoption problem is almost certainly larger than leadership believes.
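The scorecard arithmetic is simple enough to encode directly, and doing so makes the band boundaries unambiguous. The following is a minimal Python sketch. The five dimension names and the 20–25, 10–15, and below-10 bands come from the scorecard. The wording attached to the 16–19 range is this sketch's own assumption. The function also returns the lowest-rated dimensions, since those are where the gaps are largest.

```python
DIMENSIONS = (
    "Data handling clarity",
    "Decision accountability",
    "Employee awareness",
    "Override design",
    "Audit trail",
)


def score_readiness(ratings: dict) -> tuple:
    """Sum five 1-5 ratings, map the total to a band, and list the weakest dimensions."""
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    for dim in DIMENSIONS:
        value = ratings[dim]
        if not isinstance(value, int) or not 1 <= value <= 5:
            raise ValueError(f"{dim} must be an integer from 1 to 5, got {value!r}")

    total = sum(ratings[d] for d in DIMENSIONS)  # ranges from 5 to 25
    if total >= 20:
        band = "mature governance foundation"
    elif total >= 16:
        # Assumption: the scorecard gives no band label for 16-19.
        band = "between bands: start with the lowest-rated dimensions"
    elif total >= 10:
        band = "gaps likely to cause a material problem in the next 12 months"
    else:
        band = "invisible adoption is probably larger than leadership believes"

    lowest = min(ratings[d] for d in DIMENSIONS)
    weakest = [d for d in DIMENSIONS if ratings[d] == lowest]
    return total, band, weakest


if __name__ == "__main__":
    print(score_readiness({
        "Data handling clarity": 2,
        "Decision accountability": 3,
        "Employee awareness": 2,
        "Override design": 3,
        "Audit trail": 2,
    }))
```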

A 90-minute workshop agenda

If you want to produce an actual AI governance operating agreement with your leadership team rather than another policy document, here's a workshop structure that works:

Minutes 0–20: Surface the actual use. Start with what's happening, not what should happen. Have each leader share two AI tools their team is using and what for. No judgment, just mapping. This is the invisible adoption audit in action.

Minutes 20–40: Map the stakes. Work through the decision-rights question. Which AI decisions in your organization are high-stakes and irreversible? Use the stakes/reversibility framework to categorize them. This conversation produces the human-in-the-loop requirements without requiring a governance consultant.

Minutes 40–60: Build the tool registry. As a group, classify the tools surfaced in the first segment: approved, needs review, not approved. Assign data tiers. This takes longer than 20 minutes if the list is long, but the output (an actual registry) is worth the time.

Minutes 60–80: Assign decision rights. Who owns the governance function? Who approves new tools? Who handles escalations? Get specific names, not just roles. Agreements with names attached get followed up on.

Minutes 80–90: Define disclosure norms. Agree on when AI contribution to a decision needs to be disclosed and to whom. This is often the most useful 10 minutes of the workshop because it forces the leadership team to be explicit about something that's been implicitly variable.

The output is a one-page operating agreement, not a 20-page policy document. It answers the questions that employees actually face. And because it was built through conversation rather than drafted by legal, it reflects the actual operational reality of how AI is being used.

That's the difference between governance as theater and governance as a system.