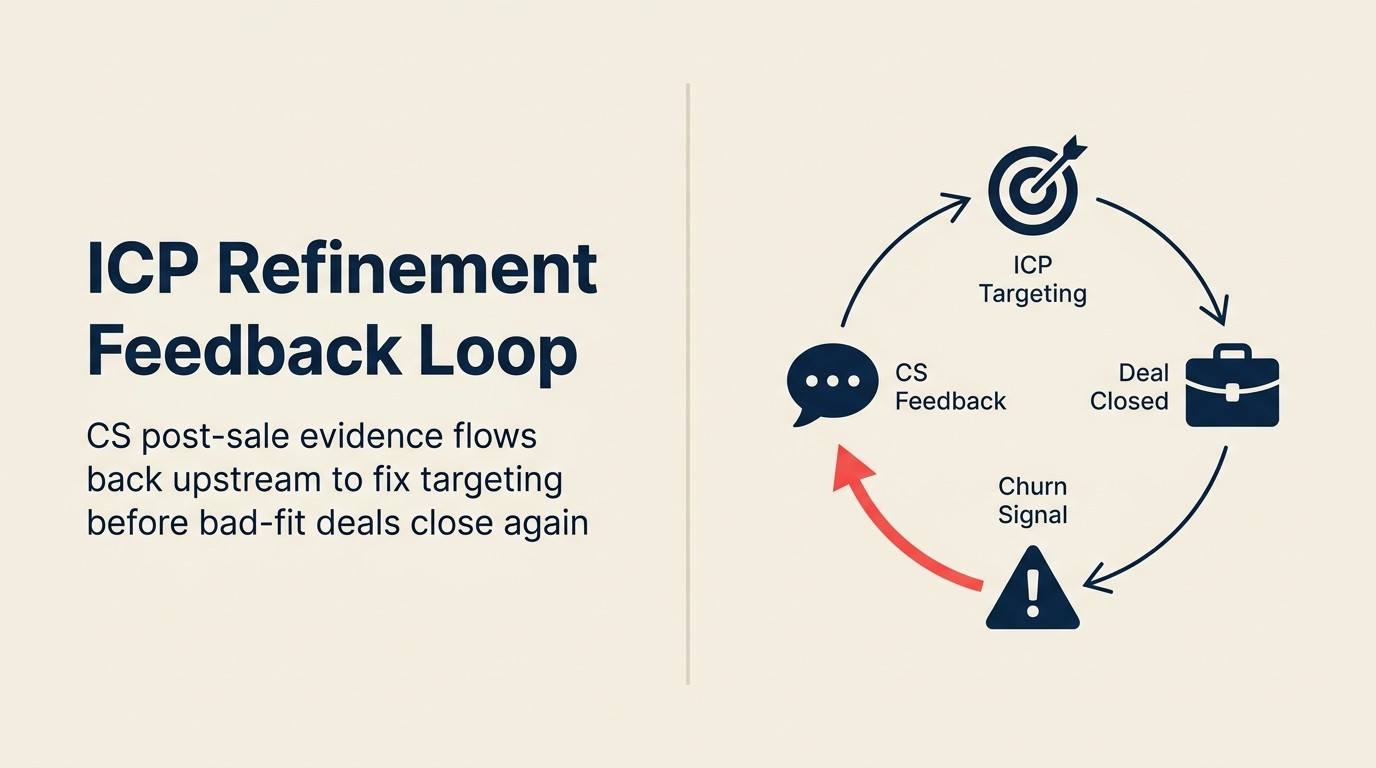

The ICP Refinement Loop: How CS Feedback on Bad-Fit Deals Fixes Upstream Targeting

The ICP (Ideal Customer Profile; see the Sales-CS Alignment Glossary for the full definition) problem at most companies doesn't belong to any single team. Marketing defines it based on who they think they're targeting. Sales approximates it under quota pressure. CS inherits whatever that combination produces. If your marketing and sales teams don't share a single ICP document, start with the shared ICP framework before building the CS feedback loop. The loop has nothing to update without a canonical definition to anchor it.

Here's how the failure happens at the individual deal level: an AE is 12 days from quarter-end. An account comes in that's close to ICP: the industry is right, the size is slightly small, and the primary use case is adjacent to the core product, not squarely in it. Under quota pressure, "adjacent" becomes "good enough." The deal closes.

The CSM is assigned the account. Within 60 days, they know: this customer bought a solution to a problem the product doesn't fully solve. Adoption will be patchy. The champion is enthusiastic but not in a position to drive cross-team usage. This account will likely churn or downgrade at renewal.

That signal, clear, specific, and actionable, almost never travels back to Sales or Marketing in any systematic way. The CSM mentions it on a call. Or logs it in their CS platform where nobody from Sales ever looks. Or just absorbs it as the cost of doing business in a company with a misaligned pipeline.

Then the next quarter, the same type of deal gets closed. And the quarter after that.

Why ICP Drift Is a Revenue Problem, Not a Segmentation Problem

Key Facts: The NRR Cost of ICP Drift

- Bad-fit accounts churn at 2-3x the rate of strong-fit accounts and expand at half the rate, per Gainsight research on B2B SaaS customer cohorts.

- Companies where CS provides structured ICP feedback to Sales quarterly see NRR improve by 8-12 percentage points over 18 months, compared to companies without a formal feedback loop, according to TSIA research on CS-Sales alignment.

- Only 23% of B2B SaaS companies have a formal process for CS teams to flag bad-fit accounts to Sales, per a ChurnZero survey of 600+ CS leaders.

- Even 10% of closed-won deals being bad-fit can suppress NRR by 5-8 points annually, when you account for churn, low expansion, and CS time absorbed, per Bain & Company analysis.

The compounding math here is worth sitting with for a moment. McKinsey's B2B growth research shows that companies using advanced analytics to quantify the spend potential of every customer, existing or prospective, consistently outperform peers on market share growth. That advantage evaporates when your ICP is drifting and your targeting is off.

Say 12% of your closed-won deals in a given quarter are bad-fit. Those accounts churn at elevated rates. Not because CS failed, but because the structural fit was low from day one. That churn shows up 8-14 months later as reduced NRR (Net Revenue Retention; see glossary).

Lower NRR creates pressure on new logo acquisition targets to compensate for the lost base. That pressure increases the incentive for AEs to close near-ICP deals under quota duress. Which generates another cohort of bad-fit accounts. Which churns in the next cycle.

The loop compounds in the wrong direction. But it looks like a pipeline problem, or a CS performance problem, until you trace it back to the source.
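The arithmetic behind that drag can be sketched in a few lines. This is a rough single-cohort model, not a forecast: the 10% baseline churn and 20% baseline expansion are invented for illustration, the 2.5x churn multiplier is simply the midpoint of the 2-3x range cited above, and every account is assumed to carry equal revenue.

```python
def blended_nrr(bad_fit_share, base_churn=0.10, base_expansion=0.20,
                churn_multiplier=2.5, expansion_multiplier=0.5):
    """Revenue-weighted NRR for one cohort mixing strong-fit and bad-fit accounts.

    All default values are illustrative assumptions, not benchmarks.
    """
    # Strong-fit accounts: baseline churn and baseline expansion.
    strong_nrr = 1 - base_churn + base_expansion
    # Bad-fit accounts: churn scaled up, expansion scaled down.
    bad_nrr = (1 - base_churn * churn_multiplier
               + base_expansion * expansion_multiplier)
    return (1 - bad_fit_share) * strong_nrr + bad_fit_share * bad_nrr


clean = blended_nrr(0.0)
drifted = blended_nrr(0.12)
print(f"NRR with no bad-fit deals:  {clean:.1%}")
print(f"NRR with 12% bad-fit deals: {drifted:.1%}")
print(f"Drag: {(clean - drifted) * 100:.1f} points")
```

Under these assumed inputs, a 12% bad-fit share pulls NRR from 110% to 107%. That 3-point drag comes from a single cohort, before counting the CS time absorbed or the effect of repeating the pattern every quarter.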

The responsibility math is uncomfortable: Sales and Marketing share the upstream cause. CS holds the downstream evidence. Neither team can fix it alone. Marketing can't refine the ICP without churn data. Sales can't qualify harder without knowing which attributes actually predict failure. CS can see the pattern clearly but has no mechanism to get that pattern upstream in a form that changes behavior.

The feedback loop is the mechanism. Without it, the data evaporates into quarterly anecdotes. The win-loss feedback process for Marketing is the adjacent upstream loop: CS churn data and win-loss analysis often point to the same segment mismatch from two different directions.

So what does CS actually know that Sales doesn't? The answer is more specific than most teams realize.

What CS Actually Knows That Sales Doesn't

This isn't a complaint about Sales. It's a structural reality: CSMs see things after close that were invisible or theoretical during the sales cycle.

Adoption patterns by segment. Which customer profiles actually engage deeply versus which ones onboard politely and then go quiet. A CSM who manages 40 accounts knows exactly which verticals drive product-led expansion and which ones flatline at 30% seat utilization.

Which promised use cases never materialize, and how fast. The CSM learns within 90 days whether the use cases the AE built the deal around are actually being pursued. Divergence between committed use cases and active use cases is one of the earliest churn signals available.

Champion stability at 30, 90, and 180 days post-close. CSMs see champion departures, reorganizations, and budget reallocation earlier than anyone in Sales. A champion who bought enthusiastically and then left the company is a renewal risk that no product metric captures.

Which objections resolved in the sales cycle re-surface as churn reasons. The CSM often hears the customer say, "We were told this would integrate with [X]." That's the same objection that showed up in the discovery call and was handled with a "we'll work through it." The objection didn't go away. It got deferred.

Expansion rate by customer profile. Which account types actually grow? This is the most commercially important signal CS holds and the one Sales most consistently underuses. McKinsey's customer success 2.0 research finds that existing customers account for between a third and half of total revenue growth, even at early-stage companies, which makes the CSM's visibility into expansion patterns a direct revenue input, not just an operational observation. Accounts that expand are not randomly distributed across your customer base. They cluster around specific profiles, use cases, and buying triggers. The expansion ownership and upsell motion article maps how those expansion signals get routed from CS to Sales once the ICP framework identifies which profiles are worth doubling down on.

The question is how to get this knowledge upstream in a form that actually changes what Sales and Marketing do next quarter.

Building the Feedback Loop: The Four-Stage Model

Stage 1: CS Tags Accounts with Fit Signals at the 90-Day Mark

At 90 days post-close, each CSM tags their accounts with a fit signal. Three categories are enough: strong-fit, near-fit, bad-fit.

Criteria for fit signal assignment:

| Signal | Strong-fit | Near-fit | Bad-fit |
|---|---|---|---|
| Core use case active | Yes | Partially | No / not yet |
| Champion stability | Same person, same role | Role change, relationship maintained | Champion departed |
| ICP segment match | Confirmed | Approximate | Clear mismatch |
| Adoption trajectory at day 90 | On plan or ahead | Slower than expected | Stalled |
| AE-committed use cases active | 2+ active | 1 active | None active |

Bad-fit tags route to Stage 2. Near-fit tags go into a watch list for review at 180 days.

This tagging takes 10-15 minutes per account. The CSM already knows the answer. The tag is just capturing it in a structured form that others can act on.
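One way to capture the tag in a structured form is sketched below. The table above rates each criterion but does not say how to combine the five ratings into a single tag, so the combination rule here (most common rating wins, ties go to the worse rating) is an assumption, as are the key names and the "no routing" step for strong-fit accounts.

```python
from collections import Counter

# Criteria mirror the rows of the table above; the key names are illustrative.
CRITERIA = (
    "core_use_case_active",
    "champion_stability",
    "icp_segment_match",
    "adoption_trajectory",
    "committed_use_cases_active",
)
LEVELS = ("strong", "near", "bad")  # ordered best to worst


def overall_fit(ratings: dict[str, str]) -> str:
    """Combine per-criterion ratings into one fit tag."""
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"unrated criteria: {missing}")
    counts = Counter(ratings[c] for c in CRITERIA)
    unknown = set(counts) - set(LEVELS)
    if unknown:
        raise ValueError(f"unknown rating levels: {sorted(unknown)}")
    # ASSUMPTION: the most common level wins; ties resolve toward the worse level.
    winner = max(LEVELS, key=lambda level: (counts[level], LEVELS.index(level)))
    return f"{winner}-fit"


# Routing for bad-fit and near-fit follows the text; strong-fit is an assumption.
ROUTING = {
    "bad-fit": "route to Stage 2",
    "near-fit": "watch list, review at 180 days",
    "strong-fit": "no routing",
}


def next_step(tag: str) -> str:
    return ROUTING[tag]


example = {
    "core_use_case_active": "near",
    "champion_stability": "strong",
    "icp_segment_match": "near",
    "adoption_trajectory": "near",
    "committed_use_cases_active": "strong",
}
tag = overall_fit(example)
print(tag, "->", next_step(tag))
```

A stricter team might instead treat a clear ICP segment mismatch as an automatic bad-fit tag. Whichever rule is chosen, writing it down keeps tags comparable across CSMs.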

Stage 2: CSM Routes Bad-Fit Flags to Shared Revenue Review

A bad-fit flag is not a complaint. It's a structured handoff of a specific signal with supporting evidence. The CSM routes it to three people: the AE who closed the deal (for context and accountability), the Sales Ops or RevOps lead (for pattern tracking), and their own CS manager (for coaching and workload context).

The routing format matters. A Slack message saying "this account feels like a bad fit" is not a flag. A CRM note with the fit signal, the specific gap (use case mismatch / champion vulnerability / ICP deviation), and a 2-sentence evidence summary is a flag that can be acted on.

Think about it this way: if the information doesn't live in the CRM, it doesn't exist for the quarterly review. The CSM's memory is not a data source.
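To make the difference between a complaint and a flag concrete, here is a minimal sketch of a flag record that rejects anything unstructured. The class and field names are hypothetical and not tied to any particular CRM. The sentence counter is a rough heuristic, and accepting one or two sentences of evidence is an assumption.

```python
import re
from dataclasses import dataclass

# Gap types taken from the routing format described above.
GAP_TYPES = {"use case mismatch", "champion vulnerability", "ICP deviation"}


def sentence_count(text: str) -> int:
    """Rough count: split on ., ! or ? followed by whitespace or end of text."""
    parts = re.split(r"[.!?]+(?:\s+|$)", text.strip())
    return len([p for p in parts if p])


@dataclass(frozen=True)
class BadFitFlag:
    account: str
    gap: str
    evidence: str
    fit_signal: str = "bad-fit"

    def __post_init__(self):
        if self.gap not in GAP_TYPES:
            raise ValueError(f"gap must be one of {sorted(GAP_TYPES)}")
        # ASSUMPTION: one or two sentences of evidence is acceptable.
        if not 1 <= sentence_count(self.evidence) <= 2:
            raise ValueError("evidence should be a 1-2 sentence summary")


flag = BadFitFlag(
    account="Acme Co",  # hypothetical account
    gap="use case mismatch",
    evidence="Bought for invoicing, which the product does not cover. "
             "Core workflow unused at day 90.",
)
print(flag)
```

A message like "this account feels like a bad fit" fails validation because it names no gap type, which is the point of the format.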

Stage 3: Quarterly ICP Review Session

This is the structural heart of the loop. Without a scheduled, recurring meeting with an agenda and named owners, the data collected in Stages 1 and 2 never becomes ICP refinement. It just accumulates.

Who attends: VP CS, VP Sales, RevOps lead, Marketing representative. This is not a CS presentation to Sales. It's a joint review of shared evidence.

What's brought:

- CS churn cohort analysis: accounts that closed in the previous 12-18 months and churned within 12 months, segmented by AE, segment, industry, and stated use case

- CS fit signal summary: distribution of strong-fit / near-fit / bad-fit tags from the past quarter by segment

- Sales close rate by segment: where AEs are closing well, where they're struggling, which segments require excessive discounting to close

- RevOps synthesis: where the churn data and the close-rate data point to the same segment mismatch

Output of each quarterly review:

- 3-5 specific ICP signal updates, for example: "Accounts with fewer than 50 seats at close have 3x higher churn in the first 12 months. Recommended ICP floor: 75 seats"

- Changes to lead scoring criteria based on CS churn data (Marketing implements)

- Updated qualification criteria for Sales: one or two specific filters that AEs should apply more rigorously

- A "do not close" list for the next quarter: segments or account types that the data suggests should be disqualified earlier, not closed under pressure

The "do not close" list is the hardest output to implement. AEs under quota pressure don't want to be told which deals to walk away from. That's where leadership alignment matters. The CRO has to back the list for it to hold.
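A signal update like the seat-count example above comes from a simple cohort comparison. The sketch below shows one way to compute it. The `Account` record, its field names, and the sample data are all invented for illustration, and a real analysis would need enough accounts per group for the ratio to mean anything.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    seats_at_close: int
    churned_within_12m: bool


def churn_rate(accounts):
    """Share of accounts that churned; None for an empty group."""
    if not accounts:
        return None
    return sum(a.churned_within_12m for a in accounts) / len(accounts)


def churn_ratio_below_floor(accounts, seat_floor):
    """Churn rate below the seat floor divided by the rate at or above it."""
    below = [a for a in accounts if a.seats_at_close < seat_floor]
    at_or_above = [a for a in accounts if a.seats_at_close >= seat_floor]
    low, high = churn_rate(below), churn_rate(at_or_above)
    if low is None or not high:  # empty group or zero churn in the baseline
        return None
    return low / high


# Hypothetical cohort: 4 small accounts (2 churned), 6 larger accounts (1 churned).
sample = (
    [Account(f"small-{i}", 30, i < 2) for i in range(4)]
    + [Account(f"large-{i}", 120, i < 1) for i in range(6)]
)
ratio = churn_ratio_below_floor(sample, seat_floor=50)
print(f"Churn below 50 seats is {ratio:.1f}x the rate at or above 50 seats")
```

The same comparison can be repeated for industry, stated use case, or any other attribute the review wants to test as an ICP filter.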

Stage 4: Marketing Updates Positioning and Lead Scoring

The quarterly review produces signal. But it only creates upstream change if Marketing is in the room and acts on it. Marketing's job in this stage is to translate the CS/Sales synthesis into:

- ICP filter updates (which firmographic criteria to weight more or less in targeting)

- Lead scoring changes (which behavioral signals correspond to the bad-fit segments CS identified)

- Messaging adjustments (which use cases to de-emphasize in positioning because they attract the wrong buyer)

And then critically: Marketing tells CS what they changed. The loop is only closed if information flows in both directions. CS changes Sales and Marketing behavior; Marketing confirms back to CS what changed and why.

The "This Deal Shouldn't Have Closed" Signal

Not every underperforming account is a bad-fit account. Some accounts are bad-fit by design. Others are good-fit accounts with poor adoption: the product was right, the execution was off.

The distinction matters because the upstream response is different:

| Account type | Root cause | Response |
|---|---|---|
| Bad-fit by design | ICP mismatch, wrong use case committed | ICP flag to Sales + Marketing |
| Good-fit, poor adoption | Onboarding failure, insufficient CSM engagement | CS coaching, playbook update |
| Good-fit, external disruption | Champion departure, budget freeze, M&A | AE re-engagement, executive relationship |

A CSM who flags everything as "bad-fit" loses credibility with Sales and RevOps quickly. The flagging criteria in Stage 1 need to be tight enough that a bad-fit tag means something specific: the deal was structurally wrong at close, not just hard to onboard.

The "This Deal Shouldn't Have Closed: The Loop Back to Sales" article covers the specific triggers and evidence standards for a formal ICP flag.

Where the Loop Breaks: Four Failure Modes

Failure Mode 1: CS Feedback Is Informal

"I mentioned it on a call" is not a feedback loop. Neither is a comment in a team meeting that isn't logged anywhere. For CS feedback to change ICP, it has to live in a structured format, in a shared system, reviewed by people who have the authority to act on it.

Failure Mode 2: Sales Interprets Bad-Fit Feedback as CS Complaints

This one requires leadership framing. If the VP Sales and VP CS haven't explicitly agreed that CS fit-signal data is a commercial input (not a performance critique of individual AEs), then every bad-fit flag will be received as blame, not signal. The quarterly ICP review only works if Sales leadership comes in genuinely curious, not defensive.

Failure Mode 3: RevOps Has No Owner for ICP Between Annual Planning Cycles

ICP reviews that only happen once a year during planning season are too slow. Markets move. Churn patterns emerge in cohorts that take 6-9 months to manifest. A quarterly cadence catches drift before it compounds. But someone has to own the ICP document between reviews: updating it, tracking which changes were implemented, maintaining version history. That's a RevOps function.

Failure Mode 4: Marketing Changes Messaging Without Informing CS

The loop breaks in a different direction when Marketing acts on the signal but doesn't close the communication loop back to CS. CS is still telling customers that a use case is a fit for them, while Marketing has already removed that use case from the ICP. The customer gets mixed signals. And CS doesn't understand why the new cohort of accounts looks different from what they expected.

What Good Looks Like: The Quarterly ICP Signal Review

The quarterly session is the mechanism. Here's a practical format that works for SMB and mid-market teams:

Attendees: VP CS, VP Sales, RevOps lead, Marketing lead (not optional)

Duration: 60-75 minutes

Cadence: Quarterly; monthly micro-review if NRR drops more than 2 points in a single quarter

Agenda:

| Time | Topic | Owner |
|---|---|---|
| 0-15 min | CS churn cohort: accounts closed in Q-2 that churned in Q1 or Q2, segmented by profile | VP CS |
| 15-30 min | Fit signal summary: distribution of fit tags from last quarter by segment | RevOps |
| 30-45 min | Sales close rate and discount trends by segment | VP Sales |
| 45-60 min | Synthesis: where churn data and close-rate data point to the same pattern | RevOps |
| 60-75 min | ICP update decisions: 3-5 specific criteria changes, lead scoring updates, "do not close" list | All |

The RevOps lead owns the ICP document and circulates the 3-5 updates to all teams within 5 business days of the review. Marketing confirms implementation timeline within 2 weeks.

RevOps as Neutral Owner

The feedback loop needs a non-partisan owner. CS will over-flag: every struggling account looks like a bad-fit account when you're trying to save a renewal. Sales will under-flag: every near-ICP deal looked closeable from the pipeline view. Both interpretations are correct from each team's vantage point.

RevOps synthesizes the signal. They hold the ICP document. They track which updates were implemented and measure the cohort outcomes 6-12 months later. They're accountable to NRR as an operational metric, not to either team's individual performance. The article on how RevOps structures joint NRR forecasting across both teams covers the mechanics of turning churn cohort and ICP data into a forward-looking revenue forecast.

This matters for ICP forecasting: ICP quality is a leading indicator of NRR stability 12 months out. A RevOps team that tracks fit signal distribution by close-cohort can predict NRR trajectory with reasonable accuracy 9-12 months in advance. That's a meaningful lever for revenue planning.

The RevOps owner is also the person who closes the loop: confirming to CS which ICP changes were implemented, tracking whether the next cohort's bad-fit rate improves, and flagging when it doesn't.
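Tracking whether the next cohort's bad-fit rate improves is a small aggregation over the Stage 1 tags. A minimal sketch follows, assuming tags are exported as (close quarter, fit tag) pairs with quarter labels such as "2024-Q1" that sort chronologically. The function names and the sample data are hypothetical.

```python
from collections import defaultdict


def bad_fit_rate_by_cohort(tags):
    """tags: iterable of (close_quarter, fit_tag) pairs -> {quarter: bad-fit share}."""
    totals = defaultdict(int)
    bad = defaultdict(int)
    for quarter, tag in tags:
        totals[quarter] += 1
        if tag == "bad-fit":
            bad[quarter] += 1
    # Quarter labels like "2024-Q1" sort chronologically as plain strings.
    return {q: bad[q] / totals[q] for q in sorted(totals)}


def cohorts_not_improving(rates):
    """Quarters whose bad-fit rate is no better than the previous cohort's."""
    quarters = list(rates)
    return [curr for prev, curr in zip(quarters, quarters[1:])
            if rates[curr] >= rates[prev]]


# Invented sample: one tag per account, grouped by the quarter the deal closed.
tags = (
    [("2024-Q1", t) for t in ("bad-fit", "strong-fit", "near-fit", "strong-fit")]
    + [("2024-Q2", t) for t in ("bad-fit", "bad-fit", "strong-fit", "near-fit")]
    + [("2024-Q3", t) for t in ("strong-fit", "strong-fit", "bad-fit",
                                "strong-fit", "near-fit")]
)
rates = bad_fit_rate_by_cohort(tags)
for quarter, rate in rates.items():
    print(f"{quarter}: {rate:.0%} bad-fit")
print("Flag for review:", cohorts_not_improving(rates))
```

In this invented data the 2024-Q2 cohort is flagged because its bad-fit share rose from 25% to 50%. That is the kind of result the RevOps owner would bring back to the quarterly review.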

Practical Starting Point for SMB/Mid-Market Teams

If you have no formal ICP review process today, start with the minimum viable feedback loop:

Week 1: Create a shared Slack channel (#icp-signals) where CSMs post structured bad-fit flags using a simple format: "Flag: [account name] | fit issue: [use case mismatch / ICP mismatch / champion vulnerability] | evidence: [1-2 sentences]." Sales lead and RevOps are in the channel. No commentary threads, just the signal.

Month 1: RevOps pulls the channel logs and produces a summary: how many flags, which segment patterns are emerging. Share with VP CS and VP Sales.

Month 3: Schedule the first quarterly ICP review session. Use the bad-fit flag summary as the starting point. Come out with at least two specific ICP criteria updates.

Month 6: Automate the tagging. Build a 90-day fit signal prompt into your CS platform workflow so every account gets a structured tag, not just the ones the CSM remembers to flag.
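The Month 1 summary can start as a short script over the exported channel messages. The sketch below assumes messages follow the Week 1 format literally, with the bracketed placeholders replaced by real values. It does not call the Slack API, and the function names and sample messages are hypothetical.

```python
import re
from collections import Counter

# Matches: "Flag: <account> | fit issue: <issue> | evidence: <text>"
FLAG_PATTERN = re.compile(
    r"^Flag:\s*(?P<account>.+?)\s*\|\s*fit issue:\s*(?P<issue>.+?)\s*\|"
    r"\s*evidence:\s*(?P<evidence>.+)$",
    re.IGNORECASE | re.DOTALL,
)
ISSUES = {"use case mismatch", "icp mismatch", "champion vulnerability"}


def parse_flag(message):
    """Return a dict for a well-formed flag, or None for anything else."""
    match = FLAG_PATTERN.match(message.strip())
    if not match:
        return None
    issue = match["issue"].lower()
    if issue not in ISSUES:
        return None
    return {
        "account": match["account"],
        "issue": issue,
        "evidence": match["evidence"].strip(),
    }


def summarize(messages):
    """Count well-formed flags by fit issue; unstructured messages are skipped."""
    flags = [f for f in map(parse_flag, messages) if f]
    return Counter(f["issue"] for f in flags)


messages = [
    "Flag: Acme Co | fit issue: use case mismatch | evidence: Bought for "
    "invoicing, which is outside the core product.",
    "Flag: Globex | fit issue: champion vulnerability | evidence: Champion "
    "left in month two. No replacement sponsor.",
    "this account feels like a bad fit",
    "Flag: Initech | fit issue: use case mismatch | evidence: Core workflow "
    "unused at day 90.",
]
for issue, count in summarize(messages).most_common():
    print(f"{issue}: {count}")
```

Counting by fit issue is only the first cut. Spotting segment patterns means joining each flagged account to its segment, industry, and seat count in the CRM.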

The three questions every CSM should answer at the 90-day mark, and route to Sales if any answer is concerning:

- Is the primary use case the customer bought for actively in use?

- Is the champion still in the same role?

- Has the customer mentioned a competitor or expressed regret about switching?

That's the minimum viable ICP signal. It takes 5 minutes per account. And the signal is often a better predictor of which accounts will churn than a year's worth of product analytics.
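The three questions reduce to a short check. This sketch is illustrative only: the function and parameter names are made up, and it treats any single concerning answer as enough to route the account to Sales, as described above.

```python
def ninety_day_concerns(primary_use_case_active: bool,
                        champion_in_same_role: bool,
                        mentioned_competitor_or_regret: bool) -> list[str]:
    """Return the concerning answers; an empty list means nothing to route."""
    concerns = []
    if not primary_use_case_active:
        concerns.append("primary use case not actively in use")
    if not champion_in_same_role:
        concerns.append("champion no longer in the same role")
    if mentioned_competitor_or_regret:
        concerns.append("competitor mention or regret about switching")
    return concerns


concerns = ninety_day_concerns(
    primary_use_case_active=False,
    champion_in_same_role=True,
    mentioned_competitor_or_regret=False,
)
if concerns:
    print("Route to Sales:", "; ".join(concerns))
else:
    print("No routing needed")
```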

For a detailed look at how customer health scoring connects to this loop, Customer Health Scoring with Sales Context covers the deal-context overlay that makes health scores predictive rather than descriptive.

The CS-to-Marketing ICP Loop

The CS-to-Marketing ICP Loop is the four-stage model this article documents. Stage 1 is CS tagging accounts with fit signals at the 90-day mark. Stage 2 is routing bad-fit flags to a shared revenue review with structured evidence. Stage 3 is the quarterly ICP review session with VP CS, VP Sales, RevOps, and Marketing as named co-owners. Stage 4 is Marketing translating the synthesis into ICP filter updates, lead scoring changes, and messaging adjustments, then closing the loop back to CS on what changed.

The loop is only complete when Stage 4 information flows back to CS. Without that close, CS continues operating on a stale ICP while Marketing has already changed targeting. The result: mismatched customer expectations at onboarding and an invisible source of churn in the next cohort.

Rework Analysis: The ICP loop compounds in both directions. Teams that run the quarterly session consistently see NRR improve 8-12 points over 18 months because each quarter catches churn signals 6-9 months before they would appear in NRR data. Teams that skip the loop absorb those same signals as unexplained churn and add more CSMs to manage the symptom instead of changing what AEs close upstream, which is where the cause sits.

Quotable Nuggets:

"Bad-fit accounts churn at 2-3x the rate of strong-fit accounts and expand at half the rate, making ICP quality a direct multiplier on NRR, not just a segmentation preference." (Gainsight research on B2B SaaS customer cohorts)

"Only 23% of B2B SaaS companies have a formal process for CS teams to flag bad-fit accounts to Sales, meaning 77% are absorbing preventable churn as a cost of business rather than as a feedback loop problem." (ChurnZero survey of 600+ CS leaders)

"Even 10% of closed-won deals being bad-fit can suppress NRR by 5-8 points annually when you account for churn, low expansion, and CS time absorbed, a gap that compounds every quarter without a structured feedback loop." (Bain & Company)

Frequently Asked Questions

Who owns the ICP definition in a B2B SaaS company?

RevOps should own the document and the update process. Marketing, Sales, and CS all contribute input: Marketing brings targeting data, Sales brings close-rate data, CS brings churn cohort data. But a shared document with three owners tends to drift without a single steward. RevOps as the neutral commercial function is the right owner, accountable to NRR rather than to any individual team's performance.

How do you surface bad-fit account data to Sales without it feeling like blame?

The key is structuring the handoff as a commercial input, not a performance review. Bad-fit flags should include a specific gap (use case mismatch, ICP deviation, champion vulnerability) with 2-3 sentences of evidence, routed to Sales Ops or RevOps, not directly to the individual AE first. The quarterly ICP review session, where VP CS and VP Sales co-own the agenda, is the structural mechanism that removes blame from the dynamic. CS is presenting churn cohort data. Sales is presenting close-rate data. RevOps synthesizes. No team is on trial.

How specific should ICP fit criteria be?

Specific enough to make a binary call. "Mid-market" is too vague. "50-500 employees, in a professional services or operations function, with a documented workflow management need" is actionable. The quarterly review process will tell you which criteria actually predict churn versus which are assumptions that need to be updated. HBR's segmentation research argues that the most durable ICP criteria are built around the jobs customers need done, not just firmographic attributes, a useful reminder when your quarterly review turns up patterns that don't fit neatly into industry or size buckets.

What if Sales leadership dismisses CS feedback as complaints?

This is a VP-level alignment problem. The VP CS and VP Sales need to agree, explicitly, that CS churn cohort data is a commercial input to ICP decisions, not a performance review. If that agreement doesn't exist, the quarterly review will fail before it starts. The CRO or CEO sometimes needs to set the frame.

How long before the feedback loop shows results in NRR?

Expect a lag of roughly three to four quarters. ICP changes in Q1 affect targeting in Q2, which affects the deals that close in Q2 and Q3, which show up in NRR in Q4 or Q1 of the following year. The feedback loop is a 12-18 month investment. Teams that abandon it after one cycle because they don't see immediate NRR movement miss the structural benefit.
