Why AI Projects Fail: How to Move from Pilot to Production

Most organizations have experimented with AI. Far fewer have achieved meaningful results. The pattern is familiar: promising pilots that never scale, proof-of-concepts that prove nothing about production viability, and innovation labs that produce demos but not deployments.

The gap between AI experimentation and AI execution is where most companies get stuck. They're not failing at innovation. They're failing at the harder work of turning innovation into operational reality.

Why Pilots Don't Scale

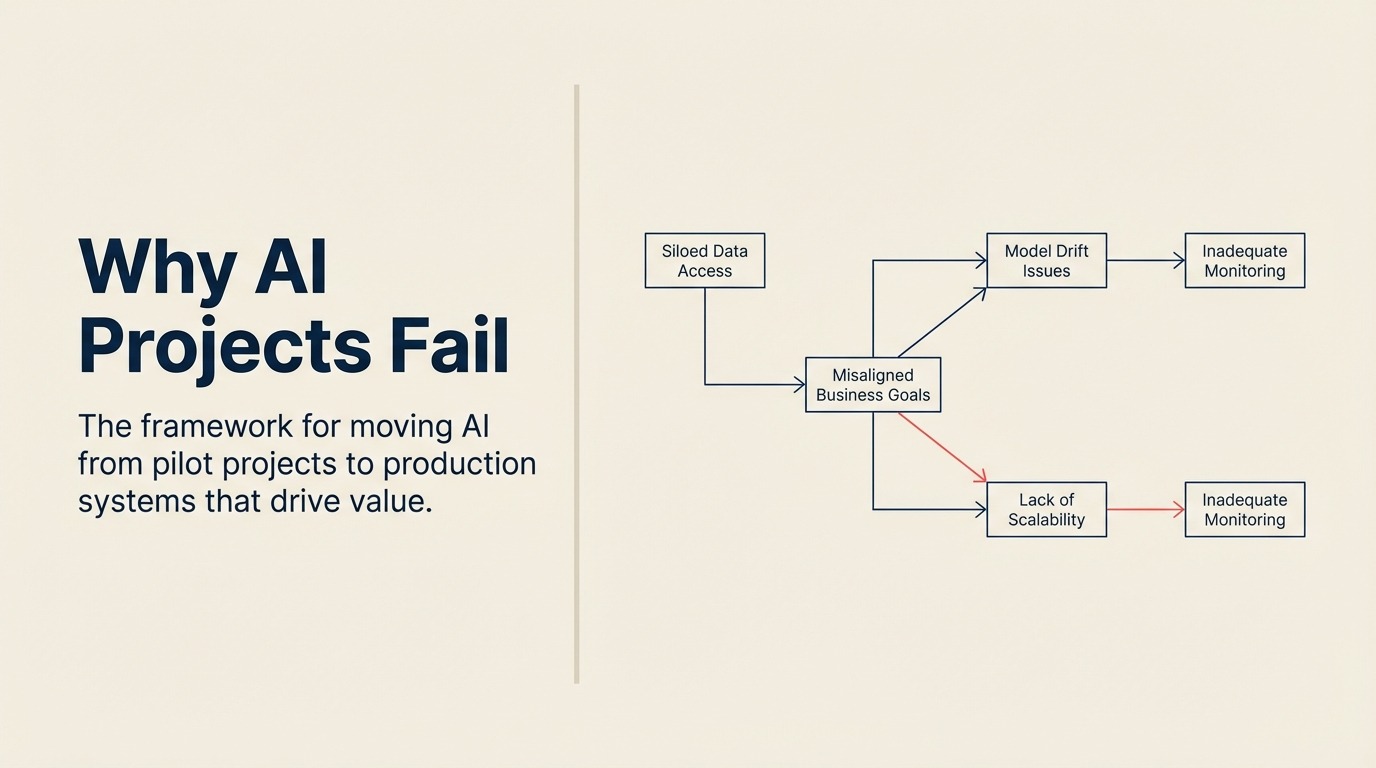

Understanding why AI initiatives stall helps prevent repeating the same mistakes:

Wrong success criteria. Pilots often prove that AI can work technically without proving it can work organizationally. A model that performs well in isolation may fail when integrated with existing systems, processes, and people.

Insufficient data infrastructure. Pilots use curated datasets. Production requires reliable data pipelines. Organizations discover their data isn't as clean, accessible, or integrated as the pilot assumed.

Missing operational capabilities. Running AI in production requires monitoring, retraining, version control, and incident response. Pilots don't build these capabilities. Scaling without them fails.

Cultural resistance. Pilots often run with enthusiasts. Scaling requires adoption by people who didn't volunteer. Without change management, new capabilities get rejected by the broader organization.

Bottom-up fragmentation. When AI initiatives emerge from individual teams without coordination, you get incompatible tools, duplicated effort, and isolated successes that can't combine into enterprise value.

The Execution Framework

Moving from experimentation to execution requires discipline in four areas:

1. Top-Down Strategic Direction

Execution starts with clarity about where AI should create value. This means:

Prioritizing use cases. Not every AI opportunity deserves investment. Identify the applications that matter most for your strategy. Focus resources on the vital few, not the interesting many.

Setting ambitious targets. Vague goals produce vague results. Define specific value targets: cost reduction percentages, revenue impact, time savings, quality improvements.

Allocating real resources. Pilots run on spare capacity. Execution requires dedicated budget, people, and leadership attention.

2. Enterprise-Wide Infrastructure

Individual teams can't each build production-grade AI infrastructure on their own. These capabilities need to be centralized:

Data platforms. Establish common data infrastructure that makes the right data accessible for AI applications across the organization.

ML operations. Build shared capabilities for deploying, monitoring, and maintaining AI models. This includes version control, automated retraining, performance monitoring, and incident response.

Governance integration. Embed governance into the development process rather than bolting it on afterward. Make it easy to do the right thing.

Talent and expertise. Create centers of excellence that support teams across the organization. Don't expect every business unit to hire its own AI specialists.

3. Execution Discipline

Moving from pilot to production requires a different management approach than running a pilot:

Phase gates with clear criteria. Define what success looks like at each stage: proof of concept, pilot, production deployment, scaled adoption. Be rigorous about evaluating whether criteria are met.

Integration planning. Every AI application connects to existing systems and processes. Plan integration early rather than leaving it until the model is finished. Include IT, operations, and affected teams from the start.

Change management. Assume people won't automatically embrace new capabilities. Invest in training, communication, and addressing concerns. Build adoption into project timelines and budgets.

Measured rollouts. Deploy incrementally. Start with lower-risk use cases or limited populations. Expand as you build confidence and address issues.
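One way to make phase gates concrete is to write the criteria down as data and check them mechanically before an initiative advances. The following is a minimal sketch, not a prescribed method: the stage names come from the list above, but the `Criterion` class, the `evaluate_gate` and `next_stage` helpers, and every metric name and threshold are hypothetical placeholders you would replace with your own.

```python
from dataclasses import dataclass

# Stage names follow the article; everything else here is illustrative.
STAGES = ["proof of concept", "pilot", "production deployment", "scaled adoption"]


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        # Compare in the direction that counts as "good" for this metric.
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def evaluate_gate(criteria, observed):
    """Return (passed, unmet_names). A missing measurement counts as unmet."""
    unmet = [
        c.name
        for c in criteria
        if c.name not in observed or not c.is_met(observed[c.name])
    ]
    return (not unmet, unmet)


def next_stage(current, passed):
    """Advance exactly one stage when the gate passed; otherwise stay put."""
    idx = STAGES.index(current)
    if passed and idx < len(STAGES) - 1:
        return STAGES[idx + 1]
    return current


# Hypothetical exit criteria for the pilot stage.
pilot_gate = [
    Criterion("weekly_active_users", 50),
    Criterion("error_rate", 0.02, higher_is_better=False),
    Criterion("integration_tests_passing", 1.0),
]

# Hypothetical measurements; the integration result was never recorded.
observed = {"weekly_active_users": 64, "error_rate": 0.035}

passed, unmet = evaluate_gate(pilot_gate, observed)
print(passed, unmet)
print(next_stage("pilot", passed))
```

Treating a missing measurement as a failed criterion is a deliberate choice in this sketch: it keeps an initiative from advancing simply because nobody measured the thing that would have blocked it.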

4. Value Tracking and Accountability

Hold AI investments to the same standards as other major initiatives:

Baseline measurement. Before deployment, measure current state. Without baselines, you can't prove improvement.

Ongoing tracking. Monitor actual results against expectations. Adjust based on what you learn.

Honest assessment. Be willing to kill initiatives that aren't delivering. Sunk costs shouldn't justify continued investment in approaches that aren't working.

Success sharing. Document and share what works. Help successful patterns spread across the organization.
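The arithmetic behind baseline measurement and ongoing tracking is simple enough to sketch. The example below assumes a single metric with a baseline captured before deployment and a target expressed as a relative improvement; the function names and the invoice-handling figures are hypothetical and only illustrate the calculation.

```python
def relative_improvement(baseline, actual, lower_is_better=False):
    """Relative change versus baseline, signed so that positive means better."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a relative change")
    change = (actual - baseline) / abs(baseline)
    return -change if lower_is_better else change


def assess(baseline, actual, target, lower_is_better=False):
    """Compare the measured improvement with the target set up front."""
    improvement = relative_improvement(baseline, actual, lower_is_better)
    return {
        "improvement": improvement,
        "target": target,
        "on_track": improvement >= target,
    }


# Hypothetical example: average invoice handling time in minutes,
# measured before deployment (12.0) and after (9.0), with a 20% target.
result = assess(baseline=12.0, actual=9.0, target=0.20, lower_is_better=True)
print(result)
```

Without the `baseline` argument there is nothing to divide by, which is the practical meaning of "without baselines, you can't prove improvement."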

Putting This Into Practice

Start here: Review your current AI initiatives. How many are in pilot status? How long have they been there? What specifically is blocking the path to production?

Common mistake: Treating AI execution like a technology project when it's primarily an organizational change challenge.

Measure success by: Percentage of AI investments that reach production and deliver measurable value, not by number of pilots launched.
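The review and the success measure above can be run against a simple inventory of initiatives. This is a rough sketch under stated assumptions: the `Initiative` record, the status labels, the 180-day cutoff for a "stalled" pilot, and the sample portfolio are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Initiative:
    name: str
    status: str  # assumed labels: "pilot", "production", "stopped"
    started: date
    delivers_measured_value: bool = False


def production_value_rate(initiatives):
    """Share of all initiatives that are in production AND deliver measured value."""
    if not initiatives:
        return 0.0
    hits = sum(
        1
        for i in initiatives
        if i.status == "production" and i.delivers_measured_value
    )
    return hits / len(initiatives)


def stalled_pilots(initiatives, as_of, max_days=180):
    """Names of pilots that have been running longer than max_days."""
    return [
        i.name
        for i in initiatives
        if i.status == "pilot" and (as_of - i.started).days > max_days
    ]


# Hypothetical portfolio for illustration only.
portfolio = [
    Initiative("demand forecast", "production", date(2023, 3, 1), True),
    Initiative("support triage", "pilot", date(2023, 1, 15)),
    Initiative("contract review", "pilot", date(2024, 2, 1)),
    Initiative("churn model", "production", date(2023, 6, 1), False),
]

as_of = date(2024, 3, 1)
print(production_value_rate(portfolio))
print(stalled_pilots(portfolio, as_of))
```

Note that the churn model counts against the rate even though it is in production: reaching production without measured value is not treated as success here.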

AI experimentation is necessary but insufficient. The organizations that pull ahead will be those that master the harder discipline of execution: turning promising technology into production systems that deliver real business value. That's where the returns actually live.