A 93% Autonomous Resolution Rate: What Demand Gen Leaders Need to Know About the New Ceiling for AI-Led Lead Qualification

Jan 6, 2026

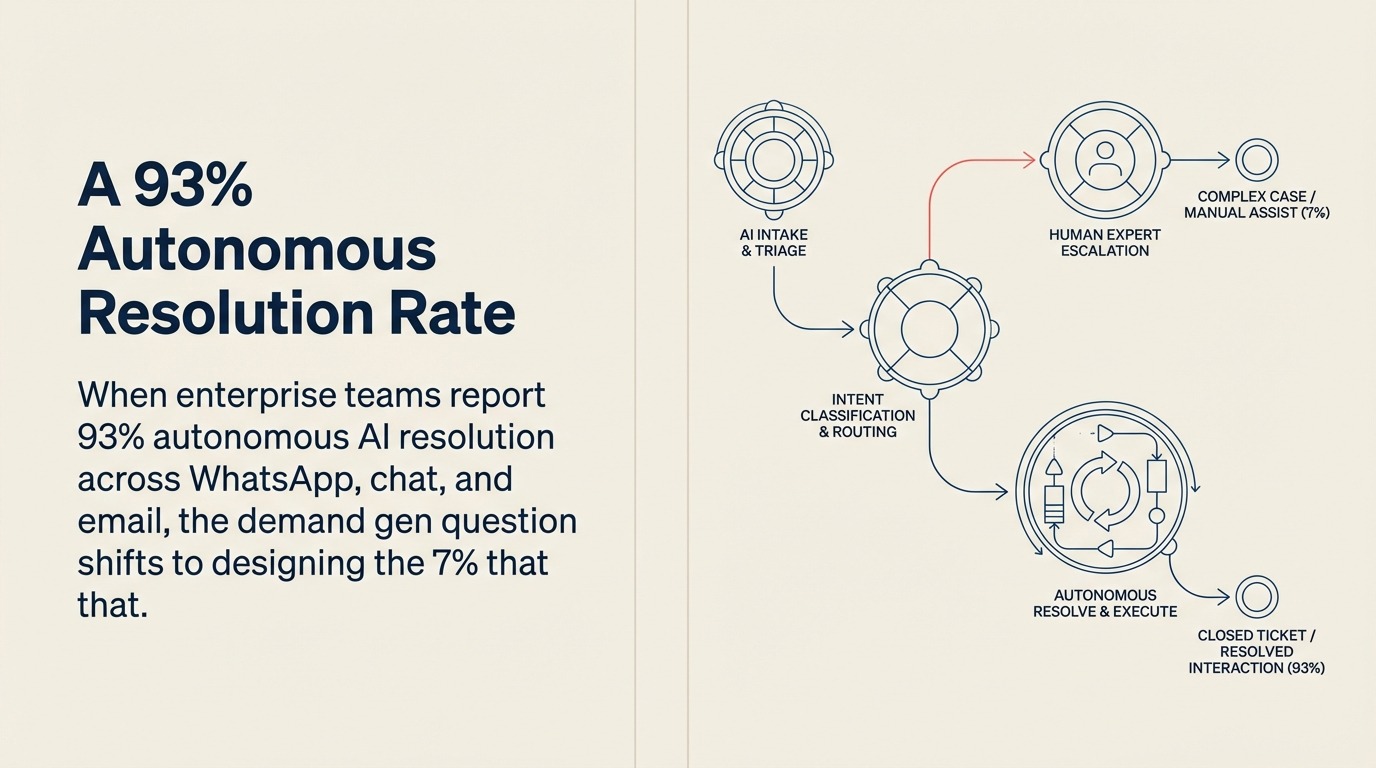

The number that matters in Intercom's $250M financing announcement, covered by The Irish Times, isn't the valuation or the headcount plans. It's the resolution rate: 67% of inbound queries handled autonomously on average, with some enterprise deployments reporting 93% across WhatsApp, web chat, email, and SMS in a unified conversation flow.

For demand gen leaders, this changes the question. The debate over whether AI can handle inbound lead qualification at scale is effectively settled. The new question is about workflow design: if you're getting close to 93% autonomous handling, what does the 7% that escalates look like, and is your team set up to make those conversations count?

That 7% is not random. It's your highest-intent, highest-complexity inbound traffic: the leads that are too important, too nuanced, or too close to a buying decision to be handled by an AI alone. Getting the escalation criteria right is where the real demand gen leverage lives. For a practical walkthrough of how to structure the handoff from AI to human rep, see the guide on chatbot-to-rep handoff in chat funnels.

What 93% Autonomous Resolution Actually Requires

The gap between a 67% average and a 93% top-decile result isn't a vendor quality gap. It's a configuration gap. Teams hitting 93% have done specific work that teams at 67% haven't.

The work breaks down into three areas. First, knowledge base depth: the AI agent's ability to handle complex qualification conversations is proportional to the quality and structure of the content it has access to. Sparse FAQs produce shallow conversations. Well-structured product, use case, and persona content produces conversations that can cover real objections.

Second, clear conversation flow logic: the agent needs defined paths for different inbound intents, not a generic "how can I help you?" opening. Teams with high resolution rates have mapped out the 8-12 most common inbound scenarios and designed conversation flows for each.

Third, and most importantly, explicit escalation criteria: the agent needs to know when to stop and hand off to a human. Teams that don't define this explicitly end up with either over-escalation (the AI hands off any conversation it isn't highly confident about, so humans see almost everything) or under-escalation (the AI handles conversations it shouldn't, producing bad experiences for high-value leads).

A Three-Tier Qualification Framework

Structuring AI-led qualification around three tiers gives demand gen teams a practical way to define both the automation playbook and the human handoff rules.

Tier 1: Fully autonomous handling. These are inbound conversations where the AI can qualify, educate, and convert without human involvement. The signals are: recognized ICP profile, standard product questions, pricing range within self-serve, no explicit request for a human, no deal complexity signals. The AI handles everything, books a demo or trial, and logs the structured record to the CRM. No human touches this conversation unless the lead re-engages with a complexity signal.

Tier 2: AI-assist with human review. These are conversations where the AI does the qualification work but a human reviews the outcome before the lead moves to the next stage. The signals are: borderline ICP fit, deal size near the threshold for enterprise treatment, mixed intent signals, or a qualification score that falls in an ambiguous range. The AI completes the conversation, flags it for review, and the lead waits for human confirmation before receiving demo scheduling access. Review should happen within the same business day.

Tier 3: Immediate human handoff. These are conversations the AI should not be handling alone. The triggers are explicit: the lead states a deal size above your enterprise threshold, mentions a competitor by name in a way that requires strategic response, expresses frustration or urgency, or directly requests to speak with a person. Any of these signals should route immediately to a live SDR with the full conversation context already available.

The Signals That Define Each Tier

The tier definitions above only work if you've mapped the specific signals that trigger each one. Here's how demand gen teams typically define them.

For Tier 1 signals: company size matches ICP, job title matches buyer persona, stated use case matches one of your documented scenarios, budget indicator is within self-serve range (often expressed indirectly through company revenue or team size), no enterprise-specific terminology in the conversation.

For Tier 2 signals: company size is at the boundary between SMB and mid-market, deal size signals are ambiguous, lead has mentioned integration requirements that could indicate enterprise complexity, sentiment is neutral to positive but not clearly high-intent.

For Tier 3 signals: explicit deal size above your enterprise threshold, competitor mentions that require strategic positioning, phrases indicating time pressure ("need this in 30 days"), explicit requests for a human, negative sentiment about previous vendor experiences, or account names that belong to your named accounts list.

These signals should be hard-coded into your conversation flow logic, not left to the AI's general judgment.
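One way to hard-code the signals is a plain rule function that checks Tier 3 triggers first, then Tier 1 requirements, and treats everything else as Tier 2. The sketch below is illustrative only: the field names, the `ENTERPRISE_DEAL_THRESHOLD` value, and the assumption that your platform already extracts these signals as booleans are all hypothetical, not part of any vendor's API.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    AUTONOMOUS = 1     # AI qualifies and books without human involvement
    HUMAN_REVIEW = 2   # AI qualifies, a human confirms before next stage
    HUMAN_HANDOFF = 3  # route to a live SDR immediately


@dataclass
class LeadSignals:
    # Hypothetical field names; map them to whatever your platform extracts.
    icp_company_size: bool = False
    persona_title_match: bool = False
    documented_use_case: bool = False
    self_serve_budget: bool = False
    enterprise_terms: bool = False
    deal_size: Optional[float] = None
    competitor_needs_positioning: bool = False
    time_pressure: bool = False
    requested_human: bool = False
    negative_sentiment: bool = False
    named_account: bool = False


# Placeholder value; set this to your own enterprise threshold.
ENTERPRISE_DEAL_THRESHOLD = 50_000


def classify_tier(s: LeadSignals) -> Tier:
    # Tier 3: any single trigger is enough to hand off.
    tier3_triggers = (
        s.deal_size is not None and s.deal_size > ENTERPRISE_DEAL_THRESHOLD,
        s.competitor_needs_positioning,
        s.time_pressure,
        s.requested_human,
        s.negative_sentiment,
        s.named_account,
    )
    if any(tier3_triggers):
        return Tier.HUMAN_HANDOFF

    # Tier 1: every fit signal must hold and no enterprise language appears.
    tier1_requirements = (
        s.icp_company_size,
        s.persona_title_match,
        s.documented_use_case,
        s.self_serve_budget,
        not s.enterprise_terms,
    )
    if all(tier1_requirements):
        return Tier.AUTONOMOUS

    # Everything ambiguous or borderline goes to human review.
    return Tier.HUMAN_REVIEW
```

Checking Tier 3 first means a named account or an explicit request for a human always wins, even when every Tier 1 signal is also present. Defaulting to Tier 2 keeps ambiguous leads in front of a reviewer rather than letting them fall through to full automation.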

Scaling MQL Volume Without Proportional Headcount

The demand gen argument for AI-led qualification isn't just about conversation quality. It's about volume economics. The lead routing automation and lead response time articles in the lead management library cover the SDR-side mechanics that AI qualification is designed to replace.

The traditional MQL ceiling is defined by SDR capacity. If each SDR can handle 40-60 qualified conversations per week and you want 500 MQLs per month, dividing the target by per-rep output gives you a headcount number. When that headcount number exceeds your hiring budget, MQL volume stalls.
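As a rough illustration of that arithmetic, the sketch below turns the 40-60 conversations per week and the 500 MQL target into a headcount figure. The conversation-to-MQL rate is not given in the article; the 25% used here is purely an assumed placeholder, as are the function and parameter names, so substitute the rate from your own funnel.

```python
import math


def sdrs_needed(target_mqls_per_month: int,
                conversations_per_sdr_per_week: int,
                conversation_to_mql_rate: float) -> int:
    """Headcount required to reach a monthly MQL target."""
    # Convert weekly conversation capacity to a monthly figure (52 weeks / 12 months).
    conversations_per_month = conversations_per_sdr_per_week * 52 / 12
    # MQLs one SDR produces per month at the assumed conversion rate.
    mqls_per_sdr = conversations_per_month * conversation_to_mql_rate
    # Round up: a fractional SDR still means another hire.
    return math.ceil(target_mqls_per_month / mqls_per_sdr)


# Assumed 25% conversation-to-MQL rate (illustrative, not from the article).
low_capacity = sdrs_needed(500, 40, 0.25)   # 12 SDRs at 40 conversations/week
high_capacity = sdrs_needed(500, 60, 0.25)  # 8 SDRs at 60 conversations/week
```

Under these assumptions the target needs somewhere between 8 and 12 SDRs, and doubling the target doubles the headcount. That linear relationship is the ceiling being described.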

AI qualification breaks that ceiling. An agent running well-designed Tier 1 and Tier 2 flows can handle thousands of concurrent conversations, qualify them consistently, and produce structured CRM output at a fraction of the cost of equivalent human qualification work. The SDR team's attention shifts to Tier 3, the conversations where human judgment creates real value, rather than being consumed by routine qualification.

For demand gen leaders, this creates an opportunity to decouple MQL volume from SDR headcount growth. That's a significant efficiency argument, and it's one worth presenting to finance with specific numbers from your current funnel.

What to Define Before Deploying AI Qualification

If you're planning to deploy or upgrade an AI qualification system in the next quarter, four decisions need to be made before the first conversation goes live.

Define your tier boundaries precisely. Write down the specific signals for each tier before building any flows. Ambiguous boundaries produce inconsistent routing and bad lead experiences.

Map your 8-12 core inbound scenarios. Not every inbound conversation is unique. Most fall into a small number of recognizable patterns: pricing questions, competitive comparisons, integration questions, use case fit questions, and a few others specific to your product. Design flows for each scenario before going live.

Establish your CRM output schema. Every AI conversation should produce a defined set of fields in your CRM: qualification tier, stated use case, company profile data collected, deal size signal, next action. If you can't describe the CRM output before the system goes live, you won't be able to use the data productively afterward.
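A minimal sketch of such a schema, assuming you serialize each conversation outcome to JSON before pushing it to the CRM. The class name, field names, and validation rules are hypothetical; the five fields mirror the ones listed above, and the actual push to your CRM is left out because it depends on the vendor.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class QualificationRecord:
    qualification_tier: int   # 1, 2, or 3
    stated_use_case: str
    next_action: str          # e.g. "book_demo", "await_review", "route_to_sdr"
    company_profile: dict = field(default_factory=dict)  # data collected in chat
    deal_size_signal: Optional[str] = None                # None if never stated

    def __post_init__(self) -> None:
        # Reject records the downstream workflow could not act on.
        if self.qualification_tier not in (1, 2, 3):
            raise ValueError("qualification_tier must be 1, 2, or 3")
        if not self.next_action:
            raise ValueError("next_action is required")


def to_crm_payload(record: QualificationRecord) -> str:
    # Stable key order makes payloads easier to diff and audit.
    return json.dumps(asdict(record), sort_keys=True)


record = QualificationRecord(
    qualification_tier=2,
    stated_use_case="inbound support deflection",
    next_action="await_review",
    company_profile={"employees": 180, "industry": "SaaS"},
    deal_size_signal="near enterprise threshold",
)
payload = to_crm_payload(record)
```

Validating at construction time means a malformed record fails when the conversation ends, not weeks later when someone tries to report on the data.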

Set your review SLA for Tier 2. Tier 2 conversations are high-intent leads waiting for human review. A multi-day review lag erases the conversion advantage you gained from fast AI qualification. Decide the SLA (same business day is the standard) and make sure it's operationalized in your SDR workflow, not just documented.
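To make a same-business-day SLA something a queue or alert can check, it helps to compute an explicit deadline for each flagged lead. The sketch below is one possible interpretation under stated assumptions: business hours of 09:00 to 17:00, Monday to Friday, no holiday calendar, and naive datetimes in the team's local time. Flags raised after close or on a weekend roll to the end of the next business day.

```python
from datetime import date, datetime, time, timedelta

# Assumed business hours; adjust to your team's working day.
BUSINESS_OPEN = time(9, 0)
BUSINESS_CLOSE = time(17, 0)


def _is_business_day(d: date) -> bool:
    # Monday=0 ... Friday=4; holidays are not modeled in this sketch.
    return d.weekday() < 5


def review_deadline(flagged_at: datetime) -> datetime:
    """Latest time a Tier 2 lead should be reviewed by."""
    day = flagged_at.date()
    # After close or on a weekend, the SLA moves to the next business day.
    if not _is_business_day(day) or flagged_at.time() >= BUSINESS_CLOSE:
        day += timedelta(days=1)
        while not _is_business_day(day):
            day += timedelta(days=1)
    return datetime.combine(day, BUSINESS_CLOSE)


def is_overdue(flagged_at: datetime, now: datetime) -> bool:
    return now > review_deadline(flagged_at)
```

A lead flagged Tuesday at 10:00 is due by Tuesday 17:00, while one flagged Friday at 18:00 is due by Monday 17:00. A lead flagged minutes before close gets very little review time under this rule, so some teams may prefer a cutoff earlier in the afternoon.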

Getting these four decisions right before deployment is what separates teams that hit 93% autonomous resolution from teams that plateau at 67% and wonder why. For the broader strategic case this data supports, see the CMO business case for conversational AI investment. The two articles are designed to be read together.

Learn More

- Meta Added the Lead Objective to WhatsApp Ads — Configuring the ad campaigns that feed leads into your Fin AI qualification flow

- WhatsApp Calls and Chats Are Now in One Platform — How unified voice and chat connects to the 7% escalation tier your AI qualification doesn't resolve

- Lead Capture Automation — Automating lead routing from conversational AI qualification to CRM and SDR workflows

- Intercom vs. Drift in B2B Chat — Platform comparison for teams choosing between AI qualification options