Joint Lead Scoring: Building a Scoring Model Fit for Both Marketing and Sales

Marketing spent three months building the scoring model. Interviewed sales, analyzed closed-won data, set up the MAP rules. Then shipped it.

Sales looked at the first batch of high-scoring leads, called a few, and went back to working their own list. The score, they said, didn't match what they actually saw in the account.

This is how most lead scoring projects end. Not with a bad model. With a model nobody trusts.

The problem usually isn't the math. It's the process. When marketing builds a scoring model and hands it to sales, sales has no ownership in the outcome. They didn't decide what signals matter. They didn't validate the weights. When the model produces a lead they wouldn't have called, they have no reason to defer to it.

A jointly built model (one where both teams contributed to the weights, tested it against real closed-won data, and signed off on the thresholds) is the only kind that survives contact with the sales team. Before building the model, both teams should have agreed on the shared ICP framework. The scoring weights are only as good as the underlying ideal customer definition.

The Scoring Model Nobody Uses

Before we get to what to build, it's worth being honest about why most scoring models fail. Forrester's overview of individual interest scoring frames the core challenge well: a score is only useful when the teams it's meant to serve trust the inputs.

Problem 1: Marketing defines the model unilaterally. Sales wasn't in the room when the weights were set. They have no context for why a pricing page visit is worth 35 points but a webinar attendance is worth 20. When the model produces counterintuitive results, they don't trust it enough to override their gut.

Problem 2: The model over-weights engagement over fit. Email opens, blog visits, social clicks: these inflate scores for people who are interested in content but will never buy. A marketing manager at a 10-person company who reads every email you send scores higher than a VP of Operations at a 300-person target company who visited your pricing page once. The high scorer isn't a better lead.

Problem 3: No decay rules. A lead who downloaded a guide 14 months ago still has that score attached. Old engagement signals don't reflect current intent. Without decay, old high scores clutter the top of the priority queue.

Problem 4: The model never gets recalibrated. Markets change. Your ICP shifts. New channels generate different quality leads. A scoring model built on last year's closed-won data can be actively wrong by the time it's been running for 18 months.

Joint ownership fixes Problem 1 structurally: sales helps build the model, so they have context for the weights. The other problems require specific design decisions, which is what the rest of this article covers. If you're not sure whether your company needs formal scoring at all, the article on when you don't need lead scoring covers the cases where simpler qualification beats a scoring system.

The Three-Dimension Model

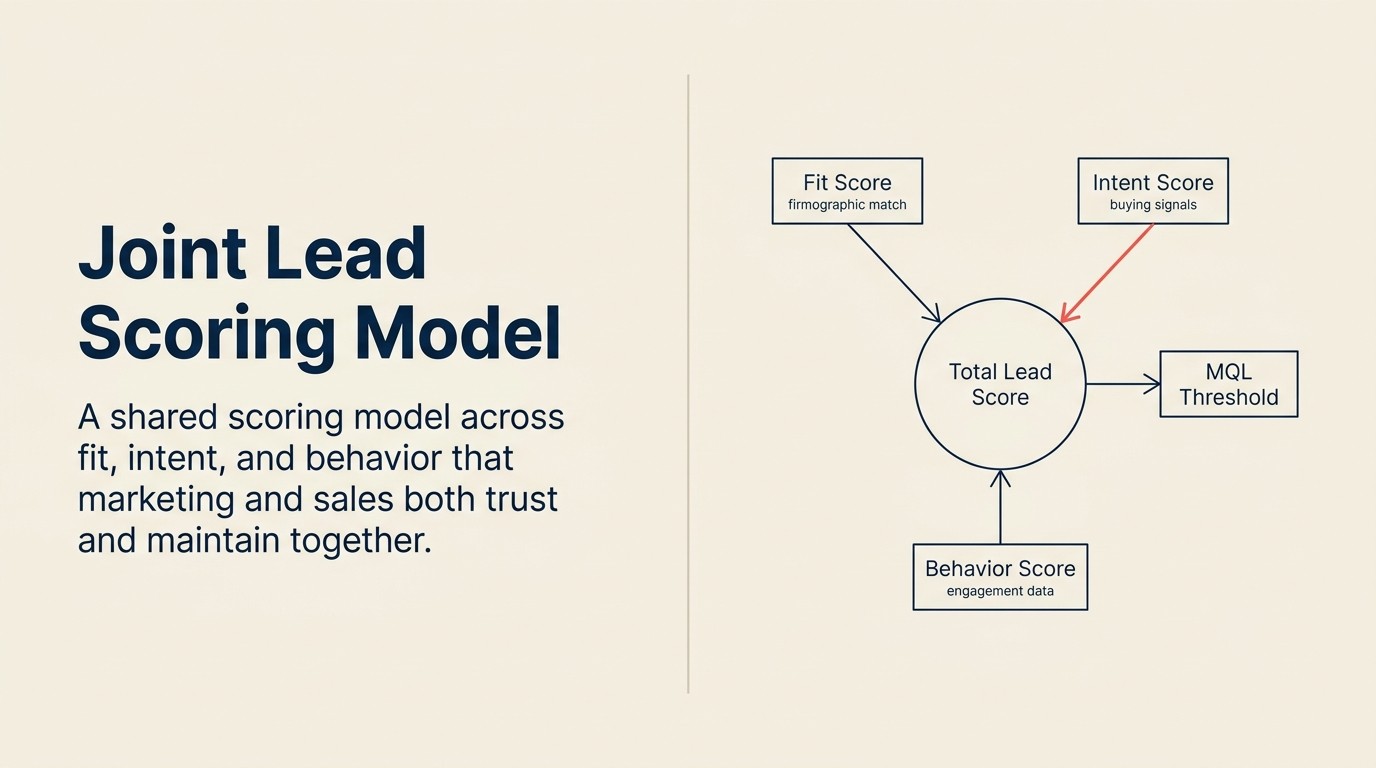

The Fit + Intent + Behavior Scoring Triangle

The Fit + Intent + Behavior Scoring Triangle is a three-dimension lead scoring model in which each axis measures a distinct quality of a lead and can be scored, reported, and calibrated independently.

- Fit (who they are): Static firmographic and role signals. ICP match, title seniority, tech stack, company size. Does not decay quickly.

- Intent (what they're researching now): Active buying-window signals. Demo requests, pricing visits, G2 category research, Bombora topic surges. Decays within days to weeks.

- Behavior (how engaged they are with you): Engagement depth signals. Webinar attendance, content downloads, trial usage. Decays over 30-90 days.

A lead needs to cross minimum thresholds on at least two dimensions before reaching MQL status. High behavior alone does not qualify a lead. High fit alone does not qualify a lead. The triangle requires at least two legs to stand.

Key Facts: Lead Scoring Effectiveness

- Companies that use lead scoring see a 77% increase in lead generation ROI versus companies that don't, according to MarketingSherpa research on B2B qualification practices.

- Sales teams that participated in designing the lead scoring model are 3x more likely to use lead scores in prioritization, per a SiriusDecisions study on cross-functional alignment.

- Lead scoring models that include behavioral decay rules outperform static models by 28% in SQL-to-opportunity conversion rate, based on analysis by Aberdeen Group.

A scoring model built on three separate dimensions (fit, intent, and behavior) is more transparent and maintainable than a single composite score because each dimension is understandable on its own.

Fit score: Who the company and person are. Static or slow-moving signals that reflect whether this account is in your target market.

Intent score: What they're actively researching. Signals that indicate a buying window is open or approaching.

Behavior score: What they've done with your content and product. Engagement signals that indicate depth of interest.

Each dimension can be scored separately, reported separately, and calibrated separately. When a lead has high fit but low intent, that's a useful signal that's lost in a single composite number.

The fit vs. intent scoring article goes deeper on the tradeoffs between these two dimensions. This article focuses on how to build all three together.

Fit Scoring: What to Include

Fit signals answer: "Is this the kind of company and person we should be selling to?"

Firmographic signals (company-level):

| Signal | Points (example) | Source |
|---|---|---|
| Company size: 50-500 employees | +20 | Form field, Clearbit, ZoomInfo |
| Target industry match | +15 | Form field, enrichment |
| Geography: served region | +10 | IP, form field |
| Company size: 501-2000 employees | +10 | Enrichment |
| Company size: outside target range | -15 | Enrichment |

Role/title signals (person-level):

| Signal | Points (example) | Source |
|---|---|---|
| VP or C-level in relevant function | +25 | Form field, LinkedIn enrichment |
| Director in relevant function | +15 | Form field, LinkedIn enrichment |
| Manager in relevant function | +5 | Form field |
| IC in relevant function | 0 | Form field |
| Outside target function | -10 | Form field |

Technology stack signals: If your product integrates with or replaces specific tools, a contact whose company uses those tools is a better fit than one who doesn't.

| Signal | Points (example) | Source |
|---|---|---|
| Uses target integration partner | +15 | Clearbit, G2, enrichment |
| Uses tool you replace | +10 | Clearbit, self-reported |
| No relevant tech stack overlap | 0 | N/A |

Negative fit signals (disqualifiers): These are critical and often skipped.

| Signal | Points | Effect |
|---|---|---|
| Known competitor domain | -100 | Effectively disqualifies |
| Student email / academic domain | -50 | Suppresses |
| Outside served geography | -30 | Reduces score significantly |
| Company too small (< 10 employees) | -20 | Deprioritizes |

The negative signals matter as much as the positive ones. A scoring model without disqualifiers produces high scores for people who will never buy.
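To make the interaction between positive signals and disqualifiers concrete, here is a minimal Python sketch of a fit score using the example point values from the tables above. The `Lead` fields and the `fit_score` function are hypothetical names for illustration, tech stack signals are left out for brevity, and the choice to stack the "too small" penalty on top of the out-of-range penalty is an assumption you may want to change.

```python
from dataclasses import dataclass

# Example point values taken from the tables above; replace with your own.
TITLE_POINTS = {"vp_or_c_level": 25, "director": 15, "manager": 5, "ic": 0}


@dataclass
class Lead:
    employees: int
    industry_match: bool
    in_served_region: bool
    in_target_function: bool
    seniority: str = "ic"  # one of the TITLE_POINTS keys
    is_competitor: bool = False
    is_academic_email: bool = False


def fit_score(lead: Lead) -> int:
    score = 0

    # Company size bands.
    if 50 <= lead.employees <= 500:
        score += 20
    elif 501 <= lead.employees <= 2000:
        score += 10
    else:
        score -= 15
    # Assumption: the "too small" penalty stacks on the out-of-range penalty.
    if lead.employees < 10:
        score -= 20

    if lead.industry_match:
        score += 15
    score += 10 if lead.in_served_region else -30

    # Title points only count inside the target function.
    score += TITLE_POINTS[lead.seniority] if lead.in_target_function else -10

    # Disqualifiers.
    if lead.is_competitor:
        score -= 100
    if lead.is_academic_email:
        score -= 50
    return score


vp = Lead(employees=300, industry_match=True, in_served_region=True,
          in_target_function=True, seniority="vp_or_c_level")
competitor = Lead(employees=300, industry_match=True, in_served_region=True,
                  in_target_function=True, seniority="vp_or_c_level",
                  is_competitor=True)
print(fit_score(vp))          # 70
print(fit_score(competitor))  # -30
```

The same profile drops from 70 to -30 once the competitor flag is set, which is the behavior the disqualifier table is meant to produce.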

Intent Scoring: Third-Party and First-Party Signals

Intent signals answer: "Are they actively looking to solve this problem right now?"

First-party intent signals come from your own properties. These are the most reliable because you've directly observed the behavior.

| Signal | Points (example) | Why |
|---|---|---|
| Demo request submitted | +50 | Explicit buying signal |
| Pricing page visit (3+ pages deep) | +35 | Active evaluation |
| ROI calculator used | +30 | Commercial intent |
| Competitor comparison page | +25 | Evaluation mode |
| Free trial started | +40 | Strong product intent |
| Pricing page visit (single) | +15 | Research signal |

Third-party intent signals come from data providers who track research behavior across multiple sites: G2, Bombora, TechTarget, and similar platforms. Gartner's guide to B2B lead scoring with intent signals shows that companies using intent data alongside firmographic fit criteria are twice as likely to achieve a 10% or higher top-of-funnel conversion rate.

| Signal | Points (example) | Source |
|---|---|---|
| Topic surge: relevant category (Bombora) | +30 | Bombora |
| G2 profile views for your category | +25 | G2 Buyer Intent |
| Category research spike | +20 | TechTarget, Bombora |

Third-party intent data is powerful but requires budget and integration work. If you don't have it, first-party signals alone can build a working model. Proxy signals work too: if a contact has consumed 4+ pieces of bottom-of-funnel content in 30 days, that's a reasonable intent signal even without external data.
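The proxy rule above is easy to express in code. This is a rough Python sketch, assuming you can export bottom-of-funnel content views as `(content_id, view_date)` pairs. The function name, the parameters, and the decision to count distinct pieces rather than repeat views are all illustrative assumptions.

```python
from datetime import date, timedelta


def has_proxy_intent(views, today, min_pieces=4, window_days=30):
    """views: iterable of (content_id, view_date) for bottom-of-funnel content."""
    cutoff = today - timedelta(days=window_days)
    # Count distinct pieces so re-reading one guide does not trigger the signal.
    recent_ids = {cid for cid, viewed in views if cutoff <= viewed <= today}
    return len(recent_ids) >= min_pieces


today = date(2024, 6, 30)
views = [
    ("roi-guide", date(2024, 6, 1)),
    ("pricing-faq", date(2024, 6, 10)),
    ("case-study", date(2024, 6, 20)),
    ("comparison", date(2024, 6, 25)),
]
print(has_proxy_intent(views, today))  # True
```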

How to weight intent relative to fit: For most inbound-led B2B motions, fit sets the bar and intent sets the priority among the leads that clear it. A perfect-fit company doing active research is more valuable than a perfect-fit company that downloaded one blog post. But intent without fit is still noise, so weight it accordingly.

Behavior Scoring: Engagement Depth

Behavior signals answer: "How engaged is this person with us specifically?"

Content engagement:

| Signal | Points (example) | Notes |
|---|---|---|
| Webinar attended (live) | +20 | Live attendance > recorded view |
| Guide or ebook download | +10 | |
| Blog visit (3+ pages) | +8 | |
| Webinar registered, no-show | +3 | Weak signal |
| Newsletter open | +2 | Very weak; don't over-weight |
| Email click (link to content) | +5 | Stronger than open |

Product engagement (if applicable):

| Signal | Points (example) | Notes |
|---|---|---|
| Trial account created | +30 | Strong intent |
| Feature used in trial | +15 per feature | |
| Trial exceeded usage limits | +25 | Capacity signal |

Decay rules (non-negotiable):

Old behavior signals should decay. A lead who visited your blog 14 months ago is not currently engaged. Running their old behavior score forward poisons the model.

| Signal age | Score multiplier |
|---|---|
| 0-30 days | 100% |
| 31-60 days | 75% |
| 61-90 days | 50% |
| 91-180 days | 25% |
| 181+ days | 0% (or cap contribution) |

Most MAP platforms support decay rules natively. If yours doesn't, run a monthly batch job that recalculates scores with an aging factor applied.
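If you do end up writing that batch job, the core of it is small. Below is a minimal Python sketch that applies the decay table above to a list of behavior events. The `(points, event_date)` shape and both function names are assumptions for illustration, not any MAP vendor's API.

```python
from datetime import date


def decay_multiplier(age_days: int) -> float:
    """Map signal age in days to the multiplier from the decay table."""
    if age_days <= 30:
        return 1.0
    if age_days <= 60:
        return 0.75
    if age_days <= 90:
        return 0.5
    if age_days <= 180:
        return 0.25
    return 0.0


def decayed_behavior_score(events, today):
    """events: iterable of (points, event_date) pairs for one lead."""
    return sum(points * decay_multiplier((today - when).days)
               for points, when in events)


today = date(2024, 7, 1)
events = [
    (20, date(2024, 6, 21)),  # webinar 10 days ago: full value
    (10, date(2024, 5, 12)),  # guide download 50 days ago: 75%
    (8, date(2023, 5, 1)),    # blog visit over a year ago: 0%
]
print(decayed_behavior_score(events, today))  # 27.5
```

Without decay the same lead would carry 38 points; with it, the stale blog visit drops out and the score reflects recent activity.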

Once you have scores for each dimension, the next question is how to combine them into a decision that sales will actually act on.

Combining the Three Dimensions

Three approaches to combining fit, intent, and behavior into a usable score:

Option 1: Composite score. Add all three dimension scores into a single number. Simple to report. Easy to compare. But it loses dimension-level visibility. You can't tell if a score of 85 is high-fit/low-intent or low-fit/high-behavior.

Option 2: Threshold logic (recommended for most teams). Define minimum thresholds per dimension. A lead only reaches MQL status if it hits minimums on at least two of three dimensions. Making fit one of the required dimensions, as in the example below, prevents a highly engaged but wrong-fit lead from scoring into MQL.

Example threshold logic:

- MQL requires: Fit score ≥ 30 AND (Intent score ≥ 20 OR Behavior score ≥ 25)

- Hot MQL: Fit ≥ 40 AND Intent ≥ 30
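The example thresholds translate directly into a few lines of Python. This sketch uses the numbers from the list above. The function name and the status labels are illustrative, and checking the hot tier first is a design choice so a lead that meets both rules gets the stronger label.

```python
def classify_lead(fit: int, intent: int, behavior: int) -> str:
    """Apply the example threshold rules; the hot tier is checked first."""
    if fit >= 40 and intent >= 30:
        return "hot_mql"
    if fit >= 30 and (intent >= 20 or behavior >= 25):
        return "mql"
    return "not_mql"


print(classify_lead(fit=45, intent=35, behavior=0))   # hot_mql
print(classify_lead(fit=30, intent=0, behavior=25))   # mql
print(classify_lead(fit=10, intent=50, behavior=50))  # not_mql
```

The last case is the engaged but wrong-fit lead: high intent and behavior scores do not rescue a fit score below the minimum.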

Option 3: Matrix model. Report fit and intent as separate axes. High-fit/high-intent = immediate outreach. High-fit/low-intent = nurture. Low-fit/high-intent = handle carefully. Low-fit/low-intent = suppress. This gives sales the most useful context but requires UI support to be practical.
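If your CRM cannot render a two-axis view, the matrix can still be approximated as a routing field. The Python sketch below maps the four quadrants to the actions named above. The cutoffs for "high" fit and "high" intent are placeholder assumptions, since the article does not prescribe them.

```python
def matrix_action(fit: int, intent: int,
                  high_fit: int = 40, high_intent: int = 30) -> str:
    """Return the quadrant action; cutoffs are placeholders to calibrate."""
    is_high_fit = fit >= high_fit
    is_high_intent = intent >= high_intent
    if is_high_fit and is_high_intent:
        return "immediate outreach"
    if is_high_fit:
        return "nurture"
    if is_high_intent:
        return "handle carefully"
    return "suppress"


print(matrix_action(fit=55, intent=45))  # immediate outreach
print(matrix_action(fit=55, intent=5))   # nurture
print(matrix_action(fit=10, intent=45))  # handle carefully
```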

For teams with a MAP and CRM that can display multiple score fields, threshold logic with separate dimension reporting gives you the best of both approaches.

Quotable: Companies with mature lead management and scoring practices generate 50% more sales-ready leads at 33% lower cost per lead than companies using ad hoc qualification, according to Forrester Research on B2B demand generation.

But the right combination logic only works if sales trusts the model enough to act on it. That requires a different kind of work entirely.

Getting Sales Buy-In on the Model

Joint ownership isn't a checkbox. It requires specific steps.

Step 1: Interview 3-5 sales reps before building. Ask them: "When you look at a new lead, what's the first thing you check? What makes you decide to call immediately versus next week? What have been your best leads in the past 6 months, and what did they have in common?"

Their answers tell you which signals matter in practice. Not which signals marketing thinks matter.

Step 2: Share draft weights and ask for challenges. Present the scoring logic with the weights. Invite disagreement. "Marketing gave pricing page visits 35 points. Does that match what you see? When someone visits pricing, how often does that lead to a real conversation?"

Where sales pushes back, either explain the data that supports the weight or adjust it. Both outcomes strengthen the model. The MQL-to-SQL score thresholds need to be set jointly for the same reason. They're the output of the model, and sales has to trust what those thresholds produce.

Step 3: Validate against known-good and known-bad leads. Pull 20-30 closed-won deals from the past 12 months. Run the scoring logic against their lead records and check their scores. If your best deals would have scored low under the model, you have a calibration problem. Same exercise with closed-lost: top closed-lost leads should score lower than top closed-won. The lead qualification frameworks in the lead management collection offer additional perspectives on what signals actually distinguish closeable leads from noise.
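The Step 3 check can be run as a quick back-test before the model goes anywhere near sales. The Python sketch below assumes you have already scored the historical lead records and have two lists of scores. Comparing medians and counting won deals below the MQL threshold are one reasonable way to summarize the result, not the only one, and the function name and sample numbers are made up for illustration.

```python
from statistics import median


def calibration_check(won_scores, lost_scores, mql_threshold):
    """Summarize how well scores separate closed-won from closed-lost."""
    won_median = median(won_scores)
    lost_median = median(lost_scores)
    missed = sum(1 for s in won_scores if s < mql_threshold)
    return {
        "won_median": won_median,
        "lost_median": lost_median,
        # Share of real wins the model would have kept out of MQL.
        "won_below_threshold": missed / len(won_scores),
        "separates": won_median > lost_median,
    }


won = [80, 70, 65, 90, 40]   # hypothetical scores for closed-won leads
lost = [30, 55, 20, 60, 45]  # hypothetical scores for closed-lost leads
print(calibration_check(won, lost, mql_threshold=60))
```

A high `won_below_threshold` share is the calibration problem described above: your best deals would not have reached sales under the model.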

Step 4: Run a pilot before going live. Score a batch of new leads under the new model. Sales reviews the top 20 scored leads and rates whether they agree with the prioritization. Calibrate based on feedback before rolling out broadly.

This process takes 4-6 weeks. It's slower than just building the model yourself. But it's the difference between a model that gets adopted and one that gets ignored.

Rework Analysis: The four-step buy-in process (interview reps, share draft weights, validate against closed-won data, run a pilot) typically takes 4-6 weeks and is the single highest-leverage investment in a scoring model's long-term adoption. Teams that skip to "let's just launch and adjust later" consistently report the same outcome 3-6 months in: sales stops looking at scores and works their own list. The pilot step matters most. Scoring 20 real leads with sales review before go-live surfaces calibration errors before they damage trust.

Score Governance: Who Changes the Weights

A scoring model without governance decays. Someone adds a point for opening a newsletter because it was easy. Marketing updates a product page and the click behavior changes but nobody updates the scoring logic. Weights that were right six months ago are wrong now.

Governance doesn't need to be bureaucratic. It needs to be clear. The marketing-sales SLA template is a good place to document the governance rules alongside the broader alignment commitments both teams sign off on.

| Decision | Owner | Approval required |
|---|---|---|
| Weights for existing signals | Marketing Ops | VP Marketing sign-off; notify VP Sales |
| Adding new signal category | Marketing Ops | Both VP Marketing and VP Sales sign-off |
| Changing MQL/SQL thresholds | Marketing Ops | Both VPs sign-off; document change in SLA |
| Disqualifier changes | Marketing Ops | Both VPs sign-off |
| Emergency fix (model clearly broken) | Marketing Ops | Verbal approval from both VPs; formal sign-off within 1 week |

Quarterly review meeting: 45 minutes. Pull conversion data by dimension score. Review any signals that were added or changed. Confirm the model is still calibrated against current closed-won data. Both VPs attend.

Governance keeps the model from decaying. But you still need a process to catch the moments when the model quietly stops predicting. That's what validation is for.

Testing and Validating the Model

A model validated against closed-won data is the minimum standard for trusting it.

Correlation analysis: For each signal in the model, calculate the correlation between having that signal and eventually closing as won. Do this per segment (SMB vs. mid-market) if your ICP varies. Signals with low correlation to closed-won should be weighted lower or removed. Signals with high correlation should be weighted higher.

This analysis doesn't require a data science team. A spreadsheet with closed-won vs. closed-lost data and signal presence/absence columns will surface the correlations you need.
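For teams that prefer a script to a spreadsheet, the same analysis fits in a few lines of Python. This sketch uses the phi coefficient, which is the Pearson correlation for two yes/no variables. The article does not name a specific statistic, so treat that choice, the column names, and the sample rows as assumptions.

```python
from math import sqrt


def phi_coefficient(rows, signal):
    """Correlation between a boolean signal column and the boolean 'won' column."""
    n11 = sum(1 for r in rows if r[signal] and r["won"])
    n10 = sum(1 for r in rows if r[signal] and not r["won"])
    n01 = sum(1 for r in rows if not r[signal] and r["won"])
    n00 = sum(1 for r in rows if not r[signal] and not r["won"])
    denom = sqrt((n11 + n10) * (n01 + n00) * (n11 + n01) * (n10 + n00))
    # A signal that every deal (or no deal) has carries no information.
    return 0.0 if denom == 0 else (n11 * n00 - n10 * n01) / denom


# Hypothetical export: (demo_request, newsletter_open, won)
raw = [
    (True, True, True), (True, True, True), (True, False, True),
    (True, True, False), (False, False, True), (False, True, False),
    (False, False, False), (False, False, False),
]
deals = [{"demo_request": d, "newsletter_open": n, "won": w} for d, n, w in raw]

print(phi_coefficient(deals, "demo_request"))     # 0.5
print(phi_coefficient(deals, "newsletter_open"))  # 0.0
```

In this toy data, demo requests track wins and newsletter opens do not, which would argue for keeping the first weight high and cutting the second. With only 20-30 deals per segment, read the numbers as directional rather than precise.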

Quarterly recalibration:

- Pull last 90 days of SQLs

- Check SQL-to-opportunity conversion rate by score band (60-69, 70-79, 80-89, 90+)

- If conversion rates don't correlate with score bands, the model needs recalibration

- Pull any signals that were added in the last quarter and check their individual correlation with conversion

- The conversion rate analysis from the pipeline team feeds directly into this check. Score bands that don't correlate with stage progression show up clearly there.
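The band check in the list above can be sketched as a short Python routine. It assumes an export of `(score, became_opportunity)` pairs for the last 90 days of SQLs and treats each band as starting at its lower bound, so a score of 70 falls in the 70 band. The function names and sample data are illustrative.

```python
BAND_FLOORS = [60, 70, 80, 90]  # lower bounds; 90 means "90+"


def band_floor(score):
    """Return the lower bound of the band a score falls in, or None below 60."""
    eligible = [floor for floor in BAND_FLOORS if score >= floor]
    return max(eligible) if eligible else None


def conversion_by_band(sqls):
    """sqls: iterable of (score, became_opportunity). Returns {floor: rate}."""
    totals, wins = {}, {}
    for score, converted in sqls:
        floor = band_floor(score)
        if floor is None:
            continue
        totals[floor] = totals.get(floor, 0) + 1
        wins[floor] = wins.get(floor, 0) + (1 if converted else 0)
    return {floor: wins[floor] / totals[floor] for floor in sorted(totals)}


def rates_rise_with_score(rates):
    """True when each band converts at least as well as the band below it."""
    ordered = [rates[floor] for floor in sorted(rates)]
    return all(lower <= higher for lower, higher in zip(ordered, ordered[1:]))


sqls = [(62, False), (65, False), (68, True), (72, True), (75, False),
        (85, True), (88, True), (83, False), (95, True)]
rates = conversion_by_band(sqls)
print(rates)
print(rates_rise_with_score(rates))  # True
```

If `rates_rise_with_score` comes back false on a reasonable sample size, that is the recalibration trigger described in the list.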

Annual full rebuild: Every 12-18 months, rebuild the model from scratch using the most recent 12 months of closed data. Gartner research on predictive lead scoring confirms that models rebuilt on fresh data consistently outperform those left static. Market conditions change, and even small shifts in your ICP or channel mix can make 18-month-old weights actively misleading. Because the degradation is gradual, a model built on stale data can underperform without anyone noticing.

The MQL definition framework and the scoring thresholds should be reviewed together during annual rebuilds, since they're designed to be aligned.

The Bottom Line

The scoring model both teams built is worth more than the perfect model only marketing trusts.

That doesn't mean you should build a bad model to get buy-in. It means the process of involving sales in building the model (the interviews, the weight challenges, the pilot validation) makes the model better as well as more adopted.

Sales knows things about what makes a lead good that marketing can't see in engagement data. Marketing knows things about what generates intent signals that sales doesn't observe. A jointly built model combines both.

Build it together. Validate it against real data. Govern it explicitly. Recalibrate quarterly.

The goal isn't a score. It's a shared language for prioritization, one both teams use because both teams trust it.

Frequently Asked Questions

What is joint lead scoring?

Joint lead scoring is a scoring model built collaboratively by marketing and sales, where both teams contributed to the signal weights, validated the model against closed-won data, and signed off on the MQL/SQL thresholds. The "joint" element isn't ceremonial. It means sales has ownership in the outcome, which is the only way a scoring model survives contact with a real sales team.

What are the three dimensions of lead scoring?

The three dimensions are fit (who the company and person are, based on firmographic and role signals), intent (whether they're actively researching a solution right now, including pricing visits, demo requests, and third-party intent surges), and behavior (how engaged they are with your content and product). Each dimension decays at a different rate: fit is nearly static, intent decays within days to weeks, and behavior decays over 30-90 days.

Why do most lead scoring models fail?

Most scoring models fail because marketing built them unilaterally (so sales doesn't trust or use them), they over-weight engagement signals over fit (so the "engaged but never buys" persona scores too high), they have no decay rules (so old signals pollute current scores), and they're never recalibrated against current closed-won data. Joint ownership addresses the first problem; the other three require deliberate design decisions.

How should fit and intent be weighted relative to each other?

It depends on your sales motion. Inbound-led motions: Fit 50%, Intent 35%, Behavior 15% (fit is the differentiator since inbound already pre-filters for some intent). ABM motions: Fit 25%, Intent 60%, Behavior 15% (you've pre-screened accounts on fit, so intent is the trigger). PLG motions: Fit 25%, Intent 25%, Product behavior 50% (trial usage predicts conversion better than either traditional signal).
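As a rough illustration of how those splits change the outcome, the Python sketch below applies each motion's weights to the same lead. It assumes each dimension score has already been normalized to a 0-100 scale, which the raw point tables in this article do not do on their own. The dictionary keys and function name are hypothetical.

```python
# Percent weights per motion, taken from the answer above.
MOTION_WEIGHTS = {
    "inbound": {"fit": 50, "intent": 35, "behavior": 15},
    "abm": {"fit": 25, "intent": 60, "behavior": 15},
    "plg": {"fit": 25, "intent": 25, "behavior": 50},
}


def weighted_score(motion: str, scores: dict) -> float:
    """Blend 0-100 dimension scores using the motion's percent weights."""
    weights = MOTION_WEIGHTS[motion]
    return sum(weights[dim] * scores[dim] for dim in weights) / 100


lead = {"fit": 80, "intent": 40, "behavior": 20}
print(weighted_score("inbound", lead))  # 57.0
print(weighted_score("abm", lead))      # 47.0
print(weighted_score("plg", lead))      # 40.0
```

The same strong-fit, moderate-intent lead ranks highest under the inbound weights and lowest under PLG, where product behavior dominates.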

What are decay rules in lead scoring, and why do they matter?

Decay rules automatically reduce a lead's score as behavioral signals age. A lead who visited your pricing page 14 months ago is not currently in a buying window, but without decay, that old signal still contributes to their score and can push them to the top of the priority queue. Standard decay: signals at full value for 0-30 days, dropping to 75% at 31-60 days, 50% at 61-90 days, 25% at 91-180 days, and 0% after 180 days.

How do we validate that our scoring model is working?

Pull your last 90 days of SQLs. Check SQL-to-opportunity conversion by score band (60-69, 70-79, 80-89, 90+). If higher score bands don't convert at meaningfully higher rates, the model needs recalibration. Also run the closed-won vs. closed-lost analysis: your top 50 won deals should have scored materially higher than your top 50 lost deals. If they don't, the model isn't predicting outcomes.

