Handoff Scorecard: Did Sales Hand Off Well? (Template + Scoring Rubric)

Here's what happens without a handoff standard: a CSM receives a new account, opens the CRM, sees a company name and a close date, and starts from scratch. She schedules a discovery call with the customer to learn things the AE already knew. The customer wonders why they're being re-discovered. Onboarding starts two weeks late. The first health score measurement misses key context. By month three, a preventable gap has become a structural problem.

CS teams accept incomplete handoffs not because they prefer them, but because they have nothing to push back against. "This handoff is incomplete" requires a definition of complete. Without a standard, every handoff is debatable and every gap is subjective. The CSM either accepts the incomplete record and works around it, or raises a complaint that goes nowhere because Sales leadership has no objective measure of the problem either. Forrester's postsale customer lifecycle research identifies the initiation phase (the window immediately after Closed-Won) as the highest-risk stage for information loss between selling and retaining teams.

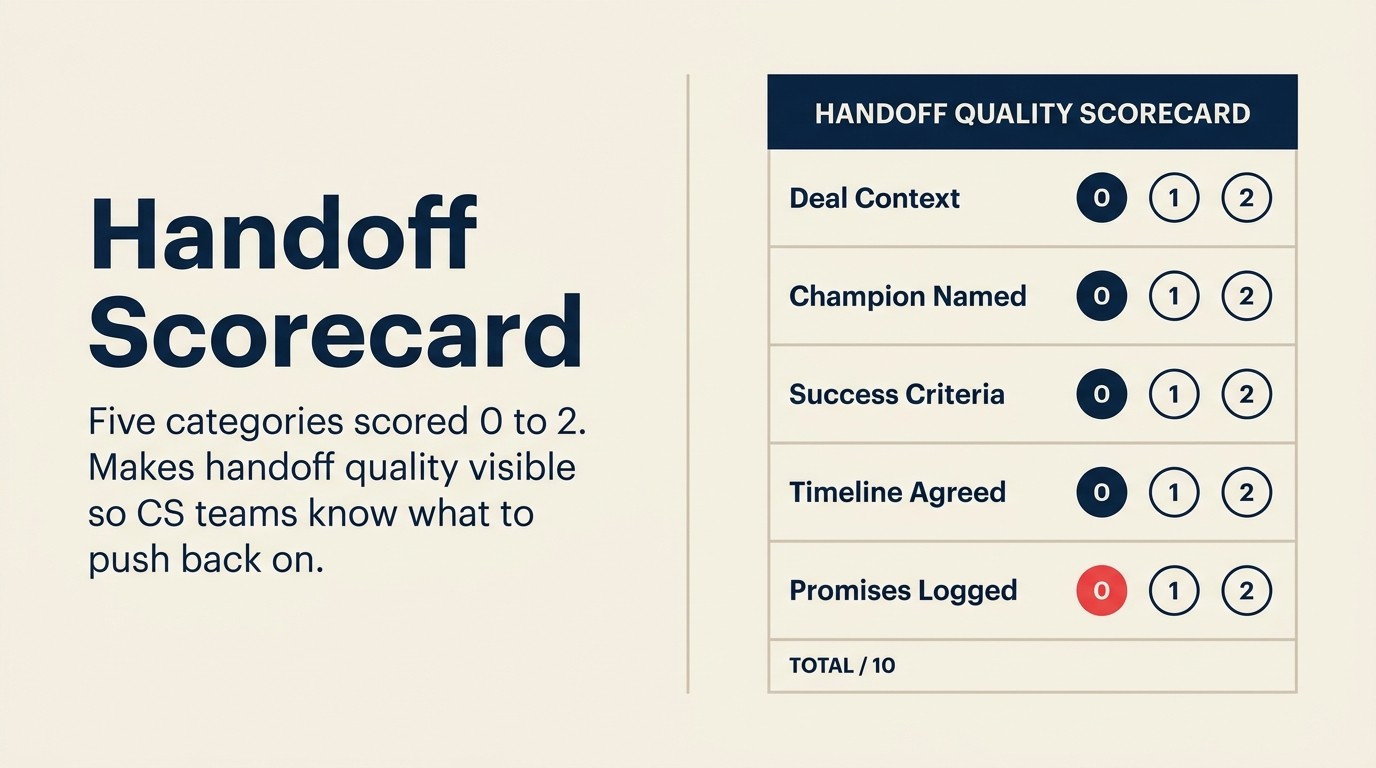

A handoff scorecard fixes this. It makes quality visible, not as a judgment on individual AEs, but as a measurable signal that both teams can track, trend, and improve over time.

Why a Scored Handoff Standard Changes Behavior

Most handoff checklists exist without a scoring mechanism. A checklist tells the CSM what should be there. A scorecard tells both teams whether it is there, and by how much. The distinction matters because it shifts the conversation from "did you do the handoff?" (yes/no, always yes) to "how well did the handoff score?" (0-40, with a published rubric defining each level).

Rework Analysis: The threshold at which handoff quality produces measurable NRR impact appears to be completeness across all five categories, not depth within any single one. Accounts with complete five-category handoffs show 24% higher NRR at 12 months than accounts with partial handoffs, per Gainsight's platform benchmark data. But accounts missing any single category, even just CRM Hygiene, show NRR closer to the partial-handoff baseline than the complete-handoff baseline. The implication is that the scorecard's value isn't in identifying weak AEs; it's in ensuring that no single category becomes the consistently skipped one. Category 4 (Expectation Record) is the most commonly incomplete category and the one with the highest downstream NRR impact when absent.
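A total score alone can hide a skipped category, so a team might pair it with a per-category coverage check. The sketch below is a minimal illustration that assumes category totals are stored as a Python dict. The function name and the rule that a zero category total counts as "missing" are hypothetical choices, not part of the scorecard itself.

```python
# Category maxima from the scorecard. Storing a handoff as a dict of
# category totals is an illustrative assumption.
CATEGORY_MAX = {
    "Deal Context": 10,
    "Stakeholder Map": 8,
    "Technical Fit": 8,
    "Expectation Record": 8,
    "CRM Hygiene": 6,
}


def missing_categories(category_scores: dict[str, int]) -> list[str]:
    """Return the categories that scored 0, i.e. were skipped entirely.

    Counting only a zero total as "missing" is a hypothetical rule; a team
    could use a stricter cut-off, such as under half of the category maximum.
    """
    return [name for name in CATEGORY_MAX if category_scores.get(name, 0) == 0]


handoff = {
    "Deal Context": 10,
    "Stakeholder Map": 8,
    "Technical Fit": 8,
    "Expectation Record": 0,  # skipped entirely
    "CRM Hygiene": 6,
}

print(sum(handoff.values()))        # 32
print(missing_categories(handoff))  # ['Expectation Record']
```

This handoff totals 32 of 40, yet it contains no expectation record at all. A total-only view would hide exactly the gap the analysis above identifies as the most costly.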

Quotable benchmark: Only 31% of B2B SaaS companies have a formal, scored handoff quality standard. The other 69% rely on informal expectations that AEs interpret differently, making handoff quality subjective, unmeasurable, and unimprovable at scale. (Forrester, Revenue Operations research)

Quotable benchmark: Accounts with complete five-category handoffs show 24% higher NRR at 12 months than accounts with partial handoffs. Companies that implement a formal handoff quality standard reduce time-to-first-value by 18% on average. (Gainsight, customer success benchmark data, mid-market SaaS)

Quotable benchmark: The average CSM spends 4-6 hours per new customer filling in context gaps the AE held at close but didn't transfer. That's a process failure, not a product failure. At a fully-loaded CSM cost of $80-120/hour, that's $320-$720 of invisible cost per account, before the downstream churn risk is factored in. (Forrester, post-sale operations research)
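The cost range above is simple multiplication. A short Python sketch makes the arithmetic explicit so a team can plug in its own numbers; the function name and the 50-accounts-per-quarter volume are illustrative assumptions, not figures from the benchmark.

```python
def gap_filling_cost(hours: float, hourly_rate: float) -> float:
    """CSM cost of reconstructing context the AE held at close but didn't transfer."""
    return hours * hourly_rate


# Benchmark ranges: 4-6 hours per account at $80-120/hour fully loaded.
low = gap_filling_cost(4, 80)    # 320
high = gap_filling_cost(6, 120)  # 720
print(f"${low:,.0f}-${high:,.0f} per account")  # $320-$720 per account

# Hypothetical volume for illustration only: 50 new accounts per quarter.
accounts_per_quarter = 50
print(f"${low * accounts_per_quarter:,.0f}-${high * accounts_per_quarter:,.0f} per quarter")
# $16,000-$36,000 per quarter
```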

Key Facts: Handoff Quality and NRR

- Accounts with complete five-category handoffs show 24% higher NRR at 12 months than accounts with partial handoffs, per Gainsight's platform benchmark data.

- Companies that implement a formal handoff quality standard reduce time-to-first-value by 18% on average, per Gainsight's customer success benchmark data across mid-market SaaS.

- Only 31% of B2B SaaS companies have a formal, scored handoff quality standard. The rest rely on informal expectations that AEs interpret differently, per Forrester's Revenue Operations research.

What the Scorecard Measures

The scorecard assesses five categories that together define a complete handoff. Each category addresses a distinct gap in what CS needs to onboard a customer without independent research.

Deal Context captures the qualitative story of the sale: why the customer bought, what drove urgency, what almost killed the deal. CS can't read the CRM notes and recover this information if the AE didn't write it down. It's the category most likely to be entirely absent.

Stakeholder Map documents who the humans are: champion, executive sponsor, day-to-day contact, and anyone who objected. Without this, the CSM starts the customer relationship without knowing who to call when something goes wrong, or who the champion is that they need to keep close.

Technical Fit records what the product needs to actually run in this customer's environment: integrations required, any custom configuration committed during the sale, and the implementation timeline the customer is expecting. This is where timeline over-promises live and where CS gets surprised at kickoff. See preventing sales over-promises for the pre-close review process that catches these gaps before they reach the handoff.

Expectation Record documents what was promised. Success criteria from discovery. Any non-standard commitments. The agreed go-live milestone. This category is the primary prevention mechanism for the "Sales over-promised, CS under-delivers" failure mode.

CRM Hygiene confirms the basic data quality of the account record: are contacts complete, are notes updated, is the opportunity correctly staged. Bad CRM hygiene creates downstream reporting problems and forces CS to repair data before they can manage the account.

Here's the complete template, ready to copy into your CRM or shared doc.

The Handoff Scorecard (Copy-Paste Ready)

Score each line item: 0 = missing, 1 = partial, 2 = complete

Category 1: Deal Context (Max 10 points)

| # | Line Item | Score (0 / 1 / 2) | Notes |
|---|---|---|---|
| 1.1 | Use case documented: what problem the customer is solving and why this product was chosen | | |
| 1.2 | Business problem stated: the specific pain or goal that drove urgency (not just "they needed a CRM") | | |
| 1.3 | Why they bought now: timing context (trigger event, budget cycle, executive push) | | |
| 1.4 | What almost killed the deal: the main objection or obstacle that required resolution | | |
| 1.5 | Competitor considered: which alternative was actively evaluated, and what tipped the decision | | |
| | Category 1 Total | /10 | |

Scoring guidance:

- 2: Complete: Specific, written, actionable. CSM can read this and know what to say at kickoff.

- 1: Partial: Present but vague. "Customer wanted better reporting" scores 1 on business problem. "Customer's Q3 board review required pipeline visibility they couldn't get from their current tool" scores 2.

- 0: Missing: Field blank or populated with placeholder text ("see email").

Category 2: Stakeholder Map (Max 8 points)

| # | Line Item | Score (0 / 1 / 2) | Notes |
|---|---|---|---|
| 2.1 | Champion name and role: the internal advocate who pushed for the purchase | | |
| 2.2 | Executive sponsor identified: the senior stakeholder who approved budget and has renewal authority | | |
| 2.3 | Day-to-day contact: the person the CSM will work with most frequently post-kickoff | | |
| 2.4 | Anyone who objected: internal stakeholders who pushed back and why (still relevant post-close) | | |
| | Category 2 Total | /8 | |

Scoring guidance:

- 2: Complete: Name, title, contact information, and relationship context all present.

- 1: Partial: Name present but no contact info, or role without context ("there was a skeptic in IT" scores 1; "Mark Chen, IT Director, concerned about data security, resolved with our SOC2 documentation" scores 2).

- 0: Missing: Category not populated.

Category 3: Technical Fit (Max 8 points)

| # | Line Item | Score (0 / 1 / 2) | Notes |
|---|---|---|---|
| 3.1 | Integration requirements listed: every system this customer expects to connect, with names and versions | | |
| 3.2 | Custom configuration committed: any non-standard setup promised during the sale | | |
| 3.3 | Implementation timeline agreed: the specific go-live date or timeline range the customer is expecting | | |
| 3.4 | Customer IT readiness noted: known constraints on the customer side (review processes, IT bottlenecks, data migration requirements) | | |
| | Category 3 Total | /8 | |

Scoring guidance:

- 2: Complete: Specific and actionable. "Salesforce integration via REST API, customer IT review required, 3-week lead time; go-live target April 30" scores 2 on items 3.1 and 3.3.

- 1: Partial: Present but vague. "They use Salesforce" scores 1 on integration requirements.

- 0: Missing: No technical context documented.

Category 4: Expectation Record (Max 8 points)

| # | Line Item | Score (0 / 1 / 2) | Notes |
|---|---|---|---|
| 4.1 | Success criteria from discovery: what the customer told us they need to achieve to call this a success | | |
| 4.2 | Non-standard promises flagged: any commitment made during the sale that isn't in the standard product or onboarding scope | | |
| 4.3 | Agreed go-live milestone: the specific first outcome or go-live definition both sides acknowledged | | |
| 4.4 | Pricing or scope exceptions noted: any discounts, inclusions, or carve-outs the CS team needs to honor | | |
| | Category 4 Total | /8 | |

Scoring guidance:

- 2: Complete: Success criteria are specific and measurable; promises are explicitly noted with context. "Customer success = first pipeline report running in CRM by Day 30, used by 3+ reps" scores 2.

- 1: Partial: Directionally correct but underspecified. "They want better pipeline visibility" scores 1.

- 0: Missing: No expectation record. This is the highest-risk missing category.

Category 5: CRM Hygiene (Max 6 points)

| # | Line Item | Score (0 / 1 / 2) | Notes |
|---|---|---|---|
| 5.1 | Contact records complete: champion, exec sponsor, and day-to-day contact have verified email and phone | | |
| 5.2 | Notes field updated post-close: CRM notes include at minimum a close summary and any relevant context from the final negotiation | | |
| 5.3 | Opportunity stage correct: marked Closed-Won with close date, ARR, and contract term populated | | |
| | Category 5 Total | /6 | |

Scoring guidance:

- 2: Complete: All fields populated and accurate.

- 1: Partial: Opportunity marked Closed-Won but ARR or close date missing; contact email present but phone number blank.

- 0: Missing: Core fields blank or incorrect.

Total Score and Rubric

| Category | Max Points | Your Score |
|---|---|---|
| Deal Context | 10 | |
| Stakeholder Map | 8 | |
| Technical Fit | 8 | |
| Expectation Record | 8 | |
| CRM Hygiene | 6 | |
| Total | 40 | |
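If scores are captured outside a CRM (for example, in a spreadsheet export), the roll-up from line items to category totals and the 40-point total can be sketched in a few lines of Python. This is a minimal sketch assuming scores are stored as a flat dict keyed by line-item number; treating unscored items as 0 (missing) is an assumption a team may want to change.

```python
# Line items per category, mirroring the scorecard (2 points max each).
LINE_ITEMS = {
    "Deal Context": ["1.1", "1.2", "1.3", "1.4", "1.5"],
    "Stakeholder Map": ["2.1", "2.2", "2.3", "2.4"],
    "Technical Fit": ["3.1", "3.2", "3.3", "3.4"],
    "Expectation Record": ["4.1", "4.2", "4.3", "4.4"],
    "CRM Hygiene": ["5.1", "5.2", "5.3"],
}
VALID_SCORES = {0, 1, 2}  # 0 = missing, 1 = partial, 2 = complete


def category_totals(scores: dict[str, int]) -> dict[str, tuple[int, int]]:
    """Return (points, max points) per category; unscored items count as 0."""
    totals = {}
    for category, items in LINE_ITEMS.items():
        points = 0
        for item in items:
            value = scores.get(item, 0)
            if value not in VALID_SCORES:
                raise ValueError(f"{item}: score must be 0, 1, or 2, got {value}")
            points += value
        totals[category] = (points, 2 * len(items))
    return totals


# Hypothetical handoff scored by a CSM after kickoff.
scores = {
    "1.1": 2, "1.2": 1, "1.3": 0, "1.4": 2, "1.5": 2,
    "2.1": 2, "2.2": 2, "2.3": 2, "2.4": 1,
    "3.1": 1, "3.2": 2, "3.3": 2, "3.4": 0,
    "4.1": 1, "4.2": 0, "4.3": 2, "4.4": 2,
    "5.1": 2, "5.2": 2, "5.3": 2,
}
totals = category_totals(scores)
for name, (points, maximum) in totals.items():
    print(f"{name}: {points}/{maximum}")
grand_total = sum(points for points, _ in totals.values())
print(f"Total: {grand_total}/40")  # Total: 30/40
```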

Rubric:

| Score | Rating | Recommended Action |
|---|---|---|
| 36-40 | Excellent | CS can onboard independently. No required AE involvement beyond kickoff attendance. |
| 28-35 | Acceptable | 1-2 gaps identified. CSM closes the gaps asynchronously with the AE before kickoff. AE attendance at kickoff is optional. |
| 20-27 | Below Standard | Multiple gaps across categories. AE must join the kickoff call and complete missing fields within 24 hours of kickoff. Flag to CS manager. |
| Below 20 | Incomplete | Delay kickoff. Request a re-handoff from the AE. Do not start onboarding against an incomplete record. Escalate to Sales manager. |
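The rubric is a threshold lookup. A minimal Python sketch, assuming the total has already been summed to an integer between 0 and 40; the function name `rate_handoff` is hypothetical and the action strings are abbreviated from the table.

```python
def rate_handoff(total: int) -> tuple[str, str]:
    """Map a 0-40 handoff total to the rubric rating and an abbreviated action."""
    if not 0 <= total <= 40:
        raise ValueError(f"total must be between 0 and 40, got {total}")
    if total >= 36:
        return "Excellent", "CS onboards independently; AE attends kickoff only."
    if total >= 28:
        return "Acceptable", "CSM closes 1-2 gaps with the AE asynchronously before kickoff."
    if total >= 20:
        return "Below Standard", "AE joins kickoff and completes missing fields within 24 hours; flag to CS manager."
    return "Incomplete", "Delay kickoff, request a re-handoff, escalate to Sales manager."


for total in (38, 30, 24, 15):
    rating, action = rate_handoff(total)
    print(f"{total}: {rating} ({action})")
```

Checking the boundaries (36, 28, 20) explicitly is worth doing once, since off-by-one errors at band edges are the most likely way a scoring formula drifts from the published rubric.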

How to Introduce the Scorecard Without Starting a War

The first instinct of any Sales team hearing about a handoff scorecard is to read it as a grade. "CS is going to score my handoffs and report to my manager." That framing makes it adversarial from the start, and means the first conversation you have about it will be defensive.

Frame it differently. The scorecard is shared accountability infrastructure, not a performance review tool. CS wants better onboarding outcomes. Sales wants their accounts to succeed, because churned accounts affect their NRR comp and their relationships. The scorecard names what "complete" looks like so both teams have the same target.

When introducing to Sales leadership: Lead with the data on NRR correlation, not with the stories of bad handoffs. "Accounts with complete handoffs show 24% higher NRR at 12 months" is a business case. "CSMs are getting dumped on" is a complaint. One gets a response; the other gets a defensive reaction. McKinsey's research on the NRR advantage in B2B tech gives sales leadership a language they already speak: companies in the top quartile for NRR sustain meaningfully higher valuations, and the handoff is where NRR is won or lost first.

The first month of scores: Share category averages, not individual AE scores. "Our average expectation record score is 0.8 out of 2.0. This category needs attention" is a team observation. Naming individual AEs with low scores in the first month creates defensiveness before the practice has any credibility. Build the baseline first, then use individual data in 1:1 performance conversations after two to three months.
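The "0.8 out of 2.0" figure reads as an average line-item score within one category. Assuming that interpretation, a team-level average can be computed from inputs that contain no AE names at all, which keeps the first month's reporting at the team level. The data layout and function name below are hypothetical.

```python
from statistics import fmean

# Line items per category, mirroring the scorecard.
CATEGORY_ITEMS = {
    "Deal Context": ["1.1", "1.2", "1.3", "1.4", "1.5"],
    "Stakeholder Map": ["2.1", "2.2", "2.3", "2.4"],
    "Technical Fit": ["3.1", "3.2", "3.3", "3.4"],
    "Expectation Record": ["4.1", "4.2", "4.3", "4.4"],
    "CRM Hygiene": ["5.1", "5.2", "5.3"],
}


def category_average(handoffs: list[dict[str, int]], category: str) -> float:
    """Team-wide average line-item score (0-2) for one category.

    AE names are deliberately not part of the input. Unscored items count
    as 0 (missing), which is an assumption.
    """
    if not handoffs:
        raise ValueError("need at least one scored handoff")
    items = CATEGORY_ITEMS[category]
    return round(fmean(h.get(item, 0) for h in handoffs for item in items), 2)


# Hypothetical month of Category 4 scores from two handoffs.
handoffs = [
    {"4.1": 1, "4.2": 0, "4.3": 2, "4.4": 1},
    {"4.1": 1, "4.2": 0, "4.3": 1, "4.4": 0},
]
print(category_average(handoffs, "Expectation Record"))  # 0.75
```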

Involve AEs in the calibration. Before rolling out the scorecard, run one calibration session with two or three AEs and two or three CSMs scoring the same five closed deals together. The calibration session surfaces disagreements about what "complete" means for each line item, and those disagreements are more valuable than the scores. They're exactly the definition gaps the scorecard is designed to close.

Using Scorecard Data Over Time

A scorecard run once is a form. A scorecard run every month for six months is a trend line. The trend is where the useful data lives.

Monthly score trend by AE. Track average scores by AE over rolling three-month windows. A single low-scoring deal might be an anomaly. A three-month decline in category 4 (expectation record) for a specific AE is a coaching signal: this AE is either skipping expectation documentation or is consistently unclear about what they're committing to. Patterns like this feed directly into the ICP refinement loop. If specific deal types consistently produce low expectation scores, the ICP itself may need tightening.
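A rolling three-month average smooths out single anomalous deals while still showing direction. The sketch below assumes records of (AE, month number, Category 4 total) with consecutive integers standing in for calendar months; the record layout, function names, and the "every window lower than the last" definition of a decline are all hypothetical choices.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical records: (AE, month number, Category 4 total out of 8).
# Consecutive integers stand in for calendar months to keep the sketch short.
records = [
    ("AE-A", 1, 7), ("AE-A", 1, 6), ("AE-A", 2, 6),
    ("AE-A", 3, 5), ("AE-A", 4, 4), ("AE-A", 5, 3),
    ("AE-B", 1, 8), ("AE-B", 3, 7),
]


def rolling_averages(records, ae: str, window: int = 3) -> dict[int, float]:
    """Average of all of one AE's deal scores in each trailing `window`-month span."""
    by_month = defaultdict(list)
    for name, month, score in records:
        if name == ae:
            by_month[month].append(score)
    if not by_month:
        return {}
    result = {}
    for end in range(min(by_month) + window - 1, max(by_month) + 1):
        in_window = [s for m in range(end - window + 1, end + 1) for s in by_month.get(m, [])]
        if in_window:  # skip windows with no deals
            result[end] = round(fmean(in_window), 2)
    return result


def is_declining(averages: dict[int, float]) -> bool:
    """Hypothetical rule: every rolling average is lower than the one before it."""
    values = [averages[m] for m in sorted(averages)]
    return len(values) >= 2 and all(b < a for a, b in zip(values, values[1:]))


trend = rolling_averages(records, "AE-A")
print(trend)                # {3: 6.0, 4: 5.0, 5: 4.0}
print(is_declining(trend))  # True
```

Averaging every deal in the window, rather than averaging monthly averages, keeps a month with one unusual deal from carrying the same weight as a month with several.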

What a score drop signals. A team-wide drop in category 1 (deal context) might follow a change in deal process: a new proposal template, a CRM migration, or a high-velocity period where documentation was deprioritized. A drop in category 3 (technical fit) might follow a new integration launch where Sales is selling a capability CS doesn't yet have a standard implementation path for.

Tying handoff quality into AE performance conversations. Handoff quality belongs in the AE performance conversation alongside pipeline metrics and quota attainment, as a pattern-recognition tool rather than a punishment mechanism. An AE with consistently high scores is demonstrating post-close discipline that should be recognized. An AE with consistently low scores in category 4 has a documentation habit that's costing CS time and creating churn risk. HBR's 2024 research on new sales compensation models argues that tying a portion of variable pay to post-sale outcomes (including handoff quality) is one of the most reliable ways to change this behavior durably.

Integration with the Handoff Process

When the scorecard runs. Immediately after the kickoff call, within 48 hours. Not weeks later, when memory has faded and the CSM has already worked around the gaps. The kickoff is the moment when CS first validates whether the handoff packet matched reality. That's the right time to score.
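If scorecard completion is tracked, the 48-hour rule can be checked automatically. A minimal sketch assuming kickoff and scoring timestamps are available as naive `datetime` values in the same time zone; the function name and example dates are hypothetical.

```python
from datetime import datetime, timedelta

SCORING_WINDOW = timedelta(hours=48)


def scored_on_time(kickoff_at: datetime, scored_at: datetime) -> bool:
    """True if the scorecard was completed after kickoff and within 48 hours of it."""
    return kickoff_at <= scored_at <= kickoff_at + SCORING_WINDOW


kickoff = datetime(2025, 3, 3, 10, 0)
print(scored_on_time(kickoff, datetime(2025, 3, 4, 16, 30)))  # True (30.5 hours later)
print(scored_on_time(kickoff, datetime(2025, 3, 6, 9, 0)))    # False (71 hours later)
```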

Who fills it. The CSM who received the account. Not the AE. Not the CS manager. The CSM who ran the kickoff has the most direct evidence of what arrived and what was missing.

Who sees the results. CS manager and Sales manager see the results. The AE does not receive the scorecard directly, at least not initially. The communication path runs manager-to-manager first. When handoff quality becomes a standard topic in the Sales-CS leadership cadence, AEs can receive their own score trends as part of the regular feedback loop. But starting with direct AE notification before the process has credibility creates friction that sinks the whole initiative. For teams building this into a shared customer record, the scorecard fields map cleanly to the seven fields both teams must agree on.

Frequently Skipped Line Items

Five fields that AEs consistently underweight, and what CSMs can do about each:

4.2: Non-standard promises flagged. AEs skip this because documenting non-standard commitments creates accountability they'd rather avoid. Or they genuinely don't know what "non-standard" means. Solution: give AEs a two-sentence definition in the handoff form ("non-standard = anything not in the default tier the customer is buying, including timeline commitments, custom configurations, and scope inclusions") and make this field required-to-save in the CRM.

1.4: What almost killed the deal. This feels like dwelling on the negative after a win. But the main objection is also the most likely re-emergence risk in the first 90 days. Whatever nearly killed the deal is the thing the customer will be watching to see whether it resurfaces. CSMs need this context before kickoff, not after the customer brings it up again. Script for CSM: "I saw the objection log was blank. Can you give me a quick 2-minute voice note on the biggest obstacle you had to overcome?"

2.4: Anyone who objected. AEs focus on the champion, not the skeptics. But objectors are real stakeholders who will watch the implementation for validation that their concerns were right. CSMs need to know who they are. Script: "I need to know who was skeptical going into this deal, not to re-litigate it, but so I can make sure they see the win they were worried about."

3.4: Customer IT readiness. This gets skipped when the AE didn't do a technical discovery call. The CSM ends up learning about the 6-week IT review process on Day 1 of onboarding, when it should have been in the handoff. Script: "Did you ask about their IT process for new software implementations? Any constraints I should know about?"

1.3: Why they bought now. The most frequently blank field in the deal context category. AEs know the customer's stated business goal but often don't know the specific timing trigger. Knowing that the customer had a board review in Q3 where pipeline visibility was cited as a gap changes how the CSM frames the first 30 days. Script: "What happened that made this deal close this quarter instead of next quarter?"
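Which fields a given team skips most often is an empirical question, and historical scorecards can answer it. The sketch below counts how often each line item was explicitly scored 0 across past handoffs; the data layout and function name are hypothetical, and items that were never scored are not counted.

```python
from collections import Counter


def most_skipped(handoffs: list[dict[str, int]], top_n: int = 5) -> list[tuple[str, int]]:
    """Rank line items by how often they were scored 0 (missing) across handoffs."""
    zero_counts = Counter(
        item for handoff in handoffs for item, score in handoff.items() if score == 0
    )
    return zero_counts.most_common(top_n)


# Hypothetical excerpt of three scored handoffs (only a few items shown).
handoffs = [
    {"1.3": 0, "1.4": 0, "4.2": 0, "5.1": 2},
    {"1.3": 0, "2.4": 0, "4.2": 0, "5.1": 2},
    {"1.3": 1, "3.4": 0, "4.2": 0, "5.1": 0},
]
print(most_skipped(handoffs, top_n=2))  # [('4.2', 3), ('1.3', 2)]
```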

Frequently Asked Questions

How long does it take to fill out this scorecard?

A CSM who attended the kickoff call can complete the scorecard in 10-15 minutes immediately after the call. The scoring doesn't require research. It reflects what the CSM experienced during kickoff and what was or wasn't present in the handoff packet. The most time-consuming part is writing notes for partial scores, which is also the most valuable part.

Should the scorecard be run for every deal or only deals above a threshold?

Run it for every deal above your onboarding threshold (typically any deal with a dedicated CSM assignment). Below that threshold, for transactional SMB deals managed by an AM, a simplified version covering only categories 4 and 5 is sufficient. You want enough data to see patterns, which means applying the scorecard consistently to a defined deal population.

What if the AE disputes a score?

AEs will occasionally disagree with a partial or zero score on a line item they believe they completed. This is a calibration signal, not a problem. Build a 20-minute monthly calibration session where one or two disputed scores are reviewed together. The disagreement usually surfaces an ambiguous definition in the rubric that's worth tightening. Treat disputes as product feedback on the scorecard itself.

How do we implement this in a CRM?

Build each category as a set of custom fields on the opportunity or account record. The CSM fills them post-kickoff. A simple formula field rolls them up to a score, which populates a health-signal field visible to both CS and Sales managers. Most CRMs support this configuration natively. It's a 2-3 hour configuration task, not a project.
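The roll-up formula can be generated rather than typed by hand. The sketch below assumes a Salesforce-style CRM in which each line item is a Number custom field and the total is itself a formula field; every field API name is hypothetical, and other CRMs use different formula syntax. It only prints the formula text to paste into the field definitions.

```python
# Line-item IDs from the scorecard (20 items, 2 points each, 40 max).
ITEM_IDS = [
    "1.1", "1.2", "1.3", "1.4", "1.5",
    "2.1", "2.2", "2.3", "2.4",
    "3.1", "3.2", "3.3", "3.4",
    "4.1", "4.2", "4.3", "4.4",
    "5.1", "5.2", "5.3",
]


def field_name(item_id: str) -> str:
    """Hypothetical API name for a line-item Number field, e.g. "1.1" -> "Handoff_1_1__c"."""
    return "Handoff_" + item_id.replace(".", "_") + "__c"


# Total score: BLANKVALUE treats an unscored item as 0 (missing).
score_formula = " + ".join(f"BLANKVALUE({field_name(i)}, 0)" for i in ITEM_IDS)

# Rubric label, assuming the total above is saved as a formula field
# with the hypothetical API name Handoff_Score__c.
signal_formula = (
    'IF(Handoff_Score__c >= 36, "Excellent", '
    'IF(Handoff_Score__c >= 28, "Acceptable", '
    'IF(Handoff_Score__c >= 20, "Below Standard", "Incomplete")))'
)

print(score_formula)
print(signal_formula)
```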

How should we introduce the handoff scorecard to the Sales team without triggering resistance?

Lead with the business case, not the compliance framing. "Accounts with complete handoffs show 24% higher NRR" is a Sales argument. NRR affects AE comp, relationship quality, and renewal credibility. "CS is grading your handoffs" is a management argument that produces defensiveness. Run the first calibration session with two or three willing AEs and two or three CSMs scoring the same five recent deals together. The calibration surfaces disagreements about what "complete" means. Those disagreements are the real output of the first session, not the scores themselves.

What handoff score is considered acceptable before CS begins onboarding?

The rubric defines four thresholds: 36-40 (Excellent: CS onboards independently), 28-35 (Acceptable: CSM closes 1-2 gaps asynchronously before kickoff), 20-27 (Below Standard: AE joins kickoff and completes missing fields within 24 hours), and below 20 (Incomplete: delay kickoff, request re-handoff, escalate to Sales manager). A score of 28 or above is the practical minimum for beginning onboarding without risk of significant context gaps. Teams new to scoring typically discover their baseline sits in the 22-27 range, which means AE kickoff attendance and same-week gap closure become the default rather than the exception.

How often should handoff scores be reviewed and with whom?

Review category averages monthly with the Sales-CS leadership pair, not individual AE scores in the first two to three months. Build up to individual AE score trends (rolling three-month averages) in performance conversations after the baseline is established. The monthly cadence also serves as the feedback loop for whether specific deal types, integration requirements, or product areas are consistently producing low scores in particular categories. Those patterns signal training gaps or process changes, not just individual behavior.

What should the weekly cadence for maintaining scorecard hygiene look like?

The scorecard is a post-kickoff artifact, not a weekly maintenance task. The CSM scores within 48 hours of the kickoff call. After that, the score lives in the CRM record and informs two ongoing activities: (1) the monthly Sales-CS leadership review where category averages are tracked, and (2) the AE's own performance conversation where rolling score trends are discussed. Between kickoff and the next month's review, the scorecard doesn't need active maintenance. It's a point-in-time measure of handoff quality, not a dynamic health signal.

Senior Operations & Growth Strategist
