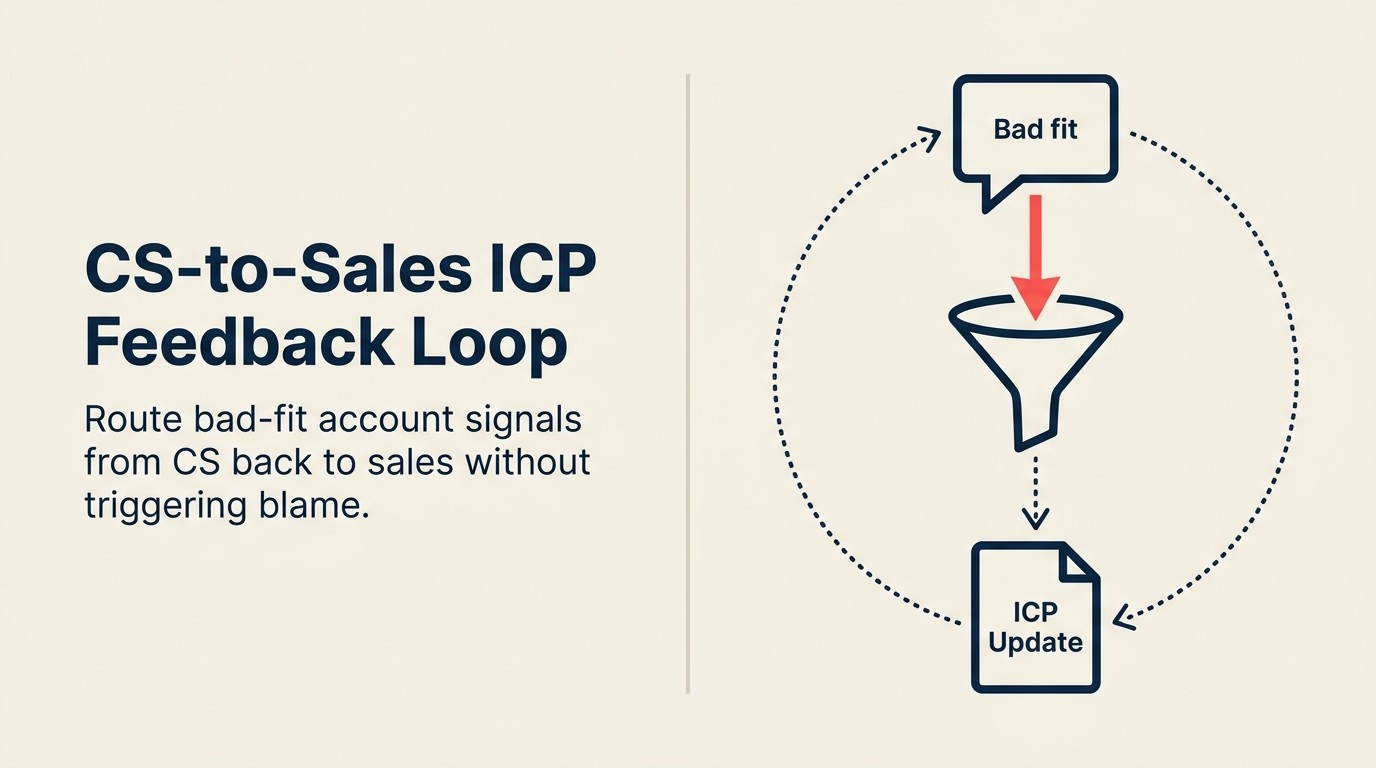

"This Deal Shouldn't Have Closed": How to Run the CS-to-Sales ICP Feedback Loop Without Blame

The account churned at month 11. The post-mortem surprised no one on the customer success manager (CSM) team. They'd known since the kickoff call that this customer was never going to reach value. The use case didn't fit. The team was too small to run the implementation. The champion had no real budget authority and was going to be gone within six months anyway.

But nobody had a clean way to say "this deal shouldn't have closed." Not to the AE (account executive) who hit quota on it. Not to the sales leader who celebrated it. Not to marketing, whose ICP (ideal customer profile) definition created the conditions for it to get into the funnel in the first place.

So the feedback doesn't flow. The same profile gets qualified again next quarter. CS burns more capacity on another account that can't reach value. And the cycle repeats: quietly, expensively, and without anyone technically being wrong. McKinsey's research on net revenue retention shows that NRR (net revenue retention) is among the strongest predictors of B2B SaaS valuation, and bad-fit churn is one of the fastest ways to suppress it. The shared ICP framework that marketing and sales built together is the place where this cycle gets broken, but only if CS data actually reaches the definition review.

This is the most avoided feedback loop in SaaS. Here's how to run it without starting a war.

The "Bad Fit" Feedback Protocol is a five-step quarterly process for routing CS churn intelligence back to sales and marketing without triggering defensiveness. It works by categorizing churns into three root causes: wrong ICP, misaligned use case, and over-promised. Each category has a defined owner, a defined fix, and a defined escalation threshold that triggers an ICP review with marketing, AE coaching, or deal approval process changes.

Why This Feedback Loop Is the Most Avoided One in SaaS

Three parties have every reason to avoid this conversation.

Sales leaders protect AE judgment and quota attainment. If a CS team says "this account was a bad fit from day one," the implicit accusation is that the AE who closed it either didn't qualify properly or pushed a deal over the line that shouldn't have gone. Neither interpretation is comfortable for a sales leader to accept publicly. And if the AE hit quota on that deal, the organization already rewarded the behavior.

CS teams don't have a defined channel to route the signal. Bad-fit observations sit in churn reports, not in sales playbooks. There's nowhere official for a CSM team lead to file a "this account was unwinnable" note that reaches the sales enablement manager, let alone triggers an ICP review. It surfaces in side conversations. It goes into a churn root cause analysis that nobody reads. It doesn't reach the people who make qualification decisions.

Marketing doesn't want the ICP challenged. The ICP definition that sales is using often comes from marketing, based on cohort analysis, research, and positioning work. When post-sale data suggests the ICP has a wrong assumption baked in, it's asking marketing to revisit a deliverable they already consider closed. Nobody loves that ask.

The organizational incentive is to close the file on a churn event, not reopen the acquisition question. And that incentive is exactly what makes the cycle repeat.

Key Facts: Bad-Fit Churn Costs

- 23% of B2B SaaS churn is attributable to poor fit at acquisition: the customer profile never matched the success criteria, according to Bain & Company's customer loyalty research.

- Companies that conduct regular post-churn ICP reviews reduce bad-fit churn by 31% within two quarters, per a SiriusDecisions study on CS-to-sales feedback loops.

- 67% of CSMs say they knew within the first 30 days of onboarding that an account was unlikely to renew, but fewer than 20% had a formal channel to report that signal back to sales, per Totango's annual CS industry survey.

The Cost of Skipping It

When the feedback loop doesn't run, the consequences compound quietly.

The same bad-fit profile gets sold again next quarter. Nothing in the lead scoring, the qualification criteria, or the discovery questions changes. AEs who want to close deals close deals. If the profile looks attractive on the surface (right company size, right title, willing to sign), it goes to CS.

CS keeps burning capacity on accounts that can't reach value. A bad-fit account typically requires more onboarding support, more escalation hours, and more check-in calls than a well-fit account. And it produces no expansion. The CS team knows this. But without a feedback channel, the best they can do is complain in internal Slack or document it in a churn report that sales leadership doesn't read. The 8 warning signs of sales-CS misalignment, especially the ones around closed-lost attribution, are almost always present in organizations where this loop isn't running.

NRR stays suppressed. Not because the product is broken, but because the wrong customers are buying it. When bad-fit accounts represent 15-20% of the new customer cohort in any given quarter, they drag NRR down regardless of how well CS manages the well-fit accounts. This is the most expensive version of the problem: it looks like a CS execution issue when it's actually an acquisition issue.

And the teams build resentment instead of shared intelligence. CS believes sales doesn't care about what happens after close. Sales believes CS blames them for everything that churns. Neither team can see the data that would help them understand which accounts are structurally unwinnable versus which are CS execution problems.
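To make the NRR drag concrete, here is a minimal arithmetic sketch. Every figure in it is a hypothetical assumption chosen for illustration (the ARR split, the 115% and 40% retention rates), and it weights the cohort by ARR rather than by account count, which is also an assumption.

```python
# Hypothetical figures for illustration only; none come from the cited research.

def blended_nrr(segments):
    """Return ARR-weighted net revenue retention across customer segments.

    Each segment is a (starting_arr, nrr) pair, where nrr is the ending ARR
    from those same customers divided by their starting ARR (1.10 = 110%).
    """
    starting = sum(arr for arr, _ in segments)
    ending = sum(arr * nrr for arr, nrr in segments)
    return ending / starting


well_fit = (800_000, 1.15)  # assumed: 80% of cohort ARR, retained at 115%
bad_fit = (200_000, 0.40)   # assumed: 20% of cohort ARR, retained at 40%

print(f"Well-fit accounts only: {blended_nrr([well_fit]):.0%}")
print(f"Blended cohort:         {blended_nrr([well_fit, bad_fit]):.0%}")
```

Under these assumed numbers, CS delivers 115% on the accounts that could succeed, yet the blended figure lands at 100%. That gap is why the problem reads as a CS execution issue on a dashboard even when the cause sits at acquisition.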

Understanding why the loop fails is only half the problem. The other half is knowing what "bad fit" actually means precisely enough to act on it.

What "Bad Fit" Actually Means: Three Distinct Categories

Not all bad-fit churn has the same root cause, and that distinction matters because each category routes to a different fix.

Category 1: Wrong ICP. The company profile was outside the parameters that predict customer success. Too small to run the implementation. Wrong industry for the core use case. Technical stack incompatibility. Team maturity level that can't support the product. These accounts never had a realistic path to value, regardless of what was sold or how well CS onboarded them.

Routes to: ICP definition update. If Category 1 accounts represent more than a defined threshold of a churn cohort (say, 25%), it triggers a formal ICP review with marketing. The criteria that define a qualified lead need adjustment.

Category 2: Misaligned use case. The company profile fit the ICP, but the problem the customer bought to solve wasn't the problem the product actually solves. Gartner's ICP framework research identifies use case alignment as one of the most commonly skipped dimensions in ICP development: companies define firmographic fit but rarely validate behavioral and situational fit before deals advance. The AE either didn't catch the mismatch or pushed through it. The customer arrives at onboarding expecting capability that doesn't exist in the way they need it.

Routes to: Sales coaching and discovery question review. The AE either lacked product knowledge or qualified too loosely on use case fit. The fix is at the qualification stage: sharper discovery questions that surface use case alignment before close, and coaching for the individual AE involved. This is also where the sales-CS alignment maturity model is useful. Teams at lower maturity levels typically can't route this kind of Category 2 signal at all.

Category 3: Over-promised. The ICP was fine and the use case was real, but the deal was closed on commitments that CS can't deliver. The AE made claims about timeline, features, integrations, or support levels that don't match reality. The customer arrives feeling misled.

Routes to: Deal approval process review and AE coaching, handled privately, not publicly. This is the most sensitive category because it implicates specific AE behavior. It needs to be handled at the individual level, not in a team meeting.

Why this taxonomy matters: without categories, the "bad fit" conversation becomes one undifferentiated pile of blame. With categories, each churn event becomes a routing decision. Where does this go? Who owns the fix? What changes next quarter?
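One way to picture "each churn event becomes a routing decision" is as a lookup table. The sketch below is illustrative only: the names `ChurnCategory`, `Route`, and `ROUTES` are hypothetical, and the `private` flag is an assumption that encodes the article's point that Category 3 is handled at the individual level.

```python
from dataclasses import dataclass
from enum import Enum


class ChurnCategory(Enum):
    WRONG_ICP = 1
    MISALIGNED_USE_CASE = 2
    OVER_PROMISED = 3


@dataclass(frozen=True)
class Route:
    fix: str        # what changes next quarter
    venue: str      # where the fix gets worked out
    private: bool   # assumption: True when the follow-up stays out of team meetings


# Hypothetical routing table summarizing the three categories above.
ROUTES = {
    ChurnCategory.WRONG_ICP: Route(
        fix="ICP definition update",
        venue="formal ICP review with marketing",
        private=False,
    ),
    ChurnCategory.MISALIGNED_USE_CASE: Route(
        fix="sharper discovery questions",
        venue="sales coaching and discovery question review",
        private=False,
    ),
    ChurnCategory.OVER_PROMISED: Route(
        fix="deal approval process review",
        venue="AE coaching",
        private=True,
    ),
}


def route(category: ChurnCategory) -> Route:
    """Look up where a tagged churn goes and what it should change."""
    return ROUTES[category]


decision = route(ChurnCategory.OVER_PROMISED)
print(decision.fix, "|", decision.venue, "| private:", decision.private)
```

The value of writing it down this way is that a churn without a category has no row to land in, which makes the missing tag visible instead of letting it dissolve into general blame.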

How to Operationalize the Feedback Without Finger-Pointing

The goal is a quarterly cohort review, not a case-by-case blame session. Here's the five-step structure:

Step 1: Tag churn root cause at close. When an account churns, the CSM team lead codes it to one of the three categories above before the record is closed. This is a required field in the CS platform, not an optional note. Without structured tagging, there's no cohort to analyze: just a list of churned accounts with free-text explanations that can't be aggregated.

Step 2: Quarterly bad-fit review session. CS brings the tagged cohort from the past quarter to a joint session with sales leadership and RevOps (revenue operations). The framing is critical: this is a pattern meeting, not a case review. You're not asking "why did Jamie close the Acme deal?" You're asking "what do these seven churns have in common that we can act on?"

Step 3: ICP update trigger. If Category 1 accounts represent more than a defined threshold of the churn cohort (say, 25%), it triggers a formal ICP review with marketing. That review should happen within 30 days of the quarterly session, not at the next annual planning cycle.

Step 4: Sales coaching trigger. If Category 3 (over-promised) exceeds a threshold the team has agreed on in advance, it routes to AE-level coaching. Not a team announcement, not a new policy, not a Slack post. The VP Sales and the specific AE's manager have a private conversation. The pattern informs future training; the individual conversation addresses the behavior.

Step 5: Close the loop with CSMs. After the quarterly session, the CSM team lead tells the CSM team what changed. If Category 1 churn triggered an ICP update, CSMs know that specific profile will now be flagged earlier in qualification. If Category 3 triggered coaching changes, CSMs know that the over-promise pattern is being addressed. The feedback loop only sustains itself if CSMs can see that their input influenced something.
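Steps 1 and 3 above can be sketched in a few lines: a required category tag at close, and a share-of-cohort check against the threshold. This is a minimal illustration, not a reference to any CS platform's API. The function names are hypothetical, the 25% value is the article's example threshold, and the `other` tag is an added assumption for churns that are not bad-fit at all.

```python
from collections import Counter

# The first three tags mirror the article's categories. "other" is an
# assumption for churns that are not bad-fit (for example, a budget cut).
CATEGORIES = ("wrong_icp", "misaligned_use_case", "over_promised", "other")
ICP_REVIEW_THRESHOLD = 0.25  # the article's example value ("say, 25%")


def close_churn_record(account, category):
    """Step 1: refuse to close a churn record without a valid category tag."""
    if category not in CATEGORIES:
        raise ValueError(f"{account}: root cause category required, got {category!r}")
    return {"account": account, "category": category}


def category_shares(cohort):
    """Return each category's share of the quarter's churn cohort."""
    if not cohort:
        return {cat: 0.0 for cat in CATEGORIES}
    counts = Counter(record["category"] for record in cohort)
    return {cat: counts[cat] / len(cohort) for cat in CATEGORIES}


def needs_icp_review(cohort, threshold=ICP_REVIEW_THRESHOLD):
    """Step 3: trigger an ICP review when Category 1 exceeds the threshold."""
    return category_shares(cohort)["wrong_icp"] > threshold


# Hypothetical quarter: seven churns, three of them tagged wrong ICP.
tags = ["wrong_icp", "wrong_icp", "wrong_icp",
        "misaligned_use_case", "over_promised", "other", "other"]
cohort = [close_churn_record(f"acct-{i}", tag) for i, tag in enumerate(tags, start=1)]

print(f"Category 1 share: {category_shares(cohort)['wrong_icp']:.0%}")
print("ICP review triggered:", needs_icp_review(cohort))
```

Making the tag a hard validation rather than a free-text note is what turns the quarter's churns into something that can be counted, and the count is what takes the trigger decision out of the realm of opinion.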

Getting the process right is table stakes. What determines whether Sales actually engages is the language you use to open the room.

The Language That Makes It Land Without Defensiveness

Framing is the difference between a productive quarterly review and a blame session that nobody agrees to schedule next quarter. Three language principles:

Frame it as a cohort pattern, not an individual failure. "Three of our seven churns this quarter shared these profile markers: teams under 25 people, no dedicated ops function, and a champion below director level" is a data observation about a pattern. "Jamie closed a bad deal" is an accusation. The first invites problem-solving. The second triggers defensiveness. Forrester's research on aligning teams around a shared ICP confirms that pattern-level reviews, not deal-by-deal audits, are what drive ICP improvements that actually stick across sales and CS.

Lead with NRR impact, not effort burden. Saying "these accounts cost CS 400 hours and produced zero expansion" is a revenue conversation. Saying "we're burned out dealing with bad-fit accounts" is a complaint. Sales leaders respond to revenue data. Present the cohort's NRR contribution (or lack of it) before you talk about CS capacity.

Invite sales to co-own the fix. "What changed in the market that made this profile look attractive to AEs this quarter?" treats the sales team as intelligence sources, not defendants. You're asking for their read on why these accounts closed, which they may have genuine insight into, rather than asking them to accept blame for something that already happened. That question usually produces useful information: a competitor created urgency, a new persona was being targeted, a promo created artificial demand. Those are fixable signals.
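The cohort-pattern framing depends on being able to say which profile markers recur across the quarter's churns. A minimal sketch of that count follows. The account records and marker names are hypothetical, loosely modeled on the example markers above, and the records deliberately carry no AE name so the output stays at the pattern level.

```python
from collections import Counter

# Hypothetical churned accounts for one quarter, each with a set of markers.
churned = [
    {"account": "acct-1", "markers": {"team_under_25", "no_ops_function", "champion_below_director"}},
    {"account": "acct-2", "markers": {"team_under_25", "no_ops_function", "champion_below_director"}},
    {"account": "acct-3", "markers": {"team_under_25", "no_ops_function", "champion_below_director"}},
    {"account": "acct-4", "markers": {"budget_cut"}},
    {"account": "acct-5", "markers": {"acquired"}},
    {"account": "acct-6", "markers": {"budget_cut"}},
    {"account": "acct-7", "markers": set()},
]


def shared_markers(accounts, min_count):
    """Return (marker, count) pairs seen in at least min_count accounts.

    Sorted by count (descending), then by name, so the output is stable.
    """
    counts = Counter(marker for acct in accounts for marker in acct["markers"])
    frequent = [(m, n) for m, n in counts.items() if n >= min_count]
    return sorted(frequent, key=lambda pair: (-pair[1], pair[0]))


for marker, count in shared_markers(churned, min_count=3):
    print(f"{count} of our {len(churned)} churns this quarter shared: {marker}")
```

The printed lines are already phrased as cohort observations, which is the form the review should open with.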

Quotable Nuggets

"A single bad-fit enterprise account costs an average of 2.3x the CS hours of a well-fit account at the same ARR tier, according to Gainsight's Customer Success Benchmark report."

Rework Analysis: Bad-fit churn rarely looks like churn in the pipeline forecast. It looks like a healthy new logo until month 9, when the health score collapses and the CSM is suddenly managing an escalation on an account that was never going to succeed. Companies that run the "Bad Fit" Feedback Protocol quarterly (tagging churn by category before the record closes, then routing that cohort to a joint review) see the pattern 2-3 quarters earlier than companies that rely on informal post-mortems. The cost difference is measured in CSM capacity freed, NRR points recovered, and ICP definitions that stop attracting the wrong buyers.

The Bridge to Marketing-Sales Alignment

This feedback loop doesn't stop at the sales-CS seam. CS is the downstream validator of ICP assumptions that originated upstream: in marketing research, in product positioning, in the criteria marketing uses to define what a qualified lead looks like.

When CS data shows that a specific ICP profile doesn't produce successful customers, that finding needs to reach marketing, not just sales. The ICP Refinement Loop: CS Feedback to Sales covers the mechanics of that handoff, but the quarterly bad-fit review is where the trigger originates.

CS is uniquely positioned to validate or challenge ICP assumptions because they're the only team that sees whether the customer who matched the ICP criteria actually became a successful customer. Marketing can optimize for profile match. Sales can optimize for deal closure. Only CS knows whether those optimized-for outcomes actually led to value.

This connects directly to the shared ICP framework that marketing and sales maintain together. The full loop runs from marketing's ICP definition, through sales qualification, through CS post-sale observation, and back to marketing's next revision. The quarterly bad-fit review is how that validation signal gets formalized. The marketing-sales alignment glossary defines the ICP criteria language that should be consistent across all three teams when that loop is working correctly.

Anti-Patterns

The annual ICP review that nobody acts on. A once-a-year ICP review is too far from the churn events to change behavior in the next quarter. By the time the annual review happens, the churn data is six to twelve months old, the AEs who closed those deals may have moved on, and the market conditions that made those profiles look attractive have changed. Quarterly cadence is the minimum viable feedback frequency.

CS churn reports that stop at "product fit" without surfacing acquisition-stage root causes. Most CS platform churn reports categorize churns by what happened during the customer lifecycle: product adoption failure, support frustration, budget cut. They rarely trace back to what happened before the customer signed. A churn tagged as "product fit issue" may actually be a Category 1 bad-fit churn that was inevitable from qualification. Without the three-category taxonomy, that distinction gets lost.

Running the feedback loop in a Slack channel. Asynchronous, informal channels produce informal information. When the quarterly bad-fit review is "CS team lead posts a summary in #revenue-ops and waits for replies," nothing happens. The session needs a date on the calendar, RevOps in the room, and a document that gets updated with decisions. The structure is the process.

What Rework Makes Easier

When deal records in the CRM and CS account records share a schema, the churn root cause tag and the deal profile live in the same place. RevOps can run a report that joins "Category 1 churn" with "ICP fit score at close" without manually cross-referencing two platforms. The quarterly session runs from a shared dashboard rather than a manually assembled spreadsheet. For the architectural detail behind this, see Shared Customer Record Architecture.
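The join described above can be sketched in plain Python. This is an illustration under stated assumptions, not Rework's actual schema or API: the field names (`account_id`, `category`, `icp_fit_score`), the 0-100 score scale, and the sample records are all hypothetical.

```python
from statistics import mean

# Hypothetical deal records keyed by account_id, with the ICP fit score
# captured at close (assumed 0-100 scale).
deals = {
    "a1": {"icp_fit_score": 40, "segment": "smb"},
    "a2": {"icp_fit_score": 50, "segment": "smb"},
    "a3": {"icp_fit_score": 80, "segment": "mid_market"},
    "a4": {"icp_fit_score": 70, "segment": "mid_market"},
}

# Hypothetical churn records tagged with a root cause category.
churns = [
    {"account_id": "a1", "category": "wrong_icp"},
    {"account_id": "a2", "category": "wrong_icp"},
    {"account_id": "a3", "category": "over_promised"},
    {"account_id": "a4", "category": "misaligned_use_case"},
    {"account_id": "a9", "category": "wrong_icp"},  # no matching deal record
]


def join_churn_with_deal(churn_records, deal_records):
    """Attach deal-at-close fields to each churn record that has a match."""
    rows = []
    for churn in churn_records:
        deal = deal_records.get(churn["account_id"])
        if deal is None:
            continue  # unmatched churns are skipped in this sketch
        rows.append({**churn, **deal})
    return rows


def mean_fit_score(rows, category):
    """Average ICP fit score at close for one churn category, or None."""
    scores = [row["icp_fit_score"] for row in rows if row["category"] == category]
    return mean(scores) if scores else None


rows = join_churn_with_deal(churns, deals)
print("Category 1 mean fit score at close:", mean_fit_score(rows, "wrong_icp"))
print("Category 3 mean fit score at close:", mean_fit_score(rows, "over_promised"))
```

If Category 1 churns turn out to have closed with fit scores that looked acceptable at the time, that points at the scoring criteria rather than at any single deal, which is the kind of finding the ICP review needs.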

Frequently Asked Questions

How do you surface "bad fit" feedback to sales without it turning into finger-pointing?

Frame the quarterly review as a cohort pattern meeting, not a case-by-case deal audit. The question isn't "why did this AE close a bad deal?" It's "what do these seven churns have in common that we can act on?" Leading with NRR impact rather than CS effort reinforces that this is a revenue conversation, not a complaint. Sales leaders respond to data showing that specific ICP profiles produce near-zero expansion and outsized CS costs. The "Bad Fit" Feedback Protocol is designed to make every discussion a routing decision: which category, which fix, which owner, rather than a verdict on individual performance.

When should a bad-fit churn cohort trigger an ICP update?

When Category 1 accounts (wrong ICP: company profile outside the parameters that predict success) represent more than a defined threshold of the churn cohort, typically 25%, it triggers a formal ICP review with marketing within 30 days of the quarterly session. This threshold prevents ICP reviews from being triggered by every bad hire or edge case close while ensuring that systemic acquisition mistakes get surfaced fast enough to change the next quarter's qualification behavior.

What are the three categories of bad-fit churn?

Category 1 is wrong ICP: the company profile was outside the parameters that predict success (too small, wrong industry, incompatible technical stack). This routes to an ICP definition update with marketing. Category 2 is misaligned use case: the company fit the ICP, but the problem they bought to solve wasn't the problem the product actually solves. This routes to sales coaching and sharper discovery questions. Category 3 is over-promised: the ICP and use case were fine, but the AE made commitments that CS can't deliver. This routes to private AE coaching and deal approval process review.

How often should the bad-fit feedback loop run?

Quarterly is the minimum viable cadence. An annual ICP review is too infrequent. By the time it runs, the churn data is 6-12 months old and the AEs who closed those deals may have moved on. Quarterly cadence means the churn signal from a given quarter informs qualification behavior within 90 days, rather than being absorbed into an annual planning document that nobody references during the sales cycle.

Is it the CSM team's job to flag bad-fit deals, or sales leadership's?

Both, at different stages. The CSM team lead flags individual churns and codes them to category before the record closes. That's the CS team's accountability. The joint quarterly session (CS, sales leadership, RevOps) is where those coded churns become actionable patterns. Sales leadership's accountability is to engage with the pattern data and own the fixes that fall in their domain: coaching, qualification criteria changes, deal approval processes. The protocol fails if either team treats the other's accountability as the only one that matters.

How do you prevent the quarterly bad-fit review from becoming a blame session?

Three language disciplines help. First: frame every observation as a cohort characteristic, not an individual failure. "Three of our seven churns shared these profile markers" is data. "Jamie closed a bad deal" is an accusation. Second: lead with NRR impact, not CS effort. Revenue data lands differently than capacity complaints. Third: invite sales to co-own the diagnosis by asking "what changed in the market that made this profile look attractive to AEs this quarter?" That question positions sales as intelligence sources, not defendants, and usually produces genuinely useful context about competitive dynamics, promotional pricing, or new personas that were being targeted.
