How to Read an AI Use Case Using the ACE Formula

Every AI vendor pitch sounds the same. "AI-powered." "Intelligent automation." "Transforms your workflow." The adjectives are interchangeable. The demos are polished. And by the third one in a week, most buyers are making decisions on gut feel: the interface looked clean, the salesperson was sharp, the case study mentioned a company they recognized.

None of that is a buying framework.

The real problem isn't the vendors. It's that most teams don't have vocabulary for what AI actually does. So when two tools both claim to "automate your sales process," there's no structured way to ask whether they're doing the same thing. One might classify and summarize inbound data. The other might score probabilities and auto-assign leads. They look identical in a pitch deck and work completely differently in production.

The ACE Framework fixes this. It gives you five verbs that describe everything business AI does: Ingest, Analyze, Predict, Generate, Execute. Once you know those five, you can tag any AI product in under five minutes, compare tools in the same category honestly, and spot the governance risks before you're three weeks into a pilot. This article walks through the five-step tagging protocol with worked examples across real products so the method becomes a reflex, not a checklist.

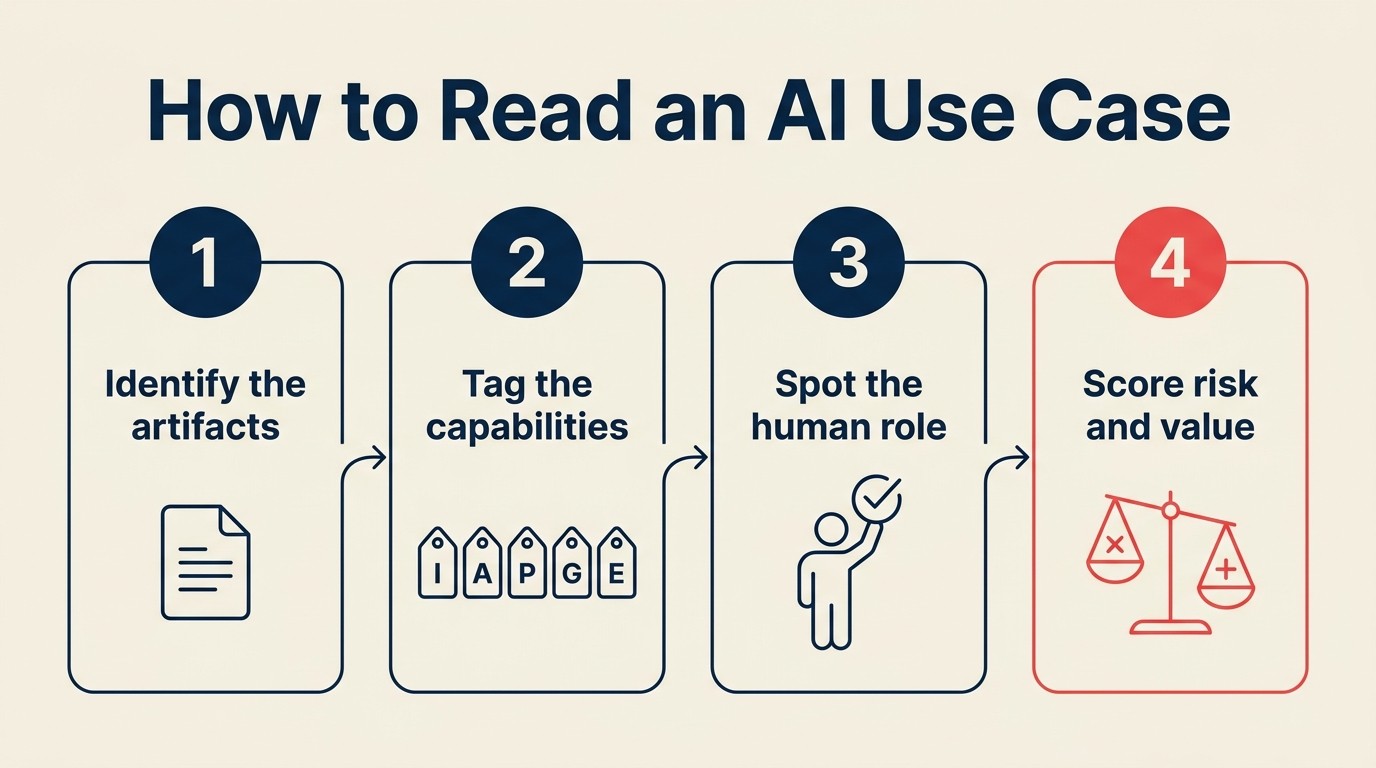

The ACE tagging protocol: 5 questions in order

The ACE Framework describes business AI as five capabilities operating on data: Ingest, Analyze, Predict, Generate, Execute. The tagging protocol applies those five concepts in sequence to any use case. You're building a receipt, not a judgment call.

Ask these questions in order:

Step 1: What data does it consume?

Start at the foundation. Every capability needs data, and the data type shapes everything downstream. Text? Structured records? Images? Audio? Code? Many tools consume multiple types, but there's usually a primary one. If you can't identify the input, you can't tag anything else.

Step 2: Which capabilities does it use?

Walk through each of the five verbs. Does it Ingest (convert raw signals into usable form)? Analyze (classify, extract, summarize)? Predict (score probabilities, forecast outcomes)? Generate (produce text, images, code, plans)? Execute (change state in an external system)? Most real products use two to four capabilities in sequence. List all that apply.

Step 3: What is the dominant pattern?

Capability combinations cluster into reusable patterns. Analyze + Predict on inbound records is Scoring and Routing. Ingest on audio feeding Analyze and Generate is Meeting Intelligence. Ingest on images feeding Analyze into a record update is Vision Extract. The pattern tells you what adjacent tools do the same job and what failure modes to expect.
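
One way to make the pattern step mechanical is a small lookup table. The sketch below is illustrative only: it encodes just the three patterns named above, and the key shape (primary data type plus required capabilities), the `PATTERNS` table, and the `dominant_pattern` helper are assumptions rather than part of the ACE Framework itself.

```python
# Hypothetical lookup built only from the three patterns named in the text.
# Key: (primary data type, capabilities the pattern requires).
PATTERNS = {
    ("structured", frozenset({"Analyze", "Predict"})): "Scoring and Routing",
    ("audio", frozenset({"Ingest", "Analyze", "Generate"})): "Meeting Intelligence",
    ("image", frozenset({"Ingest", "Analyze", "Execute"})): "Vision Extract",
}


def dominant_pattern(data_type, capabilities):
    """Return the best-matching pattern name, or None if nothing matches.

    A pattern matches when the data type agrees and the tool uses at least
    the capabilities the pattern requires (extra capabilities are allowed).
    If several match, the one requiring the most capabilities wins.
    """
    used = frozenset(capabilities)
    matches = [
        (len(required), name)
        for (dtype, required), name in PATTERNS.items()
        if dtype == data_type and required <= used
    ]
    return max(matches)[1] if matches else None


# A call-recording tool that also writes CRM notes still matches Meeting Intelligence.
print(dominant_pattern("audio", ["Ingest", "Analyze", "Generate", "Execute"]))
```

A `None` result is useful too: it means the tool either belongs to a pattern this table doesn't list or needs a closer look before you name one.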

Step 4: What is the output: artifact or state change?

This is the Generate vs. Execute boundary. Generate produces a draft: an email, a score, a summary that sits waiting for something to happen next. Execute changes external state: sends the email, updates the CRM record, issues the refund. That boundary matters for governance. A tool that Executes autonomously without your approval carries a different risk profile than one that only Generates drafts.

Step 5: Where does the human fit in the loop?

Is there a review gate before anything Executes? Monitoring after the fact? Or is the loop fully autonomous? Fully autonomous Execute is the highest-risk configuration. It's appropriate sometimes, but it should be an explicit design decision, not an oversight.
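
If it helps to see the five answers as a single record, here is a minimal sketch. The `AceTag` class, the `HumanGate` options, and the wording of the risk notes are assumptions made for illustration; only the five capability names and the three loop configurations come from the protocol above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Tuple


class Capability(Enum):
    INGEST = "Ingest"
    ANALYZE = "Analyze"
    PREDICT = "Predict"
    GENERATE = "Generate"
    EXECUTE = "Execute"


class HumanGate(Enum):
    REVIEW_BEFORE_EXECUTE = "review gate before Execute"
    MONITOR_AFTER = "monitoring after the fact"
    NONE = "fully autonomous"


@dataclass(frozen=True)
class AceTag:
    """One tool's answers to the five questions (hypothetical structure)."""
    tool: str
    data_types: Tuple[str, ...]          # Step 1
    capabilities: FrozenSet[Capability]  # Step 2
    pattern: str                         # Step 3
    human_gate: HumanGate                # Step 5

    @property
    def executes(self) -> bool:
        # Step 4: artifact (False) or state change (True)
        return Capability.EXECUTE in self.capabilities

    def formula(self) -> str:
        # Capabilities in canonical order, e.g. "Ingest + Analyze + Execute"
        return " + ".join(c.value for c in Capability if c in self.capabilities)

    def risk_note(self) -> str:
        if not self.executes:
            return "artifact only: a human acts on the output"
        if self.human_gate is HumanGate.REVIEW_BEFORE_EXECUTE:
            return "state change behind a review gate"
        if self.human_gate is HumanGate.MONITOR_AFTER:
            return "state change, reviewed after the fact"
        return "fully autonomous Execute: confirm this is a deliberate decision"


# Hypothetical invoice-capture tool, tagged for illustration.
tag = AceTag(
    tool="invoice capture",
    data_types=("image", "structured"),
    capabilities=frozenset({Capability.INGEST, Capability.ANALYZE, Capability.EXECUTE}),
    pattern="Vision Extract",
    human_gate=HumanGate.REVIEW_BEFORE_EXECUTE,
)
print(tag.formula(), "|", tag.risk_note())
```

The record deliberately has no score field. It stores answers, which keeps the tag a receipt rather than a rating.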

Five worked examples

Example 1: Salesforce Einstein lead scoring

What it claims to do: "AI-powered lead scoring that tells your reps which leads to prioritize."

ACE tagging:

| Question | Answer |
|---|---|
| Data consumed | Structured (CRM records: firmographics, deal stage history, lead interactions, email open rates) |
| Capabilities | Analyze (extract relevant features from CRM records) + Predict (output a probability score per lead) |
| Dominant pattern | Scoring and Routing |
| Output | A score (Generate) that optionally triggers auto-assignment (Execute) |
| Human in the loop | Reps review their high-score leads. Managers can configure auto-routing rules |

What this tells you: Einstein is primarily a Predict tool with an Analyze preprocessing step. It doesn't write emails, analyze call audio, or update CRM fields on its own. If your team is shopping it to automate outreach, that's not what it does. The Execute capability (auto-routing) exists but is optional. Most teams start with the score as a signal for human reps, not as a trigger for automated action.

Common mistake: Expecting Predict to work without clean historical data. If your CRM doesn't have labeled outcomes (won/lost records tied to firmographic features), the scoring model has nothing to learn from. Clean historical data is the prerequisite. Garbage in, garbage scores out.

Example 2: Gong call analysis

What it claims to do: "Revenue intelligence from your sales calls. AI surfaces what's working and why deals close."

ACE tagging:

| Question | Answer |
|---|---|
| Data consumed | Audio (recorded calls) + Text (transcripts) + Structured (CRM records for deal context) |
| Capabilities | Ingest (audio to transcript via speech-to-text) + Analyze (topics, objections, sentiment, talk-time ratio) + Generate (call summary, coaching insights, next-step suggestions) + Execute (write CRM notes, push to Salesforce) |
| Dominant pattern | Meeting Intelligence |
| Output | Both. Summaries and coaching insights are Generate (artifacts for human review). CRM note updates are Execute (state change) |
| Human in the loop | Reps read the summary. Managers review coaching dashboards. Gong doesn't take action on the customer |

What this tells you: Gong uses four of the five ACE capabilities. The Ingest step (transcription quality) is foundational: if calls are recorded in noisy environments or transcription accuracy is low, every downstream capability degrades. The Execute step (CRM write-back) is real but low-risk: it's updating a note field, not sending an email or issuing a refund.

Common mistake: Treating Gong's insights as conclusions rather than signals. Suppose Analyze flags that reps who ask about timeline close at higher rates. That's a correlation from past data. It's a prompt to investigate, not a proven playbook.

Example 3: ChatGPT writing a sales email

What it claims to do: Nothing formally. It's a general-purpose AI assistant. In this scenario, a rep uses it to draft outreach.

ACE tagging:

| Question | Answer |
|---|---|
| Data consumed | Text (the rep's prompt, deal context they paste in, any instructions) |
| Capabilities | Analyze (understand the prompt and context) + Generate (produce the draft email) |
| Dominant pattern | Workflow Copilot (tool mode) |
| Output | A draft (Generate only). Nothing has been sent |
| Human in the loop | Fully in control. The rep decides whether to use the draft, edit it, or discard it. Execute is separate and manual |

What this tells you: ChatGPT used this way stops at Generate. It has no access to your CRM, can't send anything, and knows nothing about the actual prospect beyond what the rep pastes in. The risk profile is low: worst case is a poor draft that gets discarded. But that's also why the productivity ceiling is limited. Every output requires a human to verify it and push it out.

This is the simplest ACE formula in practice. Analyze + Generate, no Execute, human decides everything. Once you tag it this way, the comparison with an autonomous SDR agent (which adds Execute and removes the human gate) becomes much sharper.

Example 4: Bill.com AI invoice automation

What it claims to do: "Automate invoice capture and coding. AI extracts data from your invoices and routes them for approval."

ACE tagging:

| Question | Answer |
|---|---|
| Data consumed | Image (invoice scans, PDFs) + Structured (vendor master records, chart of accounts) |
| Capabilities | Ingest (OCR and document parsing on invoice image) + Analyze (extract vendor name, line items, amounts, GL codes) + Execute (create invoice record, set payment schedule, route for approval) |
| Dominant pattern | Vision Extract |
| Output | Extracted structured data (Analyze output) becomes a live invoice record (Execute) |
| Human in the loop | Approval gates exist. Invoices above a configurable dollar threshold require human sign-off before payment |

What this tells you: The Ingest step is where most accuracy problems originate: hand-filled forms, unusual layouts, and low-resolution scans all degrade OCR. The Execute step has real consequences: payment schedules and approval queues are live records in your system. The human approval gate is the right design, but it needs to be explicitly configured. Most teams underestimate how much the dollar threshold matters.

Common mistake: Setting the approval threshold too high (say, $5,000) and assuming everything below is low-risk. A wave of small duplicate invoices from the same vendor at $800 each can slip through. The Analyze capability isn't checking for duplicates unless that logic is explicitly built in.
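
To make "explicitly built in" concrete, here is a minimal sketch of the kind of duplicate check a team might add below the approval threshold. It is not Bill.com's logic or API. The invoice fields (`id`, `vendor`, `amount`, `date`), the 30-day window, and the rule "same vendor and same amount" are all assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import date, timedelta


def find_possible_duplicates(invoices, window_days=30):
    """Return lists of invoice ids that share a vendor and amount and fall
    within window_days of the previous invoice in the same group.

    Each invoice is a dict with hypothetical keys: id, vendor, amount, date.
    """
    by_key = defaultdict(list)
    for inv in invoices:
        by_key[(inv["vendor"], inv["amount"])].append(inv)

    window = timedelta(days=window_days)
    suspects = []
    for group in by_key.values():
        group.sort(key=lambda inv: inv["date"])
        cluster = [group[0]]
        for inv in group[1:]:
            if inv["date"] - cluster[-1]["date"] <= window:
                cluster.append(inv)
            else:
                # Gap too large: close the current cluster and start a new one.
                if len(cluster) > 1:
                    suspects.append([i["id"] for i in cluster])
                cluster = [inv]
        if len(cluster) > 1:
            suspects.append([i["id"] for i in cluster])
    return suspects


invoices = [
    {"id": "A1", "vendor": "Acme", "amount": 800, "date": date(2024, 1, 5)},
    {"id": "A2", "vendor": "Acme", "amount": 800, "date": date(2024, 1, 12)},
    {"id": "B1", "vendor": "Beta", "amount": 800, "date": date(2024, 1, 6)},
    {"id": "A3", "vendor": "Acme", "amount": 800, "date": date(2024, 6, 1)},
]
print(find_possible_duplicates(invoices))  # [['A1', 'A2']]
```

A check like this routes suspects to a human regardless of the dollar threshold, which is the point: the threshold guards large payments, not repeated small ones.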

Example 5: Cursor and Claude Code (autonomous coding agents)

What it claims to do: "An AI coding agent that reads your codebase, writes code to spec, runs tests, and opens a pull request."

ACE tagging:

| Question | Answer |
|---|---|
| Data consumed | Code (the repository, existing files, past PRs) + Text (the spec or issue description, user instructions) |
| Capabilities | All five. Ingest (read and parse the codebase) + Analyze (understand the existing structure, find the relevant files) + Predict (determine the most likely correct implementation approach) + Generate (write the code changes) + Execute (run tests, create the PR, optionally merge) |
| Dominant pattern | Autonomous Agent |
| Output | Code changes are Generate. PR creation and test execution are Execute. Merge (if configured) is Execute with high consequence |
| Human in the loop | Reviews the PR before merge. This is the critical gate. Without it, Execute reaches into production |

What this tells you: All five ACE capabilities are active, which means all five failure modes are live simultaneously. Ingest can misread an unfamiliar codebase. Analyze can misidentify relevant files. Predict can choose an implementation that's technically correct but architecturally wrong. Generate can write code that passes tests but introduces subtle logic errors. Execute (the merge) pushes all of that into production, where mistakes are costly to unwind.

The PR-before-merge gate is the only point where a human can catch failures from any of the four prior capabilities. Teams that remove it to "fully automate" deployment pipelines are removing the safety valve on all five failure modes at once.

Worth noting: Cursor and Claude Code differ in scope. Cursor is primarily a Workflow Copilot (Generate-heavy, human drives every step in the IDE). Claude Code can operate more autonomously (Execute-capable, multi-step tasks). Tagging both forces the distinction that "AI coding tool" hides entirely.

Your turn: the audit worksheet

Run this for three AI tools your team uses right now. Don't start with vendor documentation. Start with what the tool actually does in your workflow, then map backward.

For each tool, complete this table:

| Field | Your answer |
|---|---|
| Tool name | |
| What does it consume? (data types) | |
| Which ACE capabilities does it use? | |
| What pattern does it match? | |
| Is the output a draft (Generate) or a state change (Execute)? | |
| Where is the human in the loop? | |
| What happens if the AI is wrong at each capability? | |

Then answer these three questions:

- Which capability dominates? (The one that drives most of the tool's value proposition.)

- Does it Execute? If yes, are the Execute guardrails explicit and documented, or assumed?

- Comparing two tools you use in the same category: are they using the same ACE formula, or different ones?

Question three is the revealing one. Two CRM intelligence tools can look identical in a demo but use completely different capability combinations. One is Analyze + Generate (summarizes deal notes, drafts next steps). Another is Analyze + Predict + Execute (scores deal health, forecasts close, auto-routes at-risk deals to a manager). ACE tagging surfaces that difference in 10 minutes rather than a 60-minute demo.
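
The comparison itself is just set arithmetic. The sketch below is one assumed way to write it down; the `compare_formulas` helper and its output keys are hypothetical, and the two formulas are the ones from the paragraph above.

```python
# Canonical order of the five ACE capabilities.
ORDER = ["Ingest", "Analyze", "Predict", "Generate", "Execute"]


def compare_formulas(first, second):
    """Split two capability lists into shared and tool-specific parts."""
    a, b = set(first), set(second)

    def ordered(caps):
        return [c for c in ORDER if c in caps]

    return {
        "shared": ordered(a & b),
        "only_first": ordered(a - b),
        "only_second": ordered(b - a),
    }


notes_tool = ["Analyze", "Generate"]               # summarizes notes, drafts next steps
health_tool = ["Analyze", "Predict", "Execute"]    # scores, forecasts, auto-routes
print(compare_formulas(notes_tool, health_tool))
```

Anything that shows up under `only_first` or `only_second` is a question for the demo, and an `Execute` on either side is a question about guardrails.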

Common mistakes when tagging

Mistake 1: Conflating Analyze with Predict.

Analyze answers "what is this?" Predict answers "what is likely next?" A tool that classifies support tickets by type is Analyze. A tool that scores which tickets are likely to escalate to churn is Predict (with Analyze as input). They fail differently too. Analyze failure shows up immediately (wrong classification). Predict failure shows up weeks later when probability estimates drift from reality.

Mistake 2: Missing Execute.

Teams undercount Execute because it's behind the scenes. A meeting intelligence tool that "updates your CRM after calls" is Executing. A lead scoring tool that "auto-assigns to the right rep" is Executing. If you only see the dashboard, you miss the API calls already changing records in your system. Walk through the integration settings, not just the interface. Write permissions, webhooks, automation triggers — those are Execute.

Mistake 3: Undercounting data types.

Gong isn't just processing audio. It's ingesting the CRM record, the call audio, and the transcript simultaneously. The audio carries the signal, but the CRM context (deal stage, company size, rep) is what makes Analyze and Predict useful. Tag only the primary channel and you'll miss the data dependencies — and the failure modes that appear when secondary inputs are messy.

Mistake 4: Listing capabilities without naming the pattern.

A list of capabilities is a parts list. A pattern is the assembly. Two tools might both use Analyze + Generate, but one is a Workflow Copilot (human-driven, single-output, iterative) and the other is a Document Review engine (batch-processed, multi-document, structured output). The pattern tells you which failure modes are most likely and what workflow changes the tool requires. A capability list without a pattern is half a tag.

Using ACE tags for buying decisions

Find redundancy in your stack. Tag all your current AI tools and lay the results next to each other. Three tools doing Analyze + Generate on text is a consolidation opportunity. Zero tools doing Predict despite paying for "predictive analytics" is a gap. The tags show you the shape of your actual AI investment, which is often different from what you think you have.
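
Laying the tags side by side can be done in a spreadsheet, but a few lines of code show the idea. This is a rough sketch under assumptions: the tool names are invented, the `stack_report` helper is hypothetical, and "redundant" is defined narrowly as same data type plus same capability set.

```python
from collections import defaultdict

CAPABILITIES = ["Ingest", "Analyze", "Predict", "Generate", "Execute"]


def stack_report(tags):
    """tags maps tool name -> (primary data type, list of capabilities).

    Returns (redundant, missing): groups of tools with an identical formula
    on the same data type, and capabilities no tool in the stack provides.
    """
    groups = defaultdict(list)
    covered = set()
    for tool, (data_type, caps) in tags.items():
        groups[(data_type, frozenset(caps))].append(tool)
        covered.update(caps)
    redundant = [sorted(tools) for tools in groups.values() if len(tools) > 1]
    missing = [c for c in CAPABILITIES if c not in covered]
    return redundant, missing


# Invented tool names, for illustration only.
stack = {
    "email drafter": ("text", ["Analyze", "Generate"]),
    "notes summarizer": ("text", ["Analyze", "Generate"]),
    "proposal writer": ("text", ["Analyze", "Generate"]),
    "invoice capture": ("image", ["Ingest", "Analyze", "Execute"]),
}
redundant, missing = stack_report(stack)
print(redundant)  # [['email drafter', 'notes summarizer', 'proposal writer']]
print(missing)    # ['Predict']
```

Identical formulas are a prompt to look closer, not proof of overlap: two tools with the same tag can still serve different teams or patterns.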

Compare tools honestly. Two "AI sales tools" can use completely different ACE formulas. If one is Analyze + Generate and the other is Analyze + Predict + Execute, they're solving different problems. You're comparing a copilot to an automation engine. That distinction should drive your evaluation criteria, not demo quality.

Match the formula to the actual need. Most buying errors happen because teams know they want "AI for X" but haven't identified which capability they need for X. Rep productivity (drafting faster)? You need Generate. Prioritization (which deals to focus on)? You need Predict. Manual data entry from documents? You need Ingest + Analyze + Execute. Starting with the capability need, not the category label, gets you to the right shortlist faster.

One note: the tagging protocol is a diagnostic, not a score. A tool with five capabilities isn't better than a tool with two. A badly governed Autonomous Agent using all five is riskier than a simple Generate-only copilot. What matters is whether the capability mix solves your actual problem, whether Execute steps are appropriately guarded, and whether the data feeding the system is clean enough to trust.

Building the reflex

The goal isn't to tag every tool perfectly on the first try. The goal is to build the reflex.

Start with the five capabilities as a mental checklist during demos. When a vendor says "our AI analyzes your data," ask: is that Analyze (current state) or Predict (future probability)? When they say "automates your workflow," ask: does it Generate drafts or Execute actions? When they say "intelligent routing," ask whether Execute is present and what the human approval mechanism is.

After a month of this, you'll read pitches differently. A three-vendor evaluation becomes a 15-minute comparison instead of a 60-minute gut check. And tagging initiatives becomes the first step in any project brief, not an afterthought.

Five verbs. Six data types. Ten patterns. The practice is what makes it stick.

This article is part of the ACE Framework Foundation collection. Related reading: the Generate vs. Execute boundary, tagging AI initiatives, and the deep-dives on Predict and Execute.

