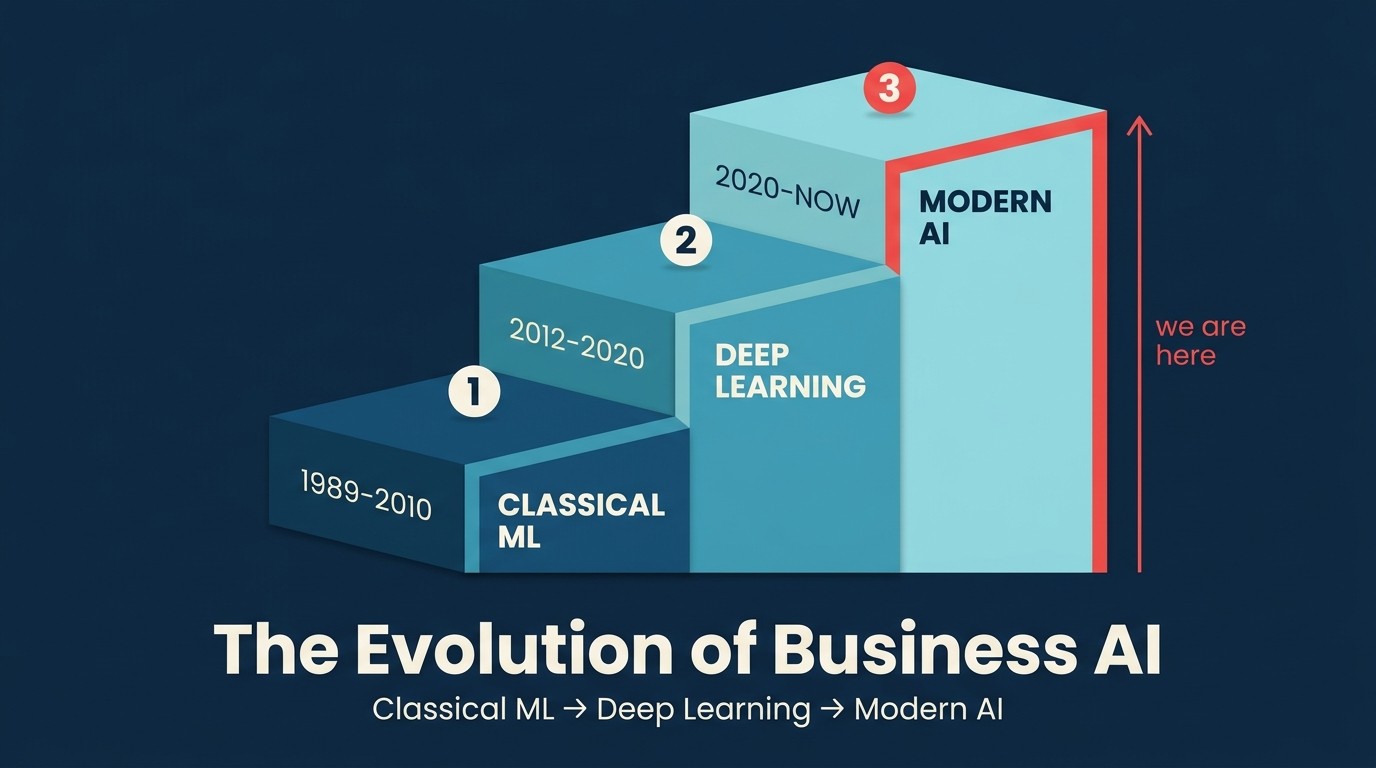

The Evolution of Business AI: From Classical ML to Modern AI

Every vendor demo opens with "the AI revolution." Most of the people giving those demos started their careers after the last major AI wave. So when they say AI changed everything in 2022, they mean it. But the historical record tells a different story.

Business AI is over 30 years old. FICO scores automated credit decisions in 1989. Spam filters used statistical classification in the 1990s. Amazon's recommendation engine was live before most current operators had their first job. AI didn't start in 2022. The interface changed. The accessibility changed. The noise level certainly changed. But the underlying thing had been building for three decades before ChatGPT launched.

This matters for practical reasons, not historical ones. If you think AI is a 2022 phenomenon, you'll overweight the latest tools and underweight the durable investments that have determined AI success in every era. You'll chase the newest model instead of doing the unglamorous data work. And you'll have to rebuild your mental model every six months as the product landscape shifts.

Understanding the three eras of business AI, and what actually changed in each one, gives you a frame for evaluating the current wave without getting whipsawed by the hype. It also explains why the ACE Framework is built around capabilities rather than products: the capabilities predate ChatGPT by decades, and they'll outlast whatever comes next.

Era 1: Classical ML (roughly 1990 to 2010)

The first era of business AI was quiet. It didn't have a brand. Nobody wrote keynote slides about it. It just ran inside systems that companies paid for and mostly ignored.

The technical backbone was classical machine learning: decision trees, logistic regression, statistical models trained on historical data. These weren't neural networks in the deep learning sense. They were, at their core, sophisticated pattern-matching tools that turned labeled historical records into probability estimates.
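
To make "labeled historical records into probability estimates" concrete, here is a minimal sketch of logistic regression in plain Python. The feature names, the eight training rows, and the learning rate are all invented for illustration. This is not how FICO or any production scoring system is built.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows, labels, lr=0.1, epochs=2000):
    """Fit weights and a bias by batch gradient descent on log loss."""
    n = len(rows)
    weights = [0.0] * len(rows[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            err = p - y  # gradient of log loss with respect to the logit
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict_proba(weights, bias, x) -> float:
    """Estimated probability of the positive label for one record."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Hypothetical history: [debt_to_income, late_payment_rate] per applicant,
# labeled 1 if the loan defaulted and 0 if it was repaid.
history = [
    [0.10, 0.0], [0.15, 0.1], [0.20, 0.0], [0.25, 0.2],
    [0.55, 0.6], [0.60, 0.8], [0.70, 0.7], [0.80, 1.0],
]
defaulted = [0, 0, 0, 0, 1, 1, 1, 1]

weights, bias = train_logistic(history, defaulted)
low_risk = predict_proba(weights, bias, [0.12, 0.0])
high_risk = predict_proba(weights, bias, [0.75, 0.9])
print(f"low-risk applicant:  {low_risk:.2f}")
print(f"high-risk applicant: {high_risk:.2f}")
```

The model knows nothing beyond the rows it was trained on. Mislabel the history and the probabilities shift with it, which is why data quality shows up as a blocker in every era.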

What worked. Spam filters, FICO scores (introduced in 1989, dominant through the 1990s), and recommendation engines at early Amazon and Netflix. These weren't glamorous. But they worked at scale. By the mid-2000s, large banks were running automated underwriting systems that processed thousands of loan applications with minimal human review at the first screen.

Who deployed it. Large enterprises with data science teams and custom infrastructure. You needed PhDs, proprietary training pipelines, and months to build and deploy a meaningful model. A 200-person mid-market company didn't have this.

ACE Framework mapping. This era was mostly Predict: scoring, forecasting, anomaly detection, ranking. Some Analyze was present. Ingest, Generate, and Execute were absent or extremely narrow. This was AI for the few.

Era 2: Deep Learning at Scale (roughly 2010 to 2020)

The second era brought a different kind of change. It wasn't that Predict got dramatically better. It was that a new family of capabilities became commercially viable.

Deep learning, powered by GPUs and trained on internet-scale datasets, unlocked things classical ML couldn't do well: perceiving images, understanding speech, translating between languages. The AlexNet paper in 2012 showed that a deep neural network could outperform every existing approach on image recognition. That result set off a decade of applied research that found its way into products within a few years.

What changed. Reliable Ingest at scale. AI could now process images (Google Vision API, 2015), transcribe speech at near-human accuracy (Google Cloud Speech-to-Text, 2016), and translate in real time. Information trapped in audio and images could flow into AI systems at low cost.

Business applications. Voice assistants (Siri 2011, Alexa 2014), image recognition for retail and manufacturing quality control, translation tools. Gong and Chorus were founded in this window, built on the idea that AI could now reliably transcribe a sales call and extract meaning from it.

Who deployed it. Big Tech built the foundation (Google, Microsoft, Amazon) and opened it as APIs. Fast-followers with engineering teams consumed those APIs. The barrier dropped from "hire a PhD team" to "hire a software engineer who knows REST."

ACE Framework mapping. Ingest became a real, accessible capability. Analyze got richer. Predict continued to mature. Generate remained primitive. Execute was technically possible but rarely autonomous.

Era 3: LLMs and Agents (2022 to now)

ChatGPT launched in November 2022 and reached one million users in five days. That pace of adoption was real, and it reflected something genuinely new: for the first time, the interface to AI was natural language. You didn't need to know how to write code, configure an API, or understand model architecture. You typed.

But the technology behind the interface wasn't born that month. GPT-3 had been available through OpenAI's API since 2020. The Transformer architecture dates to a 2017 Google paper. The research that made GPT-4 possible had been underway for years. What changed in November 2022 was packaging and access, not the underlying physics of what AI could do.

What changed. The Generate capability moved from peripheral to dominant. AI could now produce fluent, coherent text across almost any domain. And because the interface was conversational, the user didn't have to know anything about the underlying model. A sales rep could write a prospecting email. A founder could draft a board summary. An operations lead could build a first draft of a process document.

And then agents arrived. AI that doesn't just generate a draft but takes sequences of actions: searching, summarizing, writing, and then actually doing something in an external system. This is Execute becoming real at scale.

Business applications. GitHub Copilot (code generation), Gong's AI call summaries, Intercom Fin (support agent that handles tickets end-to-end), Salesforce Agentforce, Jasper and Writer for content. The list keeps growing because the barrier to building with this technology dropped to nearly zero.

Who deploys it. Everyone. Product managers. Operations leads. Sales reps. CEOs. This is the first era where a non-technical person can get meaningful value from AI without a configuration step beyond opening a browser tab.

ACE Framework mapping. Generate became a first-class business capability. Execute became real: AI systems that send emails, update CRM records, commit code, and trigger workflows without a human touching each step. The full five-capability picture, Ingest, Analyze, Predict, Generate, Execute, is now accessible to mid-market companies for the first time.

What hasn't changed across all three eras

The uncomfortable truth that doesn't make it into vendor demos: most of what determines whether AI works or fails has been true since the 1990s. The era changed. The blockers didn't.

Data quality still determines outcomes. In 2025, Gartner predicted that through 2026 organizations will abandon 60% of AI projects that are not supported by AI-ready data. This is a 30-year-old problem wearing a new hat. The bank's credit model from 2001 failed on bad inputs. Your CRM-based lead scoring fails on bad inputs today. Data readiness is still the unsexy prerequisite that decides everything.

System integration still takes longer than expected. Every era made AI capabilities more accessible. None of them made connecting those capabilities to existing business systems significantly faster. You still need API contracts, data pipeline work, and permission management in the same painful sequence as before.

Governance still matters. Credit scoring in Era 1 had to comply with fair lending rules. Era 2 surfaced bias in facial recognition and in automated hiring tools. LLMs in Era 3 produce confidently wrong answers and can leak sensitive data. The nature of the governance problem evolved. The need never went away.

Predict is still the hardest capability. Predict depends on labeled historical data that reflects the future you're trying to predict. That dependency doesn't change with the underlying model. Bad inputs produce bad scores in all three eras.

Humans still need to stay in the loop for high-stakes Execute. The Generate vs. Execute boundary deserves human oversight regardless of the year. Era 1 had automated loan denials that violated fair lending rules. Era 3 has AI agents that send wrong emails and update CRM records incorrectly. Different tools, same problem category.
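
One way to picture that boundary is an approval gate: low-stakes actions run immediately, high-stakes ones wait for a named person. The sketch below is illustrative only. `ProposedAction`, `ApprovalGate`, and the `high_stakes` flag are hypothetical names, and deciding what counts as high-stakes is a policy question this code does not answer.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ProposedAction:
    description: str
    high_stakes: bool        # assumption: set by your own policy rules
    run: Callable[[], str]   # the side effect, deferred until allowed


@dataclass
class ApprovalGate:
    pending: List[ProposedAction] = field(default_factory=list)
    audit_log: List[str] = field(default_factory=list)

    def submit(self, action: ProposedAction) -> str:
        """Run low-stakes actions now; hold high-stakes ones for a person."""
        if action.high_stakes:
            self.pending.append(action)
            return f"QUEUED: {action.description}"
        return self._execute(action, approver="auto")

    def approve(self, index: int, approver: str) -> str:
        """A named human releases one queued action."""
        action = self.pending.pop(index)
        return self._execute(action, approver)

    def _execute(self, action: ProposedAction, approver: str) -> str:
        result = action.run()
        self.audit_log.append(f"{approver}: {action.description} -> {result}")
        return result


gate = ApprovalGate()
draft = ProposedAction("draft reply to ticket", False, lambda: "draft saved")
send = ProposedAction("email 5,000 customers", True, lambda: "emails sent")

first = gate.submit(draft)   # executes immediately
second = gate.submit(send)   # held; nothing is sent yet
released = gate.approve(0, approver="ops-lead")
```

The audit log records who released each action, which is the part governance reviews tend to ask for first.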

How the ACE Framework maps across all three eras

The ACE Framework is structured around capabilities rather than technologies because the capabilities predate the current tools by decades.

Ingest existed in all three eras: OCR and manual entry in Era 1, Google Vision and speech-to-text APIs in Era 2, multimodal models in Era 3. The capability is consistent. The fidelity and access improved.

Analyze is one of the oldest capabilities. Text classification and entity extraction existed in research before commercial AI was a category. Era 3 makes them available via natural language instruction to anyone with a browser.

Predict is Era 1's home territory, refined over 30 years. The logic (historical data produces probabilistic forecasts) hasn't changed. LLM-augmented approaches now incorporate unstructured signals alongside structured data, but the capability category is the same.

Generate barely existed in Era 1 beyond template fills. Era 3 made it the most visible capability, because its output is legible to any human without technical context.

Execute existed in all three eras in progressively broader forms: automated loan denials in Era 1, content flagging at scale in Era 2, autonomous AI agents in Era 3. What changed is the scope of actions and how fast AI can chain them. The governance requirements grew proportionally.

Why framework stability matters

Specific model names have a short shelf life. GPT-4 became GPT-4o. Claude 3 became Claude 3.5, then 3.7. Gemini Ultra was announced, updated, and renamed within a year. GitHub Copilot added features quarterly. Jasper got acquisition rumors, partnership changes, and a pivot or two.

If your understanding of AI is organized around specific products, you're rebuilding your mental model every six months. This is exhausting, and it leads to bad decisions: chasing the newest model rather than doing the unglamorous integration work, over-indexing on a vendor that might not exist in two years, and confusing "new interface" with "new capability."

The ACE Framework is organized at a level of abstraction that outlasts product cycles. Ingest is Ingest whether you're calling Google Vision API in 2016 or a multimodal GPT-4o endpoint in 2025. Predict is Predict whether you're running XGBoost on a server or calling Salesforce Einstein Forecasting. The tools change. The capabilities don't.

This isn't just philosophical. It has practical implications for how you structure your AI thinking. If you evaluate AI initiatives using capability tagging rather than product names, your evaluation framework survives vendor changes, mergers, and model deprecations. You can swap the tool. The capability map stays consistent.
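
Capability tagging can be as simple as a small lookup table. The sketch below is one possible shape, not a prescribed format. The initiative names and the capability mix assigned to each tool are assumptions made for illustration, not vendor-confirmed classifications.

```python
from enum import Enum
from typing import Dict, List


class Capability(Enum):
    INGEST = "Ingest"
    ANALYZE = "Analyze"
    PREDICT = "Predict"
    GENERATE = "Generate"
    EXECUTE = "Execute"


# Illustrative tags only: initiative names and capability mixes are
# assumptions for this sketch.
initiatives = {
    "sales call summaries": {
        "tool": "Gong",
        "capabilities": {Capability.INGEST, Capability.ANALYZE, Capability.GENERATE},
    },
    "pipeline forecasting": {
        "tool": "Salesforce Einstein Forecasting",
        "capabilities": {Capability.PREDICT},
    },
    "support ticket handling": {
        "tool": "Intercom Fin",
        "capabilities": {Capability.ANALYZE, Capability.GENERATE, Capability.EXECUTE},
    },
}


def capability_map(items: dict) -> Dict[str, List[str]]:
    """Group initiative names under each capability they rely on."""
    result: Dict[str, List[str]] = {cap.value: [] for cap in Capability}
    for name, info in items.items():
        for cap in info["capabilities"]:
            result[cap.value].append(name)
    return {cap: sorted(names) for cap, names in result.items()}


before = capability_map(initiatives)

# Swap the tool behind one initiative; its capability tags do not change.
initiatives["pipeline forecasting"]["tool"] = "in-house XGBoost model"
after = capability_map(initiatives)

for cap, names in after.items():
    print(f"{cap}: {', '.join(names) or '(none)'}")
```

Because the map is keyed by capability and not by vendor, replacing a tool changes one field and leaves the evaluation view intact.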

What might come in Era 4: speculation

No one knows what Era 4 looks like. But there are research directions worth watching that could promote new first-class capabilities.

World models give AI persistent physical understanding: how objects behave in space, how mechanical systems work. This matters most for manufacturing and physical infrastructure. Reliable world models would represent a genuinely new axis of capability, not just a better version of existing ones.

Persistent memory changes how Analyze and Predict work. Today's AI largely starts fresh each session. A system that accumulates context about your business over months would work very differently than one that forgets you on every refresh.

Multi-agent coordination pushes Execute toward full autonomy: one agent plans, another executes, a third validates, a fourth escalates to a human. Early versions exist (AutoGPT, Microsoft Copilot Studio), but reliable multi-agent systems at business scale remain unproven.
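
The plan, execute, validate, escalate pattern can be sketched with plain functions standing in for agents. Everything here is a stub: the step names, the rule that outbound "send" steps need human sign-off, and the function names are assumptions for illustration. A real system would put a model or service behind each role.

```python
from typing import Dict, List, Tuple


def plan(goal: str) -> List[str]:
    """Planner agent (stub): break a goal into ordered steps."""
    return [
        f"gather context for {goal}",
        f"draft response for {goal}",
        f"send response for {goal}",
    ]


def execute(step: str) -> Dict[str, str]:
    """Executor agent (stub): prepare a result for one step."""
    return {"step": step, "output": f"prepared: {step}"}


def validate(result: Dict[str, str]) -> bool:
    """Validator agent (stub): assumed policy that outbound sends need a human."""
    return not result["step"].startswith("send")


def run_workflow(goal: str) -> Tuple[List[str], List[str]]:
    """Route each step to 'done' or to the human escalation queue."""
    done: List[str] = []
    escalated: List[str] = []
    for step in plan(goal):
        result = execute(step)
        if validate(result):
            done.append(result["step"])
        else:
            escalated.append(result["step"])  # a person decides what happens next
    return done, escalated


done, escalated = run_workflow("ticket 42")
print("done:", done)
print("escalated:", escalated)
```

The hard part in practice is not the control flow. It is making each role reliable enough that the escalation queue stays short without hiding real problems.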

If any of these matures into commercial capability, "Remember" or "Coordinate" might deserve a seat alongside the five existing ACE capabilities. The framework is explicit that it may need revision. The current five cover the field as of 2026.

How to prepare for Era 4

The preparation strategy isn't "pick the best model today." Every company that bet heavily on a specific model or vendor has had to redo work when things shifted. The durable investments are era-independent.

Invest in Foundation work first. Data readiness is boring. It's also the most durable AI investment. Clean, labeled, accessible data extracts value from every generation of tooling. Messy inputs produce bad outputs regardless of the model.

Keep the underlying model swappable. If your workflows are built directly on one vendor's API with no abstraction layer, a pricing change, deprecation, or policy shift at that vendor can force a rebuild at any time. Libraries like LangChain and LlamaIndex provide model abstractions so you can swap models without rebuilding workflows. The ACE Framework's documented limits note that it is deliberately technology-agnostic. Your stack should be too.
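
An abstraction layer does not have to be a framework. A minimal sketch, assuming a hypothetical `TextModel` interface and two stub vendor adapters (no real SDK is called here), looks like this:

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the workflow is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    """Hypothetical stand-in; a real adapter would call vendor A's SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBModel:
    """Hypothetical stand-in for a second vendor."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


def draft_followup(model: TextModel, customer: str) -> str:
    """Workflow code: knows the task, not the vendor."""
    prompt = f"Write a short follow-up email to {customer}."
    return model.complete(prompt)


email_a = draft_followup(VendorAModel(), "Acme Corp")
email_b = draft_followup(VendorBModel(), "Acme Corp")
print(email_a)
print(email_b)
```

Swapping vendors means writing one new adapter class. `draft_followup` and everything built on it stay untouched.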

Evaluate by capability, not product name. Ask which ACE capabilities a new initiative uses. Tag tools by capability. Reading an AI use case by its capability mix is a skill that transfers across eras, because the vendor landscape will keep shifting.

The 30-year arc, in one frame

Business AI has been in the field since before most of the people selling it today started their careers. The capabilities have expanded. The access barriers have fallen. The interface changed dramatically in 2022, and that change is real and significant.

But the underlying logic hasn't changed. Data quality determines outcomes. Integration is hard. Governance matters. Predict is still the hardest capability to get right. Humans still need to stay in the loop for consequential actions.

The ACE Framework doesn't describe 2026 AI. It describes what business AI is: five verbs, applied to data, composed into workflows. Those five verbs predate ChatGPT by decades. And if Era 4 adds a sixth, we'll update the framework to reflect it.

Priya doesn't need to believe AI started in 2022. She needs to understand what it does, how to evaluate it, and where the durable investments are. That understanding is what the framework is built to provide.

What to read next

- The ACE Framework: the full five-capability model, with the six-layer stack and a worked example on Gong

- Predictive AI vs. Generative AI: how the industry's popular binary maps to the ACE Framework, and where it breaks down

- What Is Business AI?: a practical definition that covers all three eras

- ACE Framework limits: honest limits, including the fact that this framework will need revision as the field evolves

- Data Readiness: the prerequisite that matters equally in all three eras

- Predict: the oldest capability, and still the hardest to get right

