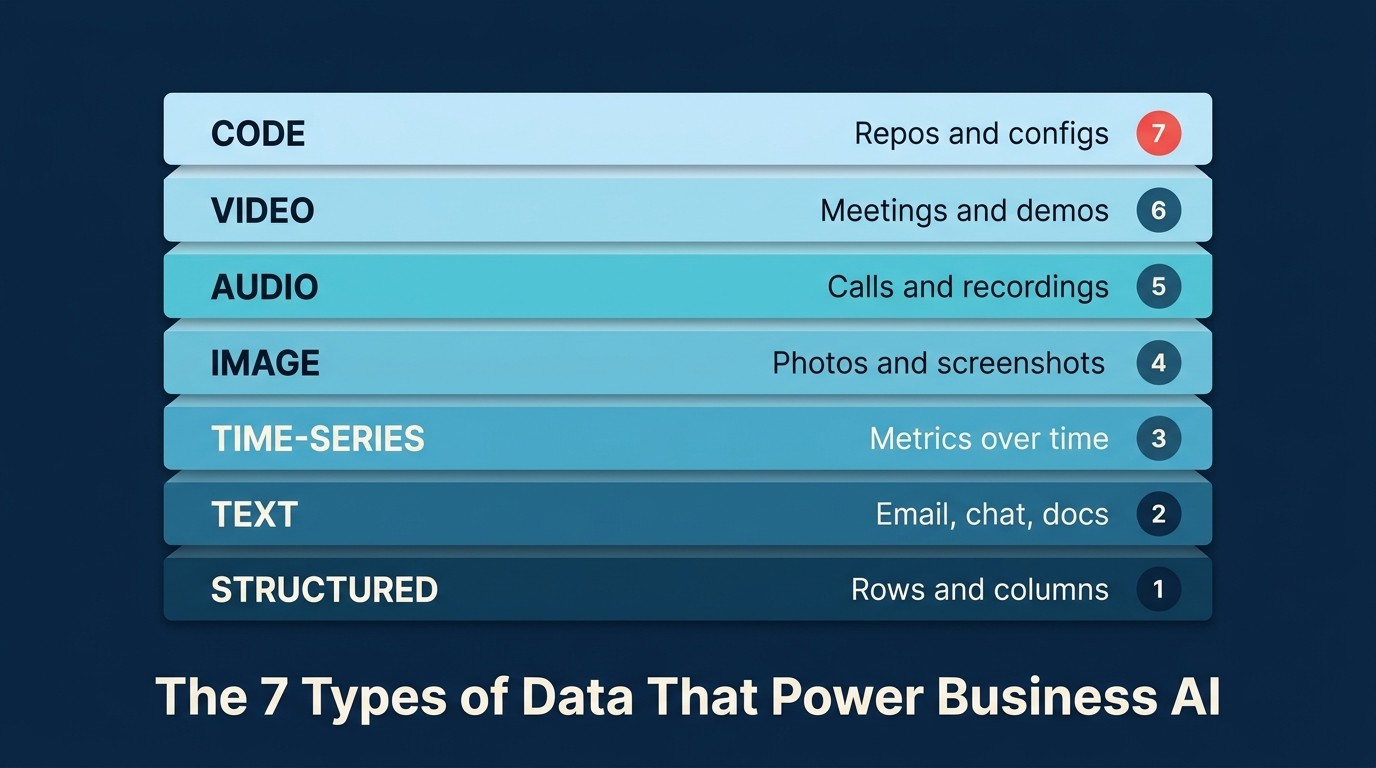

The 7 Types of Data That Power Business AI

AI tools don't fail in demos. They fail when they meet your actual data.

The demo runs against clean, prepared, vendor-controlled inputs. Your real environment has meeting transcripts where every speaker is labeled [Speaker 1]. A CRM where 40% of records are missing the fields the scoring model depends on. A document library that includes three years of outdated pricing, weighted the same as last month's updates. The model is fine. The data is the problem. And without a vocabulary for diagnosing which data problem you have, the failure looks like bad AI when it's really a fixable plumbing issue.

This is the most common pattern in AI deployments that disappoint: not a capability gap, but a data gap that nobody identified before the contract was signed. The vendor's sales process has no incentive to surface it. The buying team rarely has the framework to find it.

The ACE Framework puts Data at the Foundation layer for exactly this reason. Before any capability works (Ingest, Analyze, Predict, Generate, Execute), data has to exist, be accessible, and be in a format the AI can consume. The seven data types in this article are the distinct formats information takes inside a business. Each one lives in different systems, breaks in different ways, and requires different preparation before it's useful.

Read this article like a reference. Then run the inventory checklist at the end against any AI project currently in flight or on your roadmap. The gaps will be obvious once you know what to look for.

Why data types matter before anything else

In the ACE Framework for business AI, Data sits at the Foundation layer, below all five capabilities (Ingest, Analyze, Predict, Generate, Execute), below patterns, below agents. That's not modesty. It's cause and effect. Every AI capability requires data as raw material. Change the quality, format, or accessibility of that data and you change what the AI can do.

The seven canonical data types represent the distinct formats in which information exists inside a business. Each requires different infrastructure to store, different pipelines to move, and different AI models to process. Understanding them isn't academic. It's the first practical step toward knowing whether an AI tool will actually work before you sign the contract.

Here's the inventory.

1. Text

Text is the most abundant data type in almost every business, and also the least structured, which makes it both AI's biggest opportunity and one of its biggest headaches.

Where it lives: Gmail, Outlook, Slack, Microsoft Teams, Notion, Confluence, Salesforce CRM notes, Zendesk tickets, Google Docs, contract folders, customer reviews, survey responses.

What AI does well with it: Intent detection (is this email urgent or FYI?). Summarization (condense a 40-message thread to three bullet points). Extraction (pull the vendor name, contract date, and renewal clause from a PDF). Classification (tag this support ticket as "billing," "bug," or "feature request"). Generation (draft a follow-up based on the full conversation context).

Common problems: Fragmented across 20 tools that don't talk to each other. No schema (free-text fields mean "next steps" looks different in every rep's notes). Sensitive data mixed in with operational data, creating compliance exposure.

The honest failure mode: Rachel's proposal tool cited outdated services because its text corpus included old pitch decks and email threads with no recency weighting. The AI averaged everything, treating a 2019 service description the same as a 2026 one.
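Recency weighting is simple to sketch. The example below is a minimal illustration, not how any particular proposal tool works: it assumes each document already has a relevance score and a date, and it multiplies that score by an exponential decay. The function names and the one-year half-life are hypothetical choices made for the example.

```python
from datetime import date

def recency_weight(doc_date: date, today: date, half_life_days: float = 365.0) -> float:
    """Exponential decay: a document loses half its weight every half_life_days."""
    age_days = max((today - doc_date).days, 0)
    return 0.5 ** (age_days / half_life_days)

def rank_documents(docs, today, half_life_days=365.0):
    """Sort (title, relevance, doc_date) tuples by relevance * recency weight."""
    scored = [
        (title, relevance * recency_weight(doc_date, today, half_life_days))
        for title, relevance, doc_date in docs
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Hypothetical corpus: the old document is slightly more "relevant" on text alone.
docs = [
    ("2019 service description", 0.90, date(2019, 3, 1)),
    ("2026 service description", 0.80, date(2026, 1, 15)),
]
ranked = rank_documents(docs, today=date(2026, 2, 1))
print(ranked[0][0])  # prints "2026 service description"
```

Without the decay term, the 2019 document would rank first on relevance alone. With it, the older document's weight falls to under 1% of its original value.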

2. Structured Data

Structured data is information organized into rows and columns with explicit field names. It's the data type AI has been working with the longest, and still the one that predictive AI capabilities depend on most heavily.

Where it lives: Salesforce, HubSpot, Pipedrive (CRM records), Snowflake, BigQuery, Redshift (data warehouses), Excel, Google Sheets, ERPs like NetSuite or Sage, form submissions, API responses.

What AI does well with it: Lead scoring (73% probability of closing based on 18 signals). Pipeline forecasting (Q2 closed-won between $3.8M and $4.4M). Anomaly detection (this expense is 340% above category average). Churn prediction. Classification and segmentation at scale.

Common problems: Stale records (a 12,000-contact CRM where 4,000 entries have wrong titles and dead email addresses produces untrustworthy scores). Missing fields (if 60% of closed-won records have no "source" field, the model can't learn which sources convert). Siloed systems (Finance in NetSuite, Sales in Salesforce, Customer Success in Gainsight, with no integration and no cross-system reasoning).
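The missing-fields problem is easy to measure before a vendor conversation. The sketch below assumes your closed-won records can be exported as a list of dictionaries. The field names, sample rows, and the 80% threshold are illustrative assumptions, not a standard.

```python
def field_completeness(records, required_fields):
    """Return the share of records with a non-empty value for each required field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in required_fields}
    rates = {}
    for field in required_fields:
        # Treat missing keys, None, and blank strings as "not populated".
        filled = sum(1 for r in records if str(r.get(field) or "").strip())
        rates[field] = filled / total
    return rates

# Hypothetical export of closed-won records.
closed_won = [
    {"source": "webinar", "industry": "SaaS", "company_size": "51-200"},
    {"source": "", "industry": "Retail", "company_size": None},
    {"source": None, "industry": "SaaS", "company_size": "11-50"},
    {"source": "referral", "industry": "", "company_size": "201-500"},
    {"industry": "Finance", "company_size": "51-200"},
]
rates = field_completeness(closed_won, ["source", "industry", "company_size"])
for field, rate in rates.items():
    flag = "  <-- below threshold" if rate < 0.8 else ""
    print(f"{field}: {rate:.0%}{flag}")
# source: 40%  <-- below threshold
# industry: 80%
# company_size: 80%
```

Run the same check on whichever fields the scoring model depends on. A field that is mostly empty is a field the model can't learn from.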

3. Image

Business use cases for image AI extend well beyond e-commerce and manufacturing. The range runs from scanned invoices to product photos to screenshots of dashboards.

Where it lives: File storage (Google Drive, Dropbox, SharePoint), customer-uploaded portals, e-commerce catalogs (Shopify, WooCommerce), marketing asset libraries, manufacturing quality control systems, scanned document repositories.

What AI does well with it: OCR (converting scanned text to machine-readable characters, critical for invoice processing). Visual classification (defect vs. no-defect on a manufacturing line). Object detection. ID verification for KYC flows. Image generation (product shot variants, marketing visuals).

Common problems: Inconsistent quality (a model trained on clean studio photos fails on blurry field uploads). IP and copyright exposure from generation tools. Customer-uploaded documents often contain PII (passport numbers, medical forms) that carries its own governance requirements even though the data is visual.

4. Audio

Audio data enables one of the highest-ROI AI use cases in B2B: meeting intelligence. The moment a sales call or customer support conversation can be transcribed and analyzed, the business gains a data type it simply didn't have before: a searchable record of every spoken interaction.

Where it lives: Gong, Chorus, Fireflies (sales call recording platforms), Zoom cloud recordings, Microsoft Teams, call center systems, voicemail-to-text services.

What AI does well with it: Transcription. Sentiment analysis (was the customer frustrated at the end of the call?). Topic extraction (what objections came up?). Speaker identification. Call scoring (did the rep ask enough discovery questions?). Compliance monitoring.

Common problems: Consent requirements (recording without all-party consent is illegal in several US states and many other jurisdictions; legal review is mandatory before deployment). Background noise and speaker overlap degrade transcription accuracy. Rachel's meeting intelligence failure is the textbook case: the transcription model worked fine, but the speaker identification step had no access to her calendar or CRM contact list. The gap was a missing pipeline connection, not the AI.

5. Video

Video is audio plus image plus time, which makes it the richest and most expensive data type to work with. Processing video requires substantially more compute than any other type, so the ROI threshold is higher.

Where it lives: YouTube (owned channels), Loom (async messaging), Zoom cloud recordings, Vimeo (training content), security camera systems, product demo libraries.

What AI does well with it: Transcription (since video includes audio). Scene understanding. Highlight extraction. Chapter generation. Content moderation. Video generation (synthetic avatars, demo clips).

Common problems: Storage costs accumulate fast (one hour of 1080p video is 2-4 GB; 200 recorded meetings per week adds up quickly). Processing costs are significant for long-form content. Consent and biometric data requirements apply. Video captures faces, which adds obligations under laws like BIPA (Illinois) and GDPR beyond what audio alone requires.
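To make "adds up quickly" concrete, here is the arithmetic as a short sketch. It uses the 2-4 GB per hour and 200 meetings per week figures above. The one-hour meeting length is an assumption added for the example, so adjust it to your own average.

```python
def weekly_storage_gb(meetings_per_week, hours_per_meeting, gb_per_hour):
    """Raw storage added per week, before any compression or retention policy."""
    return meetings_per_week * hours_per_meeting * gb_per_hour

# Assumption: each recorded meeting runs about one hour.
low = weekly_storage_gb(200, 1.0, 2)
high = weekly_storage_gb(200, 1.0, 4)

print(f"Per week: {low:.0f}-{high:.0f} GB")                          # Per week: 400-800 GB
print(f"Per year: {low * 52 / 1000:.1f}-{high * 52 / 1000:.1f} TB")  # Per year: 20.8-41.6 TB
```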

6. Code

Code is structured text with formal syntax, but it behaves differently enough from natural language to deserve its own category. AI coding tools (GitHub Copilot, Amazon Q Developer, Cursor) are built around code's syntax patterns rather than treating it as prose.

Where it lives: GitHub, GitLab, Bitbucket (repositories), CI/CD systems (Jenkins, GitHub Actions), log aggregators (Datadog, Splunk, Sumo Logic), infrastructure-as-code files (Terraform, Ansible).

What AI does well with it: Code generation. Code review (flag security vulnerabilities, style violations, performance issues). Documentation. Debugging from error logs. Refactoring. Vulnerability scanning (find hardcoded credentials). Log analysis.

Common problems: Context window limits (AI reasons well about a single file, but struggles across a 500,000-line monorepo; tools like Cursor handle this via retrieval strategies). Secrets in repositories (API keys and credentials committed to code dramatically increase attack surface when connected to an AI assistant). Missing intent (the AI can read what the code does; it usually can't read why, and documentation and comments are the bridge).
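A quick scan for committed secrets is worth running before connecting any repository to an AI assistant. The sketch below is deliberately minimal: the three patterns are illustrative assumptions, and dedicated secret scanners ship far larger rule sets. Treat it as a smoke test, not a security control.

```python
import re

# Illustrative patterns only; this is not a complete rule set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_assignment": re.compile(
        r"(api_key|secret|password|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}

def scan_text(text):
    """Return (line_number, rule_name) for every line matching a pattern."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings

# Hypothetical file contents; none of these values are real credentials.
sample = '''import os
API_KEY = "sk_test_not_a_real_key_123"
aws_id = "AKIAABCDEFGHIJKLMNOP"
db_password = os.environ["DB_PASSWORD"]
'''
findings = scan_text(sample)
print(findings)  # [(2, 'hardcoded_assignment'), (3, 'aws_access_key_id')]
```

Line 4 is not flagged because it reads the password from the environment instead of hardcoding it, which is the pattern you want to see.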

7. Time-Series

Time-series data is any measurement recorded over time, usually at regular intervals: a metric at 9:00 AM, 9:01 AM, 9:02 AM. It's the native language of operations, finance, and infrastructure monitoring, and it enables forecasting and anomaly detection that no other data type can substitute for.

Where it lives: Monitoring tools (Datadog, New Relic, Prometheus), IoT sensor systems, financial systems (daily revenue, expense, headcount), website analytics (Google Analytics, Mixpanel, Amplitude), POS systems (transaction volume by hour and day).

What AI does well with it: Forecasting (next month's revenue, next quarter's churn rate). Anomaly detection (this metric is 3.4 standard deviations from its rolling baseline). Trend analysis (support volume is growing faster than revenue). Seasonality modeling.

Common problems: Clock drift and missing timestamps break the regular intervals that time-series models assume. Mixing sampling granularities (one system logs every minute, another every hour) produces unreliable baselines. Insufficient history is the most common gap: a forecasting model trained on 3 months of data can't reliably predict annual patterns. The rule of thumb is 2-3 full cycles of whatever pattern you're trying to model.
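Two of the ideas above, the rolling baseline and the 2-3 cycle rule of thumb, fit in a few lines. This is a sketch under simple assumptions: evenly spaced observations, a baseline built from the preceding `window` points, and a hypothetical daily ticket count. Production monitoring tools use more robust methods.

```python
from statistics import mean, stdev

def rolling_zscores(values, window):
    """Z-score of each point against the `window` points before it."""
    scores = []
    for i, value in enumerate(values):
        if i < window:
            scores.append(None)  # not enough history yet
            continue
        baseline = values[i - window:i]
        spread = stdev(baseline)
        # A flat baseline has zero spread, so no z-score can be computed.
        scores.append((value - mean(baseline)) / spread if spread > 0 else None)
    return scores

def has_enough_history(n_points, points_per_cycle, min_cycles=2):
    """Rule of thumb from the text: at least 2-3 full cycles of the pattern."""
    return n_points >= min_cycles * points_per_cycle

# Hypothetical daily support-ticket counts; the last day spikes.
tickets_per_day = [100, 102, 98, 101, 99, 103, 97, 100, 140]
scores = rolling_zscores(tickets_per_day, window=7)
anomalies = [i for i, z in enumerate(scores) if z is not None and abs(z) > 3]
print(anomalies)  # [8]

# Three months of daily data is not enough to model an annual pattern.
print(has_enough_history(n_points=90, points_per_cycle=365))  # False
```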

How data types combine in real use cases

Most business AI use cases span two or three data types. Understanding the combination tells you which pipelines to build and which data readiness problems to solve first.

| Use Case | Data Types | ACE Capabilities |
|---|---|---|
| Sales call intelligence (Gong-style) | Audio + Text + Structured | Ingest + Analyze + Generate |
| Lead scoring (Salesforce Einstein-style) | Structured + Text | Analyze + Predict |
| Invoice processing (AP automation) | Image + Structured | Ingest + Analyze + Execute |
| Support ticket triage (Zendesk AI-style) | Text | Analyze + Predict + Execute |
| Fraud detection (Stripe Radar-style) | Structured + Time-series | Ingest + Analyze + Predict + Execute |
| DevOps log analysis | Code + Time-series | Ingest + Analyze + Predict |
| Product demo analysis | Video + Text + Structured | Ingest + Analyze + Generate |

When a vendor pitches an AI tool, ask which data types it consumes. If those types aren't clean, accessible, and properly connected in your stack, the tool won't perform as promised regardless of how good the underlying model is.

Which data type feeds which ACE capability

This matrix maps the seven data types against the five ACE capabilities. "High" means the data type is a primary input. "Medium" means it's secondary or supporting. "Low" means the connection is uncommon.

| Data Type | Ingest | Analyze | Predict | Generate | Execute |
|---|---|---|---|---|---|
| Text | High | High | Medium | High | Low |
| Structured | Medium | High | High | Medium | Medium |
| Image | High | High | Low | High | Low |
| Audio | High | High | Low | Medium | Low |
| Video | High | Medium | Low | Medium | Low |
| Code | Medium | High | Low | High | Medium |
| Time-series | Medium | High | High | Low | Medium |

Three things stand out in this matrix.

Ingest is the entry point for non-text types. Images, audio, and video can't be reasoned about directly. They need conversion first (OCR, transcription, scene analysis). If your Ingest pipeline is broken, everything downstream fails.

Analyze is universal. Every data type feeds Analyze, because making sense of information always follows taking it in. This is why the Analyze capability appears in almost every real AI use case.

Predict runs on Structured and Time-series. Forecasting and scoring require historical patterns in structured form. A model fed dirty structured data or a short time-series history will underperform, however good the model is.
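If you want to query the matrix instead of reading it, it fits in a small lookup table. The ratings below are copied from the matrix in this article. The function name is a hypothetical helper for illustration.

```python
CAPABILITIES = ("Ingest", "Analyze", "Predict", "Generate", "Execute")

# Ratings copied from the matrix above, in CAPABILITIES order.
MATRIX = {
    "Text":        ("High", "High", "Medium", "High", "Low"),
    "Structured":  ("Medium", "High", "High", "Medium", "Medium"),
    "Image":       ("High", "High", "Low", "High", "Low"),
    "Audio":       ("High", "High", "Low", "Medium", "Low"),
    "Video":       ("High", "Medium", "Low", "Medium", "Low"),
    "Code":        ("Medium", "High", "Low", "High", "Medium"),
    "Time-series": ("Medium", "High", "High", "Low", "Medium"),
}

def data_types_rated(capability, rating="High"):
    """List the data types with the given rating for one capability."""
    column = CAPABILITIES.index(capability)
    return [name for name, row in MATRIX.items() if row[column] == rating]

print(data_types_rated("Predict"))  # ['Structured', 'Time-series']
print(data_types_rated("Ingest"))   # ['Text', 'Image', 'Audio', 'Video']
```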

Before you start any AI project: data inventory checklist

Run through this before signing a vendor contract or launching an internal initiative. It takes less than an hour and catches the most expensive mistakes.

1. Which data types does this use case require? Write them down specifically. Not "data" in general. Text (from where?), structured (which system?), audio (which recordings?), and so on.

2. Do you have that data today? Don't count data you plan to collect. Count data you have. If the use case requires 18 months of sales call recordings and you've been using Gong for 4 months, you don't have the data.

3. Is it accessible to the AI tool? Data that exists but can't be reached is data you don't have. Common blockers: no API, integration not built, on-premise access required, IT policy hasn't approved the connection.

4. Is it clean enough to be useful? For structured data: what percentage of records have the key fields populated? For text: is it fragmented across systems? For audio: what percentage of calls are actually recorded and stored?

5. Is it permissioned correctly? Customer audio, employee communications, and financial records all carry data-handling obligations. Confirm your DPA with the vendor and your internal policies before connecting.

6. Which data readiness problems need to be solved first? This is where most AI projects stall. The tool is ready; the underlying data isn't. Fix the data problem, then deploy the AI that depends on it. Boring sequence. The one that works.

What this tells you about Rachel's problem

Rachel's three failed AI tools each had a specific data problem, not an AI problem.

The meeting intelligence tool produced [Speaker 1] labels because the vendor's pipeline wasn't integrated with her calendar or CRM. Transcription worked fine. The speaker identification step simply never received the contact data it needed to match voices to names.

The lead-scoring model returned 7/10 for everyone because her CRM lacked differentiated historical data. Too many closed-won records had missing fields (source, industry, company size). The model couldn't find distinguishing patterns and defaulted to the average.

The proposal tool cited outdated services because its text corpus had no recency weighting. A 2019 service description carried the same weight as a 2026 one.

In each case, the AI worked as intended. And now Rachel can name the specific data type, identify where the gap was, and describe what would need to change. That's the value of a data inventory: not just a list, but a diagnostic.

What to read next

This article gave you the catalog. The next step is understanding what makes these data types usable for AI.

- Data readiness for AI: the practical prerequisites (accessible, structured, fresh, and permitted)
- Clean data field guide: diagnosing data quality problems before they sink a project
- Ingest: the first ACE capability, and the one that determines whether image, audio, and video data enter your workflows at all
- Analyze: the capability that applies to every data type, where raw data becomes business insight
- The ACE Framework: the full periodic table, with the six-layer stack showing how data, capabilities, and patterns connect

