Building a VoC Pipeline from CS to Product: From Raw Signal to Actionable Input

Here's a pattern that plays out at nearly every mid-market SaaS company. A CSM hears a customer say they're manually exporting data to Excel because your reporting doesn't filter by region. Another CSM hears it from a different account two weeks later. A third hears a variation from a third account. All three log it somewhere (or don't) in their own shorthand, with different severity signals attached. Six months later, a PM asks in a planning session: "Is there actual demand for regional reporting?" Nobody can answer confidently. The signal existed. The pipeline didn't.

The broken telephone isn't a motivation problem. CSMs aren't forgetting to care about product quality. The problem is infrastructure: CS has no shared structure for converting what customers say into a format that Product can actually use for decisions. What most teams call a VoC process is actually just a capture impulse with no routing logic attached. The Slack channel, the quarterly survey, the "send us feedback" form: none of these are pipelines. They're data graveyards with a slightly better user interface. Forrester research on VoC program maturity finds that nearly half of VoC programs rate their own maturity as low or very low. The gap between capture and action is the rule, not the exception.

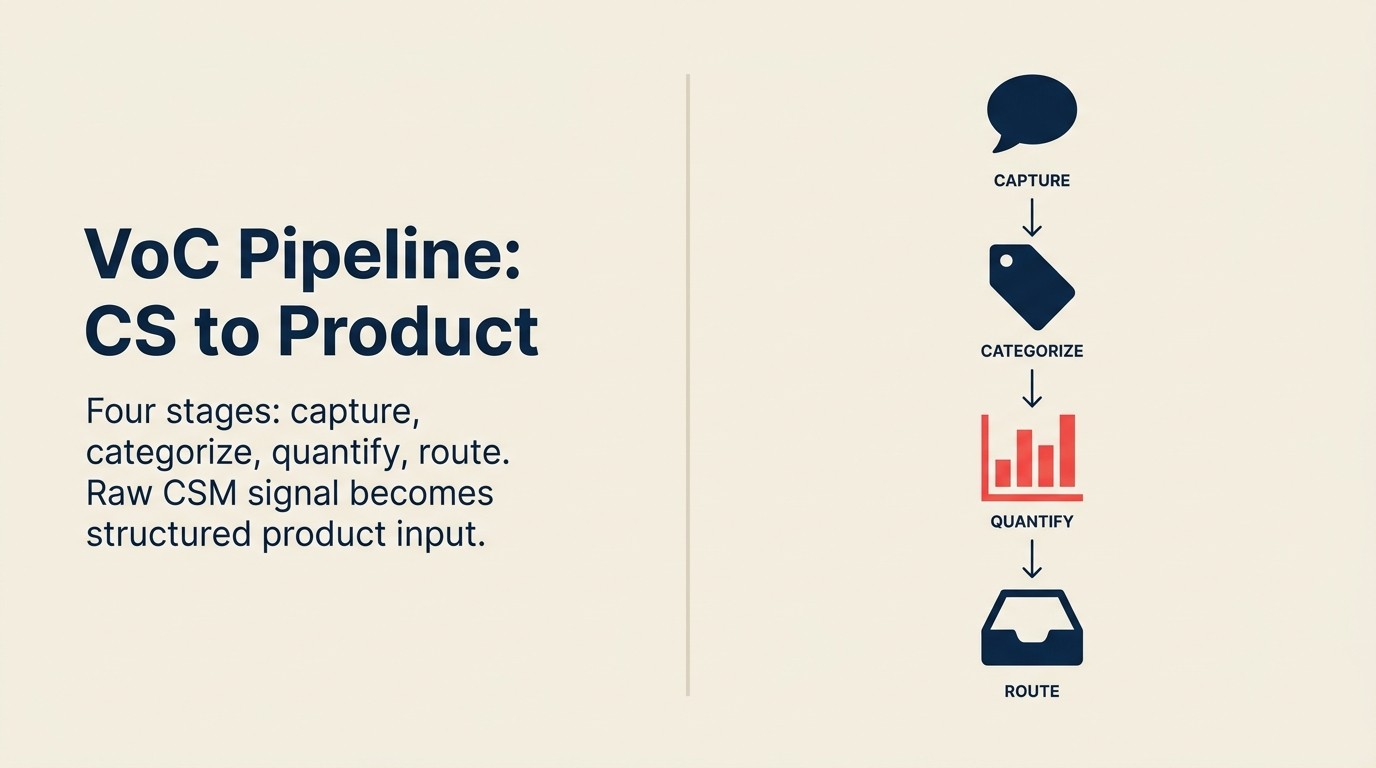

The 4-Stage VoC Pipeline is an operational infrastructure with four stages (capture, categorize, quantify, route) that converts raw customer signal into prioritized product input on a defined cadence. It's not a culture change program. It's a shared system designed by CS Ops and Product leadership that functions regardless of the personal relationship between individual CSMs and PMs. The same voice-of-customer signal that CS surfaces for Product also shapes how Sales teams message to prospects: the two pipelines share a common upstream source.

What a VoC Pipeline Actually Is

Key Facts: Signal Capture and Product Decisions

- 70% of product teams report that customer feedback is "inconsistently structured" when it arrives, making it hard to aggregate across accounts, per Productboard's State of Product Management survey.

- Only 22% of CS teams have a standardized capture format that feeds directly into the product planning process; most still rely on ad hoc Slack messages or quarterly summaries, per Totango's CS benchmark report.

- Feature requests that arrive with ARR weight attached are 2.6x more likely to make it to a quarterly roadmap review, compared to requests logged without revenue context, per internal benchmarking shared by multiple Productboard customers.

The word "pipeline" is deliberate. A pipeline has stages, flow direction, and output. When a prospect goes into the sales pipeline, everyone understands what happens: they move through qualification, discovery, proposal, and close. Each stage has defined criteria. Deals don't just "sit" in the pipeline indefinitely. They advance or they exit.

Customer feedback should work the same way. A raw signal enters the system at Stage 1 and exits as a prioritized product input at Stage 4. If a signal can't move through the pipeline, it should be explicitly declined or parked, not left open forever.

The four stages:

Stage 1: Capture. The moment a CSM hears something relevant from a customer, the signal enters the system in a structured format. Not free text. Not a Slack message. A structured record with defined fields. The voice of the customer discipline in quality management has defined this capture-to-action model for decades. The B2B SaaS context just adds ARR weighting on top of the classical structure.

Stage 2: Categorize. Captured signals are tagged by type (feature request, workflow gap, bug, missing integration) by CS Ops or a PM liaison. This is how signals become themes that can be aggregated across accounts.

Stage 3: Quantify. Each theme gets a revenue weight, not just an account count. Ten SMB accounts requesting a feature is not the same as one enterprise account requesting it as a condition of renewal. The quantification step is where CS signal becomes something Product can rank against strategic priorities.

Stage 4: Route. Quantified themes go to the right PM on a defined cadence, through a defined channel. The quarterly feedback review is the primary ritual. Urgent signals have an expedited path.
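The "advance or exit" rule can be sketched as a small state machine. This is a minimal illustration, not a prescribed implementation: the `Stage` names and the `advance` and `exit_pipeline` helpers are hypothetical, chosen only to mirror the four stages and the declined/parked exits described above.

```python
from enum import Enum


class Stage(Enum):
    # The four pipeline stages, plus the two explicit exits.
    CAPTURED = "captured"
    CATEGORIZED = "categorized"
    QUANTIFIED = "quantified"
    ROUTED = "routed"
    DECLINED = "declined"
    PARKED = "parked"


# Forward transitions: a signal can only move to the next stage in order.
_NEXT = {
    Stage.CAPTURED: Stage.CATEGORIZED,
    Stage.CATEGORIZED: Stage.QUANTIFIED,
    Stage.QUANTIFIED: Stage.ROUTED,
}
_EXITS = {Stage.DECLINED, Stage.PARKED}


def advance(stage):
    """Move a signal to the next stage, or fail if it has nowhere to go."""
    if stage not in _NEXT:
        raise ValueError(f"{stage.name} has no next stage")
    return _NEXT[stage]


def exit_pipeline(stage, outcome):
    """Explicitly decline or park a signal that is still in flight."""
    if outcome not in _EXITS:
        raise ValueError("outcome must be DECLINED or PARKED")
    if stage not in _NEXT:
        raise ValueError(f"{stage.name} is not in flight")
    return outcome
```

The point of the sketch is that there is no "stay open forever" state: every in-flight signal either advances or leaves with a recorded outcome.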

The reason most "VoC programs" fail is that they're Stage 1 only. Capture happens. Nothing after it does. A Slack channel for customer feedback is Stage 1 with no Stages 2, 3, or 4. It's a place where signal enters and stops moving. So what does it actually take to design each stage well?

Stage 1: Capture

Capture is the hardest stage to design well because it has to fit inside the CSM's existing workflow. If capturing product signal requires a CSM to open a separate tool, fill out a separate form, or spend more than 90 seconds of extra work per call, it won't happen consistently. The discipline degrades within weeks.

Four types of signal warrant capture:

Feature requests: a specific ask for functionality that doesn't exist, often with a workaround the customer is already using. "We'd love to be able to filter the dashboard by region" paired with "right now we export to Excel and do it manually" is a complete feature request signal.

Workflow gaps: the customer describing manual steps they're taking because the product doesn't support the full workflow. These are often more valuable than feature requests because they reveal the problem, not just the proposed solution.

Competitive mentions: what the customer says a competitor does that your product doesn't. These arrive in two flavors: "we're evaluating [competitor] because they have X" and "we came from [competitor] and we miss Y." Both matter for different reasons.

Churn-risk signals tied to product gaps: the "if you don't build X, we're going to have to reconsider the renewal" category. These are the highest-urgency signals and need an expedited path to Product, not just the standard quarterly cadence. CS Ops should cross-reference these signals with early warning systems in the account health layer. A product-gap churn signal often surfaces alongside health score degradation.

What to standardize: the signal type, the verbatim quote, and the account context (tier, ARR, renewal date). What to leave flexible: the description of the workaround, the severity assessment, and any additional context the CSM thinks is relevant. Over-standardizing kills verbatim quality. Under-standardizing makes aggregation impossible.
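One way to picture the standardized-versus-flexible split is as a record with required and optional fields. The sketch below is illustrative only: the class and field names, the tier labels, and the sample account are assumptions, not a schema from any particular CRM.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class SignalType(Enum):
    # The four signal types that warrant capture.
    FEATURE_REQUEST = "feature_request"
    WORKFLOW_GAP = "workflow_gap"
    COMPETITIVE_MENTION = "competitive_mention"
    CHURN_RISK = "churn_risk"


@dataclass
class CapturedSignal:
    # Standardized fields: required, so aggregation is always possible.
    signal_type: SignalType
    verbatim: str        # the customer's own words, unedited
    account_name: str
    tier: str            # assumed labels, e.g. "SMB", "mid-market", "enterprise"
    arr: float           # annual recurring revenue, in dollars
    renewal_date: date
    # Flexible fields: optional free text the CSM fills in as needed.
    workaround: str = ""
    severity_note: str = ""
    extra_context: str = ""

    def __post_init__(self):
        # Reject records that would be useless for aggregation.
        if not self.verbatim.strip():
            raise ValueError("verbatim quote is required")
        if self.arr < 0:
            raise ValueError("arr must be non-negative")


# Hypothetical example record.
signal = CapturedSignal(
    signal_type=SignalType.FEATURE_REQUEST,
    verbatim="We'd love to be able to filter the dashboard by region",
    account_name="Example Account",
    tier="mid-market",
    arr=80_000,
    renewal_date=date(2026, 3, 31),
    workaround="Exports to Excel and filters manually",
)
```

Keeping the flexible fields as plain strings preserves verbatim quality, while the required fields guarantee that every record can be grouped by type and weighted by account context later.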

The right home for capture is inside the CRM or CS platform that CSMs already use every day. Custom fields on call notes or account records, not a separate product feedback tool. The integration between the capture layer and wherever Product works (Productboard, Jira, etc.) is a sync problem, not a workflow problem. Solve the sync separately. Once capture is working, the next challenge is turning raw signals into themes Product can act on.

Stage 2: Categorize

Raw captured signals are not product inputs. They're data points. Categorization is what turns data points into themes, the level at which Product can actually make decisions.

A tagging taxonomy needs three things to work: it has to be jointly owned (CS Ops and Product together), it has to be small enough to apply consistently (fewer than ten primary tags), and it has to map to how Product thinks about its roadmap areas. The CS-product alignment maturity model gives teams a benchmark for where their current tagging practice sits and what the next stage looks like.

Four primary categories cover most mid-market SaaS feedback:

| Category | Signal type | Example |
|---|---|---|
| Feature request | Specific ask with a defined behavior | "Allow users to filter reports by date range and region simultaneously" |
| Workflow gap | Manual step in the customer's process | "We export to Excel and pivot manually every week because the native view doesn't support this" |
| Bug / reliability | Behavior that doesn't match the documented product | "The export fails for accounts with more than 500 rows" |
| Missing integration | A third-party tool connection that doesn't exist | "We can't use this alongside HubSpot without manually syncing the data" |

Who does the categorization? Not the CSM. CSMs should apply a rough primary tag at capture time ("feature request" vs. "workflow gap"). But the final categorization, especially the alignment to roadmap themes, should happen at the CS Ops or PM liaison level. CSMs aren't positioned to know which PM owns which area or which theme a request maps to.

Categorization drift (when the taxonomy gets applied inconsistently over time) is one of the most common pipeline failures. The fix is a monthly calibration review between CS Ops and the PM liaison: pull a sample of recent tags and check alignment. If the same type of signal is being tagged differently by different CSMs, the category definition needs clarification, not a conversation with individual CSMs. With clean categories in place, the next step is the one most teams skip: attaching revenue weight to each theme.
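The monthly calibration check can be as simple as comparing the CSM's rough tag with the final tag on a sample of signals. The sketch below assumes the sample is a list of `(csm_tag, final_tag)` pairs; the function names and sample data are hypothetical.

```python
from collections import Counter


def agreement_rate(sample):
    """Share of sampled signals where the CSM's rough tag matched the final tag."""
    if not sample:
        return 1.0
    matches = sum(1 for csm_tag, final_tag in sample if csm_tag == final_tag)
    return matches / len(sample)


def tag_disagreements(sample):
    """Count each (csm_tag, final_tag) pair where the two tags differ."""
    return Counter(
        (csm_tag, final_tag) for csm_tag, final_tag in sample if csm_tag != final_tag
    )


# Hypothetical sample pulled for a calibration review.
sample = [
    ("feature_request", "feature_request"),
    ("feature_request", "workflow_gap"),
    ("workflow_gap", "workflow_gap"),
    ("feature_request", "workflow_gap"),
    ("bug", "bug"),
]

rate = agreement_rate(sample)          # 3 of 5 tags match
confusions = tag_disagreements(sample)  # which pairs get mixed up, and how often
```

A pair that keeps showing up in `confusions` points at a category definition that needs tightening, which is the fix the calibration review is meant to produce.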

Stage 3: Quantify

This is where the pipeline diverges from everything most teams currently do. Account count is not weight. Fourteen accounts requesting a feature is a raw count. Fourteen accounts representing $340K ARR with three of them renewing in the next 90 days is a weighted signal. The difference determines whether a PM treats it as background noise or a prioritization input.

The core quantification logic has two components:

ARR at risk: the contract value of accounts requesting the feature, adjusted for renewal proximity and stated churn signal. An account renewing in 60 days with a CSM-flagged churn risk tied to this feature carries higher weight than an account with the same ARR renewing in 18 months with no expressed urgency.

ARR expansion potential: the headroom in accounts where this feature is described as a blocker for adding seats or modules. An account at $80K ARR with 40 untapped seats and a dependency on this feature for expansion is a significant weight that won't show up in a vote count.

The ARR-Weighted Feedback Quantification article covers the full formula with a three-account worked example. The key point at the pipeline level: quantification should happen at Stage 3, not later. If signals reach Product without revenue weight, PMs default to what's measurable, which is usually ticket count. From there, routing is what gets decisions made.
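To make the two components concrete, here is a rough sketch of how a theme's weight might be computed. It is not the formula from the ARR-weighting article: the multiplier tiers, the churn-flag discount, and all names are assumptions chosen only to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass
class AccountRequest:
    arr: float                  # current contract value, in dollars
    days_to_renewal: int
    churn_flag: bool            # CSM-flagged churn risk tied to this theme
    expansion_arr: float = 0.0  # CS estimate of ARR blocked on this theme


def renewal_multiplier(days_to_renewal):
    # Assumed tiers: the nearer the renewal, the heavier the weight.
    if days_to_renewal <= 90:
        return 1.0
    if days_to_renewal <= 365:
        return 0.5
    return 0.25


def arr_at_risk(req):
    # Assumed rule: no stated churn signal halves the weight.
    urgency = 1.0 if req.churn_flag else 0.5
    return req.arr * renewal_multiplier(req.days_to_renewal) * urgency


def theme_weight(requests):
    """Summarize one theme: raw account count next to its revenue weight."""
    at_risk = sum(arr_at_risk(r) for r in requests)
    expansion = sum(r.expansion_arr for r in requests)
    return {
        "accounts": len(requests),
        "arr_at_risk": at_risk,
        "expansion_arr": expansion,
        "total": at_risk + expansion,
    }


# Hypothetical theme with three requesting accounts.
requests = [
    AccountRequest(arr=120_000, days_to_renewal=60, churn_flag=True),
    AccountRequest(arr=120_000, days_to_renewal=540, churn_flag=False),
    AccountRequest(arr=80_000, days_to_renewal=200, churn_flag=False,
                   expansion_arr=20_000),
]
weight = theme_weight(requests)
```

Under these assumed multipliers, the first two accounts have identical ARR but very different weights, which is exactly the distinction a raw account count hides.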

Stage 4: Route

Routing solves two problems: it gets the right signals to the right people, and it does it on a cadence that fits product planning cycles rather than whenever CS has something urgent.

Two routing paths:

The batch path: the quarterly feedback review. This is the primary ritual where CS Ops presents the top weighted themes to PM leads, with account context, verbatims, and ARR weights attached. It's not a feature request meeting; it's an input session. PMs bring the roadmap context; CS brings the revenue-weighted signal. Together they produce a prioritized shortlist, not just a ranked list of everything CS submitted.

The urgent path: for churn-risk signals tied to product gaps where the renewal is imminent. These bypass the quarterly cadence and go directly to the relevant PM within 48 hours of capture. The CSM flags it; CS Ops confirms the ARR weight; it routes to the PM as a one-page brief. The brief includes: account name, ARR, renewal date, verbatim, and the specific decision CS needs before the next renewal call.

What breaks routing: a shared inbox that no PM owns. If the destination for feedback is a generic product inbox or a Jira epic called "Customer Requests," nothing happens. Each route path needs a named PM or PM lead on the receiving end. Triage by committee fails.
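The two paths and the named-owner rule can be sketched as a single routing function. This is an illustration under stated assumptions: the `PM_OWNERS` mapping, the area names, and the 90-day definition of "imminent" are hypothetical choices; only the 48-hour window comes from the text above.

```python
from datetime import date, datetime, timedelta

URGENT_SLA = timedelta(hours=48)   # urgent signals reach the PM within 48 hours
IMMINENT_RENEWAL_DAYS = 90         # assumed threshold for an "imminent" renewal

# Hypothetical mapping from roadmap area to a named PM, never a team alias.
PM_OWNERS = {
    "reporting": "Alex (PM, Reporting)",
    "integrations": "Sam (PM, Integrations)",
}


def route(area, churn_flag, renewal_date, captured_at):
    """Pick the urgent or batch path and attach a named PM owner."""
    owner = PM_OWNERS.get(area)
    if owner is None:
        # Refuse to fall back to a shared inbox; surface the ownership gap.
        raise LookupError(f"no named PM owner for area {area!r}")
    days_to_renewal = (renewal_date - captured_at.date()).days
    if churn_flag and days_to_renewal <= IMMINENT_RENEWAL_DAYS:
        return {"path": "urgent", "owner": owner, "due": captured_at + URGENT_SLA}
    # Everything else waits for the quarterly feedback review.
    return {"path": "batch", "owner": owner, "due": None}


# Hypothetical signals captured on the same morning.
captured = datetime(2025, 1, 6, 9, 0)
urgent = route("reporting", True, date(2025, 3, 1), captured)
batch = route("integrations", False, date(2025, 3, 1), captured)
```

Raising an error for an unowned area is a deliberate choice in this sketch: a missing owner becomes visible at routing time instead of being discovered months later in an unread inbox.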

Common Pipeline Failures and Fixes

Capture-only pipeline. The team has a form or a CRM field for product feedback. Signals go in. No Stage 2, 3, or 4 exists. The feedback accumulates into an unread backlog. This is the feature request graveyard pattern in its earliest form. Fix: build Stage 2 first, before adding more capture instrumentation. A pipeline with 50% capture and 100% categorization is more useful than one with 100% capture and 0% categorization.

Categorization drift. The taxonomy gets applied inconsistently. "Feature request" and "workflow gap" get used interchangeably. Themes can't be aggregated. Fix: monthly calibration session between CS Ops and PM liaison, with a sample review of recent tags.

Routing to no one. Quantified themes get batched into a document and sent to "Product." No PM has ownership of the document. It sits unread for three months. Fix: route to a named PM or PM lead, not to a team alias or shared folder.

Feedback review that's not decision-making. The quarterly session becomes a presentation by CS Ops and a listening session by Product, with no output. Fix: the session ends with a prioritized shortlist and a named PM owner for each item. Items with no owner don't appear on the shortlist. They go back into the queue.

Rework Analysis: Teams that implement the 4-Stage VoC Pipeline see the most immediate improvement at the transition from Stage 1 to Stage 2, the categorization step. Based on patterns across mid-market SaaS teams, companies that invest in a shared tagging taxonomy before adding capture instrumentation produce 3x more actionable signal per CSM than those that scale capture first. The pipeline's value isn't in how much feedback enters; it's in how little gets lost between Stages 2 and 4. Rework's CS-product alignment features are designed to embed this routing discipline into the tools CS teams already use daily.

Tooling Notes

Mid-market teams typically run a version of this pipeline on a CRM plus spreadsheet combination before they invest in dedicated VoC tooling (Productboard, Aha!, UserVoice). That's fine. The pipeline model works with any tooling. What it doesn't work without is the workflow discipline.

The moment to move from spreadsheet-based to dedicated tooling is when CS Ops is spending more than two hours per week manually aggregating and routing signals. At that point, the manual overhead starts creating lag in Stage 3 and Stage 4, and the quarterly review arrives with stale data. Tomasz Tunguz's writing on revenue at risk makes the underlying case for why CS needs structured, per-account revenue data. The same data infrastructure that powers ARR weighting also enables the quantification stage of the VoC pipeline.

But the tool selection matters less than the workflow design. A Productboard instance without a defined tagging taxonomy, a named routing path, and a quarterly review cadence is still a Stage 1 pipeline with better UX. The four stages don't come with the software.

How to Audit Your Current Pipeline

Three diagnostic questions for VP CS and Head of Product to answer together:

1. Can you pull a list of all product feedback submitted by CS in the last 90 days, with ARR attached to each item? If the answer is no, or if it requires significant manual work, your Stage 3 (quantify) doesn't exist yet.

2. Can you identify which PM owns each piece of feedback currently sitting in your system? If most items are unowned, your Stage 4 (route) has a structural gap.

3. In the last product planning cycle, how many items on the roadmap can be traced back to a specific CS-sourced signal with a named account and ARR weight? If the answer is zero or unclear, the pipeline isn't feeding decisions. It's feeding a database. Tracking feature adoption post-launch is one of the best ways to close this audit loop: if CS-sourced features land with low adoption, Stage 2 categorization may be solving the wrong problem.
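If the feedback already lives in an exportable form, the three questions reduce to three numbers. The sketch below assumes each feedback item and roadmap item is a dictionary; the keys `arr`, `pm_owner`, and `source_signal_id` are hypothetical field names, not fields from any specific tool.

```python
def audit(feedback_items, roadmap_items):
    """Answer the three diagnostic questions as coverage figures."""
    total = len(feedback_items)
    with_arr = sum(1 for f in feedback_items if f.get("arr") is not None)
    owned = sum(1 for f in feedback_items if f.get("pm_owner"))
    traced = sum(1 for r in roadmap_items if r.get("source_signal_id"))
    return {
        # Question 1: how much feedback has ARR attached (Stage 3)?
        "arr_coverage": with_arr / total if total else 0.0,
        # Question 2: how much feedback has a named PM owner (Stage 4)?
        "ownership_coverage": owned / total if total else 0.0,
        # Question 3: how many roadmap items trace back to a CS signal?
        "roadmap_items_traced": traced,
    }


# Hypothetical export of the last 90 days of feedback and the last roadmap.
feedback = [
    {"id": 1, "arr": 80_000, "pm_owner": "Alex"},
    {"id": 2, "arr": 45_000, "pm_owner": None},
    {"id": 3, "arr": None, "pm_owner": None},
    {"id": 4, "arr": 120_000, "pm_owner": None},
]
roadmap = [
    {"title": "Regional report filters", "source_signal_id": None},
    {"title": "New onboarding flow", "source_signal_id": None},
]
result = audit(feedback, roadmap)
```

In this made-up export, most items carry ARR but almost none have an owner and nothing on the roadmap traces back to CS, which would point at Stage 4 as the structural gap.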

A 30-Day Build Plan

For teams with no structured VoC pipeline today:

Week 1: CS Ops and the PM lead jointly define the four-category tagging taxonomy. Add signal type as a field to the existing CRM call note template. Brief CSMs for five minutes in the team sync.

Week 2: CS Ops pulls all product-relevant notes from the past 90 days and manually categorizes them. This is the baseline. It also shows how much signal already exists in the system that never got routed.

Week 3: CS Ops builds the minimum viable spreadsheet: signal type, verbatim, account name, ARR, renewal date, CSM-assigned churn risk flag. Share with the PM lead. Identify the top five weighted items.

Week 4: Schedule the first quarterly feedback review. Block 60 minutes. Bring the top five weighted items with verbatims. The output of the session: a prioritized shortlist with named PM owners and a decision status for each item (build / defer / decline). Capturing Feedback Systematically covers the capture layer in detail, including structured note formats and the verbatim principle that makes PM decisions defensible. Use the CS-PM 1:1 cadence to build ongoing alignment between cycles so the quarterly review doesn't arrive cold.
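The Week 3 spreadsheet needs nothing beyond the six columns listed. As a minimal sketch, the snippet below writes those columns to CSV and picks a shortlist for the review; the ordering rule (churn-flagged rows first, then ARR descending) is an assumption standing in for whatever weighting the team agrees on, and the sample rows are invented.

```python
import csv
import io

# The six columns of the minimum viable spreadsheet.
COLUMNS = ["signal_type", "verbatim", "account_name", "arr",
           "renewal_date", "churn_risk"]


def to_csv(rows):
    """Serialize signal rows to CSV text with a fixed column order."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def top_items(rows, n=5):
    # Assumed ordering: churn-flagged rows first, then by ARR descending.
    return sorted(rows, key=lambda r: (not r["churn_risk"], -r["arr"]))[:n]


# Hypothetical rows from the 90-day backfill.
rows = [
    {"signal_type": "feature_request", "verbatim": "Filter reports by region",
     "account_name": "Account A", "arr": 40_000,
     "renewal_date": "2025-09-30", "churn_risk": False},
    {"signal_type": "workflow_gap", "verbatim": "We pivot in Excel every week",
     "account_name": "Account B", "arr": 25_000,
     "renewal_date": "2025-03-15", "churn_risk": True},
    {"signal_type": "missing_integration", "verbatim": "No HubSpot sync",
     "account_name": "Account C", "arr": 90_000,
     "renewal_date": "2025-12-01", "churn_risk": False},
]

sheet = to_csv(rows)
shortlist = top_items(rows, n=2)
```

Sorting flagged accounts ahead of larger unflagged ones is a judgment call; a team that prefers pure ARR ordering only needs to change the sort key.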

The pipeline doesn't need to be perfect at launch. It needs to produce a decision. Once PMs see that CS-sourced signal with ARR weight attached changes the conversation rather than just adding noise, the pipeline earns its place in the planning process.

Frequently Asked Questions

What's the difference between a VoC pipeline and a feature request backlog?

A backlog is a list. A pipeline is a flow with defined stages and outputs. Backlogs accumulate indefinitely; pipelines process signal to a decision (build, defer, decline) on a defined cadence. Most product backlogs are feature request graveyards with better formatting. The pipeline model requires each signal to move through quantification and routing before it becomes a backlog item, so only signals with revenue weight and a named PM owner make it to the roadmap conversation.

How often should the quarterly feedback review actually happen?

Quarterly is the standard cadence for the batch path. But the name is slightly misleading. For many teams, "monthly triage for urgent items" plus "quarterly strategic review" is the right two-cadence model. Urgent signals (churn risk + renewal within 90 days) can't wait three months for the batch path. Build the expedited path first; the quarterly session is the structure that handles everything below the urgency threshold.

How do you get PMs to trust CS-sourced data?

Attach revenue weight to it. PMs who are skeptical of CS feedback are usually skeptical of anecdotes ("three customers complained"), not of data ("three accounts representing $280K ARR renewing in the next 90 days have explicitly tied this to their renewal"). The ARR weight is the translation layer between CS language and PM language. Present it in a format PMs already use for roadmap decisions.

What is the 4-Stage VoC Pipeline?

The 4-Stage VoC Pipeline is a structured operational model (capture, categorize, quantify, route) that converts raw customer signal from CS into prioritized product input on a defined cadence. Unlike a feedback form or Slack channel, each stage has defined entry criteria, owner accountability, and an output that feeds directly into the next stage. The pipeline distinguishes itself from a backlog by requiring every signal to move through revenue quantification before reaching Product.

What tools do you need to run a VoC pipeline?

The 4-Stage VoC Pipeline runs on the tools CS teams already use: typically a CRM for capture and a spreadsheet for categorization and quantification. Dedicated VoC tooling like Productboard or Aha! adds value once CS Ops is spending more than two hours per week manually aggregating signals. The workflow discipline matters more than the tooling: a Productboard instance without a defined tagging taxonomy and a quarterly review cadence is still a Stage 1 pipeline with better UX.

How do you measure whether the VoC pipeline is working?

Three diagnostic questions: Can you pull all CS-sourced feedback from the last 90 days with ARR attached? Can you identify which PM owns each piece of feedback? How many items on the last roadmap trace back to a specific CS signal with a named account? If any of these answers is "no" or "unclear," the pipeline has a structural gap at Stage 3 (quantify) or Stage 4 (route).

