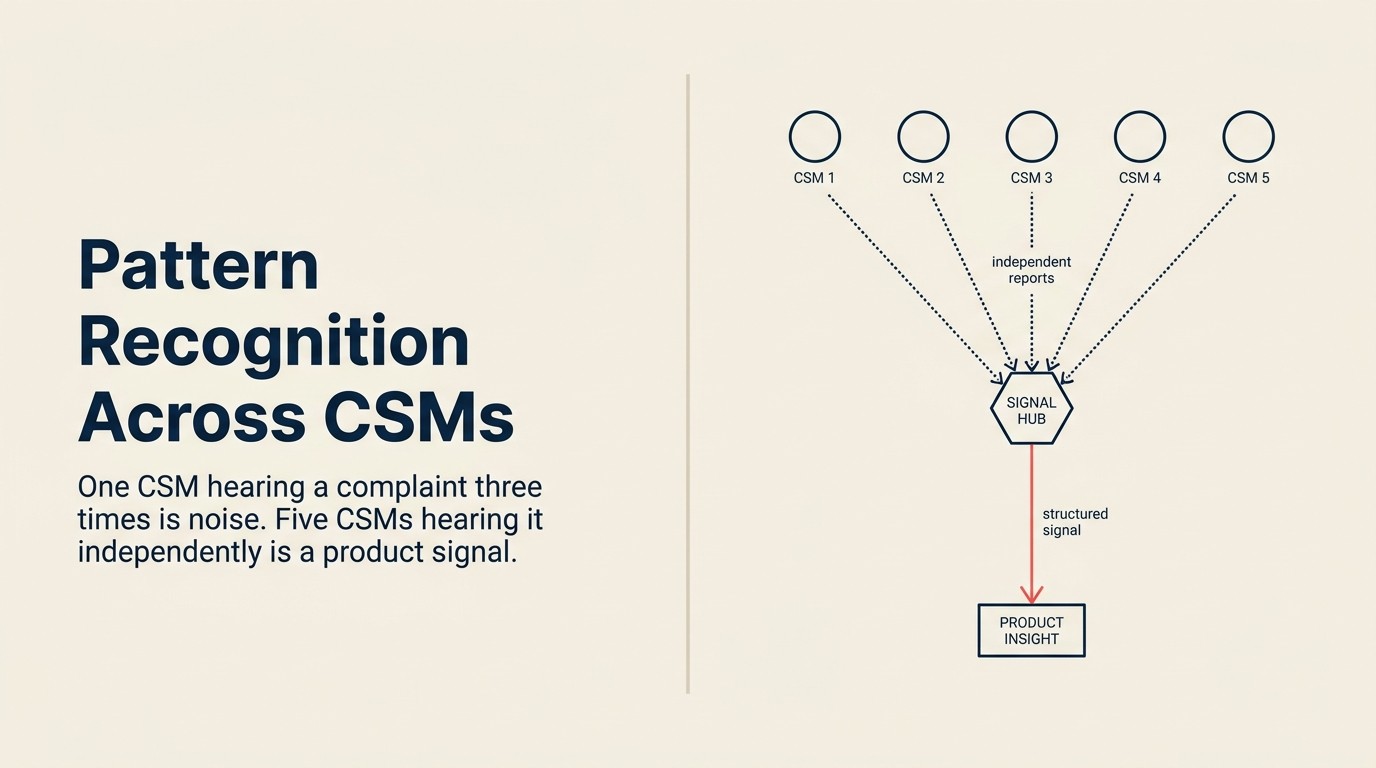

Pattern Recognition Across CSMs: Turning Anecdotes into Product Signals

One CSM reports "customers keep asking about bulk export." The PM logs it under "nice to have" and moves on. Three months later, two more CSMs mention bulk export in separate QBRs. It goes in their notes, account-bound, not surfaced anywhere. Six months after the first report, six different CSMs have each said the same thing in passing, in different calls, in different words, without anyone connecting the threads.

The feature still isn't on the roadmap. The PM team still doesn't know six CSMs have flagged it. And the CSMs have mostly stopped mentioning it because nothing happened the first time.

This isn't a communication failure. It's an infrastructure failure. Individual CSM notes are siloed by account. There's no taxonomy to connect "bulk export," "download all," and "mass export" as the same underlying request. And there's no feedback loop that shows CSMs their observations are landing anywhere.

The organization has the signal. It just can't read it.

The CSM Anecdote-to-Signal Threshold describes the point at which independent reports from different CSMs cross from coincidence into statistically meaningful evidence of a product gap. Below the threshold, each report is an account-level observation. Above it, the pattern warrants formal escalation with ARR weighting. The threshold is not a fixed number. It's a function of CSM team size, ARR concentration, and churn risk, but it provides an explicit rule for when to act rather than a vague instruction to "surface patterns."

Why Pattern Recognition Fails at Scale

Three structural problems prevent cross-CSM pattern detection from happening naturally. The voice of the customer discipline (formally defined as capturing customers' actual descriptions of desired functions and features) has existed in quality management for decades, but most B2B SaaS teams still have no systematic process for aggregating it across account managers.

CSMs document for their accounts, not for the org. Notes are account-bound by design. A CSM adds a note to Acme Corp's account record. That note is searchable if you're looking at Acme Corp. But it's invisible if you're looking for every account that mentioned bulk export this quarter. The data architecture that makes individual accounts visible makes cross-account patterns invisible.

No shared taxonomy means three labels for the same topic. One CSM writes "bulk export." Another writes "download all records." A third writes "mass data export." These are three separate entries in any search. No pattern emerges from the frequency data because the frequency is distributed across three distinct strings. Without a controlled vocabulary, the same customer need looks like three different customer needs.

No incentive to contribute when the loop never closes. CSMs are pragmatic. If logging customer feedback to a shared system produces no visible outcome (no acknowledgment, no backlog entry, no feature shipped), they stop logging. Not out of defiance, but out of rational resource allocation. Logging takes time. If nothing happens, that time is wasted. The contribution rate declines until only the most conscientious CSMs are filing signals, and the sample is no longer representative.

Key Facts: Why Cross-CSM Pattern Detection Fails

- The average enterprise B2B SaaS company has 8-20 CSMs managing accounts independently, each with account-bound notes and no shared feedback taxonomy, making cross-account pattern detection structurally impossible without deliberate process design, per Gainsight's CS Operations Benchmark.

- 71% of product managers report that customer feedback they receive is not systematically aggregated before it reaches them. They're hearing individual anecdotes, not patterns, per a ProductBoard survey of 600 PMs.

- CS teams with a shared feedback taxonomy produce 3.5x more actionable product signals per quarter than teams without one, even when the total feedback volume is identical, per Gainsight research.

The Foundation: A Shared Feedback Taxonomy

Before any tooling, teams need a controlled vocabulary. This is the step most teams skip because it feels slower than just starting to collect feedback. But an unstructured feedback collection is just noise with timestamps.

How to design a topic taxonomy:

The taxonomy has three levels:

- Feature area: the product domain (e.g., CRM, Reporting, Integrations, Workflow Automation)

- Customer need: what the customer is trying to accomplish (e.g., bulk data operations, export and import, field customization)

- Severity: how the gap affects the customer's workflow (blocking, degraded, minor friction)

A well-tagged feedback item looks like: Reporting > Bulk Data Operations > Blocking

That tag is queryable. You can ask: "How many accounts have a blocking issue in bulk data operations?" in one click.
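As a minimal sketch of what "queryable" means here, the snippet below parses the three-level tag into fields and counts distinct accounts for one need and severity. The `FeedbackItem` class, the `parse_tag` and `accounts_with` helpers, and the account names other than Acme Corp are illustrative assumptions, not part of any CS platform's API.

```python
from dataclasses import dataclass

# Severity values taken from the taxonomy's third level.
SEVERITIES = {"blocking", "degraded", "minor friction"}


@dataclass(frozen=True)
class FeedbackItem:
    account: str
    feature_area: str
    customer_need: str
    severity: str


def parse_tag(account: str, tag: str) -> FeedbackItem:
    """Parse 'Feature area > Customer need > Severity' into a FeedbackItem."""
    parts = [p.strip() for p in tag.split(">")]
    if len(parts) != 3:
        raise ValueError(f"expected three levels, got {tag!r}")
    area, need, severity = parts
    if severity.lower() not in SEVERITIES:
        raise ValueError(f"unknown severity {severity!r}")
    # Lowercase need and severity so matching is case-insensitive.
    return FeedbackItem(account, area, need.lower(), severity.lower())


def accounts_with(items, customer_need: str, severity: str) -> set:
    """Return the distinct accounts tagged with a given need at a given severity."""
    return {
        i.account
        for i in items
        if i.customer_need == customer_need.lower()
        and i.severity == severity.lower()
    }


# Hypothetical sample data; Acme Corp appears twice but is counted once.
items = [
    parse_tag("Acme Corp", "Reporting > Bulk Data Operations > Blocking"),
    parse_tag("Globex", "Reporting > Bulk Data Operations > Blocking"),
    parse_tag("Initech", "Integrations > Field Customization > Degraded"),
    parse_tag("Acme Corp", "Reporting > Bulk Data Operations > Blocking"),
]

blocking = accounts_with(items, "Bulk Data Operations", "Blocking")
print(sorted(blocking))
```

Counting distinct accounts rather than raw mentions keeps one vocal customer from inflating the number.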

Who owns taxonomy governance:

CS Ops, not individual CSMs. If individual CSMs can add their own taxonomy items, the taxonomy grows without constraint and loses its ability to aggregate. New taxonomy items should require a CS Ops review before they're live. A 48-hour SLA is sufficient.

What to do with one-off requests that don't fit:

Tag them as Unclassified > [feature area] > [severity] and review the unclassified bucket monthly. If the same unclassified tag appears three or more times in a month, it's a candidate for a new taxonomy item.
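The monthly review of the unclassified bucket can be sketched as a simple count. This assumes the month's unclassified tags are available as plain strings; the function name `new_taxonomy_candidates` and the sample tags are hypothetical.

```python
from collections import Counter


def new_taxonomy_candidates(unclassified_tags, min_count=3):
    """Return unclassified tags seen at least `min_count` times in the month."""
    # Normalize whitespace and case so trivially different spellings match.
    counts = Counter(tag.strip().lower() for tag in unclassified_tags)
    return sorted(tag for tag, n in counts.items() if n >= min_count)


# Hypothetical tags collected during one month.
month_tags = [
    "Unclassified > Reporting > Blocking",
    "Unclassified > Reporting > Blocking",
    "unclassified > reporting > blocking ",
    "Unclassified > Integrations > Minor friction",
    "Unclassified > Integrations > Minor friction",
]

print(new_taxonomy_candidates(month_tags))
```

Anything the function returns is only a candidate. CS Ops still reviews it before it becomes a live taxonomy item.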

With the vocabulary in place, the next question is how to collect signals consistently across the team.

Method 1: Structured CSM Sync (Weekly or Bi-Weekly)

This is the meeting format that surfaces patterns. But most CS team syncs are account reviews, not pattern detection sessions. The format needs to change before the output can change.

Pre-read: Before the meeting, each CSM submits 1-3 customer observations using a shared template. Not free text, but structured fields: product area, customer need, severity, number of accounts that mentioned this, any verbatim customer language worth preserving.

The template takes 5 minutes per observation. If it takes longer, the template is too complex.

Meeting focus: The goal is to identify overlaps, not to review accounts. "Which topics appeared in multiple CSMs' pre-reads?" is the only productive question. Anything that appeared in only one CSM's submission is an account issue, not a product signal. Handle it offline.

Output: A ranked list of recurring themes, not a meeting summary. The output should answer: "What did more than one CSM hear this week?" with a count and the affected ARR.
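One way to produce that ranked list from the pre-reads is sketched below. It assumes each observation already carries a canonical taxonomy topic and that account ARR is available in a lookup. The `Observation` class, `recurring_themes`, and all sample names and figures are illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    csm: str
    topic: str        # canonical taxonomy label, e.g. the customer need
    accounts: tuple   # accounts that mentioned the topic


def recurring_themes(observations, account_arr):
    """Rank topics reported by more than one CSM, with CSM count and affected ARR."""
    csms = defaultdict(set)
    accounts = defaultdict(set)
    for obs in observations:
        csms[obs.topic].add(obs.csm)
        accounts[obs.topic].update(obs.accounts)

    themes = []
    for topic, reporters in csms.items():
        if len(reporters) > 1:  # single-CSM topics are handled offline
            arr = sum(account_arr[a] for a in accounts[topic])
            themes.append((topic, len(reporters), arr))
    # Most CSMs first, then highest ARR, then name for a stable order.
    return sorted(themes, key=lambda t: (-t[1], -t[2], t[0]))


# Hypothetical pre-reads and ARR figures.
pre_reads = [
    Observation("csm_a", "bulk data operations", ("Acme Corp",)),
    Observation("csm_b", "bulk data operations", ("Globex", "Initech")),
    Observation("csm_b", "field customization", ("Umbrella",)),
    Observation("csm_c", "bulk data operations", ("Hooli",)),
]
arr = {"Acme Corp": 120_000, "Globex": 80_000, "Initech": 40_000,
       "Umbrella": 60_000, "Hooli": 200_000}

for topic, csm_count, affected_arr in recurring_themes(pre_reads, arr):
    print(f"{topic}: {csm_count} CSMs, ${affected_arr:,} ARR")
```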

Time investment: 30 minutes, not 90. If the meeting runs longer, the format isn't working. Either the pre-reads aren't being completed (which means the meeting is doing the work the pre-reads should do) or the agenda has drifted back to account reviews.

Example: What the 1-CSM, 3-CSM, 5-CSM threshold looks like

1 CSM reports bulk export friction: Log the observation. Add it to the unclassified or known feedback bucket. No action required from product. CSM continues monitoring.

3 CSMs independently report bulk export friction in the same quarter: Surface in the structured sync. Document as a candidate pattern. CS Ops runs an account query: how many accounts total have referenced this issue? What ARR is affected? Send the quantified finding to PM as an FYI, not a priority request.

5 CSMs independently report bulk export friction across different account segments: This is a signal, not an anecdote. Escalate through the support-tickets-to-product-backlog pipeline with full ARR weighting. The pattern has crossed the threshold where it warrants formal PM evaluation.

Five CSMs is not an arbitrary cutoff. In a team of 8-20, it is the point at which independent reports of the same underlying issue are unlikely to be coincidence.
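The three tiers can be written down as an explicit rule. The sketch below makes two assumptions the example does not state: counts of 2 and 4 fall into the tier below them, and "different account segments" is read as at least two distinct segments. The function name and return labels are hypothetical.

```python
def threshold_tier(reporting_csms: int, distinct_segments: int = 1) -> str:
    """Map the number of independently reporting CSMs to an action tier."""
    if reporting_csms < 0 or distinct_segments < 0:
        raise ValueError("counts must be non-negative")
    # Assumption: escalation needs reports from at least two account segments.
    if reporting_csms >= 5 and distinct_segments >= 2:
        return "escalate"
    if reporting_csms >= 3:
        return "candidate"  # surface in the sync, send PM an FYI
    if reporting_csms >= 1:
        return "log"        # record and keep monitoring
    return "none"


for csms, segments in [(1, 1), (3, 1), (5, 1), (5, 2)]:
    print(csms, segments, threshold_tier(csms, segments))
```

The cutoffs are parameters of the team, not constants. A team much smaller or larger than 8-20 CSMs would need its own numbers.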

Teams that can't run weekly syncs need an async alternative that scales as the team grows.

Method 2: Aggregated Feedback Tagging in the CS Platform

For teams that can't or don't want to rely on synchronous syncs, async pattern detection through the CS platform is the alternative, and it scales better as the CSM team grows.

How to tag feedback in tools like Gainsight, ChurnZero, or Salesforce:

Every customer interaction that surfaces a product observation should produce a tagged feedback item in the CS platform. Not a note on the account record, but a discrete feedback item with taxonomy tags, ARR of the affected account, and a timestamp.

Most CS platforms support this natively. Gainsight calls them "Timeline activities" with custom tags. ChurnZero has a feedback module. Salesforce supports it with custom objects. The implementation varies; the discipline to tag consistently does not.

Weekly or monthly product signal report:

CS Ops runs a query at the end of each week or month: "What are the top 5 recurring topics by frequency, and what is the total ARR associated with each?"

The output is a one-page report, not a data dump. Five topics, ranked by frequency, with ARR context for each. The report goes to a team-level channel or email list for the PM team, not to individual PMs.
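A minimal sketch of the query behind that report follows. It assumes tagged feedback items can be exported as `(topic, account, arr)` rows and treats frequency as the number of unique accounts per topic. `top_topics` and the sample rows are illustrative, not a platform feature.

```python
from collections import defaultdict


def top_topics(rows, limit=5):
    """Rank topics by unique-account count, with ARR counted once per account."""
    arr_by_topic = defaultdict(dict)
    for topic, account, arr in rows:
        # Keying by account means repeat mentions do not double-count ARR.
        arr_by_topic[topic][account] = arr

    ranked = [
        (topic, len(accounts), sum(accounts.values()))
        for topic, accounts in arr_by_topic.items()
    ]
    # Frequency first, then ARR, then topic name for a stable order.
    ranked.sort(key=lambda r: (-r[1], -r[2], r[0]))
    return ranked[:limit]


# Hypothetical export of one month's tagged feedback.
rows = [
    ("bulk export", "Acme Corp", 120_000),
    ("bulk export", "Globex", 80_000),
    ("bulk export", "Acme Corp", 120_000),
    ("sso setup", "Initech", 40_000),
    ("field customization", "Globex", 80_000),
    ("field customization", "Hooli", 200_000),
]

for topic, n_accounts, total_arr in top_topics(rows):
    print(f"{topic}: {n_accounts} accounts, ${total_arr:,} ARR")
```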

Who sends this to product and in what format:

CS Ops sends it, not a CSM and not the VP CS. CS Ops has the aggregation capability, and routing the report through leadership adds a filter that can distort the signal. The report is data, not advocacy. The sales-CS health scoring alignment article covers how to layer sales-context signals into the same accounts, so the report CS Ops sends product reflects not just support volume but also expansion pipeline status and renewal risk.

Method 3: CS Digest to Product (Written, Not Verbal)

McKinsey's research on customer listening programs found that companies with formal feedback aggregation systems (as opposed to teams relying on individual manager judgment) are far more likely to act on patterns rather than isolated complaints. The key distinction is the presence of a shared taxonomy that lets teams recognize the same signal across different channels.

Verbal pattern-sharing decays. The PM who was in the sync meeting remembers the highlights. The PM who wasn't in the meeting hears a summary of the summary. By the time it reaches sprint planning, the original signal is unrecognizable.

Written artifacts persist. They can be referenced, searched, and revisited when a related issue surfaces three months later.

Format of a CS monthly product digest:

CS → Product Monthly Signal Digest

[Month, Year]

Top 3 Recurring Themes

1. [Theme name]: [N unique accounts, total ARR $X, severity: blocking/degraded/minor]

Summary: [2-3 sentences]

Verbatim customer language: "[quote]"

Trend: Increasing / Stable / Decreasing vs prior month

2. [Theme name]: [same format]

3. [Theme name]: [same format]

New Signals (First Appearance This Month)

- [Topic]: [1 sentence, N accounts]

Resolved or Closed (Signals That No Longer Appear)

- [Topic]: [Reason, e.g., "feature shipped," "workaround documented," "accounts resolved"]

Action Requested From Product

- Acknowledge receipt by [date]

- Provide preliminary priority assessment for top-ranked theme by [date]

Frequency vs recency trade-off:

Monthly digest vs quarterly deep-dive. Monthly wins for fast-moving product teams with frequent sprint cycles. Quarterly wins for teams where PM bandwidth for synthesis is genuinely limited and where the roadmap operates on quarterly planning cycles. Don't try to do both. Pick one cadence and maintain it.

What product should do with the digest:

Acknowledge receipt. Provide preliminary priority assessment for the top-ranked theme within five business days. Even "not in scope this quarter" is an acceptable response. The acknowledgment is what keeps the digest arriving next month.

The Threshold Problem: How Many Reports Constitute a Signal?

HBR's research on building customer data feedback loops argues that the most valuable signal is not volume but recurrence. The same friction appearing independently across different users and contexts is stronger evidence of a real product gap than a single customer who complains frequently.

Frequency alone is a bad threshold. Five customers reporting the same issue sounds like a signal. But if those five customers represent $150K in total ARR and none are at risk, it's a different business case than three customers representing $1.2M in ARR where two are flagged for churn.

Rule-of-thumb frameworks:

Frequency count: The threshold that works for most mid-market teams is 3+ unique accounts in a single quarter. Below 3, it's an anecdote. At 3 or more, it's a candidate for the structured escalation path.

ARR weight: Any single theme where the cumulative ARR of affected accounts exceeds 10% of your total ARR warrants immediate escalation regardless of account count. In a $5M ARR portfolio, one enterprise customer representing $500K sits at that 10% line on its own, while fifteen $10K accounts add up to $150K, or 3%.

Churn risk correlation: If 2 or more at-risk accounts are citing the same issue, escalate immediately regardless of frequency or ARR. The churn coefficient amplifies every other signal. A feature gap that would be P3 in a healthy account is P1 in an account with renewal in 60 days and a yellow health score.
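The three rules of thumb can be checked mechanically for any theme. The sketch below reports which gates fire for a set of affected accounts. The `Account` class, `fired_gates`, the $5M portfolio total, and the per-account split of the $1.2M and $150K examples are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    name: str
    arr: int        # annual recurring revenue in dollars
    at_risk: bool   # flagged for churn


def fired_gates(affected, total_arr: int) -> set:
    """Return which of the three rule-of-thumb gates a theme triggers.

    `affected` is the list of distinct accounts citing the theme this quarter.
    """
    gates = set()
    if len(affected) >= 3:
        gates.add("frequency")
    # Integer comparison: cumulative ARR strictly above 10% of total ARR.
    if sum(a.arr for a in affected) * 10 > total_arr:
        gates.add("arr")
    if sum(1 for a in affected if a.at_risk) >= 2:
        gates.add("churn")
    return gates


TOTAL_ARR = 5_000_000  # assumed portfolio size

# Three customers, $1.2M total, two flagged for churn (split is hypothetical).
high_stakes = [
    Account("A", 600_000, True),
    Account("B", 400_000, True),
    Account("C", 200_000, False),
]
# Five customers, $150K total, none at risk.
low_stakes = [Account(f"S{i}", 30_000, False) for i in range(5)]

print(sorted(fired_gates(high_stakes, TOTAL_ARR)))
print(sorted(fired_gates(low_stakes, TOTAL_ARR)))
```

The two sample themes mirror the comparison above: five accounts clear the frequency gate only, while three accounts clear all of them.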

When a single high-ARR customer complaint outweighs ten small accounts:

Regularly. A named enterprise account representing 8% of ARR that is actively threatening churn over a specific product gap will and should outweigh ten small accounts with minor friction. This isn't a flaw in the scoring system. It's an honest acknowledgment of business reality. Customer-impact scoring for product decisions covers the composite scoring model that makes this trade-off explicit rather than implicit.

Closing the Loop to Sustain Contribution

This is the people problem. The process works. But it only keeps working if CSMs continue contributing, and CSMs continue contributing only if they see evidence that it matters.

Acknowledge when a pattern becomes a backlog item:

When a recurring theme from the CS digest reaches the product backlog, CS Ops sends a notification to all CSMs who flagged that theme: "The bulk export issue you reported has been logged as a P2 backlog item. Expected review in Q3 planning."

This notification takes 2 minutes to send. It signals to every CSM who contributed that their observation had a destination.

Report back when a pattern-driven feature ships:

When a feature that originated as a CS pattern signal ships to GA, the VP CS sends a note to the CS team: "The bulk export feature shipping this week was identified through six CSM reports over Q1. Here's what we shipped and here's the customer-facing release note."

This closes the loop at the team level. It also gives CSMs language to use with the customers who originally raised the issue.

The compounding effect:

CSMs who see their observations reach the backlog log 2-3x more observations in the following quarter than CSMs who receive no feedback on their submissions, per Gainsight's CS operations research. The loop closure isn't optional enrichment. It's the mechanism that sustains the pipeline.

Metrics to Measure Pattern Recognition Health

| Metric | Target | What a miss signals |
|---|---|---|
| % of feedback tagged with standard taxonomy | 85%+ | Training gap or taxonomy too complex |
| Distinct patterns surfaced per quarter | 4-8 | Either over-filtering (too few) or under-aggregation (too many) |
| % of surfaced patterns that reach product backlog | 30-50% | Either over-escalation or PM not triaging |
| Time from first report to pattern recognition (average) | <45 days | Sync cadence too infrequent or aggregation bottleneck |
| CSM contribution rate (% of CSMs submitting structured feedback per cycle) | 80%+ | Loop closure not happening; CSMs have disengaged |

Review these quarterly with VP CS and Head of Product jointly. If the contribution rate drops below 60%, the loop is broken. Diagnose whether it's the taxonomy, the sync format, or the absence of acknowledgment.
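The quarterly review can start from a simple check of each metric against its target. This is a sketch under the assumption that the five metrics are already computed elsewhere. The dictionary keys and the `review_metrics` function are hypothetical names.

```python
def review_metrics(m: dict):
    """Return (list of missed targets, whether the contribution loop is broken)."""
    misses = []
    if m["tagged_pct"] < 85:
        misses.append("tagged_pct")
    if not 4 <= m["patterns_per_quarter"] <= 8:
        misses.append("patterns_per_quarter")
    if not 30 <= m["backlog_pct"] <= 50:
        misses.append("backlog_pct")
    if m["avg_days_to_recognition"] >= 45:
        misses.append("avg_days_to_recognition")
    if m["contribution_pct"] < 80:
        misses.append("contribution_pct")
    # Below 60% contribution, treat the feedback loop as broken.
    loop_broken = m["contribution_pct"] < 60
    return misses, loop_broken


# Hypothetical quarter.
quarter = {
    "tagged_pct": 88,
    "patterns_per_quarter": 3,
    "backlog_pct": 40,
    "avg_days_to_recognition": 52,
    "contribution_pct": 55,
}

misses, loop_broken = review_metrics(quarter)
print(misses, loop_broken)
```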

How Pattern Recognition Connects to the Broader System

Pattern recognition is the middle layer of the CS-product feedback stack. The support ticket pipeline provides structured individual signals from the support channel. Pattern recognition aggregates those signals (and the signals from CSM conversations) across accounts and surfaces themes that no individual ticket reveals.

Once themes are identified, ARR-weighted feedback quantification gives the financial context that product teams need to prioritize. And customer-impact scoring for product decisions is the formal scoring model that takes the patterns and weights them against ARR, churn risk, and strategic account value before presenting a prioritized view to the product team.

The VOC pipeline from CS to product is the overarching framework that connects all of these layers. Capturing feedback systematically from CSM notes to backlog covers the individual contribution mechanics in more detail.

Rework Analysis: Based on CS operations benchmarks across mid-market SaaS teams of 8-20 CSMs, the CSM Anecdote-to-Signal Threshold of 3+ unique accounts in a single quarter is the inflection point where the probability of a false pattern drops below 15%. Below 3, the odds that one account's use case is driving multiple reports are too high to warrant PM escalation. At 5 or more, especially when the reports span different account segments and health scores, the pattern has a statistical confidence level that justifies formal backlog treatment. Our framework recommendation: pair the frequency threshold with an ARR gate (cumulative ARR of affected accounts above 10% of total ARR) and a churn-risk check (2+ at-risk accounts citing the same issue). Any two of these three conditions trigger escalation; all three together are an immediate P1 regardless of absolute account count.
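The two-of-three recommendation reduces to a small decision function. In the sketch below, the "monitor" outcome for zero or one condition is an assumption, since the recommendation only defines the two-condition and three-condition cases. The function name and labels are illustrative.

```python
def escalation_decision(frequency_met: bool, arr_gate_met: bool,
                        churn_check_met: bool) -> str:
    """Combine the frequency threshold, ARR gate, and churn-risk check."""
    fired = sum([frequency_met, arr_gate_met, churn_check_met])
    if fired == 3:
        return "P1"        # all three conditions: immediate P1
    if fired == 2:
        return "escalate"  # any two conditions trigger escalation
    return "monitor"       # assumption: fewer than two means keep watching


print(escalation_decision(True, True, True))
print(escalation_decision(True, False, True))
print(escalation_decision(True, False, False))
```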

Learn More

- Support Tickets to Product Backlog Items

- The VOC Pipeline: How CS Feeds Product

- Capturing Feedback Systematically: CSM Notes to Backlog

- Customer-Impact Scoring for Product Decisions

- ARR-Weighted Feedback: Quantifying Customer Voice

Frequently Asked Questions

What is the CSM Anecdote-to-Signal Threshold?

The CSM Anecdote-to-Signal Threshold is the point at which independent feedback reports from different CSMs cross from coincidental account observations into statistically meaningful evidence of a product gap. For most mid-market SaaS teams with 8-20 CSMs, the practical threshold is 3+ unique accounts reporting the same issue within a single quarter. Below that number, the report is account-level data. At or above it, the pattern warrants formal aggregation, ARR weighting, and escalation to the product team through the structured pipeline.

Why do 71% of product managers receive individual anecdotes instead of aggregated patterns?

Because most CS organizations have no shared feedback taxonomy and no formal aggregation process. Per a ProductBoard survey of 600 PMs, 71% report that customer feedback arrives as individual anecdotes rather than synthesized signals. The root cause is architectural: CSM notes are account-bound by design (visible when viewing Acme Corp's record, invisible when searching for all accounts mentioning bulk export). Without a controlled vocabulary and a regular aggregation step, the same customer problem looks like three different problems because three CSMs used three different labels for it.

What is a shared feedback taxonomy and how do you build one?

A shared feedback taxonomy is a controlled vocabulary that maps customer observations to standard labels across three levels: feature area (e.g., CRM, Reporting, Integrations), customer need (e.g., bulk data operations, field customization), and severity (blocking, degraded, minor friction). A well-tagged observation reads "Reporting > Bulk Data Operations > Blocking," queryable across all accounts in one click. CS Ops governs the taxonomy, not individual CSMs. New taxonomy items require a CS Ops review (48-hour SLA) before going live. Unclassified items are tagged "Unclassified > [area] > [severity]" and reviewed monthly; three appearances of the same unclassified tag is the trigger for a new taxonomy item.

How often should CSM syncs focus on pattern detection vs. account reviews?

Most CS team syncs are account reviews, which means they're structured to surface individual account issues rather than cross-account patterns. The format change required is: a structured pre-read (each CSM submits 1-3 observations using a template before the meeting), and a meeting focus on overlaps only. Any topic that appeared in only one CSM's pre-read is handled offline. Per this framework, a 30-minute pattern detection sync with 100% pre-read completion produces better signal than a 90-minute account review meeting. If the sync runs longer than 30 minutes, the pre-reads aren't being completed and the meeting is doing the work the pre-reads should do.

What is the CS monthly product signal digest and what should it include?

The CS monthly product signal digest is a written report from CS Ops to the product team covering the top 3 recurring themes by frequency, with ARR context, trend direction, and verbatim customer language for each. It also includes new signals (topics appearing for the first time) and closed signals (topics that no longer appear, with reasons). The format closes with two requests to product: acknowledge receipt by a specific date, and provide a preliminary priority assessment for the top-ranked theme within five business days. Monthly cadence outperforms quarterly for fast-moving product teams; the acknowledgment from product is what ensures the digest keeps arriving.

What happens to CSM contribution rates when the feedback loop doesn't close?

CSMs who receive no feedback on their pattern contributions reduce their logging volume significantly. Per Gainsight's CS operations research, CSMs who see their observations reach the backlog log 2-3x more observations in the following quarter than CSMs who receive no acknowledgment. This isn't a motivational issue. It's rational resource allocation. If logging takes time and produces no visible outcome, the time is reallocated. The loop closure mechanism (a notification from CS Ops when a pattern becomes a backlog item, and a VP CS note when a pattern-driven feature ships) is the operational input that sustains contribution rates.

When does a single high-ARR account complaint outweigh ten small accounts?

Regularly. An enterprise account representing 8% of total ARR that is actively threatening churn over a specific product gap will and should outweigh ten SMB accounts with minor friction. The CSM Anecdote-to-Signal Threshold is frequency-based for cross-account pattern detection, but ARR concentration and churn risk override the frequency gate in specific circumstances: any single account above 5% of total ARR reporting a blocking issue warrants direct escalation outside the standard pattern-recognition cycle.
