ARR-Weighted Feedback: Quantifying the Customer Voice by Revenue, Not Vote Count

Your vote count says fourteen customers want better reporting. Your vote count is probably right. What your vote count doesn't tell you: those fourteen accounts represent $180K annual recurring revenue (ARR). And the three enterprise accounts that didn't vote for it, the ones who mentioned it once in a QBR and never followed up, represent $1.2M ARR.

You built reporting. You prioritized the fourteen. And 14 months later, one of the three enterprise accounts churned in a renewal conversation that went sideways when they surfaced a problem you thought you'd solved. The CSM had flagged the signal. It was in the notes. It just didn't register against a vote count that pointed the other direction.

This isn't a hypothetical. It's the systematic outcome of using ticket count or upvote totals as the primary input to product prioritization. The model has a structural bias: it weights customers who engage with your feedback mechanisms rather than customers who are commercially significant. Enterprise accounts complain to their CSM, not to your product portal. They don't click upvote buttons. They sign contracts and quietly evaluate alternatives.

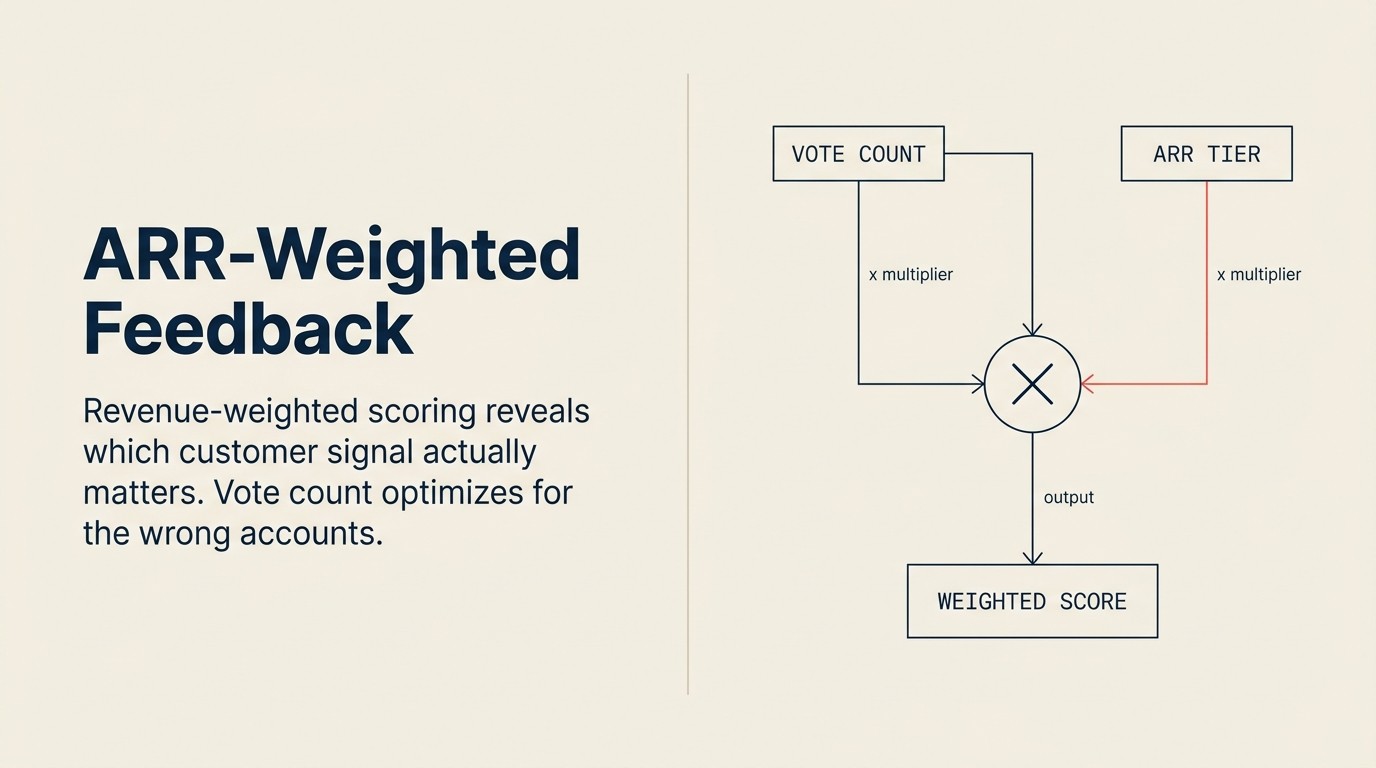

ARR-weighted feedback replaces vote count with a revenue-anchored model where each request carries a dollar weight that reflects actual retention risk and expansion potential. It's not a perfect model. But it's a significantly better input to roadmap decisions than the count of users who clicked a button. Tomasz Tunguz's framework for quantifying revenue at risk lays out the per-account methodology that ARR weighting borrows directly: churn signal strength, renewal proximity, and contract value combine into a single urgency number CS Ops can calculate from existing CRM data.

Why Vote Count Is the Wrong Model

The vote count model isn't arbitrary. It emerged from consumer product thinking, A/B tests, user surveys, upvote systems, where the goal is to understand what the largest number of users want. In a B2C context with relatively homogeneous users, that model makes sense. In a B2B SaaS context with a wide ARR distribution across customer tiers, it systematically misallocates development resources.

Three structural problems:

One account, one vote, regardless of contract value. A $10K SMB account clicking "upvote" on a feature request has identical weight to a $500K enterprise account doing the same. But the commercial reality is that the enterprise account's renewal decision is 50x more impactful on your NRR. A model that treats them equally is encoding a bias toward the segment that drives less revenue.

Survivorship bias in who submits tickets. The customers who engage with your feedback mechanisms are not a representative sample of your customer base. They're the customers who are motivated to submit formal feedback, often because they're actively exploring alternatives or have high self-service orientation. Enterprise accounts with a dedicated CSM don't need to submit a ticket. They raise the issue in their monthly sync. That signal disappears from vote-count models because it arrives through a human channel, not a digital one. MIT Sloan's analysis of B2B customer experience documents this gap directly: enterprise buyers use relationship channels, not self-service portals, to communicate their most important needs.

Volume-based prioritization systematically deprioritizes enterprise accounts. Enterprise customers churn harder and more quietly than SMB accounts. They don't complain; they evaluate. By the time you see a churn signal from an enterprise account, the conversation with the competitor has often already started. A model that underweights their feedback also underweights the early signal that would have caught the risk.

PMs default to vote count because it's available, auditable, and defensible. "Fourteen customers voted for this" is a sentence a PM can say in a planning session without anyone challenging the methodology. ARR-weighted scores require a bit more explanation, but they're far more likely to produce decisions that protect net revenue retention (NRR). The question isn't whether to weight by revenue. It's how to build the model.

Key Facts: Vote Count vs. Revenue-Weighted Prioritization

- Enterprise accounts submit formal product feedback at one-third the rate of SMB accounts relative to their contract value, using their CSM as the channel rather than the feedback portal, per Gainsight research.

- Product teams using ARR-weighted feedback models report 27% fewer enterprise churn events attributable to unaddressed product gaps, versus teams using vote-count prioritization, per TSIA's CS-product alignment study.

- Companies that switch from vote count to ARR-weighted prioritization see an average 12-point NRR improvement over 18 months, primarily driven by improved enterprise retention, per ChurnZero benchmarking research.

The ARR-Weighted Alternative: Core Concept

The core shift is simple: instead of counting requests, weight them. Each feedback item carries a revenue figure, not just the number of accounts requesting it, but a dollar weight that reflects how commercially significant those accounts are and how urgent the signal is.

The ARR-Weighted Feedback Formula has two components:

ARR at risk: the contract value at stake if this gap isn't addressed. Higher weight if the account is renewing soon. Higher weight if the CSM has flagged a churn signal tied specifically to this request.

ARR expansion potential: the additional ARR that could be unlocked if this gap is addressed. Some accounts are holding off on adding seats or modules specifically because a feature they depend on doesn't exist. That expansion headroom is a positive weight, not just a risk signal.

The formula:

Weight = (ARR × renewal proximity factor × churn signal strength) + (expansion headroom × dependency score)

Each variable is defined precisely enough to be calculated by CS Ops from data that already exists in the CRM.

Building the ARR Weight: Variable by Variable

ARR: the annual contract value for the account. Pull from CRM. Use the current year's contract value, not the lifetime total.

Renewal proximity factor: how close is the renewal date? Accounts renewing soon carry higher urgency weight:

- Renewing in less than 90 days: 1.5x

- Renewing in 90-180 days: 1.0x

- Renewing in 180-365 days: 0.7x

- Renewing beyond 12 months: 0.5x

The logic: a renewal 18 months away is low-urgency. The product team has time to address the gap before the renewal conversation. A renewal in 60 days is high-urgency. If the gap isn't acknowledged in the renewal discussion, it becomes a churn reason.

Churn signal strength: CSM-assigned assessment of how explicitly this request is tied to retention risk:

- Explicit churn statement ("if you don't build X, we'll have to look elsewhere"): 1.0

- CSM-inferred risk based on account health trends and the nature of the request: 0.5

- No expressed churn signal, general preference: 0.1

This is a judgment call, not a calculation. That's appropriate. The CSM's contextual read of the account is a real input, not noise. But it needs to be calibrated: "CSM-inferred risk" should be reserved for accounts where the CSM has documented health concerns, not applied to every request. Customer health monitoring data is the most reliable source for calibrating these inferences. Health score trends validate or challenge what CSMs flag manually.

Expansion headroom: the ARR value of untapped seats, modules, or upgrades the account could be purchasing but isn't. Pull from CRM if tracked. Estimate from seat count and average revenue per seat if not. Zero if the account is at full deployment.

Dependency score: binary: 1 if the CSM has documented this feature as a stated blocker for the expansion conversation, 0 if no dependency has been expressed.

A Worked Example: Three Accounts, One Feature Request

Feature: multi-region reporting filter (the ability to filter dashboards simultaneously by geographic region and account owner).

Account A: $40K ARR, renewing in 60 days, CSM flagged churn risk ("customer explicitly said this is on their list of requirements for renewal"), feature is their stated renewal condition.

ARR at risk: $40K × 1.5 (90-day proximity) × 1.0 (explicit churn statement) = $60K Expansion headroom: $0 (no expansion opportunity identified) Dependency score: 1 (stated renewal condition) Expansion component: $0 × 1 = $0 Total weight: $60K

Account B: $200K ARR, renewing in 14 months, no churn signal, mentioned reporting in a QBR once but didn't flag it as urgent.

ARR at risk: $200K × 0.5 (beyond 12 months) × 0.1 (no expressed churn signal) = $10K Expansion headroom: $50K (20 untapped seats at $2,500/seat average) Dependency score: 0 (no stated dependency on this feature for expansion) Expansion component: $50K × 0 = $0 Total weight: $10K

Account C: $15K ARR, just renewed (renewal in 11 months), no churn signal, but the CSM documented that they want to add 30 seats for a new regional team and the multi-region filter is a hard requirement for that team.

ARR at risk: $15K × 0.7 (180-365 days) × 0.1 (no churn signal) = $1,050 Expansion headroom: 30 seats × $2,500 = $75K potential ARR Dependency score: 1 (stated expansion blocker) Expansion component: $75K × 1 = $75K Total weight: ~$76K

Summary table:

| Account | ARR | Votes submitted | ARR weight |

|---|---|---|---|

| Account A | $40K | 1 (ticket submitted) | $60K |

| Account B | $200K | 1 (verbal, QBR note) | $10K |

| Account C | $15K | 0 (verbal, CSM note) | $76K |

| Total | $255K | 2-3 signals | $146K weighted ARR |

Vote-count result: this feature has 2-3 votes. It's a minor request.

ARR-weighted result: this feature represents $146K in weighted ARR exposure ($60K in near-term retention risk and $76K in blocked expansion). It belongs in the next quarterly planning session.

The difference in prioritization outcome is significant. Account C, the $15K account with $75K expansion potential, contributes the most weight, despite never submitting a formal request. That signal only exists in the model because the CSM documented it as an expansion dependency in the CRM. This is why capturing feedback systematically (with the dependency field included) is a prerequisite for the ARR weight model to work. Without structured capture, Account C is invisible.

What to Do With Non-Customers and Low-ARR Accounts

Prospects asking for features pre-sale. These go to Sales, not the VoC pipeline. Prospect requests are valuable for win/loss analysis and for Sales to surface in deal debriefs, but they shouldn't enter the ARR-weighted model. Prospects don't have ARR; their signals can't be weighted the same way. Mix prospect and customer signal in the same model and you distort both.

Small accounts with high churn risk. Include them, but apply a weight cap. An SMB account with $8K ARR and an explicit churn statement should be weighted. The explicit churn signal is real. But the cap prevents a large volume of low-ARR signals from distorting the model. A reasonable cap: any single account contributes no more than its own ARR to the weight, even with a maximum churn signal and proximity multiplier.

Accounts outside ICP. Track them in the capture system but exclude them from the weighted model. Building for non-ICP customers pulls the roadmap toward segments you're not trying to serve. CS Ops flags non-ICP accounts at categorization time; they appear in a separate report but don't affect the weighted scores. Once you've drawn those boundaries, the practical question is how to run the model without a data engineering team.

Practical Setup: Running This Without a Data Team

Most mid-market teams can run the ARR-weighted model in a spreadsheet. Here's what you need:

Data sources: CRM (account ARR, renewal date, expansion headroom if tracked), CS platform (CSM-assigned churn risk flags, capture notes), and the CS Ops capture log (signal type, verbatim, account context).

Spreadsheet structure: one row per account per feedback theme. Columns: account name, ARR, renewal date, renewal proximity factor (calculated from date), churn signal strength (CSM dropdown: explicit / inferred / none), expansion headroom, dependency score, ARR at risk (formula), expansion component (formula), total weight (formula).

The formulas are straightforward. CS Ops can build the model in a few hours once the data sources are identified. The ongoing maintenance is a weekly update of the churn signal strength field (as CSMs update account health assessments) and a monthly addition of new captures.

When to stay manual vs. when to automate: stay manual until CS Ops is spending more than three hours per week on data pulls and formula updates. At that point, a CRM-to-spreadsheet sync or a lightweight integration with Productboard reduces ongoing overhead. The model logic doesn't change with automation. The spreadsheet structure becomes the data model for the integration.

How to present ARR-weighted data to a skeptical PM: lead with the comparison. Don't open with "here's our methodology." Open with: "Our vote count says three accounts requested this. Here's what happens when we attach revenue context." The comparison between the raw count and the weighted score makes the case more clearly than explaining the formula. PMs respond to the "three votes = $146K weighted ARR" framing because it translates CS data into a language they already use for roadmap justification. The aggregate number obscures which specific accounts are at risk, and it's the per-account view that drives the real decisions.

Integrating ARR Weight Into the Prioritization Ritual

The weighted score feeds directly into the joint prioritization session, the quarterly meeting between the PM lead, VP CS, and CS Ops that produces the prioritized feedback shortlist.

CS Ops presents a ranked list of feedback themes by weighted ARR, with the top ten items annotated with verbatims and account lists. PMs see both the weight and the accounts behind it. The joint session applies the other two dimensions (customer breadth and strategic alignment) to arrive at the composite score. Prioritizing customer feedback covers the three-dimension model in detail. The quarterly customer feedback review is the structured session where this ranked list gets turned into a prioritized shortlist with named PM owners.

The ARR weight is the CS contribution to the joint scoring. PMs can challenge the weight inputs ("is that expansion headroom realistic?") but they should do so with data, not instinct. When CS brings structured, documented ARR weight to a planning session, the conversation shifts from "CS thinks we should build X" to "here's the revenue exposure if we don't."

Limits of the Model: What ARR Weight Doesn't Capture

The model is useful. It's not complete. Three situations require override:

Strategic lighthouse accounts. A referenceable logo, a well-known brand whose public advocacy drives pipeline, carries value beyond its ARR. A $150K enterprise account that speaks at your conference, contributes to case studies, and drives five referrals per year is worth more than its ARR weight suggests. CS and Product leadership should maintain a short list of lighthouse accounts whose requests receive elevated consideration regardless of weight.

Compliance or regulatory requirements. A customer request driven by GDPR, HIPAA, SOC 2, or industry-specific regulation isn't a preference signal. It's a table-stakes threshold. These don't enter the weighted model; they escalate directly to the CPO as compliance requirements.

Product vision constraints. A high-weight request that contradicts the product's direction, that would require architectural changes the team isn't willing to make or pull the product toward a market segment being deliberately exited, needs to be declined with a clear explanation. The ARR weight tells you the cost of declining; it doesn't override strategic product judgment. Document the override with the reason.

Rework Analysis: The ARR-Weighted Feedback Formula consistently surfaces a counterintuitive result in the worked example: the smallest-ARR account (Account C at $15K) produces the largest weight ($76K) because the CSM documented an expansion dependency. This is the model working correctly. It rewards careful capture discipline. Teams that implement the formula without improving their capture quality first will find the expansion component is always zero, because dependency notes don't exist in the CRM. The formula is only as good as the structured capture layer feeding it. Rework's CS tooling is designed to make expansion dependency documentation part of the standard account record, so the formula runs on real data from day one.

Connecting ARR Weight to CS Team Incentives

The model creates a feedback loop for CSMs: better capture data improves the weight accuracy, which improves the prioritization outcome, which builds CSM confidence that their input matters.

Make the connection visible. When an ARR-weighted score contributed to a roadmap decision, even a deferral decision, CS Ops tells the CSMs whose captures were part of the weight. "The regional reporting feature made it into Q3 planning. Your capture notes for Account C, where you documented the expansion dependency, were part of why it cleared the weight threshold." That attribution is worth more than any capture training program. Pattern recognition across CSMs is the natural next layer: once individual captures are flowing reliably, CS Ops can start identifying themes that no single CSM can see from their own account portfolio.

CSMs who see their documentation improve product decisions become significantly more disciplined about the five capture fields. The capture quality improvement improves the model's accuracy. And the model's accuracy improvement produces better prioritization decisions that protect NRR. The loop closes.

Frequently Asked Questions

What if the CRM doesn't track expansion headroom?

Start without it. Run the model using only ARR at risk (ARR × renewal proximity × churn signal strength). That's still a significant improvement over vote count. Add expansion headroom as a data field once CS Ops has established the habit of capturing dependency notes at the account level. The minimum viable model is better than waiting for a complete data set.

How do you handle a feedback theme where the accounts are widely distributed across tiers?

Weight each account individually and sum the weights. A theme with eight mid-market accounts at $50K each and two enterprise accounts at $250K each produces a weighted total that reflects both the breadth of the mid-market signal and the concentration of the enterprise signal. The joint scoring session then applies the breadth dimension (how many accounts, weighted by tier) separately from the revenue weight.

Should the model be visible to customers?

No. The weighting methodology is an internal prioritization tool, not a customer-facing commitment framework. Customers should not know that their ARR weight influences prioritization. That creates a perverse incentive for gaming, where large accounts threaten churn to boost their weight. The customer-facing communication stays at the level of: "We reviewed this request and here's the status." The model informs the decision; it doesn't get cited to the customer.

What is the ARR-Weighted Feedback Formula?

The ARR-Weighted Feedback Formula calculates a revenue-dollar weight for each customer feedback item, replacing raw vote or ticket counts with a score that reflects actual retention risk and expansion potential. The formula is: Weight = (ARR × renewal proximity factor × churn signal strength) + (expansion headroom × dependency score). Each variable is calculated from data already in the CRM, making the model runnable in a spreadsheet without a dedicated data team. Companies that switch from vote count to ARR-weighted prioritization see an average 12-point NRR improvement over 18 months, per ChurnZero benchmarking research.

How do churn signal strength values work in the formula?

Churn signal strength is a CSM-assigned multiplier with three values: 1.0 for an explicit churn statement ("if you don't build X, we'll have to look elsewhere"), 0.5 for CSM-inferred risk based on documented account health concerns, and 0.1 for no expressed churn signal (general preference). The multiplier is a judgment call. That's intentional. The CSM's contextual read of the account is a real input, not noise. Customer health monitoring data is the most reliable calibration source: if a CSM flags inferred risk, that flag should be backed by health score trends or declining feature engagement.

Learn More

Senior Operations & Growth Strategist

On this page

- Why Vote Count Is the Wrong Model

- The ARR-Weighted Alternative: Core Concept

- Building the ARR Weight: Variable by Variable

- A Worked Example: Three Accounts, One Feature Request

- What to Do With Non-Customers and Low-ARR Accounts

- Practical Setup: Running This Without a Data Team

- Integrating ARR Weight Into the Prioritization Ritual

- Limits of the Model: What ARR Weight Doesn't Capture

- Connecting ARR Weight to CS Team Incentives

- Frequently Asked Questions

- What if the CRM doesn't track expansion headroom?

- How do you handle a feedback theme where the accounts are widely distributed across tiers?

- Should the model be visible to customers?

- What is the ARR-Weighted Feedback Formula?

- How do churn signal strength values work in the formula?

- Learn More