The "We Built It, Nobody Uses It" Problem: How CS Surfaces Feature Non-Adoption Back to Product

Engineering ships the feature. Product marks the milestone closed. The announcement goes out. And then: nothing. Not complaints, not praise. Just silence, and a CSM who knows that three of their accounts are completely ignoring the new workflow and has no idea whether that's expected, alarming, or something they should be escalating.

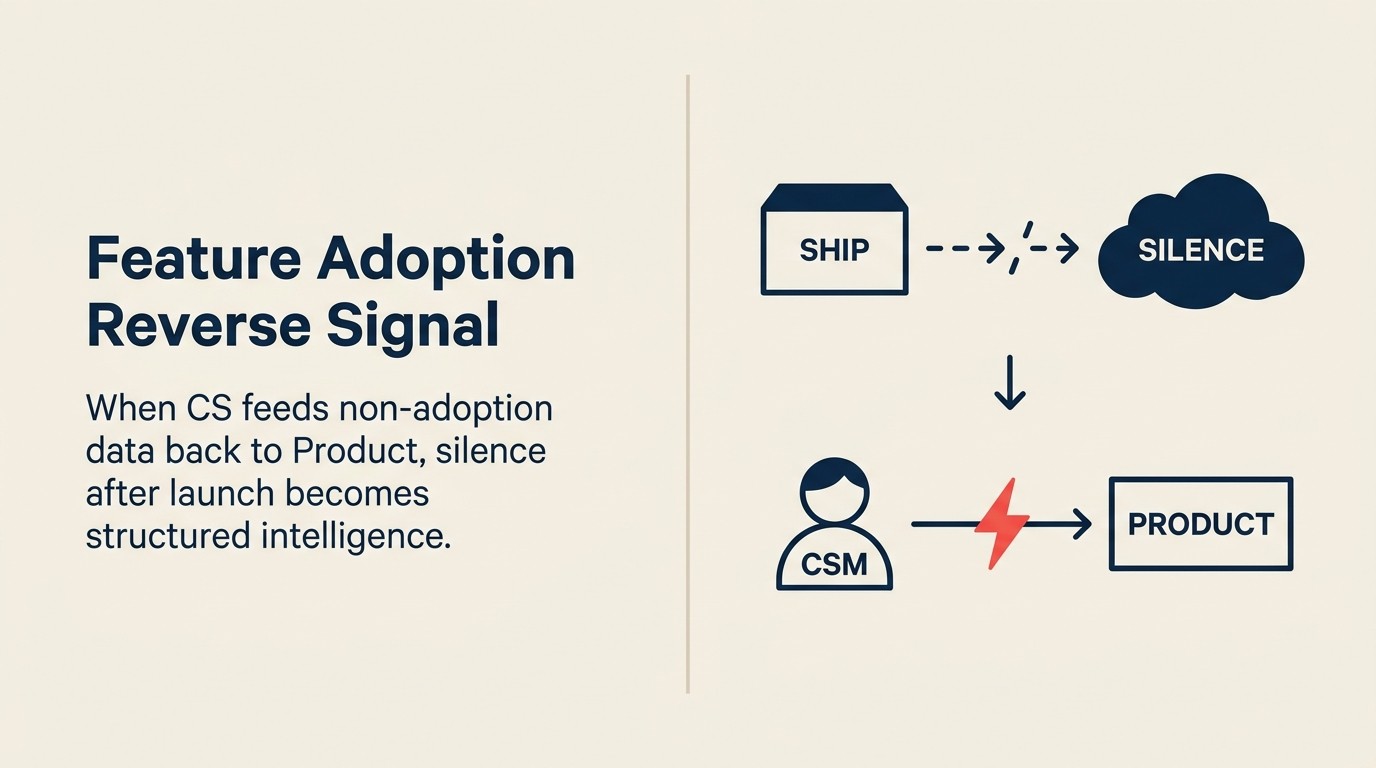

This is the seam problem. The gap between what Product ships and what customers actually adopt. It's not a product failure and it's not a CS failure. It's a signal failure: a breakdown in the reverse information flow that should tell Product what's landing and what isn't. Product interprets silence as acceptance. CS sees something different. And without a structured system to surface what CS sees, the same non-adoption problem repeats across the next release cycle, and the one after that.

See the CS & Product alignment glossary for definitions of terms like VoC, health score, and ICP used throughout this article.

The fix isn't complicated, but it requires both sides to build something they typically don't: a formal mechanism for CS to report what customers aren't using, and a formal mechanism for Product to define what adoption was supposed to look like in the first place.

The Adoption Signal-Failure Pattern is the recurring failure sequence this article defines: features ship, silence follows, Product reads silence as acceptance, and CS absorbs the adoption cost quietly through workarounds and avoided conversations. The reverse signal framework that breaks this pattern has four components: (1) Product defines expected adoption per segment at launch, not after; (2) CS runs 30/60/90 day adoption checkpoints during regular customer reviews; (3) non-adoption gets tagged in CS notes in structured format, not free text; (4) confirmed patterns escalate from CSM to CS Ops to Head of Product through a defined path. The pattern's central insight is that non-adoption is a seam problem. It belongs to both sides, and neither side can fix it alone.

Why Features Fail After Launch

HBR research on product launches identifies customer education and discoverability as the two most common reasons new capabilities fail to gain traction, patterns that appear repeatedly in post-launch CS reviews. Not all non-adoption is the same. Before CS can report it meaningfully and before Product can act on it, both sides need a shared vocabulary for what's actually happening. There are three distinct failure modes, and conflating them produces the wrong interventions.

Discoverability gap. The feature exists, it works, and it's genuinely useful for this customer's workflow, but they never found it. In-app placement was unclear. The release announcement didn't reach the right person. The CSM mentioned it once in a walkthrough that the champion skipped. Discoverability gaps are usually fixable without touching the product itself: a targeted CSM outreach campaign, a contextual tooltip update, or a placement change in the UI.

Relevance mismatch. The feature was built for a use case this customer doesn't have. A workflow automation feature built for teams managing high-volume pipeline doesn't land with accounts that have small, high-touch sales teams. This isn't a positioning failure. It's a segmentation problem that should have been caught in the roadmap conversation. When CS sees widespread non-adoption among a specific customer profile, it's often because the feature was built for a different segment than the one CS manages. This is closely related to identifying adoption barriers at the account level. The segmentation signal and the account-level diagnostic are two sides of the same coin.

Activation friction. The customer found the feature, understands the value in principle, but can't get it to work in their environment without significant effort. Integration requirements they don't have the internal resources to configure. A workflow that requires data fields they haven't populated. A dependency on another feature they haven't activated yet. Activation friction shows up in support tickets ("I tried the new thing and it didn't work") and in CSM workarounds (teaching customers to do manually what the feature was supposed to automate).

The critical distinction: non-adoption doesn't always mean the feature is wrong. A discoverability gap is a communication fix. A relevance mismatch is a segmentation signal. Activation friction is a UX and implementation problem. Product needs to know which is which, and CS is the only team with the account-level visibility to classify it. The psychology behind why users resist new features (even genuinely useful ones) is explored in depth in HBR's research on new-product adoption.

Key Facts: The Cost of Feature Non-Adoption

- On average, only 28% of new B2B SaaS features achieve meaningful adoption within 90 days of launch, per Pendo's 2024 State of Product Leadership report.

- 80% of features in a typical SaaS product are rarely or never used, according to Standish Group research cited in the 2023 Chaos Report.

- CS teams that run structured post-launch adoption check-ins report identifying adoption gaps an average of 47 days earlier than teams relying on passive usage data alone (Gainsight, 2024).

What Non-Adoption Looks Like from the CS Side

CSMs see non-adoption before it shows up in product analytics. The signals are behavioral, not metric-based, which is exactly why they're easy to miss in a dashboard and easy to catch in a QBR. Building a product usage and customer health dashboard that both teams track is one practical way to bridge the gap.

CSMs skip the feature in every walkthrough. The CSM is walking through the product with a customer and goes around the new feature entirely, not because they forgot, but because they've learned not to bring it up. It generates questions they don't know how to answer, or it leads to a 15-minute detour they don't have time for. This is CSM avoidance behavior, and it's one of the clearest signals that a feature isn't ready to be positioned confidently.

Support tickets describe confusion, not breakage. When customers submit tickets about a feature saying "I'm not sure how this is supposed to work" rather than "this isn't working," that's an adoption signal, not a bug report. The feature isn't broken. It's not landing. These tickets go to the support queue and get resolved without anyone flagging them to Product as an adoption signal.

CSMs quietly work around the feature instead of teaching it. Instead of walking customers through the new feature, the CSM teaches the old way of doing the same thing: the manual process the feature was supposed to replace. This is rational from the CSM's perspective: they're protecting the customer relationship by not introducing a frustrating experience. But it means the feature's non-adoption never surfaces as a problem because the CSM is absorbing the cost.

Health dashboard shows low usage for accounts that should be using it. This one is metric-visible, but only if someone is looking with the right context. An account whose use case matches a workflow automation feature perfectly, but that isn't using it, looks the same on the dashboard as an account that isn't using it because the feature doesn't apply to them. The two mean very different things. CS has the context to distinguish them. Product analytics alone often can't.

The Reporting Gap: Why CS Signal Rarely Reaches Product

The signal exists. CSMs see it constantly. And it almost never reaches Product in a usable form. There are four structural reasons why.

CSMs don't know if low usage is normal or alarming. If Product never communicated what adoption was supposed to look like (what percentage of accounts in a given segment were expected to activate the feature by 60 days), then CSMs have no baseline. Low usage might be expected. It might be catastrophic. Without a baseline, there's nothing to compare against and nothing to escalate.

There's no formal channel for "this feature isn't landing." Bug reports go to support. Feature requests go to the CS-to-Product pipeline (see the VoC pipeline). But there's typically no defined path for "this existing feature has a soft adoption problem across multiple accounts." That falls into the void between support and roadmap conversations.

Product interprets silence as acceptance. When a feature ships and the support queue stays quiet, Product's default assumption is that it's working. The absence of complaints reads as success. But CSMs know that the complaints are being absorbed before they become tickets: by CSMs who work around the problem instead of escalating it, and by customers who adapt rather than protest.

The feedback loop closes on bugs, not adoption drift. Post-launch monitoring is typically focused on error rates, performance metrics, and bug reports. These are the signals that are easiest to instrument. Adoption drift (the slow accumulation of accounts that tried the feature and quietly stopped) requires different instrumentation and a different reporting relationship. Most CS-Product pairs haven't built it.

"80% of features in a typical SaaS product are rarely or never used. Yet Product teams continue to interpret post-launch silence as acceptance rather than as a signal that the reverse information flow has broken down." (Standish Group, 2023)

Building the Reverse Signal Flow

The fix requires action on both sides. Product has to define what adoption should look like before the feature ships. CS has to build the reporting cadence that surfaces what actually happened. Neither works without the other.

Product's responsibility: define expected adoption at launch. Before a feature goes GA, Product should define, in writing, what adoption looks like for each target segment. Not an aspirational goal. A minimum threshold. "We expect 40% of mid-market accounts with active pipeline workflows to activate this feature within 60 days." This baseline is what makes CS reporting actionable. Without it, a CSM saying "three of my accounts aren't using it" has no context. With it, the same report becomes: "Three of my four accounts that match the activation profile aren't using it, which puts my target cohort at 25% adoption, below the 40% baseline threshold."
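The comparison against a written baseline can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `AdoptionBaseline` record, its field names, and the `adoption_gap` helper are hypothetical, and the cohort numbers are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdoptionBaseline:
    """Minimum adoption Product commits to in writing before GA (hypothetical shape)."""
    feature: str
    segment: str
    min_rate: float    # 0.40 means 40% of target accounts
    window_days: int   # days after launch by which min_rate should be reached


def adoption_gap(baseline: AdoptionBaseline, target_accounts: int, activated: int) -> float:
    """Return actual adoption rate minus the baseline; negative means below threshold."""
    if target_accounts <= 0:
        raise ValueError("target_accounts must be positive")
    return activated / target_accounts - baseline.min_rate


# Illustrative numbers only: a CSM with five target-profile accounts, one activated.
baseline = AdoptionBaseline("workflow automation", "mid-market", min_rate=0.40, window_days=60)
gap = adoption_gap(baseline, target_accounts=5, activated=1)
print(f"{gap:+.0%} vs. baseline")  # prints "-20% vs. baseline"
```

A negative gap is what turns "three of my accounts aren't using it" into a statement Product can act on.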

CS's responsibility: the 30/60/90 adoption checkpoint cadence. After every significant feature launch, CSMs run a structured check during their regular account reviews. Not a separate meeting. A standing agenda item in EBRs and QBRs.

The checkpoint has three stages:

| Checkpoint | What CS checks | What CS reports |
|---|---|---|
| 30 days | Is the customer aware of the feature? Has the CSM introduced it? | Awareness rate by segment; activation blockers identified |
| 60 days | Is the customer using it? If not, which failure mode? | Adoption rate vs. expected baseline; failure mode classification (discoverability / relevance / activation) |
| 90 days | Is the customer getting value from it? Are they still relying on workarounds? | Adoption confirmation or escalation trigger if adoption rate is still below threshold |
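
One way to make the cadence a standing agenda item rather than something a CSM has to remember is to derive the checkpoint from the launch date when the review is prepared. The sketch below assumes a hypothetical `checkpoint_for` helper and treats the most recently passed 30/60/90-day mark as the one to cover. The question text is abbreviated from the table.

```python
from datetime import date
from typing import Optional

# Checkpoint day -> the question CS brings to the account review (abbreviated).
CHECKPOINTS = {
    30: "Is the customer aware of the feature?",
    60: "Is the customer using it? If not, which failure mode?",
    90: "Is the customer getting value from it?",
}


def checkpoint_for(launch: date, review: date) -> Optional[int]:
    """Return the latest checkpoint (30, 60 or 90) already reached at review time."""
    days_since_launch = (review - launch).days
    reached = [day for day in sorted(CHECKPOINTS) if days_since_launch >= day]
    return reached[-1] if reached else None


# Illustrative dates: a review held 64 days after launch falls under the 60-day check.
stage = checkpoint_for(launch=date(2024, 1, 1), review=date(2024, 3, 5))
if stage is not None:
    print(f"{stage}-day checkpoint: {CHECKPOINTS[stage]}")
```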

Non-adoption tagging in CS notes: structured, not freeform. CSM notes that say "customer doesn't use the X feature yet" are not reportable. Notes that say "Feature: workflow automation / Account: Meridian Corp / Failure mode: activation friction (missing CRM integration) / 60-day checkpoint: not adopted" are. The difference is that the second version can be aggregated. When CS Ops pulls a monthly report of non-adoption tags across all accounts, patterns become visible: if 12 accounts have "activation friction" tagged against the same feature, that's a Product conversation. If 8 accounts have "relevance mismatch," that's a segmentation conversation.
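
To show why the structured version aggregates and the freeform version does not, here is a minimal sketch that parses notes written in the slash-separated format above and counts failure modes per feature. The `parse_tag` and `failure_mode` helpers are hypothetical, the parsing rules are an assumption about how such notes might be stored, and every account other than the example from the text is invented.

```python
from collections import Counter


def parse_tag(note: str) -> dict[str, str]:
    """Split a 'Key: value / Key: value' note into a dict with lowercased keys."""
    fields = {}
    for part in note.split(" / "):
        key, sep, value = part.partition(": ")
        if not sep:
            raise ValueError(f"not a 'key: value' field: {part!r}")
        fields[key.strip().lower()] = value.strip()
    return fields


def failure_mode(fields: dict[str, str]) -> str:
    """Drop the parenthetical detail so identical modes group together."""
    return fields["failure mode"].split(" (")[0]


notes = [
    "Feature: workflow automation / Account: Meridian Corp / "
    "Failure mode: activation friction (missing CRM integration) / "
    "60-day checkpoint: not adopted",
    # The two accounts below are made up for illustration.
    "Feature: workflow automation / Account: Example Co A / "
    "Failure mode: activation friction (unpopulated data fields) / "
    "60-day checkpoint: not adopted",
    "Feature: workflow automation / Account: Example Co B / "
    "Failure mode: relevance mismatch / 60-day checkpoint: not adopted",
]

parsed = [parse_tag(note) for note in notes]
counts = Counter((fields["feature"], failure_mode(fields)) for fields in parsed)
for (feature, mode), n in counts.most_common():
    print(f"{feature}: {mode} x{n}")
```

A note such as "customer doesn't use the X feature yet" raises a `ValueError` here, which is the point: it cannot be counted.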

The escalation path: CSM to CS Ops to Head of Product. Single-account non-adoption is a CSM-level conversation (reach out, diagnose, address). Multi-account non-adoption with a consistent failure mode is a CS Ops-level pattern (aggregate, analyze, prepare). Confirmed pattern with evidence across segments is a Head of Product escalation (present data, request response, define action). The escalation criteria should be defined in advance: "If more than 20% of target-segment accounts show the same failure mode at 60 days, CS Ops escalates to Product." The pattern recognition framework for CS teams describes how to aggregate these signals before they reach escalation level.
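
The escalation criteria can be written down as a rule that CS Ops runs against the monthly tag report. The sketch below is a simplification under stated assumptions: the `escalation_level` function and its level names are hypothetical, "multi-account" is taken to mean two or more accounts, and the requirement for evidence across segments is not modeled. The 20% threshold comes from the example criterion above.

```python
from collections import Counter

ESCALATION_SHARE = 0.20  # "more than 20% of target-segment accounts" at 60 days


def escalation_level(modes_by_account: dict[str, str], target_accounts: int) -> str:
    """Map tagged failure modes for one feature and segment to an escalation level."""
    if target_accounts <= 0:
        raise ValueError("target_accounts must be positive")
    if not modes_by_account:
        return "none"
    # The most common failure mode decides whether there is a consistent pattern.
    _, count = Counter(modes_by_account.values()).most_common(1)[0]
    if count / target_accounts > ESCALATION_SHARE:
        return "head_of_product"  # confirmed pattern: present data, request response
    if count >= 2:
        return "cs_ops"           # multi-account pattern: aggregate and analyze
    return "csm"                  # single account: reach out, diagnose, address


# Illustrative data: 12 of 50 target accounts tagged with the same failure mode.
tagged = {f"account-{i}": "activation friction" for i in range(12)}
print(escalation_level(tagged, target_accounts=50))  # prints "head_of_product"
```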

The Post-Mortem That Actually Helps

Most feature post-mortems happen at launch and focus on what went well. The post-mortem for non-adoption happens at 90 days and focuses on what didn't land. These are different conversations, and the second one almost never happens, which is why the same adoption problems repeat across releases.

The feature non-adoption review is a quarterly conversation between CS and Product that covers every feature from the prior quarter with adoption rates below expected baseline. The format isn't complicated: present the adoption data, classify the failure modes, and agree on what happens next.

Three questions Product and CS answer together:

- Was this a positioning miss? Did CS and marketing present the feature in a way that matched the use case? If not, repositioning to the correct segment may be all that's needed.

- Was this a UX miss? Does the activation friction point to a specific point in the flow where customers drop off? If so, which Engineering sprint should address it?

- Was this a wrong-ICP miss? Was the feature built for a customer profile that doesn't match the accounts CS manages? If so, what does that mean for the roadmap conversation that drove this feature in the first place?

The outputs of the review aren't just action items. They're signals that should affect how the next feature is launched. An activation fix. A repositioning note that goes into CSM training. A deprecation recommendation for a feature that turns out to have no real use case in the current customer base.

Making This Systematic

Running this as a one-time experiment after a bad launch doesn't work. The reverse signal flow only produces consistent value when it's embedded in the standard operating rhythm, not added on top of it.

Non-adoption as a standard field in post-launch reviews. The question "what does expected adoption look like, and how will CS flag when it's not happening?" should appear in every feature launch checklist, not as an afterthought but as a launch-blocking item. If Product can't define an adoption baseline, the feature isn't ready to go GA. Gartner's SaaS adoption framework recommends embedding adoption milestones and owner accountability directly into the launch checklist. That's the same discipline that prevents "we shipped, they didn't use it" cycles from repeating.

CSM adoption reporting embedded in CS platform health scoring. Feature activation status for target features should be a health score component, not a separate spreadsheet. When a CSM checks a customer's health score and sees "Workflow Automation: Not Activated (60 days post-launch)" surfaced automatically, they don't have to remember to check. The signal finds them. A structured feature adoption strategy at the account level turns that signal into a repeatable activation play.

Shared adoption metrics CS and Product both track. The classic problem is that Product tracks feature usage data and CS tracks customer health data, and neither team can see the other's numbers. A shared dashboard (even a simple one) that shows adoption rates by segment, broken down by failure mode classification from CS notes, closes this gap. Both teams look at the same numbers. The conversation shifts from "we have different data" to "we're reading the same data differently."
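
A shared view of this kind does not need a BI tool to prototype. The following sketch joins the two data sources in plain Python: activation status (which Product would typically own) and failure mode tags (which come from CS notes). The row shape, the `adoption_dashboard` function, and all sample rows are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical per-account rows for one feature; failure_mode is set by CS tagging.
rows = [
    {"segment": "mid-market", "activated": True, "failure_mode": None},
    {"segment": "mid-market", "activated": False, "failure_mode": "activation friction"},
    {"segment": "mid-market", "activated": False, "failure_mode": "activation friction"},
    {"segment": "mid-market", "activated": False, "failure_mode": "discoverability gap"},
    {"segment": "enterprise", "activated": True, "failure_mode": None},
    {"segment": "enterprise", "activated": True, "failure_mode": None},
]


def adoption_dashboard(account_rows):
    """Return adoption rate and failure mode counts for each segment."""
    totals = defaultdict(int)
    activated = defaultdict(int)
    modes = defaultdict(Counter)
    for row in account_rows:
        segment = row["segment"]
        totals[segment] += 1
        if row["activated"]:
            activated[segment] += 1
        elif row["failure_mode"]:
            modes[segment][row["failure_mode"]] += 1
    return {
        segment: {
            "adoption_rate": activated[segment] / totals[segment],
            "failure_modes": dict(modes[segment]),
        }
        for segment in totals
    }


for segment, summary in adoption_dashboard(rows).items():
    print(segment, f"{summary['adoption_rate']:.0%}", summary["failure_modes"])
```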

Mid-Market Reality Check

Enterprises have UX research teams, product analytics specialists, and dedicated customer success programs with structured adoption tracking built into the platform contract. Startups often don't have enough customers for patterns to be statistically meaningful. Mid-market sits in the hard middle: enough customers for patterns to exist, not enough headcount to have dedicated teams for every part of this framework.

The minimum viable version for a 50-CSM team managing 500 accounts: one adoption checkpoint question added to the standard EBR template, one non-adoption tag added to the CS platform note taxonomy, and a monthly 30-minute CS Ops to Product Ops sync where flagged patterns get reviewed. That's it. The quarterly post-mortem can be a standing agenda item in the monthly CS-Product sync once there's enough data to warrant it.

The point isn't to build a research program. It's to close the gap between what Product ships and what CS sees, so the next feature launch starts with real adoption data from the last one, not silence that everyone interprets differently.

"Products with documented expected adoption baselines per segment at launch see 34% higher feature adoption rates at 90 days compared to products without baselines. The baseline isn't bureaucracy, it's the measurement instrument that makes CS reporting meaningful." (ProductBoard, 2024)

Rework Analysis: CS teams using Rework's unified CRM and task management platform can tag non-adoption signals directly in account records and escalate patterns to product ops without switching tools. When adoption checkpoints live alongside health scores, renewal dates, and CSM notes in one place, the gap between signal and escalation shrinks from weeks to days. The discipline of structured tagging is the same whether your CS team manages five accounts or five hundred. The platform just removes the spreadsheet bottleneck.

Self-Diagnostic: Three Questions After the Next Launch

After your next feature goes GA, wait 60 days, then ask:

Can CS Ops run a report of which accounts in the target segment haven't activated this feature? If the answer is "not without manually pulling it from several places," the tagging and tracking infrastructure doesn't exist yet.

Does Product have a written baseline for what adoption was supposed to look like at 60 days? If that baseline wasn't defined before launch, there's nothing to compare the actual adoption data against.

Has any CSM escalated a non-adoption pattern to CS Ops in the past 30 days? If the answer is no (and you have more than 50 accounts in the target segment), the escalation path either doesn't exist or isn't being used. Find out which.

The answers tell you where the reverse signal flow is broken and what to fix first.

Frequently Asked Questions

What is feature non-adoption in B2B SaaS?

Feature non-adoption occurs when customers don't use a capability that was built for their use case. On average, 80% of features in a typical SaaS product are rarely or never used (Standish Group, 2023). Non-adoption is not always a product failure. It may reflect a discoverability gap, a relevance mismatch, or activation friction, each of which requires a different intervention.

Why does CS see feature non-adoption before Product analytics do?

CS signals are behavioral and account-specific. CSMs observe when customers skip features in walkthroughs, when support tickets describe confusion rather than breakage, and when they themselves build workarounds to avoid introducing a frustrating experience. Product analytics detect aggregate usage trends, but they can't distinguish "not applicable to this account" from "should be using this but isn't" without the account context CS provides.

What is the Adoption Signal-Failure Pattern?

The Adoption Signal-Failure Pattern is the recurring failure sequence in which a feature ships, post-launch silence is interpreted as acceptance, and CS absorbs the adoption cost quietly, through workarounds, avoided walkthroughs, and unescalated friction. The pattern repeats because neither side has built the reverse signal flow that surfaces what CS sees back to Product in a structured, actionable form.

What are the three failure modes of feature non-adoption?

Non-adoption falls into three distinct categories: (1) a discoverability gap, where the feature is useful but customers never found it; (2) a relevance mismatch, where the feature was built for a different segment's use case; and (3) activation friction, where customers found the feature but couldn't make it work in their environment. Each failure mode requires a different fix. Conflating them produces the wrong intervention.

How should CS teams tag and report non-adoption signals?

Non-adoption should be captured in structured CS notes, not freeform text. A reportable note includes: the feature name, the account name, the failure mode classification (discoverability / relevance / activation), and the checkpoint stage (30/60/90 days). This format enables CS Ops to aggregate patterns across accounts. When more than 20% of target-segment accounts show the same failure mode at 60 days, that's an escalation-level signal for Product.

What should Product define before a feature goes GA?

Before launch, Product should document a minimum adoption threshold per segment. For example: "we expect 40% of mid-market accounts with active pipeline workflows to activate this feature within 60 days." Without that baseline, CS has no reference point for what low usage means, and nothing to compare actual adoption data against. Products with documented adoption baselines at launch see 34% higher feature adoption rates at 90 days compared to products without (ProductBoard, 2024).

How often should the feature non-adoption review happen?

The non-adoption review (a structured conversation between CS and Product on every feature with adoption rates below expected baseline) should happen quarterly. It covers three diagnostic questions: was this a positioning miss, a UX miss, or a wrong-ICP miss? The outputs feed directly into how the next feature launch is structured, so the same adoption problems don't repeat across release cycles.

