The Generate vs. Execute Boundary: Why Guardrails Matter

The most common AI incident in business isn't a model failure. It's a misunderstood boundary.

A team approves an AI tool that drafts responses. Somewhere between the demo and the deployment, "draft" becomes "send." Nobody made that decision explicitly. It just happened, because drafting and sending felt like one continuous step. The first morning after launch, the AI has already sent 340 emails to the wrong list, with the wrong names in a merge field nobody tested.

This failure pattern shows up across industries: AI-drafted contracts that got auto-executed, refunds that went out without approval, calendar invites that displaced existing bookings. In every case, the root cause is the same. Someone assumed the AI would stop before acting. Nobody wrote that assumption down.

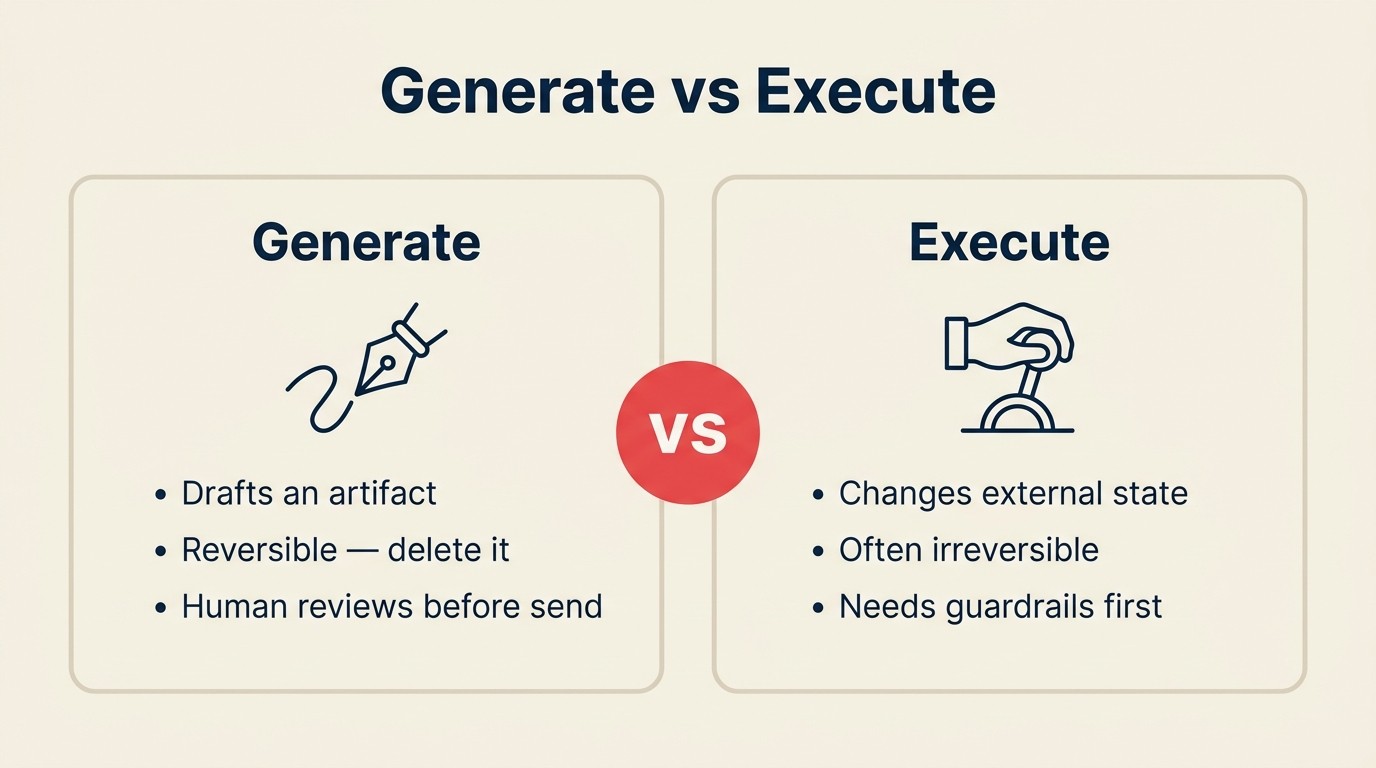

The Generate-Execute boundary is the most important line in AI governance. Generate produces a draft: an artifact that lives inside the AI context, reviewable and reversible, with zero external consequences. Execute commits a change to the world: money moves, messages arrive, records update, code ships. Those are two completely different risk profiles, and treating them as one continuous workflow is where most AI incidents originate.

This article explains how to draw that boundary, govern it, and build the approval patterns that prevent the incident from happening in the first place.

The difference in one sentence

Generate produces an artifact that lives inside the AI context. Execute commits a change to systems outside the AI that other people and processes can see immediately.

That sentence contains the whole argument. But it's worth being concrete.

Four places the boundary lives

The abstract distinction becomes obvious once you see it in specific workflows. Here are four examples that show the same activity before and after the boundary:

| Action | Generate (before the line) | Execute (after the line) |
|---|---|---|
| Email | AI drafts a follow-up with prospect name, company, and relevant context | AI sends the draft to the prospect's inbox |
| Code | AI writes a fix for the bug and creates the pull request locally | AI merges the pull request to the main branch |
| Refund | AI recommends a $340 refund and drafts the approval message | AI issues the refund in Stripe and closes the support ticket |
| Calendar | AI suggests three meeting times based on both parties' availability | AI sends the calendar invite and books the slot |

In every row, the Generate side produces something reviewable: a document, a recommendation, a plan. Nothing outside the AI has changed. A human can read it, edit it, reject it, or improve it. The external cost of an error is zero, because the draft hasn't gone anywhere.

On the Execute side, something real happened. Money left the account. Code is now in production. A message landed in someone's inbox. An hour of someone's day is now committed. Reversing any of these requires effort. Some can't be reversed at all.

Why the boundary matters

The risk argument is direct.

Generate errors embarrass. If the AI drafts a bad email, you read it and don't send it. If it recommends the wrong refund amount, a human catches it. If the code it writes has a bug, your developer finds it in review. Generate errors are cheap. They stay inside the system until a person decides otherwise.

Execute errors cost money, damage trust, and are often irreversible. Wrong bulk send to 10,000 customers. Duplicate refund processed at 2 a.m. Code deployed to production that breaks a core workflow. Calendar invite sent to a client with the wrong agenda. These events happen in the world, not in a draft, and unwinding them takes real resources and sometimes carries legal exposure.

This asymmetry is why the ACE Framework treats Generate and Execute as separate capabilities. They look similar on a slide. "AI drafts and sends emails" sounds like one thing. It's actually two things with completely different risk profiles and governance requirements.

Governance, approval workflows, and human-in-the-loop policies all exist to control what happens at the transition from Generate to Execute. When that transition is explicit and designed, most AI incidents don't happen. When it's implicit and assumed, they do.

The boundary in product design

If you're evaluating AI tools or configuring your own workflows, the Generate-Execute boundary shows up as a design pattern:

1. User initiates a task (or a trigger fires automatically)

2. AI runs: Ingest → Analyze → Generate (artifact produced, no external change)

3. User sees the output

4. User approves (the boundary, the most important moment in the workflow)

5. System executes the action in the external world

Step 4 is the hinge. Missing it, whether by skipping it intentionally in the name of speed or by accident because no one specified it should exist, is how the email in the opening example went to 340 people.

The AI tools that handle this well make the boundary visible. Intercom's AI drafts responses and surfaces them to the agent for approval before sending. GitHub Copilot suggests code completions but doesn't commit them. Calendly's AI proposes times but doesn't book until the recipient confirms. These aren't limitations. They're features. The explicit approval step is what makes the tool trustworthy enough to use at scale.
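The approval flow above can be sketched as a small state machine. This is a minimal illustration, not any vendor's implementation: the `Draft`, `approve`, and `execute` names are hypothetical, and the external action is passed in as a plain callable.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    PENDING = "pending"      # generated, waiting for a human
    APPROVED = "approved"    # a human approved it
    EXECUTED = "executed"    # the external change has happened


@dataclass
class Draft:
    action: str                      # hypothetical action name, e.g. "send_email"
    payload: dict                    # the generated artifact
    status: Status = Status.PENDING
    approved_by: Optional[str] = None


def approve(draft: Draft, reviewer: str) -> None:
    """The approval step: the only way a draft crosses the boundary."""
    if draft.status is not Status.PENDING:
        raise ValueError(f"cannot approve a draft in state {draft.status.value}")
    draft.status = Status.APPROVED
    draft.approved_by = reviewer


def execute(draft: Draft, side_effect: Callable[[dict], None]) -> None:
    """The execute step: refuses to run unless approval was recorded."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("draft has not been approved")
    side_effect(draft.payload)
    draft.status = Status.EXECUTED
```

The design choice worth noting is that `execute` checks the approval itself rather than trusting its caller, so a workflow that forgets the approval step fails loudly instead of sending.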

Five patterns at the boundary

Not every workflow needs the same approach. These five patterns let you calibrate based on risk and volume:

1. Review-gate

Every Execute requires explicit human approval before anything happens in the external world. Best for high-value or irreversible actions: a refund above $1,000, an email to a key account, a personnel decision. Limitation: doesn't scale past a few dozen daily approvals. Use selectively.

2. Threshold

AI executes autonomously up to a defined limit; above it, the action pauses for review. Example: AI auto-resolves refund requests under $50, flags anything higher. The threshold lives in system configuration, not a policy document. Best for medium-volume, mixed-value decisions where most cases are safe but the tail needs oversight.
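A threshold check can be as small as one function. The sketch below assumes a hypothetical `AUTO_REFUND_LIMIT` setting and treats a refund of exactly the limit as needing review, which is a conservative reading of "under $50".

```python
from decimal import Decimal

# Hypothetical setting; in practice it would be loaded from system
# configuration rather than hard-coded here.
AUTO_REFUND_LIMIT = Decimal("50.00")


def route_refund(amount: Decimal, limit: Decimal = AUTO_REFUND_LIMIT) -> str:
    """Return "auto_execute" strictly below the limit, else "needs_review"."""
    if amount <= 0:
        raise ValueError("refund amount must be positive")
    return "auto_execute" if amount < limit else "needs_review"
```

`Decimal` is used instead of `float` so that amounts like 49.99 compare exactly against the limit.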

3. Reversible-only

AI can only execute actions with a system-supported undo path. "Create a task" is reversible. "Send an email" is not. "Update a CRM field" is reversible. "Delete records" is not. Define the list, then let the AI execute within it. Best for high-volume workflows where irreversibility is the primary risk.
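One way to express the reversible-only list is an allowlist that everything else fails against. The action names below are hypothetical stand-ins for the examples in the paragraph above.

```python
# Hypothetical action names; the list itself is the policy.
REVERSIBLE_ACTIONS = frozenset({"create_task", "update_crm_field"})


def guard_reversible(action: str) -> None:
    """Raise unless the action is on the reversible allowlist."""
    if action not in REVERSIBLE_ACTIONS:
        raise PermissionError(
            f"{action!r} has no undo path; route it to human review"
        )
```

Because the check is an allowlist rather than a blocklist, a new action type is denied by default until someone decides it is reversible.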

4. Shadow mode

Execute is disabled entirely. The system logs every action it would have taken but takes none of them. Run shadow mode for two weeks, review the logs, find the edge cases you didn't anticipate, then enable live execution. This is how you find the 2 a.m. duplicate-refund scenario before it costs you money.
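Shadow mode amounts to a switch in front of every side effect. A minimal sketch, assuming a hypothetical `ShadowExecutor` wrapper and an in-memory log (a real deployment would write to durable storage):

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ShadowExecutor:
    shadow: bool = True                      # default to taking no action
    log: list = field(default_factory=list)  # every intended action is recorded

    def run(self, action: str, payload: dict,
            side_effect: Callable[[dict], None]) -> bool:
        """Record the intended action; perform it only when shadow is off."""
        mode = "shadow" if self.shadow else "live"
        self.log.append({"action": action, "payload": payload, "mode": mode})
        if self.shadow:
            return False
        side_effect(payload)
        return True
```

Flipping `shadow` to `False` after the review period is the explicit, recorded decision to enable live execution.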

5. Rate limit

AI can execute up to N actions per time window, then pauses for a human review cycle before continuing. Example: 50 outreach emails per day, autonomously. At email 51, the queue pauses and someone reviews the next batch. Best for high-volume, low-individual-risk workflows where drift over time is the primary concern.
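The rate-limit pattern can be sketched as a per-day counter. `DailyRateLimit` is a hypothetical name, and the date is passed in rather than read from the clock so the behavior is easy to test. This version resets automatically on a new day; a stricter variant would stay paused until a reviewer releases it.

```python
from datetime import date
from typing import Optional


class DailyRateLimit:
    """Allow at most max_per_day actions per calendar day."""

    def __init__(self, max_per_day: int) -> None:
        self.max_per_day = max_per_day
        self._day: Optional[date] = None
        self._count = 0

    def allow(self, today: date) -> bool:
        # A new day starts a fresh window.
        if today != self._day:
            self._day = today
            self._count = 0
        # At the ceiling, the caller should pause the queue for review.
        if self._count >= self.max_per_day:
            return False
        self._count += 1
        return True
```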

These patterns aren't mutually exclusive. A well-designed workflow might use threshold for refunds, reversible-only for data updates, and shadow mode for the first two weeks.

When to collapse Generate and Execute

Some workflows don't need a review gate. Collapsing Generate and Execute, letting the AI act without human review, is appropriate when all three of these are true:

The action is low-stakes. Autocomplete in a document, spell-check, suggested tags on an internal ticket. If the AI gets it wrong, the cost is negligible.

The action is clearly reversible. Undo is fast, built into the interface, and doesn't require contacting anyone. If you can fix it in two seconds, the gate is probably unnecessary overhead.

The scope is well-defined and narrow. Autocomplete inside your own document is different from composing an email that goes to customers. "Write this function" is different from "deploy this function to production."

The pattern to watch for: teams collapse Generate and Execute because the demo looked great and they want the speed. They skip the boundary because it feels like bureaucracy. Six weeks later, they're explaining to a client why the AI sent them someone else's pricing quote.

When to never collapse the boundary

Some categories of action should always have a human approval step, regardless of how confident the AI looks, how good the pilot results were, or how much time the gate costs. These are:

Customer-visible communications. Anything that lands in a customer's inbox, SMS, or app notification with your brand on it. AI can draft. A human approves.

Financial transactions. Refunds, charges, transfers, purchase orders. The default is always review. Volume may eventually justify threshold automation, but earn that with history.

Personnel decisions. Anything affecting hiring, compensation, performance, or termination. AI supports the analysis. A human decides.

Legal or compliance-sensitive actions. Contracts, NDAs, regulatory filings, anything that creates a legal obligation or that regulators might audit.

Deletions of any kind. Deletion is the hardest Execute mistake to reverse. Shadow mode it first, add a review gate, then consider automation only if volume genuinely demands it.
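The two checklists (three conditions for collapsing, five categories that never collapse) combine into one decision. The sketch below is one possible encoding; the category strings and the `ActionProfile` type are hypothetical, and the three boolean judgments still have to come from a person.

```python
from dataclasses import dataclass

# Categories that always keep a human approval step.
ALWAYS_REVIEW = frozenset({
    "customer_communication",
    "financial_transaction",
    "personnel_decision",
    "legal_or_compliance",
    "deletion",
})


@dataclass(frozen=True)
class ActionProfile:
    category: str
    low_stakes: bool
    reversible: bool
    narrow_scope: bool


def may_skip_review(profile: ActionProfile) -> bool:
    """True only if no always-review category applies and all three tests pass."""
    if profile.category in ALWAYS_REVIEW:
        return False
    return profile.low_stakes and profile.reversible and profile.narrow_scope
```

The category check runs first, so a deletion that someone has marked low-stakes and reversible still goes to review.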

Autonomous agents and the boundary

Autonomous agents are the highest-risk deployment pattern in the ACE Framework. They combine all five capabilities in a loop, running toward a goal with multiple Execute actions along the way. Each Execute inside the loop is a potential incident.

The risk compounds. An agent that misclassifies an input (Analyze error) might draft a wrong response (Generate error) and then execute that wrong response across ten downstream systems before the loop completes. By the time a human reviews the log, the damage is multi-step.

Three rules for Execute inside autonomous agent loops: First, write down which Execute actions the agent is authorized to take. "Create tasks. Update CRM stage. Do not send external email. Do not delete records." Second, set a hard ceiling on Execute actions per hour or per run and expand it only as audit history earns it. Third, log the full decision trace for every Execute action — what the agent ingested, analyzed, generated, and executed, with timestamps. That log is the only way to understand what happened when something goes wrong, and something will go wrong.
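The three rules map onto a small guard object that the agent loop must call before every Execute. This is a sketch under assumptions: `AgentGuard` and its field names are hypothetical, the ceiling is per run, and the trace is kept in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentGuard:
    allowed: frozenset            # rule 1: the written-down allowlist
    max_per_run: int              # rule 2: the hard ceiling
    trace: list = field(default_factory=list)  # rule 3: the decision trace
    _used: int = 0

    def authorize(self, action: str, ingested: str,
                  analysis: str, generated: str) -> bool:
        """Decide on one Execute action and log the full trace either way."""
        if action not in self.allowed:
            decision = "denied: not authorized"
        elif self._used >= self.max_per_run:
            decision = "denied: ceiling reached"
        else:
            decision = "allowed"
            self._used += 1
        self.trace.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "ingested": ingested,
            "analysis": analysis,
            "generated": generated,
            "decision": decision,
        })
        return decision == "allowed"
```

Denied attempts are logged as well as allowed ones, since an agent repeatedly asking for an unauthorized action is itself a signal worth reviewing.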

Real incidents at the boundary

These are the failure patterns that actually happen. Not hypothetical. Patterns from real deployments.

AI-drafted email sent without review. A filter bug included 15,000 opted-out contacts in an outreach sequence. The AI drafted and sent overnight. The morning brought 400 unsubscribes, 30 angry replies, and a legal escalation.

AI-approved fraudulent refund. A support team's AI issued refunds automatically for complaints below $200. A bad actor submitted 60 near-identical complaints. The AI processed all 60 before any pattern triggered a human alert. Close to $12,000 left the account.

Autonomous code deploy that broke production. A CI/CD pipeline auto-merged a pull request that passed all automated tests. The change broke a downstream integration the tests didn't cover. Four hours to resolve, 800 customers affected.

AI-scheduled meeting that displaced an existing booking. A scheduling AI rescheduled a client call to fit a new request, and no one notified the original client. They escalated to the account team.

Each incident shares one root cause: someone assumed the AI would stop before acting, and nobody wrote that assumption down.

Building a Generate-Execute policy

A policy doesn't need to be long. It needs to be specific and shared. Here's the template:

What actions are auto-Execute? List them specifically. "Send notifications to internal team channels. Create tasks in the project management system. Update lead stage in CRM when deal is marked closed." If it's not on this list, it's not auto-Execute.

What requires human approval? Default: everything else. Customer-facing communications, financial transactions, and deletions always require approval regardless of size.

Who approves? Name the role, not the person. "The account owner approves customer communications. The finance team lead approves transactions above $500. The engineering manager approves merges to main." One approver per action category.

What's logged? Everything. What the AI saw, what it decided, what it executed, who approved (or that it was auto-approved and why), and the timestamp. Minimum 90-day retention. Audit access for anyone who manages the workflow.

When does the policy get reviewed? Quarterly. Plus an immediate review after any incident, regardless of severity.

Write it down. Put it where your team can find it.
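One way to keep the written policy and the running system in agreement is to store the policy as data the system reads. The sketch below encodes the template's example answers; the key names, action identifiers, and the `route` helper are all hypothetical, and the dollar thresholds from the template are left out for brevity.

```python
POLICY = {
    # Anything not listed here is not auto-Execute.
    "auto_execute": frozenset({
        "notify_internal_channel",
        "create_pm_task",
        "update_crm_lead_stage_on_close",
    }),
    # One approver role (not a person) per action category.
    "approver_by_category": {
        "customer_communication": "account_owner",
        "financial_transaction": "finance_team_lead",
        "merge_to_main": "engineering_manager",
    },
    "log_retention_days": 90,
    "review_cadence": "quarterly",
}


def route(action: str, category: str) -> str:
    """Return "auto_execute" or the role that must approve this action."""
    if action in POLICY["auto_execute"]:
        return "auto_execute"
    try:
        return POLICY["approver_by_category"][category]
    except KeyError:
        # Default is approval; a category with no named approver is a policy gap.
        raise LookupError(f"no approver named for category {category!r}") from None
```

Raising on an unknown category, rather than falling back to auto-execute, keeps "everything else requires approval" as the default.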

The bottom line

The Generate-Execute boundary is the single most important line in AI governance. Draw it consciously, and you'll catch most AI incidents before they happen. Ignore it, and you'll discover it the expensive way.

Generate is powerful. Execute is consequential. The distance between them is exactly one approval step, and that step is worth protecting.

What to read next

- Generate capability: the six sub-capabilities of Generate and the failure modes to design around

- Execute capability: what happens when AI stops producing drafts and starts changing the world

- The ACE Framework: how Generate and Execute fit with Ingest, Analyze, and Predict in the full five-capability map

- Why most AI frameworks fail: why vocabulary matters more than strategy slides when you're making real decisions

- Tagging AI initiatives: how to mark your Execute workflows so your team can track scope and risk across projects

- Reading an AI use case: apply the ACE vocabulary to any vendor pitch, including ones that involve Execute

Senior Operations & Growth Strategist
