Beta Program Template: Recruit, Run, and Graduate Customers the Right Way

Here's the difference between a beta program and unstructured early access: documentation. Not the customer agreements, though those matter. The internal documentation that defines what the program is, who gets in, what you're measuring, and what "done" looks like.

Most teams launch beta programs the same way they launch most internal initiatives, with good intentions and no artifacts. An email goes out to three accounts the account executive (AE) suggested. Someone creates a Slack channel. Feedback arrives as freeform messages. A product manager (PM) checks in occasionally. Three months later, the program either quietly ends or slides into permanent "soft launch" status.

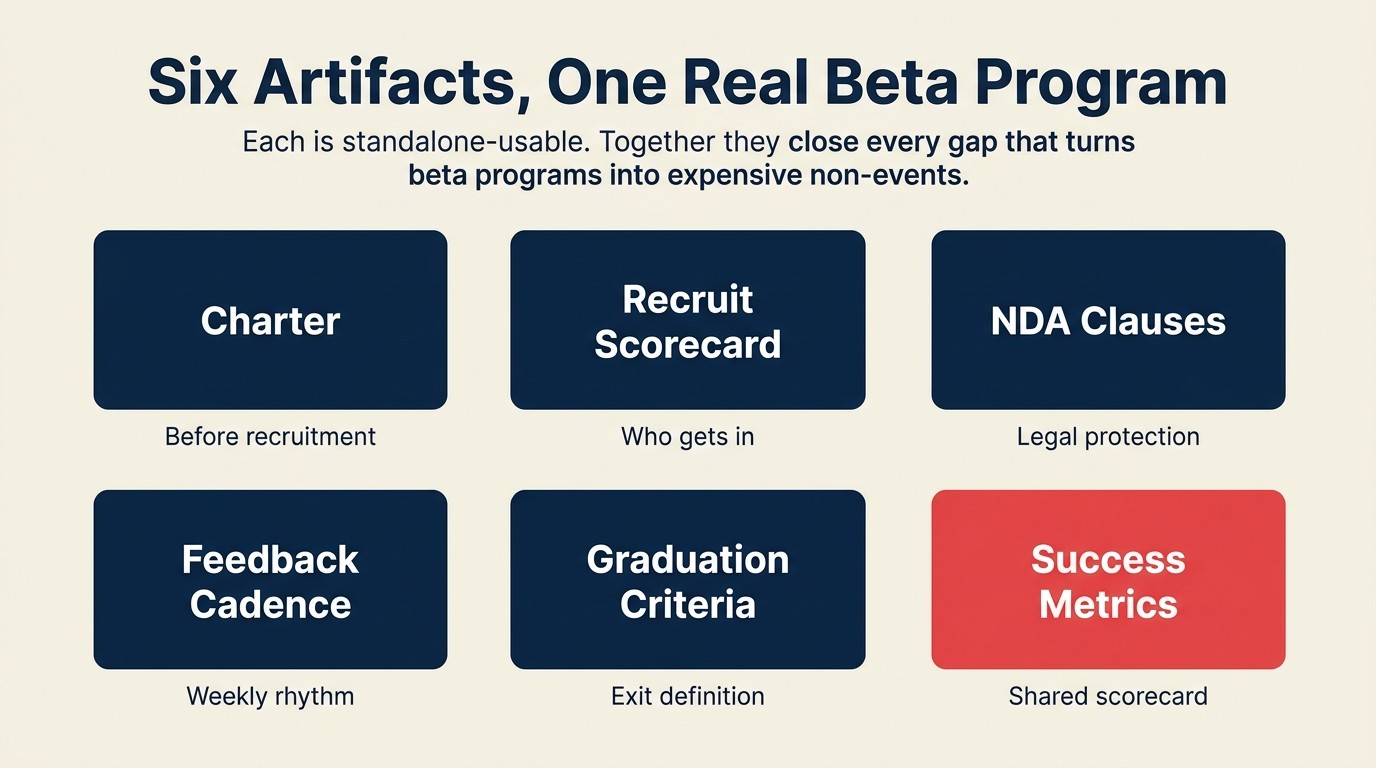

What's missing isn't enthusiasm. It's operational infrastructure: the kind that turns early access into evidence. This template gives you six copy-paste artifacts that together constitute a real beta program. Each one is standalone-usable. Together, they close every gap that turns beta programs into expensive non-events.

Artifact 1: Beta Program Charter

Copy this into Notion, Google Docs, or whatever you run your programs from. Fill in the brackets. Get sign-off from the CS lead and the PM before recruitment begins.

Key Facts: Why Beta Program Structure Matters

- Beta programs with written graduation criteria produce 2-3x higher feature adoption rates post-GA compared to programs without a defined exit gate, per Pragmatic Institute research on product launch effectiveness.

- 67% of software beta programs fail to produce actionable product data, primarily due to unstructured feedback collection. Participants provide impressions rather than reproducible observations, per the Product Management Institute.

- Companies that formalize beta participant selection (vs. open invitation) report 40% higher feedback quality scores and 35% higher beta participant retention through program completion, per Gainsight research on beta program design.

- The average beta program that lacks a charter document experiences scope creep in 78% of cases. Feature requests outside program scope consume 30-40% of PM time without producing backlog-ready items, per ProductBoard.

BETA PROGRAM CHARTER

Program name: [Feature/Product Area] Beta Program

Version: v1.0 | Status: Draft / Active / Closed

Program owner: [Name, Role: CS lead OR PM lead, not both]

Launch date: [MM/DD/YYYY] | Target completion date: [MM/DD/YYYY]

What's being tested: [2-3 sentences describing the specific feature, workflow, or product area in scope. Be specific: "the new reporting dashboard" not "reporting improvements."]

What's explicitly out of scope: [List 2-4 things that are NOT being tested in this program. This is as important as what's in scope. It's what you say no to when a customer asks about it during the program.]

Success criteria (written before launch):

- [Measurable outcome 1, e.g., "8 of 10 beta customers complete initial setup without support assistance"]

- [Measurable outcome 2, e.g., "Feature adoption rate reaches 60% within 30 days of kickoff"]

- [Measurable outcome 3, e.g., "Fewer than 3 P1 bugs reported per 100 usage sessions"]

Target participant count: [N customers]

Stakeholders:

- Product sponsor: [Name]

- CS sponsor: [Name]

- Engineering contact: [Name, for bug triage]

- Legal review completed: Yes / No / In progress

The charter's out-of-scope list and the no-promise clause in the participation agreement (Artifact 3) protect both sides: the company commits to a program, not to shipping every feature request that emerges from it. That distinction matters more than most teams realize. Without it, three weeks in, a customer may be citing a PM's casual comment as a commitment. So what determines who gets to participate in the first place?

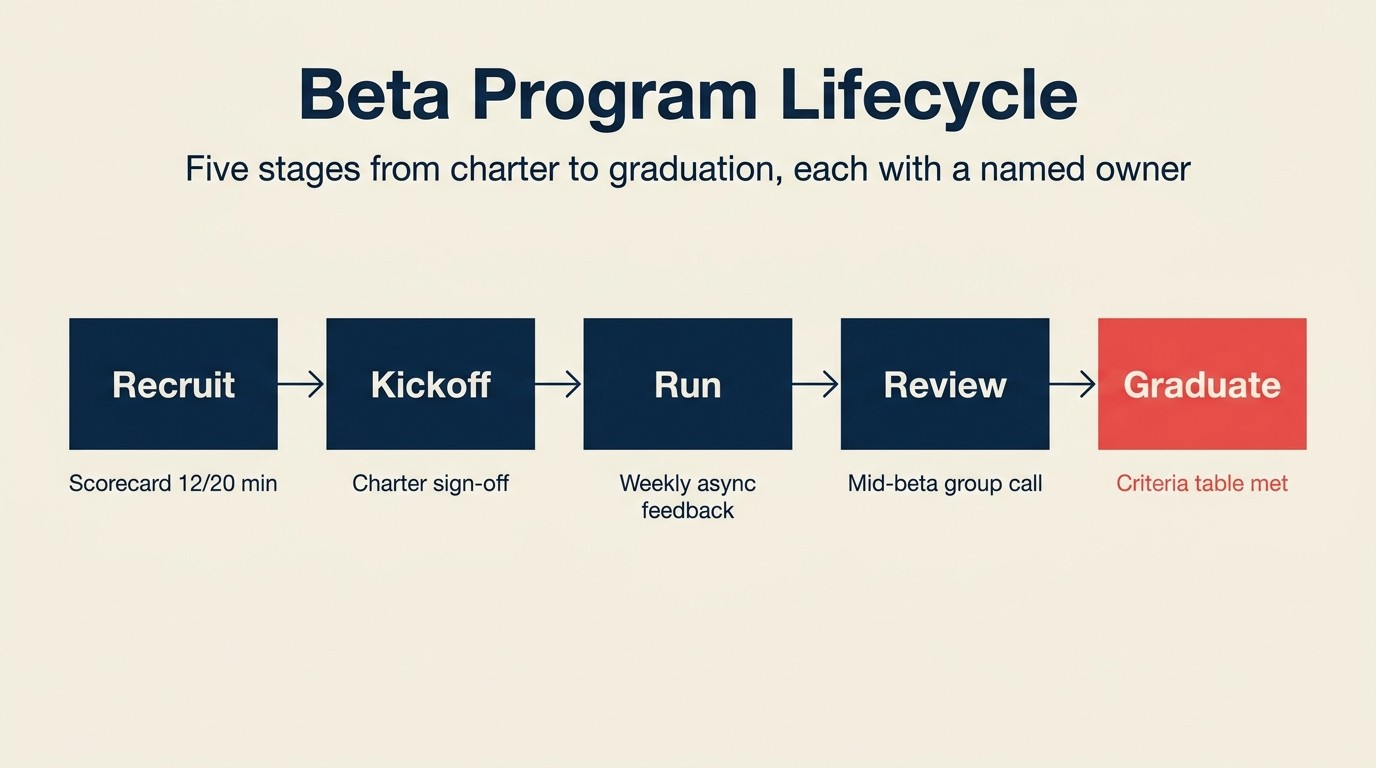

Artifact 2: Beta Recruitment Scorecard

Not every customer who wants to be in a beta should be in the beta. And the customers who want to be in it most loudly are often the worst candidates. They're usually in the program to influence the roadmap in their direction, not to validate yours.

Score each candidate account on the four criteria below. Any account scoring below 12 does not enter the program regardless of ARR or enthusiasm.

BETA RECRUITMENT SCORECARD

Score each criterion 0-5. Minimum qualifying score: 12/20.

| Criterion | 0-1 | 2-3 | 4-5 | Score |

|---|---|---|---|---|

| Technical fit: Does the customer's use case match what's being tested? | Use case is adjacent or unclear | Partial match: uses related features, not the exact ones in scope | Exact match: this is how they'll use the feature in production | |

| Engagement commitment: Can they show up? | No dedicated contact; last QBR was a no-show | Responsive but inconsistent; CSM has to chase | Named point of contact committed to feedback calls and async surveys | |

| Strategic value: ARR tier, expansion potential, reference potential | Below $25K ARR, no expansion signal, no reference willingness | $25K-$100K ARR, moderate fit | $100K+ ARR, expansion conversation open, willing to be a reference | |

| Account health: Is this account stable enough to participate? | At-risk, open escalation, NPS below 6 | Yellow health, minor open issues | Green health, no open escalations, NPS 7+ | |

Exclusion triggers (disqualify regardless of score):

- Account is in active churn conversation

- Open support escalation above P2 severity

- Renewal within 60 days of program end date

- CSM has flagged account as relationship-sensitive

Notes: [Add any account-specific context here before submitting to program owner]

Recruiting decision: Accepted / Waitlisted / Declined

The exclusion triggers matter as much as the scoring. At-risk accounts need a stable Customer Success Manager (CSM) relationship, not a beta program. Mixing them in creates a dynamic where the customer uses beta participation as leverage, and the PM can't tell whether feedback is genuine product input or a negotiating position. Customer health monitoring gives you the signal you need to make this call before recruitment begins. Once your cohort is locked and the participation agreement is signed, the real work starts: getting structured feedback every week.
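If you track candidates in a spreadsheet export or a script rather than by hand, the scorecard's rule (exclusion triggers first, then the 12/20 minimum) can be sketched as below. This is a minimal illustration, not part of the template: the `Candidate` class, the criterion keys, and the `evaluate` function are hypothetical names chosen for this example. It only decides whether an account qualifies; the Accepted / Waitlisted / Declined decision stays with the program owner.

```python
from dataclasses import dataclass, field

MIN_QUALIFYING_SCORE = 12  # out of 20, per the scorecard
# Hypothetical keys for the four scorecard criteria.
CRITERIA = ("technical_fit", "engagement_commitment", "strategic_value", "account_health")


@dataclass
class Candidate:
    name: str
    scores: dict[str, int]  # one 0-5 score per criterion
    # Any exclusion trigger from the scorecard that applies to this account.
    exclusion_triggers: list[str] = field(default_factory=list)


def evaluate(candidate: Candidate) -> tuple[bool, str]:
    """Return (qualifies, reason) for one candidate account."""
    # Exclusion triggers disqualify regardless of score, so check them first.
    if candidate.exclusion_triggers:
        return False, "excluded: " + "; ".join(candidate.exclusion_triggers)

    # Every criterion must be scored, and each score must be on the 0-5 scale.
    for criterion in CRITERIA:
        score = candidate.scores.get(criterion)
        if score is None or not 0 <= score <= 5:
            raise ValueError(f"{candidate.name}: {criterion} needs a score from 0 to 5")

    total = sum(candidate.scores[c] for c in CRITERIA)
    if total < MIN_QUALIFYING_SCORE:
        return False, f"score {total}/20 is below the minimum of {MIN_QUALIFYING_SCORE}"
    return True, f"score {total}/20 qualifies"


acme = Candidate(
    "Acme",
    {"technical_fit": 5, "engagement_commitment": 4, "strategic_value": 3, "account_health": 4},
)
print(evaluate(acme))  # (True, 'score 16/20 qualifies')
```

Checking the triggers before the total keeps a high-ARR, high-score account from slipping in when it is mid-escalation or close to renewal.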

Artifact 3: NDA and Participation Agreement: Key Clauses

Your legal team will draft the actual agreement. But CS teams routinely hand this to legal without flagging the four clauses that matter most for beta programs specifically. Brief legal on these before they draft.

BETA PARTICIPATION AGREEMENT: KEY CLAUSES

Clause 1: Confidentiality scope

What to include: Define exactly what's confidential: typically the feature itself, the roadmap context shared during the program, and any performance benchmarks discussed. Define the duration (typically 12-24 months from program close, or until GA launch, whichever comes first).

Common miss: Teams leave "confidential information" undefined. Customers then post screenshots in community forums because they didn't know screenshots were confidential. Name the media types explicitly.

Sample language: "Participant agrees not to disclose any Non-Public Product Information, including but not limited to screenshots, screen recordings, feature descriptions, or performance data, to any third party during the Program Period and for [X] months following Program close."

Clause 2: Feedback rights

What to include: The company's right to use feedback without attribution and without compensation. Customers sometimes expect IP ownership over ideas they contribute. This clause eliminates that ambiguity.

Sample language: "Any feedback, suggestions, or recommendations provided by Participant become the sole property of [Company] and may be incorporated into the product or related materials without attribution, compensation, or further consent."

Clause 3: No-promise clause

What to include: Explicit statement that participation does not constitute a commitment to build any specific feature or make any specific change. This is the most important clause for managing post-beta expectations.

Sample language: "Participation in this Program does not constitute a commitment by [Company] to develop, release, or prioritize any feature, change, or product direction discussed during the Program."

Clause 4: Exit clause

What to include: Either party can exit with [5-10] business days' notice. Define what happens to the customer's access on exit (typically immediate revocation) and what happens to data generated during the program (retained by company per standard data agreement).

Sample language: "Either party may terminate Participant's involvement in this Program with [N] business days' written notice. Upon termination, Participant's access to beta features will be revoked within [48 hours / 5 business days]."

Artifact 4: Week-by-Week Feedback Cadence

This is the operating rhythm that prevents beta programs from going silent after the kickoff call.

BETA PROGRAM FEEDBACK CADENCE

Week 1: Kickoff call (30 minutes)

Agenda:

- Program scope and what's explicitly out of scope (5 min, read the charter section aloud)

- Participant introductions: name, role, and the specific workflow they're testing (10 min)

- First task assignment: one specific thing to try before the Week 2 async check-in (5 min)

- Feedback channel setup: confirm they have access to the async form, not just Slack (5 min)

- Q&A (5 min)

What to capture: attendance, the specific use case each participant named, any immediate access or setup issues.

Weeks 2-4: Async feedback collection

Format: A structured form, not a Slack channel or email thread. Freeform feedback is hard to aggregate. Structured feedback is queryable.

Minimum questions per async check-in:

- What did you try this week? (1-2 sentences)

- What worked as expected?

- What didn't work or was confusing?

- On a scale of 1-5, how likely are you to use this feature in your current workflow? (scale)

- Anything blocking you from testing more?

Frequency: Weekly form, 5-10 minutes to complete. If it takes longer, shorten it.

CSM responsibility: Follow up on any likelihood score of 1 or 2 before the end of that same business week. Don't wait for the group call.
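To show what "structured feedback is queryable" means in practice, here is a minimal sketch that stores each async check-in as a record and pulls out the accounts the CSM needs to contact. The `CheckIn` class, its field names, and `needs_follow_up` are hypothetical, chosen only for this example; any form tool that exports the five answers per response would work the same way.

```python
from dataclasses import dataclass


@dataclass
class CheckIn:
    account: str
    week: int
    tried: str       # What did you try this week?
    worked: str      # What worked as expected?
    confusing: str   # What didn't work or was confusing?
    likelihood: int  # 1-5: how likely to use the feature in the current workflow
    blockers: str    # Anything blocking you from testing more?

    def __post_init__(self) -> None:
        # Reject answers outside the form's 1-5 scale.
        if not 1 <= self.likelihood <= 5:
            raise ValueError("likelihood must be between 1 and 5")


def needs_follow_up(check_ins: list[CheckIn], week: int) -> list[str]:
    """Accounts whose likelihood score for the given week was 1 or 2."""
    return sorted({c.account for c in check_ins if c.week == week and c.likelihood <= 2})


responses = [
    CheckIn("Acme", 2, "Built a report", "Filters", "Export naming", 4, "None"),
    CheckIn("Globex", 2, "Opened the dashboard", "Login", "Empty charts", 2, "No sample data"),
    CheckIn("Initech", 2, "Shared a view", "Sharing", "Permissions", 1, "Admin access"),
]
print(needs_follow_up(responses, week=2))  # ['Globex', 'Initech']
```

The same query is not possible against a Slack thread, which is the practical argument for the form.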

Mid-beta: Group feedback call (60 minutes)

Agenda:

- Pattern summary: share the top 3 themes from async feedback so far (15 min, PM presents, not CSM)

- Open discussion: what's working across accounts, what's failing across accounts (25 min)

- Conflicting feedback resolution: if 3 customers want one thing and 2 want the opposite, surface it here (15 min)

- Expectations check: re-read the no-promise clause and confirm all participants remember what we are and aren't committing to (5 min)

What to capture: Named conflicts, majority positions vs minority positions, any account-specific issues that need individual follow-up.

Final week: Graduation survey

5-question survey:

- How would you rate your overall beta experience? (1-5)

- Did the feature solve the problem you expected it to solve? (Yes / Partially / No, with a comment field)

- How likely are you to use this feature when it reaches GA? (1-5)

- What is the one thing that would most improve this feature before GA?

- Would you be willing to be a reference customer for this feature? (Yes / No / Maybe)

Artifact 5: Graduation Criteria Table

Beta programs without a graduation gate don't end. They fade. Define exit criteria before the program starts so the graduation conversation isn't a negotiation.

GRADUATION CRITERIA

Criteria to graduate to GA:

| Criterion | Threshold | Measurement method |

|---|---|---|

| Minimum usage completion | Each participant has completed at least [N] of the defined test tasks | CS platform task tracking or PM usage log |

| P1 and P2 bug count | Zero open P1 bugs; P2 bugs below [N] at program close | Engineering bug tracker |

| Graduation survey likelihood-to-use score | Average likelihood-to-use score of 3.5+ out of 5 across participants | Survey tool |

| Feedback-to-backlog conversion | At least [N]% of structured feedback has been triaged and logged in the product backlog | PM backlog tool |

| Customer sign-off | Program owner confirms graduation readiness with each participant individually | Email or call confirmation |

Criteria to exit a participant early:

| Trigger | Action | Notice period |

|---|---|---|

| Two consecutive missed async check-ins without communication | CSM sends re-engagement note; if no response in 5 business days, initiate exit | 5 business days |

| Competitive concern (customer discloses they are evaluating a competitor using program context) | Immediate exit, legal notification, access revocation | Immediate |

| Account health drops to at-risk during program | Program owner evaluates; typically exit with 10 business days' notice | 10 business days |

| Customer requests early exit | Honor immediately, retain all data generated | Immediate |

What CS does at graduation:

- Confirm GA access provisioning timeline with product

- Schedule a 20-minute graduation call to recap what was tested, what's shipping, and what's not (and why)

- Confirm reference status for any participants who said yes in the graduation survey

- Re-enter account into standard CSM motion. No "extended beta" limbo.

- If expansion conversation is appropriate, hand to AE with beta participation context
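The "Criteria to graduate to GA" table above works as a gate: every row must pass. A minimal sketch of that check follows, assuming you can pull the counts from your own tools. `ProgramState`, `Thresholds`, and `graduation_gaps` are hypothetical names for this example, and the bracketed [N] values from the table are left as parameters for you to set. Treating a program with no feedback items as fully triaged is also an assumption of this sketch.

```python
from dataclasses import dataclass


@dataclass
class ProgramState:
    tasks_completed: dict[str, int]  # participant -> defined test tasks completed
    open_p1_bugs: int
    open_p2_bugs: int
    likelihood_scores: list[int]     # graduation survey likelihood-to-use answers, 1-5
    feedback_items: int              # structured feedback items received
    feedback_triaged: int            # of those, triaged and logged in the backlog
    signed_off: set[str]             # participants the program owner has confirmed with


@dataclass
class Thresholds:
    min_tasks: int                   # the [N] test tasks in the table
    max_p2_bugs: int                 # open P2 bugs must stay below this [N]
    min_triaged_pct: float           # the [N]% triage threshold
    min_avg_likelihood: float = 3.5  # fixed in the table


def graduation_gaps(state: ProgramState, t: Thresholds) -> list[str]:
    """Return the unmet criteria; an empty list means the gate is passed."""
    gaps = []
    behind = sorted(p for p, n in state.tasks_completed.items() if n < t.min_tasks)
    if behind:
        gaps.append(f"usage: below {t.min_tasks} tasks: {', '.join(behind)}")
    if state.open_p1_bugs > 0 or state.open_p2_bugs >= t.max_p2_bugs:
        gaps.append(f"bugs: {state.open_p1_bugs} open P1, {state.open_p2_bugs} open P2")
    scores = state.likelihood_scores
    avg = sum(scores) / len(scores) if scores else 0.0
    if avg < t.min_avg_likelihood:
        gaps.append(f"survey: average likelihood {avg:.2f} is under {t.min_avg_likelihood}")
    if state.feedback_items:
        triaged_pct = 100 * state.feedback_triaged / state.feedback_items
    else:
        triaged_pct = 100.0  # assumption: nothing received means nothing left to triage
    if triaged_pct < t.min_triaged_pct:
        gaps.append(f"backlog: {triaged_pct:.0f}% triaged, need {t.min_triaged_pct:.0f}%")
    missing = sorted(set(state.tasks_completed) - state.signed_off)
    if missing:
        gaps.append(f"sign-off: pending for {', '.join(missing)}")
    return gaps
```

Returning the list of gaps rather than a single yes/no gives the graduation call an agenda: each entry is one thing to close before GA.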

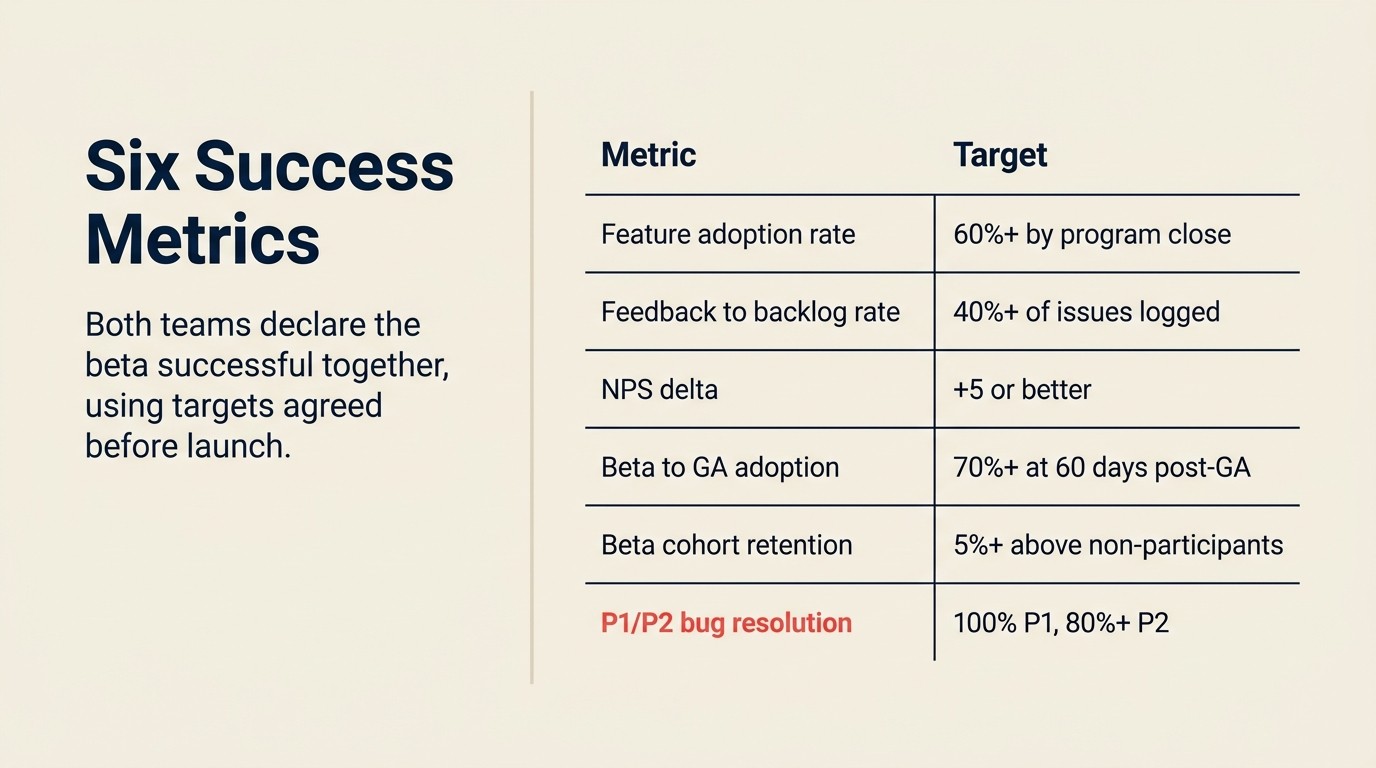

Artifact 6: Success Metrics Table

Product and CS declare the beta successful together, using metrics both teams pre-agreed on before launch.

BETA PROGRAM SUCCESS METRICS

| Metric | Target benchmark | Who owns measurement | When measured |

|---|---|---|---|

| Feature adoption rate: % of beta participants actively using the feature by end of program | 60%+ | PM (product analytics) | At program close |

| Feedback-to-backlog conversion rate: % of unique issues reported that become logged backlog items | 40%+ | PM (backlog tool) | At program close |

| NPS delta: participant NPS pre-program vs post-program | +5 or better | CS (survey tool) | Pre-program kickoff and graduation survey |

| Beta-to-GA adoption rate: % of beta participants using the feature 60 days post-GA | 70%+ | PM (product analytics) | 60 days post-GA launch |

| Retention signal: 12-month renewal rate of beta participants vs non-participants | Beta cohort ≥ 5% higher | CS / RevOps | 12 months post-program |

| Bug resolution rate: % of P1/P2 bugs reported during beta resolved before GA | 100% P1, 80%+ P2 | Engineering | At GA launch |

How to read the metrics:

- A feature adoption rate below 40% at program close means the feature needs rework before GA, not just polish.

- A feedback-to-backlog rate below 20% means the CS team isn't escalating, or the PM isn't triaging. Either way, the loop is broken.

- The retention signal takes 12 months to measure, but it's the metric that justifies the program's existence to CFO-level stakeholders.
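The two program-close rates and their reading rules reduce to simple arithmetic. The sketch below is illustrative only: `pct` and `read_metrics` are hypothetical helpers, and the "below target" label for the band between the warning threshold and the target is this example's wording, not the template's.

```python
def pct(part: int, whole: int) -> float:
    """Percentage of whole, or 0.0 when there is nothing to measure."""
    return 100 * part / whole if whole else 0.0


def read_metrics(active_users: int, participants: int,
                 backlog_items: int, unique_issues: int) -> dict[str, str]:
    """Compute the two program-close rates and apply the reading rules above."""
    adoption = pct(active_users, participants)
    conversion = pct(backlog_items, unique_issues)

    # Feature adoption: target 60%+, rework signal below 40%.
    if adoption >= 60:
        adoption_note = "on target"
    elif adoption >= 40:
        adoption_note = "below target"
    else:
        adoption_note = "needs rework before GA"

    # Feedback-to-backlog: target 40%+, broken loop below 20%.
    if conversion >= 40:
        conversion_note = "on target"
    elif conversion >= 20:
        conversion_note = "below target"
    else:
        conversion_note = "feedback loop is broken"

    return {
        "feature_adoption": f"{adoption:.0f}% ({adoption_note})",
        "feedback_to_backlog": f"{conversion:.0f}% ({conversion_note})",
    }


# 7 of 10 participants active; 6 of 24 unique issues logged in the backlog.
print(read_metrics(7, 10, 6, 24))
# {'feature_adoption': '70% (on target)', 'feedback_to_backlog': '25% (below target)'}
```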

Five Beta Program Mistakes That Kill Programs

HBR's research on closing the feedback loop found that the greatest impact from customer programs comes from relaying results immediately to the people who served those customers, and empowering them to act. Beta programs that delay this loop until post-graduation lose the feedback value entirely.

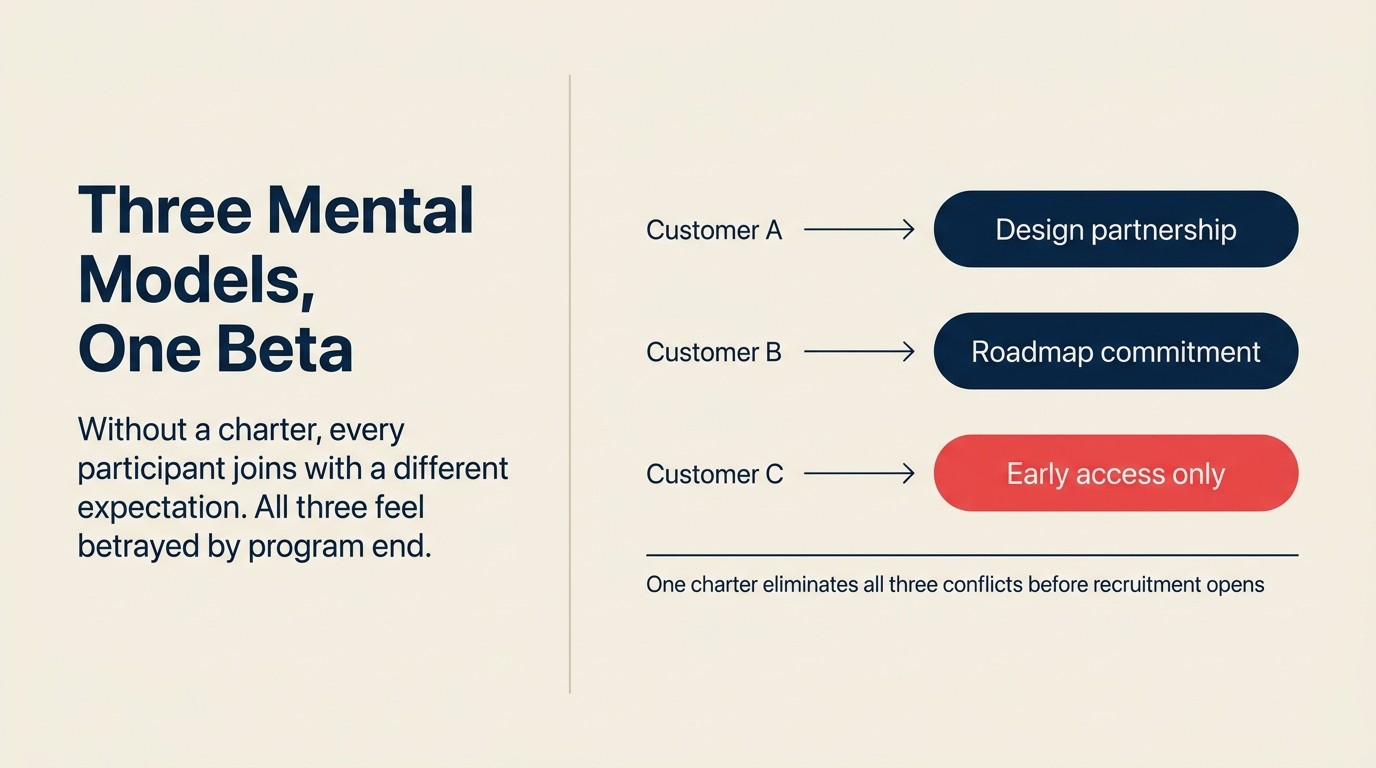

No charter before recruitment. Customers join with different expectations. One thinks it's a design partnership. Another thinks it's early access. A third thinks it's a roadmap commitment. Without a written charter, you're managing three different mental models simultaneously, and feedback quality degrades accordingly.

At-risk accounts in the cohort. An at-risk customer in your beta is not a validation participant. They're a relationship risk you have to manage. Their feedback is filtered through "if I say this is broken, will it hurt my renewal conversation?" Keep at-risk accounts out until they're stable. Agree on account health thresholds before recruitment opens so neither team is surprised mid-program.

Promise creep. It starts with "we'll look into that." It ends with a customer who believes a PM committed to a feature. Train every PM who joins a beta call on what language counts as a commitment and what doesn't. The no-promise clause in the participation agreement is legal protection. The training on what to say during calls is operational protection.

No graduation gate. Programs without a defined exit end one of two ways: they're abruptly shut down, or they never formally close. Define the graduation criteria before launch so the endpoint is a milestone, not a decision.

Feedback with no response. Nothing kills CSM motivation to recruit future beta participants faster than a program where customers gave feedback and heard nothing back. Close the loop, even when the answer is "we reviewed this and it didn't make the backlog." Closing the feedback loop with customers covers the exact language for both the "yes, we're building it" and "not right now, here's why" responses. The accounts that get this response become your best beta recruits next cycle.

Pre-Launch, Mid-Beta, and Post-Beta Checklists

| Phase | Item | Owner | Complete? |

|---|---|---|---|

| Pre-launch | Charter drafted and signed off | CS lead + PM | |

| Pre-launch | Recruitment scorecard completed for all candidates | CSM | |

| Pre-launch | NDA/participation agreement executed for all participants | Legal + CS | |

| Pre-launch | Feedback form built and tested | PM or CS Ops | |

| Pre-launch | Kickoff call scheduled with all participants | CSM | |

| Pre-launch | Engineering bug triage SLA agreed | PM + Engineering | |

| Mid-beta | Weekly async check-ins sent and response rates tracked | CSM | |

| Mid-beta | Any 1-2 likelihood scores followed up same week | CSM | |

| Mid-beta | Mid-beta group call completed | PM + CSM | |

| Mid-beta | Feedback-to-backlog conversion running at 40%+ | PM | |

| Mid-beta | No at-risk accounts still active in cohort | CSM | |

| Post-beta | Graduation survey sent and responses collected | CSM | |

| Post-beta | P1/P2 bugs resolved before GA launch | Engineering | |

| Post-beta | Reference customers confirmed | CS lead | |

| Post-beta | Standard CSM motion resumed for all participants | CSM | |

| Post-beta | 12-month retention tracking initiated in RevOps | RevOps | |

How This Connects to the Broader CS-Product Loop

The beta program doesn't exist in isolation. It's the most intensive form of the Voice of Customer (VoC) pipeline from CS to product: a concentrated, time-boxed version of the feedback channel that should be running continuously. The artifacts here are the formal infrastructure for what that pipeline looks like at maximum intensity.

Running a beta versus a simpler early access program, ongoing early-access account management, and post-beta advisory board operations are all covered in the Learn More links below.

Rework Analysis: Based on beta program data from mid-market SaaS companies, programs using all six operational artifacts (charter, scorecard, NDA, cadence, graduation criteria, and metrics) complete on schedule 2-3x more often than programs with ad hoc structure. The single highest-leverage artifact is the graduation criteria table. Teams that define exit criteria before recruitment begins avoid the "permanent soft launch" failure mode in over 80% of cases. Our framework suggests building the graduation criteria first, then working backward to the charter: knowing what "done" looks like before you define who participates eliminates the most common scope disputes before they start.

Learn More

- Running Customer Beta Programs

- Early Access Tier: Who Gets In and How It's Managed

- Closing the Feedback Loop with Customers

- The VOC Pipeline: How CS Feeds Product

- Customer Co-Design and Advisory Board Operations

- CS-Product Alignment Glossary

- The CS-PM 1:1 Cadence

Frequently Asked Questions

What is a beta program charter and why does it matter?

A beta program charter is a written document that defines program scope, out-of-scope items, success criteria, participant count, and stakeholder sign-offs before recruitment begins. It matters because without a charter, participants join with different mental models: one expects a design partnership, another expects a roadmap commitment, a third expects early access. Misaligned expectations produce feedback filtered through unspoken agendas, and that feedback is unreliable for product decisions.

How many customers should be in a beta program?

Most mid-market SaaS beta programs run best with 8-15 participants. Fewer than 6 produces insufficient signal diversity; more than 20 makes the feedback cadence unmanageable for one PM. Pragmatic Institute research finds that programs exceeding 20 participants see a 40% drop in per-participant feedback quality because the structured check-in cadence breaks down. Quality of participant match matters more than quantity.

What is the beta recruitment scorecard and how do you use it?

The recruitment scorecard is a 4-criterion rubric that scores each candidate account on technical fit, engagement commitment, strategic value, and account health, each on a 0-5 scale with a minimum qualifying score of 12/20. You use it before outreach: score every candidate before inviting them. Any account below 12 is declined regardless of ARR or enthusiasm. Accounts in an active churn conversation, with an open support escalation above P2 severity, renewing within 60 days of program end, or flagged by the CSM as relationship-sensitive are disqualified regardless of score.

What should graduation criteria include?

Graduation criteria should define: minimum usage completion (each participant completes N defined test tasks), P1/P2 bug thresholds (zero open P1 bugs, P2 bugs below N at close), graduation survey likelihood-to-use score (average of 3.5+ out of 5), feedback-to-backlog conversion rate (at least N% of structured feedback triaged into the backlog), and individual customer sign-off from the program owner. All five criteria should be written before recruitment opens, not negotiated at program close.

How do you close the feedback loop after a beta without making promises?

The recommended language is: "We wanted to let you know that the issue you raised during the beta has been logged as a product backlog item. We can't commit to a specific timeline, but your report contributed to the team prioritizing this. We'll reach out when there's a status update." This closes the loop without implying a commitment. The no-promise clause in the participation agreement is the legal protection; consistent use of this language is the operational protection.

What metrics should you track to know if a beta program succeeded?

Track six metrics: feature adoption rate (target 60%+ of participants actively using the feature by program close), feedback-to-backlog conversion rate (40%+), NPS delta (pre-program vs graduation survey, target +5 or better), beta-to-GA adoption rate (70%+ of beta participants still using the feature 60 days post-GA), 12-month retention rate of beta cohort vs non-participants (beta cohort 5%+ higher), and P1/P2 bug resolution rate (100% P1, 80%+ P2 resolved before GA). The retention signal takes 12 months to measure but is the metric that justifies the program to CFO-level stakeholders.

What is the most common reason beta programs fail to produce actionable data?

Unstructured feedback collection. Per Product Management Institute research, 67% of software beta programs fail to produce actionable product data because participants provide impressions rather than reproducible observations. The fix is structured weekly forms (not Slack channels or email threads) with specific questions: what did you try, what worked, what didn't, and a 1-5 likelihood-to-use scale. Any score of 1 or 2 requires CSM follow-up before the end of that same business week.
