CS & Product Alignment Glossary: 30 Terms Every CS and Product Team Must Agree On

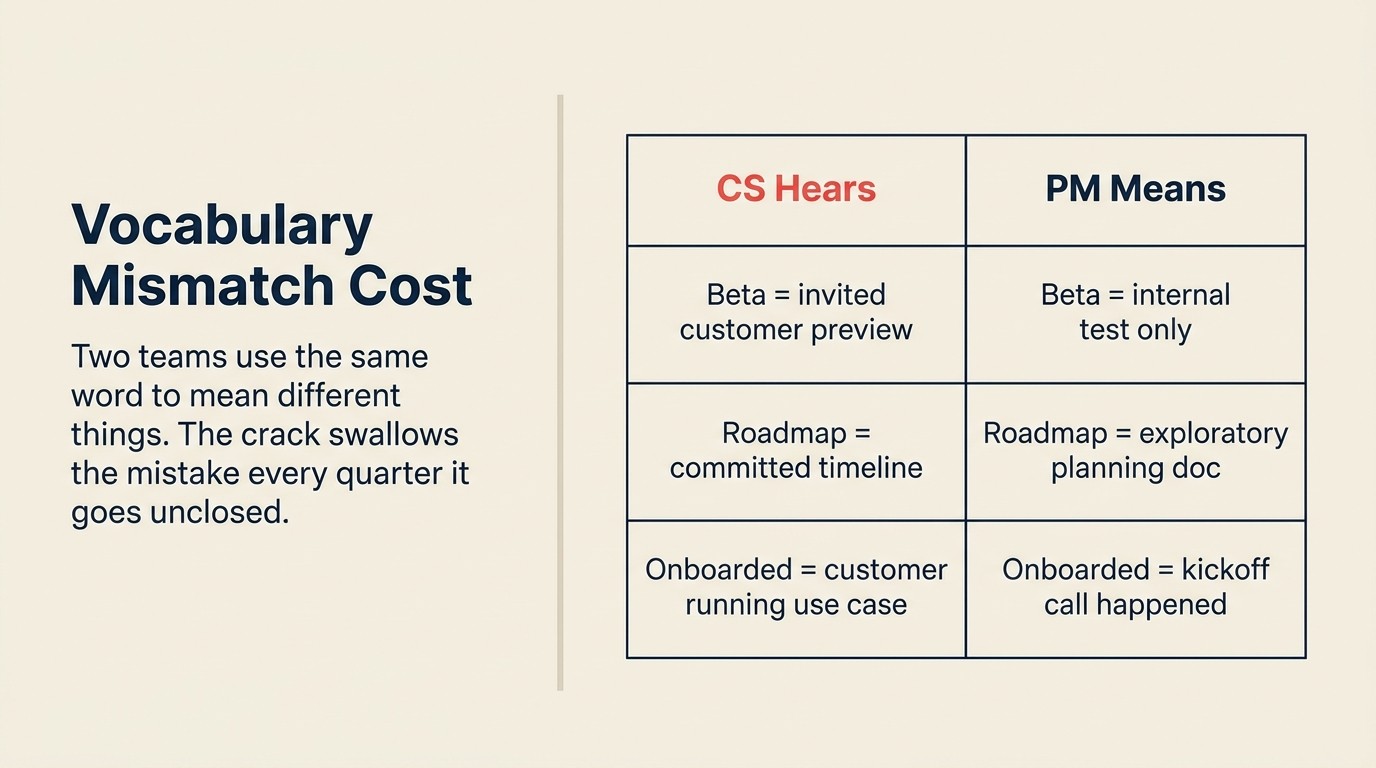

Here's a scenario that replays every quarter at mid-market SaaS companies. A Product Manager (PM) uses the word "beta" in a roadmap conversation with a Customer Success Manager (CSM). The PM means internal test, not customer-ready. The CSM hears invited customer preview. Two weeks later, the CSM tells a high-ARR account they'll be part of the beta. The PM is blindsided. The customer is confused when the feature isn't available.

Nobody lied. Nobody was wrong. They used the same word to mean different things, and the misunderstanding slipped through the gap between the two teams.

Process gets the blame when CS-Product alignment fails, but the cause is usually vocabulary. Two teams can run the same feedback ritual and still reach different conclusions. "Roadmap" means a committed timeline to CS and an exploratory planning document to a PM. "Onboarded" means "the kickoff call happened" to Sales and "the customer is actively running their primary use case" to CS. "Feature request" means urgent customer need to a CSM and unvalidated signal to a PM.

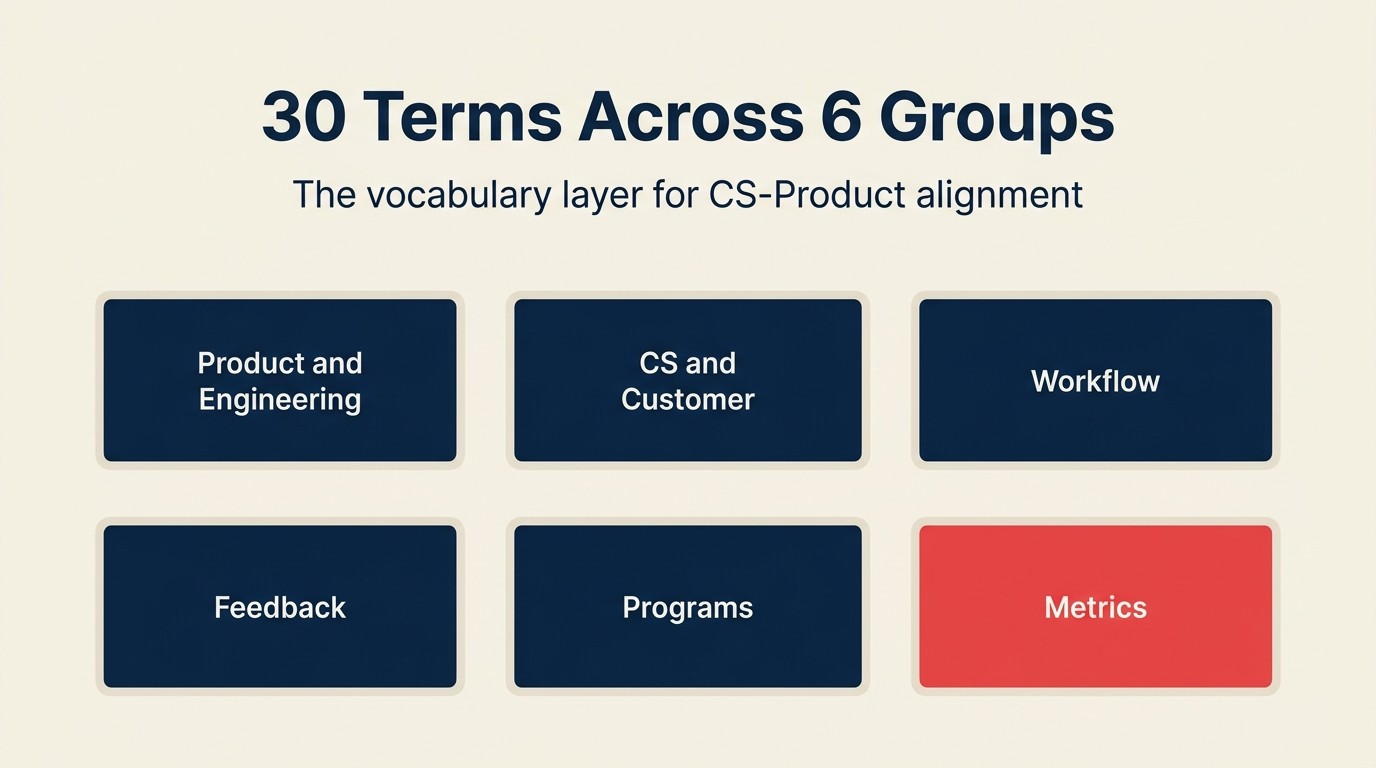

This glossary is the canonical vocabulary layer for the entire cs-product-alignment collection. Every article in the collection links back here when introducing a term for the first time. Use it as a working tool. Bring your VP CS and Head of Product into the same room, open this document, and work through the sections together. For every term where your current definitions diverge, that divergence is alignment work disguised as a vocabulary disagreement. The cost of CS-product misalignment compounds every quarter you leave it unaddressed.

Most-Cited Terms in CS-Product Alignment

The six terms that appear most frequently in CS-Product alignment breakdowns, and where definitional gaps cause the most operational damage:

- NRR (Net Revenue Retention): the north-star metric that CS and Product ultimately share. When CS and Product disagree on how to prioritize, NRR impact is the tiebreaker both sides can accept. See ARR-weighted feedback for how this translates into prioritization input.

- JTBD (Jobs-to-be-Done): the framework that converts a customer's feature request into a problem definition a PM can spec against. CSMs who understand JTBD submit better VoC tickets. PMs who teach it to CSMs get better input. See the full JTBD definition.

- MVP (Minimum Viable Product): the term most likely to be misunderstood by customers when CSMs use it without framing. An MVP is a learning vehicle, not a finished product. Customers who participate in MVP testing need to hear that distinction explicitly. See MVP.

- Beta: the most common single-word source of broken customer expectations at the CS-Product seam. "Beta" is invited-customer testing with feedback obligations; it is not "early access" and is not "almost done." See Beta.

- NPS (Net Promoter Score): a lagging signal that becomes useful only when paired with CSM follow-up. Raw NPS without a closed-loop pipeline is noise. See NPS.

- MoSCoW (Must / Should / Could / Won't): the prioritization framework PMs use to communicate roadmap certainty. CSMs who understand MoSCoW can give customers honest "must" vs. "could" answers without overpromising. See Roadmap and Backlog for the related workflow context.

How to Use This Glossary

This isn't a reading exercise. It's a facilitation tool.

Run a 60-minute session with the VP CS and Head of Product. Work through each section. For every term, ask one question: "Do we have a written, agreed definition that both teams use right now?" If yes, confirm it matches what's here. If no, or if each team gives a different answer, you've found a gap worth closing before the next quarterly planning cycle.

Each term has a definition and a one-line example. The example shows the term applied to a real mid-market B2B scenario, because abstract definitions look identical until you put them against a customer situation. The concrete case is where definitional disagreements surface.

Link to individual term groups from other documents using the anchor IDs on each section heading. When onboarding a new PM or CSM, send them this glossary in week one.

So: which terms are the most dangerous when left undefined? Start with Product and Engineering, the vocabulary where CS is most likely to be working from assumptions rather than shared definitions.

Product & Engineering Terms

These are the terms that originate in Product and Engineering and must be legible to CS. When CS doesn't share these definitions, they either over-promise to customers (committing to timelines the PM hasn't confirmed) or under-communicate by waiting for official releases instead of pre-briefing strategic accounts.

PM: Product Manager

Owns a product area's roadmap, prioritization, and delivery. In the CS-Product seam, the PM is the primary recipient of structured VoC input and the decision-maker on whether a customer request enters the backlog. A PM's yes to a feature request means it is under consideration; it doesn't mean it will ship, or when.

Example: A CSM escalates a workflow gap affecting four accounts. The PM reviews the VoC ticket, weighs it against backlog priority, and responds within five business days with a backlog status, not a delivery date.

PMM: Product Marketing Manager

Translates product changes into customer-facing language: release notes, in-app messaging, sales enablement. At the CS-Product seam, PMM is the structural bridge between what Product ships and what CS communicates to customers. PMM is not a communications function that activates after decisions are made; it's the translation layer in both directions.

Example: PMM takes a technical feature spec from the PM and produces three outputs: a CS pre-brief, an in-app announcement, and an external release note, each calibrated for its audience.

Engineering Manager (EM)

Leads the engineering team executing against the product roadmap. Relevant at the seam when CS escalates complex issues or requests that require EM buy-in before a PM can commit. The EM owns resource allocation on the engineering side; a PM can't commit to a delivery timeline without EM alignment.

Example: CS escalates a customer-blocking integration bug. The PM routes it to the EM, who confirms a two-sprint fix cycle. The PM communicates the timeline to CS the same day.

Product Designer

Owns the user experience of a feature or flow. At the seam, product designers run ride-alongs with CSMs and customers to surface workflow gaps that don't appear in usage data. Direct customer exposure shapes design decisions earlier, reducing the "why does this work this way?" post-GA feedback cycle.

Example: A product designer joins two CSM-led onboarding calls for a new reporting module. The observations surface three UI friction points that usage data didn't show, which are incorporated before GA.

JTBD: Jobs-to-be-Done

A framework that defines what a customer is trying to accomplish (the "job") rather than what feature they requested. Jobs-to-be-Done is grounded in the idea that customers "hire" products to get specific jobs done, a concept closely tied to Clayton Christensen's innovation research. CS data is one of the richest sources of raw JTBD signal in any B2B company. CSMs hear the job every time a customer describes a workaround: they're telling you exactly what they're trying to do and what the product won't let them do. See Jobs-to-be-Done from CS Data for the full operational approach.

Example: Customers keep exporting data to spreadsheets and doing calculations manually. The feature request says "better export." The JTBD framing: customers need custom date-range calculations without leaving the platform.

MVP: Minimum Viable Product

The smallest version of a feature that can be tested with customers and generate learning. MVP was coined to describe a version with just enough features to be usable by early customers who then provide feedback for future development. MVP is a learning vehicle, not a finished product. In beta and early access programs, CS manages the relationship with customers participating in MVP testing and is accountable for communicating what "MVP" means to participants, specifically what is not included.

Example: A reporting MVP ships with three chart types. CS pre-briefs the four accounts in the MVP cohort: the feature is live, two more chart types are in the next sprint, and feedback should go to the PMM feedback channel.

GA: General Availability

The point at which a feature is fully released to all customers. GA triggers CS communication responsibilities: training, in-app guidance, release notes, and any proactive CSM outreach to high-ARR or at-risk accounts. "GA" does not mean customers are aware. It means the product is ready and the communication responsibility starts.

Example: The CS pre-brief goes to all CSMs on Monday. The feature reaches GA on Tuesday. PMM publishes the release note on Wednesday. Strategic accounts receive direct CSM outreach by Thursday.

Beta

A pre-GA stage where a feature is available to a selected group of customers for testing and feedback. Beta programs are jointly owned by Product (feature readiness) and CS (customer selection and relationship management). CS selects participants based on account health, ARR, and likelihood of productive feedback, not based on who asked loudest. The running customer beta programs guide covers the full selection and management process.

Example: Eight accounts are selected for a beta program. CS owns the relationship and the feedback collection. Product owns the feature and the response to the feedback. PMM owns the communication template for participants.

Alpha

Earlier than beta, typically internal or with one to three design-partner customers. Alpha participants are usually sourced and managed by CS or PMM with direct PM involvement. Alpha feedback shapes the feature before it's built for broad testing. Beta feedback shapes it before GA.

Example: One design-partner customer with deep product expertise joins the alpha for a new automation engine. The PM runs the sessions. CS maintains the relationship and ensures the customer understands this is pre-beta.

RC: Release Candidate

A build that is feature-complete and expected to become GA unless a blocking bug is found. CS may pre-brief strategic accounts at RC stage to prepare the account team for the GA communication wave. RC status means "no new features," not "no bugs."

Example: The integrations module reaches RC on Friday. CS contacts the three accounts most dependent on the feature to prepare their teams. GA ships the following Tuesday.

Key Facts: The Cost of Undefined Terms

- B2B SaaS companies with high CS-Product term alignment report 23% faster post-GA feature adoption, because features are communicated consistently by CSMs who understand what shipped and why, per ProductPlan's 2024 product management benchmarks.

- 61% of SaaS companies have no shared written definition of what constitutes "feature adoption," the primary post-GA metric at the CS-Product seam, per Pendo's State of Product Leadership report.

- 54% of mid-market CS teams learn about new features at the same time as customers, from changelogs or in-app banners rather than through a pre-brief from Product or PMM, per ChurnZero's annual CS benchmarks.

CS & Customer Terms

These are the terms that originate in CS and must be legible to Product. When Product doesn't share these definitions, PMs prioritize without understanding which customers are at risk and why.

CSM: Customer Success Manager

Owns the post-sale customer relationship. Primary accountability is retention and health. In the CS-Product seam, the CSM is the front-line collector of qualitative customer signal: the person who hears what customers can't do, won't do, or have found a workaround for. CSM signal is the raw material of the feedback pipeline.

Example: A CSM's five accounts in a specific vertical share the same onboarding friction. She documents the pattern, categorizes it using the shared feedback taxonomy, and routes it to the PM responsible for that product area.

Customer Health Score

A composite numeric signal summarizing the risk profile of an account, combining usage data, support ticket volume, NPS or CSAT scores, engagement frequency, and CSM sentiment. Product teams use health score as one prioritization input: when a feature area correlates with low health scores, it is a candidate for immediate improvement. See product usage meets customer health dashboard for how both data streams connect operationally.

Example: The integrations module consistently correlates with health scores below 60. The PM reviews this signal with CS Ops quarterly and uses it to weight integration-related feedback above other categories.
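One way to make the composite concrete is a weighted average of normalized inputs. The sketch below is a minimal illustration only: the five inputs come from the definition above, but the weights, the 0-100 normalization, and the name `health_score` are assumptions, not a standard formula.

```python
# Hypothetical weights; each company tunes its own. They sum to 1.0.
# Every component is assumed to be pre-normalized to 0-100, where higher = healthier.
WEIGHTS = {
    "usage": 0.30,
    "support": 0.20,        # fewer or less severe tickets -> higher score
    "survey": 0.20,         # NPS or CSAT rescaled to 0-100
    "engagement": 0.15,
    "csm_sentiment": 0.15,
}


def health_score(signals: dict[str, float]) -> float:
    """Combine normalized component scores into one 0-100 health score."""
    missing = WEIGHTS.keys() - signals.keys()
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    return round(sum(WEIGHTS[name] * signals[name] for name in WEIGHTS), 1)


# Hypothetical account with weak usage of the integrations module.
account = {"usage": 40, "support": 55, "survey": 50, "engagement": 70, "csm_sentiment": 60}
score = health_score(account)
print(score, "at risk" if score < 60 else "healthy")
```

Whatever formula a team chooses, the point for the glossary is that CS and Product agree on the inputs and the threshold (60 in the example above) before either side cites the score in a prioritization discussion.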

Customer Advocacy

A customer's willingness to publicly support the product: reference calls, case studies, G2 reviews, advisory board participation. High-advocacy customers are disproportionately valuable for beta programs and co-design because they're invested enough to give productive feedback and absorb imperfect builds.

Example: A CSM identifies three high-advocacy accounts for an upcoming beta. They're selected not because they're the largest ARR, but because they've completed reference calls and submitted G2 reviews, signals of genuine engagement.

NPS: Net Promoter Score

A single-question survey metric measuring how likely a customer is to recommend the product. Net Promoter Score was developed by Fred Reichheld and first published in a 2003 Harvard Business Review article. It's useful as a lagging health signal and directional trend indicator; insufficient as a real-time feedback mechanism. NPS without a structured follow-up pipeline is noise. NPS with closed-loop follow-up by the CSM becomes qualitative signal.

Example: A customer submits an NPS rating of 5 (detractor). The CSM receives the alert, reaches out within 48 hours, and surfaces a specific friction in the reporting module. That friction goes into the VoC pipeline as a tagged, weighted item.
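For teams that have never computed the score by hand, the standard calculation is short: respondents who answer 9 or 10 are promoters, 0 through 6 are detractors, 7 and 8 are passives, and the score is the percentage of promoters minus the percentage of detractors. The function name and the sample ratings below are illustrative.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6).

    Passives (7-8) count toward the total but toward neither group.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical responses on the 0-10 scale.
ratings = [10, 9, 9, 8, 7, 7, 6, 5, 10, 3]
nps = net_promoter_score(ratings)
print(nps)
```

The aggregate hides which accounts are the detractors and why, which is the reason the definition above pairs the score with CSM follow-up.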

Advisory Board

A small group of senior customer stakeholders, typically 8 to 15, who meet quarterly to provide strategic input on roadmap direction. Advisory board membership is managed by CS; the agenda is co-owned with Product and PMM. Advisory boards are not customer support sessions; they are input sessions that shape the next quarter's roadmap priorities.

Example: The Q2 advisory board includes the VP Ops from eight strategic accounts. Product presents three roadmap options. CS facilitates the discussion. PMM documents the output and routes it to the PM prioritization session the following week.

Customer Council

A broader, more operational version of an advisory board, typically 20 to 50 customers in a product area who review feature previews and provide structured feedback. CS selects participants; Product defines the agenda. Customer councils run monthly or per release cycle rather than quarterly.

Example: The reporting customer council reviews a dashboard prototype with 30 accounts. PMM documents the feedback. The PM uses it to prioritize the three most-requested chart types for the next sprint.

Workflow Terms

These terms describe how Product executes. CS must understand them to set honest expectations with customers, route feedback correctly, and time their communications.

Backlog

The prioritized list of features, improvements, and fixes that a product team plans to build. Customer feedback from CS enters the backlog as structured input after going through a VoC pipeline, not directly from a CSM request. A "backlog item" is not a commitment; it is a tracked consideration.

Example: A CSM asks the PM to add a customer's feature request to the backlog. The PM confirms it's been logged as a VoC item. The CSM communicates to the customer: "We've captured this as a tracked input. We can't commit to a timeline yet."

Sprint

A fixed development cycle, typically two weeks, in which an engineering team completes a defined set of work. CS-facing implication: sprint commitments are why a PM can't promise a customer fix "this week" without checking the sprint plan first. Mid-sprint changes disrupt the delivery of items already committed.

Example: A customer escalates a bug on day eight of a two-week sprint. The PM confirms it's not a blocker for the current sprint but slots it as the first item in the next sprint. CSM communicates a 10-day resolution window.

Roadmap

The product team's planned sequence of initiatives across a time horizon, typically quarterly or annual. CS communicates roadmap direction to customers; the level of detail shared depends on whether the roadmap is public, private, or gated. A roadmap is a planning document, not a commitment. PMs need this distinction to be shared explicitly with CSMs before every customer conversation.

Example: The Q3 roadmap includes three initiatives. Two are shareable at the strategic-account level under NDA. One is not shareable. CS receives a pre-brief on what is and isn't on the table before any account team has a roadmap conversation.

Release

A versioned set of changes shipped to customers. Releases trigger a coordinated communication sequence between Product, PMM, and CS. The release is not the end of the PM's job. It's the beginning of the adoption and communication cycle.

Example: Release 3.4 ships on March 15. Product closes the sprint. PMM publishes the release note. CS distributes the pre-brief to account teams. CSMs with affected accounts initiate proactive outreach.

Deprecation

The process of announcing that a feature or capability will be removed or replaced in a future release. Deprecation requires CS involvement early. Affected customers need advance notice, a migration path, and in many cases a conversation with their CSM before the announcement reaches their inbox. The ownership model for this communication is defined in who owns customer-facing changes.

Example: The legacy CSV import flow is deprecated with a 90-day notice. CS identifies every account using it. PMM drafts the announcement. CSMs contact all affected accounts before the public notice goes out.

Sunset

The end-of-life of a deprecated feature: it is no longer available. Sunsets that lack CS coordination are a leading cause of retention risk and emergency escalations. The gap between deprecation (the notice) and sunset (the removal) is the window where CS must drive migration.

Example: The legacy import flow sunsets on June 30. CS tracks migration status for every affected account weekly from April 1. Accounts still on the legacy flow at June 1 receive direct CSM outreach and an escalation path.

Feedback Terms

These terms define how customer signal travels from CS to Product. Without shared definitions, feedback is either too raw for PMs to use or too stripped-down for the customer context to survive.

VoC: Voice of Customer

The aggregate of what customers say, request, complain about, and praise, collected through CSM calls, support tickets, QBRs, NPS surveys, and advisory sessions. VoC is the raw material of the CS-to-Product feedback pipeline. But VoC without structure is noise. The pipeline exists to turn raw signal into weighted, categorized input that PMs can act on.

Example: A CSM conducts a QBR and hears the customer describe a workaround. She logs the verbatim in the VoC system, tags it to the relevant product area, and scores its impact using the shared impact scoring rubric.

Feature Request

A customer's ask for a new capability or change. Feature requests are not backlog items. They must be categorized, weighted by ARR impact, and routed through the VoC pipeline before a PM can act on them. "Can we build this?" is a different question from "Has this been weighted and prioritized?"

Example: Three accounts request a Salesforce integration. The CSM logs each request in the VoC system with ARR and use-case context. PMM aggregates the three into one weighted item: "Salesforce integration: $840K ARR affected, 3 accounts, high urgency." The PM reviews it in the next prioritization session.
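The aggregation step in this example can be sketched as a small roll-up. This is a minimal sketch under stated assumptions: the `VocRequest` fields and the `aggregate` helper are hypothetical, and the per-account ARR split is invented so that it sums to the $840K in the example.

```python
from dataclasses import dataclass


@dataclass
class VocRequest:
    account: str
    topic: str
    arr_usd: int


def aggregate(requests: list[VocRequest], topic: str) -> dict:
    """Roll individual requests for one topic into a single weighted item."""
    # Key by account so an account that logs the same request twice is counted once.
    arr_by_account = {r.account: r.arr_usd for r in requests if r.topic == topic}
    return {
        "topic": topic,
        "accounts": len(arr_by_account),
        "arr_usd": sum(arr_by_account.values()),
    }


# Hypothetical per-account ARR; only the $840K total comes from the example.
logged = [
    VocRequest("Account A", "Salesforce integration", 400_000),
    VocRequest("Account B", "Salesforce integration", 300_000),
    VocRequest("Account C", "Salesforce integration", 140_000),
    VocRequest("Account D", "Custom exports", 90_000),
]
item = aggregate(logged, "Salesforce integration")
print(item)
```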

Customer Impact Score

A numeric weight assigned to a piece of feedback reflecting how many customers are affected and how much ARR is at risk. Combines account count with ARR to prevent ten small accounts from outweighing one strategic logo. The formula varies by company but typically weights ARR more heavily than account count.

Example: A request from one account at $300K ARR scores higher than the same request from five accounts at $40K each, because the ARR at risk is 50% greater even though the account count is lower.
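Because the formula varies by company, the following is only one possible shape: a linear score in which ARR carries more weight than account count. The function name and both weights are assumptions chosen for illustration, not a recommended calibration.

```python
def impact_score(
    total_arr_usd: float,
    account_count: int,
    points_per_100k_arr: float = 1.0,   # hypothetical weight on ARR
    points_per_account: float = 0.1,    # hypothetical, deliberately smaller weight
) -> float:
    """Linear impact score that weights ARR more heavily than account count."""
    return points_per_100k_arr * (total_arr_usd / 100_000) + points_per_account * account_count


one_strategic = impact_score(300_000, 1)    # one account at $300K ARR
five_small = impact_score(5 * 40_000, 5)    # five accounts at $40K each ($200K total)

print(one_strategic > five_small)
```

With these weights the single $300K account outranks the five $40K accounts, matching the example. A team that weights account count more heavily could get the opposite ordering, which is why the weights belong in the written definition.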

ARR-Weighted Feedback

A method of prioritizing customer requests by the combined ARR of the requesting accounts rather than raw account count. A request from accounts representing $2M ARR ranks above the same request from accounts representing $200K ARR, regardless of how many individual accounts are making the request.

Example: The PM reviews the quarterly VoC synthesis. The top-weighted request by ARR (custom date-range exports) covers 12 accounts and $1.8M ARR. It surfaces above a more frequently requested item that covers 20 accounts but only $600K ARR.
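The difference between the two orderings is easy to see side by side. The sketch below uses the figures from the example; the dictionary layout and the label for the second item are illustrative.

```python
requests = [
    {"name": "Frequently requested item", "accounts": 20, "arr_usd": 600_000},
    {"name": "Custom date-range exports", "accounts": 12, "arr_usd": 1_800_000},
]

# Ranking by raw account count versus ranking by ARR affected.
by_count = sorted(requests, key=lambda r: r["accounts"], reverse=True)
by_arr = sorted(requests, key=lambda r: r["arr_usd"], reverse=True)

print("Top by account count:", by_count[0]["name"])
print("Top by ARR:", by_arr[0]["name"])
```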

Qualitative Feedback

Open-ended, narrative customer input: verbatims from calls, written QBR notes, Slack messages forwarded by CSMs. Qualitative feedback is high in context and low in comparability. It explains why a customer is frustrated, not just that they are.

Example: "We export to Excel every Monday morning because we can't build the view we need in the dashboard" is qualitative feedback. It has context, urgency, and a specific workaround, all of which a usage metric would miss.

Quantitative Feedback

Structured, measurable customer input: NPS scores, usage frequency, feature adoption rates, CSAT. Quantitative feedback is easy to compare across accounts and easy to trend over time, but low in context. It tells you what is happening, not why.

Example: Dashboard feature adoption at 30% across the customer base is quantitative feedback. It tells you the feature is underused. It doesn't tell you whether customers can't find it, don't understand it, or tried it once and found it insufficient.

Program Terms

These terms describe structured programs at the CS-Product seam. Without shared definitions, CS and Product have different expectations about what participation means, what the commitment is, and who owns what.

Early Access Tier

A formal program giving a subset of customers access to features before GA. Requires an application or invitation process, a feedback commitment from participating customers, and CS as the relationship owner. Early access is not the same as beta. It's a defined program with selection criteria and obligations.

Example: The early access program for the new automation engine has 20 slots. Applicants commit to two feedback sessions and a case study if the feature ships to GA. CS reviews applications. Product sets the selection criteria.

Customer Co-Design

A development practice where customers are involved in shaping a feature before it is built, through interviews, prototype reviews, or working sessions with the product team. CS selects participants and manages the relationship; Product owns the design decisions. Co-design is not a commitment to build what the customer asks for.

Example: The PM runs four co-design sessions for a new integration framework. CS selects participants with relevant technical expertise. The PM uses the sessions to validate the problem definition, not to gather feature requests.

Ride-Along

A practice where a PM or product designer joins a CSM on a live customer call or session, observing rather than leading. The most direct way for Product to hear unfiltered customer language about a problem. Ride-alongs are particularly valuable early in a feature's problem-definition phase. See product team customer call ride-alongs for how to structure and schedule them.

Example: A PM joins three CSM-led onboarding calls in the same month. She hears customers describe the same friction in their own words, language that is often quite different from the way it was framed in the VoC ticket. The difference shapes the feature spec.

Metrics Terms

These terms measure outcomes at the seam. Without shared definitions, CS and Product track the same concepts with different data sources and reach different conclusions.

Feature Adoption Rate

The percentage of eligible customers actively using a feature within a defined period after GA. "Active use" must be defined jointly before GA. A login doesn't count; completing a core workflow does. Low adoption on a recently shipped feature is a signal for both CS (investigate why customers aren't using it) and Product (investigate the onboarding experience).

Example: 90 days after GA, 34% of eligible accounts have completed at least one automated report using the new feature. The joint definition of "adopted" is: at least one completed report per week for two consecutive weeks.
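The joint definition in this example (at least one completed report per week for two consecutive weeks) is precise enough to express directly. The sketch below is illustrative: the function names and the per-account weekly counts are hypothetical.

```python
def is_adopted(weekly_reports: list[int], required_streak: int = 2) -> bool:
    """True if the account completed at least one report in each of
    `required_streak` consecutive weeks."""
    streak = 0
    for count in weekly_reports:
        streak = streak + 1 if count >= 1 else 0
        if streak >= required_streak:
            return True
    return False


def adoption_rate(accounts: dict[str, list[int]]) -> float:
    """Percentage of eligible accounts that meet the adoption definition."""
    if not accounts:
        raise ValueError("no eligible accounts")
    adopted = sum(is_adopted(weeks) for weeks in accounts.values())
    return 100 * adopted / len(accounts)


# Hypothetical completed-report counts per week since GA, one list per eligible account.
eligible = {
    "Account A": [0, 1, 2, 0],   # two consecutive active weeks: adopted
    "Account B": [1, 0, 1, 0],   # active, but never two weeks in a row
    "Account C": [0, 0, 0, 0],   # no completed reports
    "Account D": [3, 4, 1, 2],   # adopted
}
rate = adoption_rate(eligible)
print(rate)
```

Account B shows why the definition has to be written down: a looser rule such as "used it at least once" would count it as adopted and raise the rate.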

Time-to-Adoption

How long it takes a customer to begin using a new feature after it is released. Long time-to-adoption often indicates a communication or onboarding gap, not a product quality problem. When CS pre-briefs customers and runs activation campaigns, time-to-adoption shortens significantly.

Example: Median time-to-adoption for the new dashboard is 22 days. For accounts where the CSM ran a 30-minute walkthrough in the first week, median time-to-adoption is 9 days. The gap is the activation campaign's value.
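A comparison like this one comes down to a median over per-account day counts, split by cohort. In the sketch below the GA date, the first-use dates, and the walkthrough flags are all hypothetical, chosen so the medians match the 22-day and 9-day figures in the example.

```python
from datetime import date
from statistics import median

GA_DATE = date(2025, 3, 4)  # hypothetical GA date

# (first-use date, whether the CSM ran a walkthrough in week one); all hypothetical.
first_use = [
    (date(2025, 3, 11), True),
    (date(2025, 3, 13), True),
    (date(2025, 3, 16), True),
    (date(2025, 3, 26), False),
    (date(2025, 3, 29), False),
    (date(2025, 4, 2), False),
    (date(2025, 4, 13), False),
]

# Days from GA to first use, for all accounts and for the walkthrough cohort.
all_days = [(used - GA_DATE).days for used, _ in first_use]
walkthrough_days = [(used - GA_DATE).days for used, walked in first_use if walked]

overall_median = median(all_days)
walkthrough_median = median(walkthrough_days)
print(overall_median, walkthrough_median)
```

The median is used rather than the mean so that a single account that adopts months late does not distort the figure.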

Sunset Retention Rate

The percentage of customers who remain after a feature they relied on is deprecated or sunsetted. A low sunset retention rate signals that the migration path was insufficient, the advance notice was too short, or both. Tracking this metric by deprecation event builds a dataset for improving future sunsets.

Example: After sunsetting the legacy CSV import flow, 94% of affected accounts remain. The 6% churn is reviewed: three accounts cited the migration complexity as the primary reason for leaving.
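The calculation itself is a simple ratio over the accounts affected by one deprecation event. In the sketch below the count of 50 affected accounts is an assumption (the example gives only percentages), and the function name is illustrative.

```python
def sunset_retention_rate(affected: int, churned: int) -> float:
    """Percentage of affected accounts that remain after a sunset."""
    if affected <= 0:
        raise ValueError("affected must be positive")
    if not 0 <= churned <= affected:
        raise ValueError("churned must be between 0 and affected")
    return 100 * (affected - churned) / affected


# Hypothetical: 50 accounts used the legacy flow and 3 of them churned.
rate = sunset_retention_rate(affected=50, churned=3)
print(rate)
```

Recording `affected` and `churned` per deprecation event, rather than only the percentage, is what makes the later comparison across sunsets possible.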

Rework Analysis: The Vocabulary-Gap Cost Model

Most CS-Product teams treat vocabulary misalignment as a communication nuisance. It's actually a compounding cost. Every quarter a team operates without shared definitions on VoC, feature adoption, and beta, they run a feedback pipeline where a meaningful portion of CS input is categorized differently by PM and CSM, producing a synthesis that neither team fully trusts. Based on benchmarks across mid-market SaaS (ChurnZero 2024, Gainsight 2024), companies that spend one facilitated session aligning on the 10 highest-frequency terms at their seam report meaningfully fewer "what did you mean by that?" moments in CS-PM syncs within 60 days. The facilitation cost is two hours. The compounding benefit is every VoC session, every sprint review, and every customer conversation that follows without a definitional detour.

Alphabetical Quick Reference Index

| Term | Section |
|---|---|
| Advisory Board | CS & Customer Terms |
| Alpha | Product & Engineering Terms |
| ARR-Weighted Feedback | Feedback Terms |
| Backlog | Workflow Terms |
| Beta | Product & Engineering Terms |
| CSM (Customer Success Manager) | CS & Customer Terms |
| Customer Advocacy | CS & Customer Terms |
| Customer Co-Design | Program Terms |
| Customer Council | CS & Customer Terms |
| Customer Health Score | CS & Customer Terms |
| Customer Impact Score | Feedback Terms |
| Deprecation | Workflow Terms |
| Early Access Tier | Program Terms |
| Engineering Manager (EM) | Product & Engineering Terms |
| Feature Adoption Rate | Metrics Terms |
| Feature Request | Feedback Terms |
| GA (General Availability) | Product & Engineering Terms |
| JTBD (Jobs-to-be-Done) | Product & Engineering Terms |
| MVP (Minimum Viable Product) | Product & Engineering Terms |
| NPS (Net Promoter Score) | CS & Customer Terms |
| PM (Product Manager) | Product & Engineering Terms |
| PMM (Product Marketing Manager) | Product & Engineering Terms |
| Product Designer | Product & Engineering Terms |
| Qualitative Feedback | Feedback Terms |
| Quantitative Feedback | Feedback Terms |
| RC (Release Candidate) | Product & Engineering Terms |
| Release | Workflow Terms |
| Ride-Along | Program Terms |
| Roadmap | Workflow Terms |
| Sprint | Workflow Terms |
| Sunset | Workflow Terms |
| Sunset Retention Rate | Metrics Terms |
| Time-to-Adoption | Metrics Terms |
| VoC (Voice of Customer) | Feedback Terms |

Glossary Maintenance

A glossary nobody updates is a glossary nobody trusts. Assign one owner, typically the CS Ops lead or whoever runs the CS-Product alignment cadence, and review definitions quarterly. The quarterly review doesn't need to be exhaustive. Run through the high-frequency terms: VoC, feature adoption, backlog, beta, GA. These are the words that appear in every weekly sync, and they're the ones where definitions quietly drift when teams don't check.

Trigger a full redefinition session when: a new product line changes what "onboarded" or "adopted" means for a new customer type; a go-to-market shift changes the ICP and therefore which customers are in your beta programs; a new VP of Product or CS joins and brings prior-company vocabulary into the team's daily language. New leaders' definitions compound for months before anyone names the divergence. A glossary review in the first 30 days of a new leader joining is the most efficient way to surface and close those gaps.

Version-control the document. When a definition changes, record the date and reason. Verbal alignment doesn't survive headcount changes, but the written record does.

Still have questions about how to apply these terms? The FAQ below answers the ones that come up most often in CS-Product alignment reviews.

Frequently Asked Questions

Why do CS and Product teams need a shared glossary?

CS and Product teams default to their own professional vocabulary. Terms like "beta," "adoption," and "roadmap" carry different meanings depending on which team is using them. Without a shared written definition, both teams can participate in the same feedback rituals and still reach different conclusions. A shared glossary is the foundational layer before any CS-Product process improvement will hold.

What is the difference between MVP and beta in a B2B SaaS context?

An MVP is a learning vehicle, the smallest version of a feature that can be released to generate customer feedback. Beta is a structured pre-GA program where selected customers test a feature that is closer to release-ready. In CS-Product alignment, the distinction matters because beta participants are typically managed by CS with explicit commitments, while MVP participants are often design partners with a higher tolerance for incomplete functionality.

What does "ARR-weighted feedback" mean, and why does it change prioritization?

ARR-weighted feedback prioritizes customer requests by the combined ARR of the requesting accounts rather than account count. A feature request from accounts representing $2M ARR outranks the same request from accounts representing $200K ARR, even if the lower-ARR group includes more individual companies. This prevents a loud cohort of small accounts from crowding out a strategic retention risk involving fewer but larger customers.

How often should this glossary be reviewed?

At minimum, review the high-frequency terms (VoC, feature adoption, backlog, beta, GA) quarterly. Run a full review when a new VP of Product or VP CS joins, when the company launches a new product line that changes what "onboarded" or "adopted" means, or when a go-to-market shift changes the ICP. Definitions drift quietly; a quarterly check surfaces drift before it becomes a process failure.

What is NPS, and why is it insufficient as a standalone CS-Product metric?

NPS (Net Promoter Score) measures how likely a customer is to recommend the product on a 0-10 scale. It was developed by Fred Reichheld and introduced in a 2003 Harvard Business Review article. As a standalone metric at the CS-Product seam, NPS is too lagging and too broad: it tells you a customer is unhappy, but not which product area, not which workflow, and not what would fix it. NPS becomes useful when paired with CSM-led closed-loop follow-up that surfaces the specific friction behind the score.

What is JTBD, and how does it change what CSMs submit to the VoC pipeline?

JTBD (Jobs-to-be-Done) is a framework that defines what a customer is trying to accomplish rather than what feature they requested. Clayton Christensen's research established that customers "hire" products to complete specific jobs. In practice, when a CSM logs "customer wants better reporting," that's a feature request. When a CSM logs "customer needs to see cross-module data in a single view without exporting to a spreadsheet," that's a JTBD-framed VoC ticket, and it gives a PM a problem definition they can spec against rather than a solution they need to reverse-engineer.

Learn More

- What is CS & Product Alignment

- The VOC Pipeline: How CS Feeds Product

- Running Customer Beta Programs

- ARR-Weighted Feedback: Quantifying Customer Voice

- Customer Councils and Advisory Boards

- Sales-CS Alignment Glossary: shared vocabulary for the sales-to-CS handoff seam
