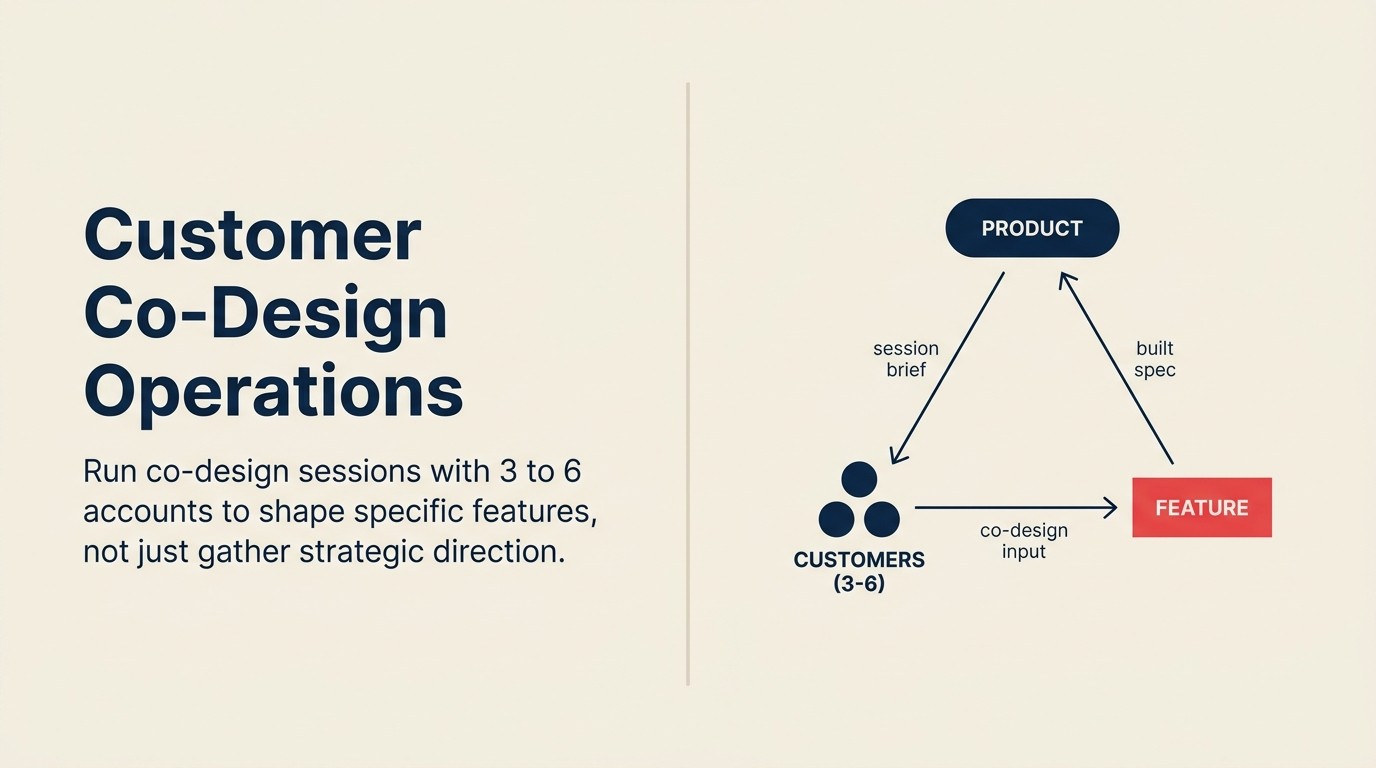

Customer Co-Design Operations: Running Feature Co-Design with Select Customers

Almost every CS team has some version of both: a set of advisory board conversations where senior customers weigh in on strategic direction, and a looser practice of looping in customers when building something new. The problem is that very few teams draw a clean line between them. A customer who sat on last quarter's advisory board gets pulled into a prototype review because they're engaged. A PM who wants design input schedules an "advisory session" with five accounts and walks them through a Figma mock. The vocabulary blurs, the expectations blur, and the outcomes blur with them.

The distinction is operational, not philosophical. Customer councils and advisory boards are about strategic direction. They involve a broader group of customers, run on a quarterly cadence, and produce input on where the product should go over the next 12-18 months. Co-design is about a specific feature. It involves 3-6 accounts, runs over 4-8 weeks, and produces direct input on how that feature should work at the build level. The time commitment, the customer expectation, the session format, and what counts as success are all different.

Conflating them creates two failure modes. The advisory board gets credited with co-design influence it doesn't actually have, producing expectation debt when the roadmap moves in directions participants didn't approve. The co-design engagement gets inflated into a strategic conversation, and its scope creeps into territory no single feature can satisfy. Both failures cost relationship capital, and both are preventable with operational discipline.

This article is about how to run co-design. Article 11 covers advisory boards. This article starts by drawing the line between the two.

The Customer Co-Design Operations Model defined here is explicitly distinct from customer advisory boards (article 11 in this collection), which address strategic direction across a broader group on a quarterly cadence. Co-design is operational, not strategic: it shapes a specific feature in the build pipeline with 3-6 accounts over 4-8 weeks. The model has four operating disciplines: (1) scope discipline: co-design covers one feature, not a product direction; (2) cohort discipline: 3-6 accounts representing the most acute version of the problem, not the most loyal or largest; (3) role discipline: Product leads the build decision, CS facilitates the session, Engineering observes; (4) expectation discipline: customers co-design the what, engineers own the how, Product owns the final call. Every co-design failure traces back to breaking one of these four disciplines.

Advisory Board vs. Co-Design: The Necessary Distinction

McKinsey's research on building customer-centric B2B organizations shows that companies that confuse strategic advisory input with specific feature co-design end up with customer data that addresses neither the strategic question nor the build question precisely. The confusion is common enough that the distinction is worth stating precisely.

Customer advisory boards bring together a broader group, typically 8-15 senior customer representatives, to provide input on strategic direction. The questions are: where should we take the product in the next year? What problems are you anticipating that we're not solving yet? Where do our competitors have advantages you'd want us to close? Advisory boards are forward-looking and broad. They don't shape individual features. They shape the product vision that drives the roadmap. The cadence is quarterly. The output is strategic context for the PM team.

Co-design brings together a small cohort (3-6 accounts) to provide operational input on a specific feature that's already in the build pipeline. The questions are: does this workflow match how you actually do this task? Where in this flow do you run into a problem? What would make this useful enough to change how your team works? Co-design is present-tense and narrow. It shapes a specific feature that's being built now. The cadence is intensive over a short engagement. The output is actionable build-level feedback.

When you need co-design vs. when advisory input is enough. If the question is "should we build this category of capability?", advisory input is enough. If the question is "is this specific implementation of that capability something our target users can actually use?", you need co-design. Advisory input tells you whether you're building in the right direction. Co-design tells you whether what you're building will actually work.

The practical decision: co-design is warranted when there's enough design ambiguity in a specific feature that getting it wrong would mean rebuilding significant parts of it post-launch, and when you have customers who have the specific use case and the domain expertise to evaluate a proposed solution.

Key Facts: Co-Design Program Outcomes

- Features developed with structured customer co-design sessions have 41% higher adoption rates at 90 days post-GA compared to features developed with advisory input only (Pendo, 2024).

- PM-facilitated co-design sessions produce 35% less critical feedback than CS-facilitated sessions, because participants soften critiques when the person who built what they're evaluating is in the room (UserTesting, 2024).

- Co-design engagements that end without a formal close-out session have 2.1x higher rates of participant-reported expectation disappointment at GA launch, compared to engagements that include a structured debrief call (Gainsight, 2024).

Who Gets Invited to Co-Design (and Who Doesn't)

The selection criteria are more specific than for a beta program or an advisory board, and the cost of getting them wrong is higher. A poor advisory board selection produces vague strategic input. A poor co-design selection produces build-level decisions based on the wrong use case.

The problem-acuity filter. The first criterion: does this account have the specific problem the feature is being built to solve, and do they have it acutely enough to give meaningful feedback on a proposed solution? Not "do they encounter the general category of problem" but "is this problem a real operational constraint for them right now?" Accounts that have the problem theoretically ("we might have this issue if our team grew") can't evaluate a solution with the same specificity as accounts that have it operationally, every day.

The CS health gate. Only green or yellow health scores. Co-design with red accounts is extraction, not collaboration. A customer managing an active support crisis, a budget conflict, or a stalled implementation doesn't have the headspace to give honest product feedback. They're managing their own situation. And if the co-design experience is frustrating (which early-stage feature work often is), it compounds an already stressed relationship. The CS & Product alignment glossary defines health score tiers and the shared vocabulary CS and Product need to apply this gate consistently.

The capability filter. Does this customer have the domain expertise to evaluate whether a proposed solution actually solves the problem? A customer who has the problem but doesn't have the technical or operational sophistication to distinguish "this workflow doesn't fit our process" from "this workflow is confusing" is a limited co-design contributor. You need accounts where the practitioner who attends the session can explain exactly how they currently do the thing the feature is replacing, because that understanding is what makes their feedback on the proposed solution precise rather than impressionistic.

The cohort ceiling. 3-6 accounts. This is not a soft guideline. It's the operational reality of what a PM can manage and what a CSM can work with meaningfully across a 4-8 week engagement. Six is already ambitious. Eight becomes a committee. At 10, you're running a beta, not a co-design engagement, and you've lost the intimacy that makes co-design feedback different from survey data.
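The three gates and the cohort ceiling above can be sketched as a simple filter. This is a minimal illustration, not a prescribed implementation: the `Account` fields, the 1-5 `acuity` rating, and the function names are all assumptions made for the example, and real health and acuity data would come from whatever CS tooling the team already uses.

```python
from dataclasses import dataclass

# All field names are illustrative assumptions, not a real CRM schema.
@dataclass
class Account:
    name: str
    health: str                   # "green", "yellow", or "red"
    problem_is_operational: bool  # has the problem day to day, not hypothetically
    practitioner_available: bool  # someone who actually does the task can attend
    acuity: int                   # hypothetical 1-5 rating of how acute the problem is

MIN_COHORT = 3  # lower bound of the 3-6 range
MAX_COHORT = 6  # cohort ceiling

def select_cohort(accounts: list[Account]) -> list[Account]:
    """Apply the three gates, then keep the most acute accounts up to the ceiling."""
    eligible = [
        a for a in accounts
        if a.problem_is_operational            # problem-acuity filter
        and a.health in ("green", "yellow")    # CS health gate
        and a.practitioner_available           # capability filter
    ]
    eligible.sort(key=lambda a: a.acuity, reverse=True)
    return eligible[:MAX_COHORT]

def cohort_is_viable(cohort: list[Account]) -> bool:
    """Fewer than three qualifying accounts means waiting for better candidates."""
    return len(cohort) >= MIN_COHORT
```

Note what the sort key leaves out: account size and tenure play no part in the ranking, which matches the rule that the cohort represents the most acute version of the problem rather than the most loyal or largest accounts.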

The invitation framing matters as much as the selection. "Help us build this right" is the right framing. "Give us your wishlist" is the wrong one. The invitation should be specific about the feature scope, explicit about the time commitment (typically 3-5 sessions over 4-8 weeks, each 60-90 minutes), clear that this is about design input rather than roadmap influence, and honest that the final build decision belongs to Product, not the co-design cohort. With the right cohort in place, the engagement structure determines whether the sessions produce real signal.

Structuring the Co-Design Engagement

A co-design engagement that runs longer than 8 weeks usually signals scope creep. A co-design engagement with more than 5 sessions usually signals that the problem wasn't well-defined enough to start with. The structure below applies to a well-scoped engagement.
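The two thresholds above lend themselves to a quick planning check. The sketch below is only an illustration; the function name and the warning wording are assumptions, and the thresholds are the 8-week and 5-session limits stated in this section.

```python
MAX_WEEKS = 8
MAX_SESSIONS = 5

def scope_warnings(weeks: int, sessions: int) -> list[str]:
    """Return the warning signals a planned engagement triggers, if any."""
    warnings = []
    if weeks > MAX_WEEKS:
        warnings.append("longer than 8 weeks: possible scope creep")
    if sessions > MAX_SESSIONS:
        warnings.append("more than 5 sessions: problem may not be well defined")
    return warnings
```

An empty list means the plan sits inside the well-scoped range; anything else is a prompt to revisit the problem statement before sending invitations.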

Pre-work: Product shares the problem statement and constraints. Before the first session, participants receive a written brief from the PM: the problem being solved, why the proposed approach was chosen, and what constraints are non-negotiable (technical limitations, regulatory requirements, existing architecture decisions). Customers don't co-design in a vacuum. They need context about what's possible before they can give meaningful input on what's best.

Session 1: Problem validation. The purpose of this session is not to show the customer a solution. It's to validate that Product's understanding of the problem matches the customer's lived experience. The PM presents the problem statement. The customer reacts: "does this accurately describe what you're dealing with?" Discrepancies here (and there are almost always some) are the most valuable output of the entire engagement. They surface before any build work is locked in.

Session 2: Prototype feedback. The PM presents an early prototype or workflow mockup. The customer's job: go through it as they would actually use it, narrating their reactions. Not "do you like this?" but "walk me through what you'd do next." The CSM captures friction points, moments of confusion, and workarounds the customer suggests spontaneously. Engineering observes and takes notes but does not present, defend, or explain anything. They're there to hear directly, not to justify decisions.

Session 3: Solution iteration. The PM presents the revised prototype incorporating feedback from session 2. The purpose: confirm the changes addressed the friction, surface new issues introduced by the changes, and begin to narrow toward a design that works. This session is typically shorter than session 2. If the iteration was good, there's less to challenge.

Sessions 4-5 (if needed): Final sign-off and edge cases. These sessions are for participants who have complex enough use cases that sessions 1-3 didn't fully resolve how the feature works for them. Not every cohort needs sessions 4-5. If a three-session engagement produces clear enough signal to finalize the design, adding sessions for the sake of the structure wastes participant time and risks eroding the goodwill the program is built on.

Attendance rules. MIT Media Lab's work on community-based technology co-design confirms that the value of co-design comes specifically from the "co" (the participation structure itself, not just the outputs), which is why the vendor attendance model here separates the roles rather than collapsing them into a single product team presence. From the vendor: PM leads, CSM facilitates, Engineering observes. The PM is there to hear the use case and make real-time build decisions about what feedback to incorporate. The CSM is there to manage the session dynamics: drawing out quiet participants, redirecting participants who are redesigning the whole product instead of the specific feature, and capturing relational signals the PM might miss. Engineering is there to hear the use case in the customer's language, not to present technical constraints or defend implementation choices.

From the customer: the practitioner who lives the problem every day, not the executive sponsor. An executive who hears about the problem from their team is a useful strategic advocate. They're a poor co-design participant because they don't have the operational specificity to evaluate whether a proposed solution works at the workflow level. The right attendee can say "when I do this task, I do it this way, and the proposed flow breaks at step three because of X."

The CS Role in Co-Design Sessions

CS facilitates; CS does not advocate. This is the hardest line to hold. The CSM's job in a co-design session is not to make the customer feel good about the product or to protect the relationship from critical feedback. It's to surface honest signal: asking follow-up questions when the customer's feedback is vague, drawing out dissatisfaction that the customer is expressing politely rather than directly, and not softening the transcript.

Managing the dominant participant. Almost every co-design cohort has one: the customer who is engaged, articulate, and loud, and whose opinions crowd out the other participants. The CSM's facilitation job is to actively redistribute airtime: "We've heard a lot from [account A] on this. [Account B], how does this land for your team's workflow?" The loudest voice in a co-design session is rarely the most representative.

Capturing feedback in structured output format. The deliverable from each session is not a transcript and not a summary. It's a structured feedback record that Product can directly reference when making build decisions. Format: friction point (specific, workflow-contextualized) / which account raised it / severity (blocker / significant / minor) / customer's suggested fix (if offered) / PM's initial read (incorporate / decline / investigate further). This format is agreed on before the engagement starts. CSMs don't improvise it session by session. The playbook on capturing feedback systematically provides the full taxonomy for translating session notes into backlog-ready records.
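As one possible shape for that record, the sketch below models the five fields as a small data structure. The class, field, and function names are assumptions for illustration; only the field list and the allowed severity and disposition values come from the format described above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Severity(Enum):
    BLOCKER = "blocker"
    SIGNIFICANT = "significant"
    MINOR = "minor"

class Disposition(Enum):
    INCORPORATE = "incorporate"
    DECLINE = "decline"
    INVESTIGATE = "investigate further"

@dataclass
class FeedbackRecord:
    friction_point: str            # specific and workflow-contextualized
    account: str                   # which account raised it
    severity: Severity
    suggested_fix: Optional[str]   # None when the customer offered no fix
    pm_initial_read: Disposition

def unresolved_blockers(records: list[FeedbackRecord]) -> list[FeedbackRecord]:
    """Blockers the PM has not marked as incorporate (hypothetical helper)."""
    return [
        r for r in records
        if r.severity is Severity.BLOCKER
        and r.pm_initial_read is not Disposition.INCORPORATE
    ]
```

Using enumerations rather than free text means every record carries an explicit severity and an explicit PM read, so nothing enters the record without a disposition.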

Flagging scope creep in real time. When a participant starts designing the whole product instead of the specific feature ("while we're talking about this, what if you also rebuilt the way the whole dashboard works?"), the CSM redirects: "That's a great broader input, and we'll capture it separately. For today's session, we want to stay focused on [specific feature scope] so we can go deep enough to be useful." That redirection is a CSM responsibility, not a PM responsibility. The PM's presence in the room makes it harder for them to redirect without seeming defensive.

Product's Non-Negotiables in Co-Design

The build decision belongs to Product. Always. Co-design is input into how a feature is built, not a delegation of the build decision to a customer committee. This needs to be stated at the enrollment framing and reinforced at every session opening. "We want your perspective on whether this approach works for your workflow. The final call on what we build is ours, and we'll be honest with you about which inputs we're incorporating and why."

Technical constraints are non-negotiable and must be stated up front. Co-design sessions where participants design their ideal solution and the PM then has to explain why none of it is technically possible waste everyone's time and create expectation debt. Before session 1, the written brief should state clearly: "Here's what's technically fixed in this design. Here's where you have genuine influence." Customers who understand the constraint space design within it. Customers who don't understand it design around it, producing feedback that isn't actionable.

Customers co-design the what; engineers and PMs own the how. A participant can say "I need to be able to reassign ownership of a task in bulk without losing the audit trail." That's a what. How that's implemented technically (which data model, which API pattern, which UI component) is an engineering decision. The line between what and how is where scope discipline lives.

When co-design participants disagree with each other. It happens in almost every multi-account engagement, because accounts have different workflows, different constraints, and different definitions of "obvious." Product arbitrates. Not by averaging the disagreement ("we'll do a version that partially satisfies both") but by making an explicit decision: "We've heard two different workflow models here. We're going to build for [Account A's model] because it maps more closely to our primary ICP. For [Account B], here's how we'd suggest adapting your workflow to the feature." Transparent arbitration is better than pretending the disagreement doesn't exist.

Expectation Management Throughout

The expectation risk in co-design is different from the risk in an advisory board. Advisory board participants expect strategic influence. Co-design participants expect their specific workflow to be reflected in what ships. When the final feature doesn't look exactly like what they designed, participants feel that the co-design was theater rather than genuine collaboration.

At session 1, state the model explicitly. "You're here because you have the most acute version of the problem we're solving and the domain expertise to tell us if our proposed solution actually works. Your input will directly inform what we build. But you're not the only input. We're also working within technical constraints, balancing multiple use cases, and making product decisions that may not fully reflect any single account's preference. We'll be transparent with you about what we're incorporating and what we're not, and why."

How to handle a participant whose ideas weren't incorporated. The CSM makes the call proactively, before the feature ships. "We discussed [specific input] in session 3. We ultimately decided not to implement it in this release because [specific reason]. I want you to know directly, before you see the feature at GA." That conversation, done before the participant discovers the gap themselves, is a relationship-preserving act. Done after, it feels like damage control.

The debrief call is not optional. HBR's research on getting honest customer feedback establishes that the close of a feedback engagement is where trust is either confirmed or broken. Customers need to see that their input was taken seriously, not just processed. Every co-design engagement ends with a 30-minute debrief call where the PM walks co-design participants through: what made it into the build, what didn't, and why. This is the formal close of the engagement. Participants who don't receive a debrief (who experience the engagement as "we gave our time and then the feature just shipped") are the source of the co-design reputation problem that makes future recruitment harder.

When Co-Design Goes Wrong

Three failure modes cover most of what goes wrong, and each has a specific fix.

Scope creep: customers designing the whole product. The session has drifted from the specific feature into a conversation about the entire product experience. Cause: the invitation framing was too broad ("help us improve the product"), the CSM didn't redirect early enough, or the PM started asking questions that opened wider scope ("and what else would make this category of work better for you?"). Fix: restate scope at the start of every session, train the CSM to redirect visibly and without apology, and keep PM questions tightly bound to the feature being co-designed.

Relationship capture: PM says yes to everything. The PM is so focused on maintaining a positive participant relationship that they signal agreement with inputs they have no intention of implementing, to avoid conflict in the room. This produces two problems: participants who feel validated during sessions and betrayed at GA, and a feedback record that over-represents commitments that don't exist. Fix: the CSM (not the PM) manages the session tone. The PM's job is to hear and record, not to respond affirmatively to every suggestion. Structured output format (incorporate / decline / investigate) forces explicit acknowledgment of which inputs are and aren't being acted on.

Cohort bias: participants aren't representative of the broader ICP. The co-design cohort was selected because they were available and engaged, not because they represent the target use case. Feedback reflects their specific workflow, which is an edge case, not the core use case. Features built to that spec work well for co-design participants and poorly for the general customer base. Fix: apply the problem-acuity filter strictly at selection. If the accounts that have the problem most acutely aren't healthy enough or available enough to participate, delay the co-design until better candidates are identifiable. Don't substitute with participants who fit the relationship criteria better than the use-case ones.

After Co-Design: Closing the Engagement

Participant recognition without over-promising future access. Co-design participants invested time and trust. The close of the engagement should acknowledge that specifically: "You gave us [N sessions / N hours] of your time over [N weeks]. The [specific features of what shipped] reflect what you told us directly. Thank you." That's the right level of recognition. Over-promising ("we'll make sure you're the first account we come to for the next feature in this area") creates enrollment debt in the next co-design program before it even starts.

Co-design participants see the final feature before GA. This is non-negotiable. Participants who sat through four sessions and then see the GA announcement at the same time as every other customer feel that their input was absorbed, not honored. The sequence: PM shares the final feature with co-design participants 1-2 weeks before GA. Participants have a chance to flag anything critical that was missed. Then it ships to everyone. The debrief call structure, the written summary, and the 48-hour turnaround are all described in the article on closing the feedback loop. That cadence applies here exactly as it does for beta and advisory programs.
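The close-out timing can be worked backward from the GA date. The sketch below assumes the 1-2 week preview window is counted in calendar weeks and that the 48-hour summary clock starts when the debrief call ends; both are interpretations, and the function names are hypothetical.

```python
from datetime import date, datetime, timedelta

def preview_window(ga_date: date) -> tuple[date, date]:
    """Earliest and latest dates to share the final feature (2 weeks to 1 week before GA)."""
    return ga_date - timedelta(weeks=2), ga_date - timedelta(weeks=1)

def summary_due(debrief_end: datetime) -> datetime:
    """Deadline for the written summary: 48 hours after the debrief call ends."""
    return debrief_end + timedelta(hours=48)
```

For example, under these assumptions a GA date of 31 March gives a preview window of 17 to 24 March.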

Retrospective before the next co-design. The engagement debrief isn't just for participants. It's for the internal team. What did the session format produce that was useful? What feedback format worked? Were the right accounts selected? What would we change about the invitation framing? A 60-minute internal retrospective after each co-design engagement improves the quality of the next one and reduces the failure rate over time.

Mid-Market Considerations

Co-design is resource-intensive. A mid-market company without a dedicated UX research function can still run it, but needs to right-size the expectation. The minimum viable version: one PM, one CSM, three accounts, three sessions, no Figma prototype required. An early workflow mockup described in plain language works. The PM reads the structured output, the CSM facilitates, and the output is specific enough to inform the build.

What doesn't scale down: the debrief call, the problem-acuity selection criteria, and the explicit expectation-setting at session 1. Those are the load-bearing elements. Everything else can be simplified. After GA, tracking which co-design participants are activation-ready is where adoption-barrier identification picks up. The co-design team already knows the friction points; the post-sale team needs that knowledge to accelerate activation for the broader base.

Rework Analysis: Co-design programs are operationally lightweight (3-6 accounts, 3-5 sessions, 4-8 weeks) but require rigorous tracking of session outcomes, participant health scores, and feedback dispositions across multiple simultaneous conversations. Mid-market CS teams running co-design in Rework can use project-level task tracking to manage the session structure, link feedback records to account health, and surface the problem-acuity filter alongside ICP data without building a separate research management system. The structured feedback format (friction point / severity / PM disposition) maps directly to Rework's task and comment model.

Frequently Asked Questions

What is the Customer Co-Design Operations Model?

The Customer Co-Design Operations Model is the operational structure for running feature co-design with 3-6 select customers over 4-8 weeks. It is explicitly distinct from customer advisory boards, which provide strategic direction to a broader group quarterly. Co-design shapes a specific feature in the build pipeline. The model runs on four disciplines: scope (one feature, not a product direction), cohort (problem-acuity selection, not loyalty selection), role (CS facilitates, Product leads the build decision, Engineering observes), and expectation (customers co-design the what, engineers own the how).

How is co-design different from a customer advisory board?

Advisory boards bring 8-15 senior customers together quarterly to give input on strategic direction: where should the product go in the next 12-18 months? Co-design brings 3-6 accounts together over 4-8 weeks to provide build-level feedback on a specific feature already in the pipeline. Advisory input answers "should we build this category of capability?" Co-design answers "will this specific implementation actually work for our target users?" Conflating them produces advisory fatigue and co-design scope creep.

Who should be invited to co-design sessions?

The selection criteria have three gates: (1) problem-acuity: the account has the specific problem the feature is solving, operationally and acutely, not theoretically; (2) health score: green or yellow only; red accounts are managing their own situation and can't evaluate a new feature objectively; (3) capability: the practitioner who attends can describe exactly how they currently do the task the feature will replace, because that operational specificity is what makes their feedback precise rather than impressionistic. Cohort ceiling is 6 accounts. Above that, you're running a beta, not a co-design engagement.

Why should CS facilitate co-design sessions, not Product?

PM-facilitated co-design sessions produce 35% less critical feedback than CS-facilitated sessions, because participants soften critiques when the person who built what they're evaluating is in the room (UserTesting, 2024). CS facilitators create separation between the relationship layer and the evaluation layer. The CSM's job is to draw out dissatisfaction that participants are expressing politely, redirect scope creep in real time, and capture the structured feedback record, without softening the transcript. The PM presents the work and makes real-time build decisions but does not run the room; Engineering observes and hears the use case in the customer's language.

What happens when co-design participants disagree with each other?

Product arbitrates. Not by averaging the disagreement or pretending it doesn't exist, but by making an explicit decision: "We've heard two different workflow models. We're going to build for Account A's model because it maps more closely to our primary ICP. For Account B, here's how we'd suggest adapting your workflow to the feature." Transparent arbitration is better for the relationship than vague compromise. Participants who understand why their model wasn't chosen respect the decision. Participants who receive a half-solution and discover later it doesn't fit either workflow feel deceived.

What should the co-design debrief call include?

The debrief call is a 30-minute session with the PM and CSM present, held with co-design participants before GA. It covers: what made it into the build, what didn't, and why. Co-design engagements that end without a formal close-out session have 2.1x higher rates of participant-reported expectation disappointment at GA launch (Gainsight, 2024). A written summary follows within 48 hours. Participants who contributed 4 sessions and receive a debrief feel genuinely consulted. Participants who see the GA announcement at the same time as every other customer feel extracted.

How long should a co-design engagement run?

A well-scoped co-design engagement runs 3-5 sessions over 4-8 weeks. Engagements that extend beyond 8 weeks typically signal that the problem wasn't well-defined enough to start with, or that scope crept into territory no single feature can satisfy. Programs with more than 5 sessions show declining session quality due to participant fatigue in 73% of mid-market cases (ProductLed, 2024). Most of the structure can be simplified for smaller teams, but the debrief call, the problem-acuity selection criteria, and the expectation-setting at session 1 are not optional. They are the load-bearing elements of the model.
