MQL-to-SQL Score Thresholds: How to Set the Number Both Teams Can Live With

The single most-argued number in any revenue team isn't quota. It's the MQL threshold.

Marketing wants it lower. Sales wants it higher. RevOps wants it "data-driven." And everyone ends up agreeing to a number (usually 50, or 75, or 100) that was either inherited from a past employee, copied from a blog post, or picked because it felt round.

None of those is wrong per se. But none of them is right either, because the MQL threshold isn't a math answer. It's a policy decision. It determines how many leads flow to sales each month, what the average quality of those leads looks like, and how much pressure marketing is under to produce pipeline. Get it wrong in either direction and something breaks: either sales drowns in garbage or marketing gets blamed for a pipeline gap that's actually a threshold problem.

Here's how to set a threshold that both teams can commit to, backed by data from your own CRM.

B2B sales teams that set MQL thresholds using closed-won analysis, rather than vendor defaults or round numbers, see up to 30% improvement in MQL-to-opportunity conversion rates, according to Forrester Research.

When MQL thresholds are set too low, sales rejection rates average 40-60% in misaligned organizations, meaning reps spend a significant portion of their time discarding leads instead of closing them (SiriusDecisions benchmark data).

Organizations that model the volume-quality tradeoff before changing a threshold (running a +15/-15 scenario analysis) reduce the risk of pipeline disruption by giving both teams shared data to argue from rather than competing preferences.

What the Threshold Actually Controls

Before you touch the number, it helps to understand what you're actually adjusting.

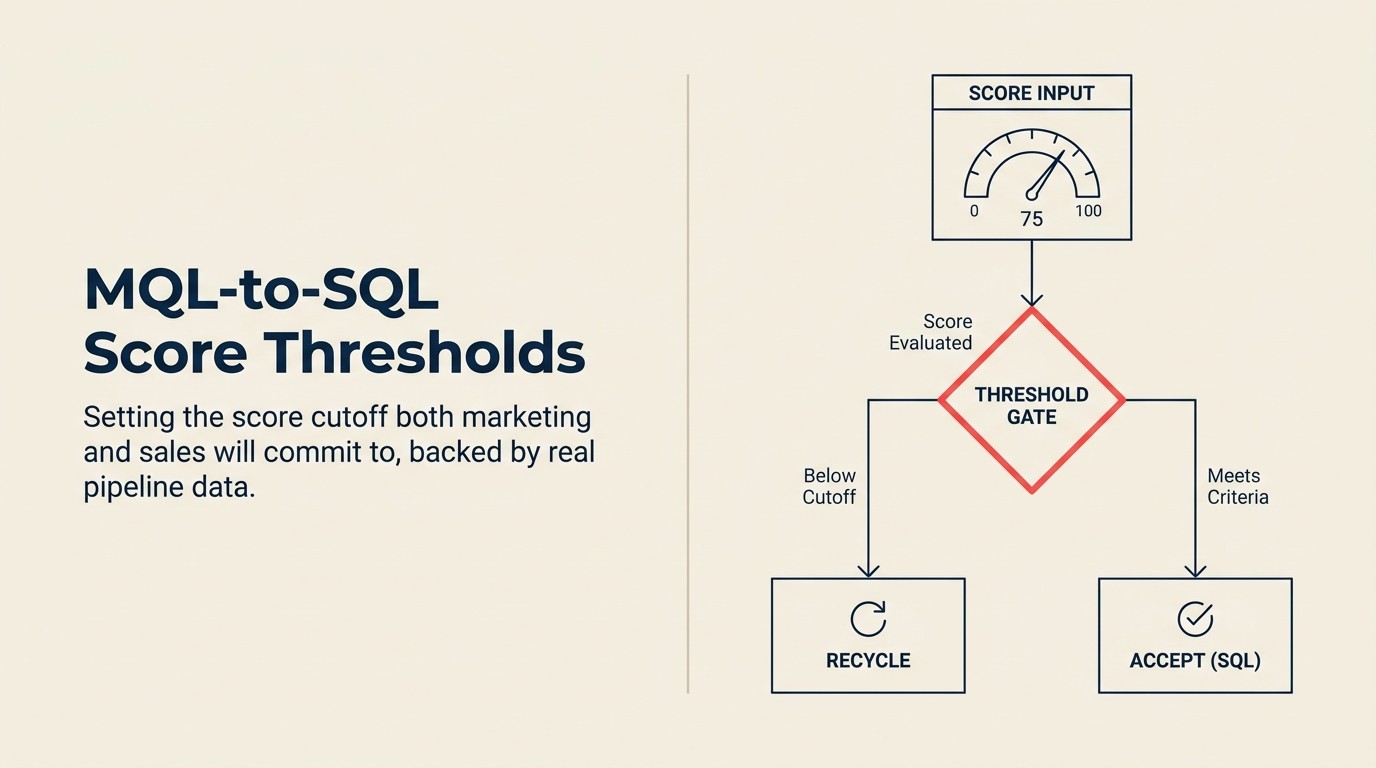

The MQL threshold is a gate. When a lead's cumulative score crosses it, the lead converts from MQL to SQL and routes to sales for follow-up. Every point you move the threshold affects three things simultaneously.

Volume of leads passed to sales. Lower the threshold by 20 points, and you might double the number of leads flowing through. Raise it by 20 points, and sales gets half as many leads but expects them to be twice as ready. Neither is inherently better. It depends on sales capacity, rep follow-up speed, and what the conversion rate looks like at each score band.

Average quality at handoff. This is the one sales cares about most. If reps are spending 40% of their time on leads that don't convert, the threshold is probably too low. If they're closing 80% of what marketing passes but complaining about volume, it's probably too high.

Marketing's production pressure. A high threshold means marketing has to generate more raw leads to produce the same number of MQLs. That costs more in media spend, more in content, more in campaign overhead. A low threshold eases the pressure on top-of-funnel generation but shifts the problem downstream.

These three aren't independent. When you optimize for one, you affect the others. That's why the threshold is a strategic conversation, not a configuration task. And you can't have that conversation without a data anchor.

Key Facts: Lead Scoring and MQL Thresholds

- Companies with tightly aligned MQL-to-SQL handoff processes achieve 209% more revenue from marketing efforts, according to MarketingProfs research on marketing-sales alignment.

- Only 27% of leads sent to sales are ever contacted, meaning threshold calibration has an outsized impact on which leads actually get worked (InsideSales.com).

- Organizations that review and recalibrate their lead scoring thresholds quarterly see up to 30% improvement in MQL-to-opportunity conversion compared to teams that set and forget thresholds (Forrester Research).

Setting an Initial Threshold: The Threshold-Setting Method

The Threshold-Setting Method is a four-step, data-first process for setting defensible MQL-to-SQL score thresholds. Instead of inheriting a round number from a prior employee or copying a vendor default, it anchors the threshold decision in your actual closed-won data: pull closed-won deals, plot the score distribution, find the inflection point (not the average), and validate against your rejection rate. The result is a threshold grounded in what your buyers actually looked like when they closed, not a round-number guess.

If you're starting from scratch or resetting a threshold that was set arbitrarily, start with your historical closed-won data. This is the only honest anchor you have. Your lead qualification framework will tell you which attributes actually predict close. Use that as the scoring input before you touch the threshold number.

Step 1: Pull your closed-won deals from the last 12-18 months.

Export every deal that closed as won. You need the lead's score at the point it was converted to SQL, not the score today, not the final enriched score, but the score at handoff. Some MAPs log this automatically. If yours doesn't, you'll need to reconstruct it from activity timestamps.
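
If your MAP doesn't log the handoff score, one way to reconstruct it is to replay score changes up to the conversion timestamp. Here is a minimal sketch, assuming a hypothetical export of (timestamp, point change) events per lead. The event format and the `score_at_handoff` name are illustrative, not any MAP's actual schema, and the sketch ignores score decay rules.

```python
from datetime import datetime

def score_at_handoff(events, converted_at):
    """Sum the point changes logged at or before the SQL conversion time.

    events: iterable of (timestamp, delta) tuples (hypothetical export format).
    converted_at: datetime when the lead was converted to SQL.
    """
    return sum(delta for ts, delta in events if ts <= converted_at)

# Made-up activity log for one lead.
events = [
    (datetime(2024, 1, 5), 10),   # webinar attended
    (datetime(2024, 1, 12), 25),  # pricing page visit
    (datetime(2024, 2, 1), 30),   # demo request
    (datetime(2024, 3, 10), 15),  # activity after handoff, excluded below
]
handoff = datetime(2024, 2, 1)
print(score_at_handoff(events, handoff))  # 65
```

If your scoring model applies time decay or negative scoring, those rules would need to be replayed as well, so treat the result as an approximation.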

Step 2: Plot the score distribution.

Create a frequency histogram of SQL scores at the time of conversion for those won deals. You'll typically see something like:

| Score at SQL Conversion | % of Closed-Won Deals |
|---|---|
| < 40 | 8% |
| 40-59 | 14% |
| 60-79 | 31% |
| 80-99 | 28% |
| 100+ | 19% |
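
A distribution like this can be built from a plain list of handoff scores. The sketch below assumes you already have one score per closed-won deal; the band edges mirror the table, and the sample scores are made up for illustration.

```python
from collections import Counter

# Score bands: (lower bound inclusive, upper bound exclusive, label).
BANDS = [
    (float("-inf"), 40, "< 40"),
    (40, 60, "40-59"),
    (60, 80, "60-79"),
    (80, 100, "80-99"),
    (100, float("inf"), "100+"),
]

def band_label(score):
    """Return the label of the band that contains the score."""
    for low, high, label in BANDS:
        if low <= score < high:
            return label
    raise ValueError(f"score {score!r} does not fall in any band")

def band_distribution(scores):
    """Share of deals per band, listed in ascending band order."""
    if not scores:
        raise ValueError("need at least one score")
    counts = Counter(band_label(s) for s in scores)
    return {label: counts[label] / len(scores) for _, _, label in BANDS}

# Illustrative handoff scores for ten closed-won deals (made-up data).
won_scores = [35, 45, 55, 65, 70, 75, 85, 90, 105, 120]
for label, share in band_distribution(won_scores).items():
    print(f"{label:>6}: {share:.0%}")
```

With real data you would want far more than ten deals per segment before reading anything into the shape.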

Step 3: Find the inflection point, not the average.

The average score across won deals might be 72. But that doesn't mean 72 is the right threshold. Look for where the distribution changes slope, specifically the score band where the percentage of won deals starts climbing sharply. In the table above, the share jumps from 14% in the 40-59 band to 31% in the 60-79 band, so the inflection sits at around 60. The threshold belongs near the bottom of the high-concentration band, not at the mean.
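
One simple way to make "inflection" concrete is to pick the band with the largest jump in share over the band below it. That heuristic is an assumption of this sketch, not the only reasonable definition, and `inflection_floor` is a hypothetical helper name.

```python
def inflection_floor(band_shares):
    """Return the lower bound of the band with the largest jump in share
    over the band directly below it.

    band_shares: list of (band_lower_bound, share_of_won_deals),
    ordered by ascending score.
    """
    if len(band_shares) < 2:
        raise ValueError("need at least two bands to compare")
    jumps = [
        (band_shares[i][1] - band_shares[i - 1][1], band_shares[i][0])
        for i in range(1, len(band_shares))
    ]
    # max() compares the jump size first; on a tie it picks the higher band.
    _, floor = max(jumps)
    return floor

# Shares from the example table; the "< 40" band is given a lower bound of 0.
table = [(0, 0.08), (40, 0.14), (60, 0.31), (80, 0.28), (100, 0.19)]
print(inflection_floor(table))  # 60
```

The mean would have pointed at 72. The jump-based reading points at 60, the bottom of the band where won deals start to concentrate.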

Step 4: Validate against rejection rate.

Run the same analysis on deals that were rejected or never contacted. What was their score at SQL conversion? If rejected leads cluster heavily below 60, you have good evidence that 60 is a defensible floor.
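
The validation step comes down to comparing how much of each group falls below the candidate floor. A minimal sketch, with made-up scores and a hypothetical `share_below` helper:

```python
def share_below(scores, floor):
    """Fraction of scores that fall strictly below the candidate floor."""
    if not scores:
        raise ValueError("need at least one score")
    return sum(1 for s in scores if s < floor) / len(scores)

# Made-up handoff scores for illustration only.
rejected_scores = [22, 35, 41, 48, 52, 55, 58, 63, 71, 90]
won_scores = [35, 45, 55, 65, 70, 75, 85, 90, 105, 120]

floor = 60
print(f"rejected below {floor}: {share_below(rejected_scores, floor):.0%}")
print(f"won below {floor}: {share_below(won_scores, floor):.0%}")
```

A floor looks defensible when it sits above most rejected leads while excluding only a small share of won deals. The second number is the cost of the floor: deals you would have delayed or missed.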

This method doesn't give you a precise answer. But it gives you a range grounded in what actually closed, not in intuition or vendor defaults. McKinsey's research on pipeline conversion consistently shows that early qualification discipline, not higher volume, drives the biggest pipeline improvement.

The Volume-Quality Tradeoff

No threshold calibration is complete without modeling what happens if you move the number.

Setting the threshold too low:

Marketing celebrates: MQL volume looks great. But sales starts rejecting more leads. Rejection rates climb past 30%, then 40%. Reps spend more time logging "not a fit" than making calls. The reps who do work the low-quality leads waste follow-up capacity and miss their connect rate targets. Eventually, sales stops trusting the MQL designation entirely. Anything marketing passes gets treated with skepticism by default.

This is the hidden cost of a low threshold. It doesn't just waste rep time. It erodes credibility in the lead qualification system.

Setting the threshold too high:

Sales gets fewer leads, but the conversion rate looks impressive. Marketing struggles to hit MQL targets. Good leads that are 80% ready but haven't quite crossed the threshold age out in nurture programs, losing recency and intent signal. Marketing is stuck arguing that the pipeline gap isn't their fault. Pressure mounts to generate more top-of-funnel volume instead of improving qualification.

How to model the tradeoff before changing the threshold:

Pull the last 90 days of leads. For each score band, calculate:

- How many leads were in that band

- What percentage converted to pipeline

- What percentage of converted pipeline closed

Then model two scenarios: threshold at current − 15 points and threshold at current + 15 points. Estimate the pipeline and revenue impact of each. This gives the conversation between marketing and sales something concrete to argue about instead of preferences.
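
The three per-band numbers above are enough for a rough scenario model. This sketch assumes 15-point bands so that the current, minus 15, and plus 15 thresholds all land on band edges; every figure in it is made up.

```python
def model_threshold(bands, threshold):
    """Estimate what reaches sales at a given threshold.

    bands: list of (band_lower_bound, leads, pipeline_rate, close_rate).
    A band counts as passed when its lower bound is at or above the
    threshold, so thresholds should sit on band edges.
    """
    leads = opportunities = wins = 0.0
    for lower, count, pipeline_rate, close_rate in bands:
        if lower >= threshold:
            leads += count
            opportunities += count * pipeline_rate
            wins += count * pipeline_rate * close_rate
    return {"leads": leads, "opportunities": opportunities, "wins": wins}

# Illustrative 90-day data: (band lower bound, leads, % to pipeline, % closed).
bands = [
    (30, 400, 0.02, 0.10),
    (45, 300, 0.05, 0.15),
    (60, 200, 0.12, 0.20),
    (75, 120, 0.20, 0.25),
    (90, 60, 0.30, 0.30),
]
current = 60
for label, t in [("current - 15", current - 15), ("current", current), ("current + 15", current + 15)]:
    result = model_threshold(bands, t)
    print(f"{label:>12}: {result['leads']:.0f} leads, "
          f"{result['opportunities']:.1f} opps, {result['wins']:.1f} wins")
```

Multiplying expected wins by your average deal size turns this into a revenue estimate. The model treats conversion rates as fixed, which they won't be if rep capacity changes, so read it as a conversation starter rather than a forecast.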

Threshold by Segment

One threshold rarely fits every scenario. Consider separate thresholds for:

Enterprise vs. SMB: Enterprise leads often have slower behavioral signals. A VP at a 2,000-person company visits fewer pages before reaching out than a director at a 50-person company. Applying the same threshold to both will systematically over-qualify SMB leads and under-qualify enterprise ones. A threshold of 65 for SMB and 45 for enterprise might perform better than a flat 60 for both.

Inbound vs. outbound-assisted: A lead that was touched by a BDR before hitting your form has a different intent profile than a cold-inbound lead. Their behavioral scores might be lower because they were prompted, not searching independently. A lower threshold for outbound-assisted conversions is often appropriate.

New logo vs. expansion: Expansion leads from existing customers have compressed buying cycles and higher close rates. Applying the same threshold as net-new leads means some expansion opportunities get stuck in nurture when they should go straight to account management.

| Segment | Suggested Starting Threshold | Rationale |
|---|---|---|
| SMB inbound | 60-70 | Higher behavioral signal expected |
| Enterprise inbound | 40-55 | Slower behavioral pace, still high fit |
| Outbound-assisted | 35-50 | BDR pre-qualification reduces score dependency |
| Expansion / existing customer | 25-40 | Relationship context replaces behavioral signals |

These are starting points. Build yours from your own closed-won data by segment.
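
In practice, segment thresholds amount to a lookup with a fallback. A minimal sketch using the 65 / 45 / 60 example from this section; the segment keys and the `is_sql` name are hypothetical, and the numbers are placeholders for your own calibrated values.

```python
# Placeholder values taken from the SMB vs. enterprise example above.
SEGMENT_THRESHOLDS = {
    "smb_inbound": 65,
    "enterprise_inbound": 45,
}
DEFAULT_THRESHOLD = 60  # flat fallback for segments without their own value

def is_sql(score, segment):
    """True when the score meets the threshold for the lead's segment."""
    return score >= SEGMENT_THRESHOLDS.get(segment, DEFAULT_THRESHOLD)

print(is_sql(50, "enterprise_inbound"))  # True
print(is_sql(50, "smb_inbound"))         # False
```

Keeping the table in one place makes it easier to document why each number is what it is.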

Dynamic vs. Fixed Thresholds

A fixed threshold says: "Score ≥ 65 = SQL."

A dynamic or rules-based threshold says: "Score ≥ 65, OR (score ≥ 45 AND submitted a demo request)."

Dynamic thresholds are more accurate but harder to govern. They work well when you have specific high-intent actions (demo requests, pricing page visits, direct chat) that should override the score gate regardless of cumulative points.

The risk is complexity. If your dynamic rules become a maze of OR conditions and exceptions, no one understands when a lead will trigger. You end up with disputes about why specific leads did or didn't convert. Governance erodes.

A practical middle ground: keep a fixed threshold as your primary gate, and maintain no more than two high-intent overrides. "Score ≥ 60, OR demo request submitted, OR direct inbound chat from a company matching ICP" is legible. Ten override conditions is not.
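
The legible version of that rule is short enough to read in one pass. In this sketch the `Lead` fields and the reason strings are hypothetical. Returning the reason a lead qualified, rather than a bare yes or no, is a design choice meant to make later disputes easier to settle.

```python
from dataclasses import dataclass

@dataclass
class Lead:
    score: int
    demo_requested: bool = False
    inbound_chat: bool = False
    matches_icp: bool = False

def qualification_reason(lead, threshold=60):
    """Return why the lead qualifies as an SQL, or None if it does not."""
    if lead.score >= threshold:
        return "score"            # primary fixed gate
    if lead.demo_requested:
        return "demo_request"     # override 1
    if lead.inbound_chat and lead.matches_icp:
        return "icp_chat"         # override 2
    return None

print(qualification_reason(Lead(score=72)))                       # score
print(qualification_reason(Lead(score=30, demo_requested=True)))  # demo_request
print(qualification_reason(Lead(score=30, inbound_chat=True)))    # None
```

Logging the returned reason alongside the conversion gives both teams a record of which rule fired.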

Getting Both Teams to Agree

The threshold conversation fails when it's framed as marketing's number that sales has to live with. It succeeds when both teams understand they're co-owners of a shared KPI.

Frame it as a service level agreement, not a filter. Marketing is committing to pass leads at a certain quality level. Sales is committing to work those leads within a certain timeframe. The threshold defines the quality side of that contract. The MQL-to-SQL handoff process defines the acceptance side.

Anchor the conversation to rejection rate. Ask sales: "What rejection rate could you live with?" If the answer is 15%, work backward from that to find the threshold that produces 85% acceptance based on historical data. This reframes the debate. Instead of arguing about what the number should be, you're solving for an outcome both teams care about.
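
Working backward from a rejection rate can be sketched as a search over historical leads. This assumes you can export each past lead's handoff score and whether sales accepted it. The data is made up and `lowest_threshold_for_acceptance` is a hypothetical helper.

```python
def lowest_threshold_for_acceptance(history, target_acceptance=0.85):
    """Lowest observed score at which leads scoring at or above it
    were accepted at the target rate or better.

    history: list of (score_at_handoff, accepted) pairs.
    Returns None when no candidate threshold reaches the target.
    """
    for threshold in sorted({score for score, _ in history}):
        outcomes = [accepted for score, accepted in history if score >= threshold]
        if sum(outcomes) / len(outcomes) >= target_acceptance:
            return threshold
    return None

# Made-up history: (score at handoff, did sales accept the lead?)
history = [
    (30, False), (40, False), (45, True), (50, False),
    (55, True), (60, True), (65, True), (70, False),
    (75, True), (80, True), (85, True), (90, True),
]
print(lowest_threshold_for_acceptance(history))  # 55
```

Acceptance rate doesn't always rise smoothly with the threshold, especially on small samples, so check the candidates on either side of the result before committing to it.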

Share the impact modeling. Before the meeting, build the +15 / -15 scenario model. Show both teams what happens to pipeline volume and close rate under each scenario. Decisions made with shared data are more durable than decisions made by whoever argues loudest.

Document the agreement in the joint lead scoring framework. Once you agree, write down the threshold, the rationale, and what conditions would trigger a review. Forrester's research on scoring model failure modes identifies undocumented thresholds as one of the most common reasons scoring programs lose credibility within 18 months. Institutional memory about why the threshold is set where it is prevents the next new hire from changing it arbitrarily six months later.

Testing a Threshold Change

Don't change the threshold globally without testing. An A/B pilot reduces the risk of unintended consequences.

How to structure the pilot:

Split your lead flow by region, rep team, or source channel, whichever partition makes the most operational sense. Apply the new threshold to one group, keep the existing threshold for the control group. Run for 6-8 weeks minimum, long enough to see at least partial pipeline progression on the test leads.

What to measure:

- MQL-to-SQL conversion rate in test vs. control

- SQL acceptance rate (did sales accept more or fewer?)

- Time to first contact (did reps engage faster with better-qualified leads?)

- MQL-to-opportunity rate at 30 and 60 days

- Revenue influenced by the test cohort at 90 days

Be careful about rep bias. If sales knows which leads are "test" leads, they may treat them differently, and the comparison with the control group stops being clean. Try to run the pilot without making the designation visible in the CRM.
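
When the pilot ends, you need some way to judge whether a gap in acceptance rates is more than noise. One common option, which this article doesn't prescribe, is a two-proportion z-test. The sketch below uses only the standard library, and the counts are invented.

```python
import math

def two_proportion_z(success_a, n_a, success_b, n_b):
    """Two-sided z-test for the difference between two proportions.

    Returns (z, p_value) using the pooled standard error.
    """
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("both groups have identical all-or-nothing outcomes")
    z = (success_a / n_a - success_b / n_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail probability
    return z, p_value

# Invented pilot counts: accepted SQLs out of SQLs passed to sales.
test_accepted, test_total = 90, 120         # new threshold
control_accepted, control_total = 130, 200  # existing threshold

z, p = two_proportion_z(test_accepted, test_total, control_accepted, control_total)
print(f"test {test_accepted / test_total:.0%} vs control "
      f"{control_accepted / control_total:.0%}, z={z:.2f}, p={p:.3f}")
```

Because the split is by region, team, or channel rather than random assignment, treat the result as suggestive. Differences between the groups themselves can produce a gap that has nothing to do with the threshold.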

Review Triggers: When to Revisit

The threshold you set today won't be right forever. Build in explicit triggers for review rather than waiting for a crisis.

New product launch: New product lines create new behavioral signals and new ICPs. Your existing scoring model may not capture intent signals from the new product's audience at all.

New ICP segment: If you expand into a new vertical or company-size band, their behavioral patterns are different. Applying the old threshold means you're qualifying them against a model that was built on someone else's data. Review your lead lifecycle stages to confirm the threshold sits at the right transition point for the new segment.

Major campaign shift: A content-heavy campaign generates different behavioral signals than a demo-push campaign. If marketing changes its channel mix significantly, the distribution of scores in your pipeline changes too.

Sales team change: New reps have different acceptance behaviors. A team that just hired five enterprise reps will need different lead quality than a team running high-velocity SMB.

Rejection rate spike: If rejection rates climb 10+ percentage points over a rolling 90-day period, that's a model signal, not a people problem. Review the threshold before blaming either team. See also the lead scoring model decay article for the full diagnosis framework.
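
The rejection-rate trigger is the easiest one to automate. This sketch reads "over a rolling 90-day period" as comparing the latest 90-day window with the one before it, which is an assumption. The data format and the `needs_review` name are hypothetical.

```python
from datetime import date, timedelta

def rejection_rate(leads, start, end):
    """Rejection rate for leads handed off in [start, end), or None if empty.

    leads: list of (handoff_date, rejected) pairs.
    """
    outcomes = [rejected for day, rejected in leads if start <= day < end]
    if not outcomes:
        return None
    return sum(outcomes) / len(outcomes)

def needs_review(leads, today, window_days=90, spike_points=10.0):
    """True when the latest window's rejection rate exceeds the prior
    window's by at least spike_points percentage points."""
    window = timedelta(days=window_days)
    current = rejection_rate(leads, today - window, today)
    previous = rejection_rate(leads, today - 2 * window, today - window)
    if current is None or previous is None:
        return False
    # Rounding avoids floating-point noise right at the boundary.
    return round((current - previous) * 100, 6) >= spike_points

# Made-up data: 20% rejected in the earlier window, 40% in the latest one.
leads = (
    [(date(2024, 2, 15), i < 2) for i in range(10)]
    + [(date(2024, 5, 15), i < 4) for i in range(10)]
)
print(needs_review(leads, today=date(2024, 7, 1)))  # True
```

A check like this only tells you to look. Whether the cause is the threshold, the scoring model, or a change in lead mix still takes a human review.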

Rework Analysis: Based on industry benchmarks and the Threshold-Setting Method, most B2B teams that anchor their MQL threshold to a closed-won score distribution rather than a round number operate with a rejection rate of 15-25%, less than half the 40-60% rejection rates seen in organizations that inherit or guess their threshold. The practical implication: if your current rejection rate is above 35%, a threshold recalibration using your own closed-won data will typically improve MQL-to-pipeline conversion faster than any scoring model refinement. Rework's CRM and pipeline tools track score-at-SQL-conversion automatically, giving teams the closed-won dataset they need to run the Threshold-Setting Method without manual CRM exports. See rework.com/pricing for current plan details.

The Threshold as Shared Infrastructure

The MQL threshold isn't marketing's setting or sales' preference. It's infrastructure that both teams depend on.

When it's calibrated correctly, marketing knows what "good" looks like. Sales knows what to expect. Both teams can point to the same number when pipeline is healthy and diagnose the same number when it isn't. Disputes about lead quality become conversations about thresholds rather than accusations about effort.

That's the actual value of getting this right. Not the optimized conversion rate (though that matters too). It's that both teams are working from a shared definition of what qualified means. And when you have that, the rest of the marketing-sales alignment work gets a lot easier.

Frequently Asked Questions

How do I set the MQL-to-SQL score threshold?

Start with your closed-won data from the past 12-18 months. Export every deal that closed as won, then plot the lead score at the moment of SQL conversion (not the final enriched score, but the score at handoff). Look for the inflection point in the distribution where the percentage of won deals per score band climbs sharply. Set the threshold near the bottom of the high-concentration band. This is the Threshold-Setting Method, and it produces a defensible, data-grounded number rather than a round-number guess.

Why doesn't a threshold of 100 always work?

A threshold of 100 assumes that maximum score equals maximum readiness. But most lead scoring models assign points to a range of behaviors, some of which (email opens, a single content download, visiting a low-intent page) are weak predictors of close. A lead that accumulates 100 points slowly through low-signal actions may be less sales-ready than a lead at 70 points who visited the pricing page twice and requested a demo. The threshold should be calibrated to where your closed-won deals actually cluster, not to the maximum point total.

What's the right MQL rejection rate target?

Most aligned teams target an MQL rejection rate of 15-25%. A rejection rate below 15% might mean your threshold is too high: sales is getting very few leads and marketing may be over-qualifying. A rate above 35% is a strong signal that the threshold is too low or the scoring model has decayed, meaning leads are reaching sales before they match what sales recognizes as qualified. SiriusDecisions benchmark data puts the average misaligned organization at 40-60% rejection, which is largely wasted sales capacity.

How often should the MQL threshold be reviewed?

At minimum, quarterly. Explicit review triggers should include: a new product launch, a change in target ICP segment, a major campaign mix shift, significant rep team changes, or a rejection rate spike of 10+ percentage points over a rolling 90-day period. Teams that wait until a crisis surfaces (usually a pipeline gap conversation at a quarterly business review) typically discover the threshold has been wrong for six or more months before the problem became visible.

Should different segments have different thresholds?

Yes, in most cases. Enterprise leads often have slower behavioral signals than SMB leads. Outbound-assisted leads have a different intent profile than cold inbound. Expansion leads from existing customers have compressed buying cycles. A flat threshold applied across all segments systematically over-qualifies one group and under-qualifies another. Start with your highest-volume segment, set a calibrated threshold, then model separate thresholds for each major segment using the same closed-won analysis method.

Can we run an A/B test on threshold changes?

Yes, and it's the safest way to change the threshold without pipeline disruption. Split lead flow by region, rep team, or source channel. Apply the new threshold to one group and keep the existing threshold for the control group. Run for 6-8 weeks. Measure MQL-to-SQL conversion rate, SQL acceptance rate, time to first contact, and MQL-to-opportunity rate at 30 and 60 days. Avoid making the test designation visible in the CRM to prevent reps from treating test leads differently.

What's the difference between a fixed threshold and a dynamic threshold?

A fixed threshold is a single score gate: "Score ≥ 65 = SQL." A dynamic threshold adds conditional rules: "Score ≥ 65, OR (score ≥ 45 AND demo request submitted)." Dynamic thresholds are more accurate but harder to govern. The recommended middle ground is a fixed threshold as the primary gate with no more than two high-intent override conditions. More than two override conditions create a governance maze where no one can reliably predict which leads will trigger, making model disputes harder to resolve.

