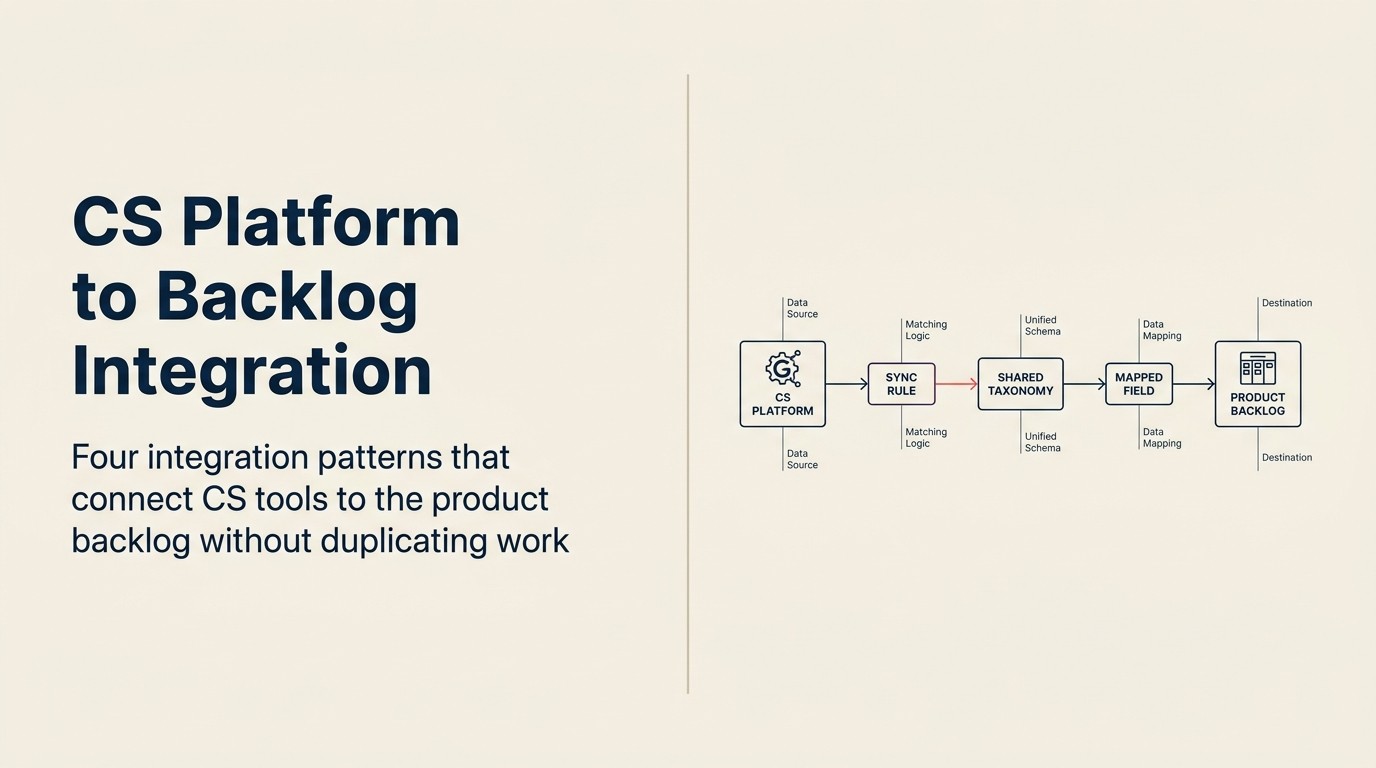

CS Platform to Product Backlog Integration: Making CS and Product Tools Work Together

Here's the copy-paste problem in its full absurdity: a CSM (Customer Success Manager) files a feature request in Gainsight with a verbatim customer quote, the account ARR, and three other accounts that have raised the same issue. That record sits in the CS platform. Two floors away (or two Slack channels away), a PM has a Jira backlog column called "CS requests" with seven vaguely labeled tickets: some from 14 months ago, none with ARR context, two with no description at all. The CSM's rich record and the PM's empty ticket have never been formally connected.

The tools exist. The integration doesn't.

And this isn't a vendor problem. Swapping Gainsight for ChurnZero, or Jira for Linear, doesn't fix it. The failure is in workflow design and taxonomy agreement: the decisions that have to happen before any integration tool is configured. Gartner's Magic Quadrant for Customer Success Management Platforms evaluates the major CS platforms on exactly these integration capabilities. It's a useful vendor-neutral starting point before committing to a stack. Most teams skip these decisions, wire the tools together with a Zapier zap, and six months later find that the integration is technically running but practically useless. The upstream stack (CRM, CS platform, and revenue intelligence wired together) determines how much structured data is available to route in the first place; see the aligned stack overview for context on how those layers interact.

This article is about getting the workflow design right first, and then choosing the integration pattern that fits your current tool maturity.

Why This Integration Is Harder Than It Looks

Key Facts: CS-to-Product Integration

- Only 23% of product teams have a formal, documented process for receiving and routing CS feedback into the product backlog, per Productboard's 2024 State of Product Management survey.

- The median mid-market SaaS company has 4.7 handoff points between a CSM raising a feature request and that request appearing in a PM's reviewed backlog, per CS Insider's 2024 CS Operations Report.

- Feature request graveyard rates (requests that enter the product backlog but are never reviewed or closed) run at 55-70% in companies without a shared taxonomy between CS and product, according to ProductPlan research.

- Teams with a shared CS-product feedback taxonomy report 3.1x faster time-from-request-to-PM-acknowledgment than those without, per Gainsight's CS Benchmark data.

CS platforms and product backlog tools are built on fundamentally different data models, and this matters more than which specific tools you're using. How those models connect to the rest of the customer record (including CRM data and shared account history) is covered in depth in the shared customer record architecture guide.

CS platforms record the world by account. Every data point (health score, NPS, feature request, support escalation) is anchored to a named customer account. The CS platform's primary index is the account record.

Product backlog tools record the world by feature or epic. A Jira ticket exists independently of accounts. It might represent 40 accounts or one. The product tool's primary index is the work item.

When a CSM logs a feature request in Gainsight, it's attached to a specific account. When a PM looks at that request in Jira, account context is often stripped out or encoded in a custom field that nobody maintains. The 1-to-many relationship (one feature ticket representing many accounts) is the core translation problem. It's not a technical limitation. It's a data model mismatch, and no amount of native integration solves it without deliberate taxonomy design.
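To make the 1-to-many translation concrete, here is a minimal sketch of rolling account-level requests up into feature-level summaries. It assumes each request already carries a shared `feature_key`; that identifier, the class name, and the sample accounts are hypothetical, and no CS platform is claimed to export data in this shape.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountRequest:
    """One feature request as a CS platform stores it: anchored to an account."""
    account: str
    arr: int          # account ARR in dollars
    feature_key: str  # hypothetical shared identifier for the requested capability


def roll_up(requests: list[AccountRequest]) -> dict[str, dict[str, int]]:
    """Group account-level requests into one feature-level summary per feature_key."""
    accounts_by_feature: dict[str, dict[str, int]] = defaultdict(dict)
    for r in requests:
        # Key by account so a customer who asks twice is counted once.
        accounts_by_feature[r.feature_key][r.account] = r.arr
    return {
        key: {"account_count": len(accts), "arr_at_stake": sum(accts.values())}
        for key, accts in accounts_by_feature.items()
    }


requests = [
    AccountRequest("Acme", 80_000, "bulk-export"),
    AccountRequest("Globex", 45_000, "bulk-export"),
    AccountRequest("Acme", 80_000, "bulk-export"),  # repeat ask from the same account
    AccountRequest("Initech", 30_000, "sso"),
]
summary = roll_up(requests)
```

Here `summary["bulk-export"]` comes out as two accounts and $125,000 at stake, even though three records were logged. The dedupe-by-account step is the part that usually goes missing when requests are copied across by hand.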

The other mismatch is cadence. CS platforms update continuously: health scores shift daily, support tickets open and close, CSMs log calls in real time. Product backlog tools operate on sprint cycles. A feature request that enters Jira on Tuesday might not be reviewed until the next sprint planning session in two weeks. The integration needs to account for both cadences: urgent routing (something that needs PM attention this week) and batch routing (the regular queue that feeds sprint planning).

What "integration" actually means here isn't a sync. It's a structured handoff with translation logic. A sync implies two systems staying in agreement. What CS-to-product actually needs is a record in the CS platform being converted into a record in the product tool, with the right fields populated, in the right format, with the right routing logic applied. Which pattern of integration gets you there depends on how much RevOps maturity and automation your team actually has today.

The Four Integration Patterns (Vendor-Neutral)

Named Framework: The 4-Pattern Integration Model

The 4-Pattern Integration Model classifies CS-to-product backlog integration by automation level and implementation complexity: Pattern 1 (manual-with-structure), Pattern 2 (native connector), Pattern 3 (CS Ops-owned middleware), and Pattern 4 (bidirectional status sync). Each pattern is vendor-neutral and designed to match a team's current RevOps maturity and account volume, rather than requiring a specific tool combination. The model's primary value is preventing teams from attempting Pattern 4 before they have the data infrastructure and engineering capacity to maintain it.

These four patterns are ordered by implementation complexity and automation level. The right choice depends on your current tool maturity, RevOps capacity, and account volume.

Pattern 1: Manual-with-structure. A shared template (Google Doc, Notion table, or a dedicated spreadsheet) that CS Ops populates weekly from the CS platform and sends to the PM lead. The PM lead reviews it in a designated time block and routes items into the backlog manually. No automation. No native integration. Just a defined format and a weekly rhythm.

Who it works for: teams with fewer than 50 active accounts, teams with an early-stage CS Ops function and no dedicated RevOps support, or teams where the PM lead and CS lead sit near each other and a weekly 20-minute sync covers more ground than any automated feed. The cost is PM time to review and route. The benefit is zero integration maintenance overhead.

Pattern 2: Native connector. Gainsight has a native Jira integration for creating tickets from CTAs (Calls to Action) and the Feedback module. ChurnZero connects to Jira, Asana, or Productboard via Zapier or Make. The connector passes a defined set of fields from the CS platform record to the product tool record.

What data actually flows: account name, account ARR (if mapped), the verbatim text of the feature request, the CSM who logged it, and a timestamp. What typically gets lost: the account count (how many other accounts raised the same issue), the workaround the customer is using, the urgency context, and any linkage to a product theme or category. These fields require manual mapping configuration that most teams skip during initial setup.

What to audit before going live with a native connector: are the custom fields on the Jira ticket or Productboard record actually mapped and required? If they're optional, they'll be empty 80% of the time within 60 days.
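That audit can be run as a quick check against the connector's field mapping. The sketch below assumes the mapping can be written out as a plain dictionary; the snake_case field names, the `target` labels, and the `required` flag are illustrative and are not any connector's actual configuration format.

```python
# Fields named in the paragraphs above; the snake_case names are illustrative.
EXPECTED_FIELDS = [
    "account_name", "account_arr", "request_text", "submitting_csm",
    "account_count", "current_workaround", "urgency", "product_theme",
]


def audit_mapping(mapping: dict[str, dict]) -> dict[str, list[str]]:
    """Report expected fields that are unmapped, and mapped fields left optional."""
    unmapped = [f for f in EXPECTED_FIELDS if f not in mapping]
    optional = [
        f for f in EXPECTED_FIELDS
        if f in mapping and not mapping[f].get("required", False)
    ]
    return {"unmapped": unmapped, "optional": optional}


# Hypothetical connector configuration: CS platform field -> product tool field.
mapping = {
    "account_name": {"target": "Account", "required": True},
    "account_arr": {"target": "ARR", "required": False},
    "request_text": {"target": "Description", "required": True},
    "submitting_csm": {"target": "Reporter", "required": True},
}
findings = audit_mapping(mapping)
```

With this sample mapping, the four commonly lost fields show up as unmapped and `account_arr` shows up as optional, which is the field most likely to go empty once the initial setup enthusiasm fades.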

Pattern 3: CS Ops-owned middleware. RevOps or CS Ops runs the routing logic as a dedicated function: not automation, but a defined human step with structured criteria. CS Ops reviews the incoming feedback queue weekly, applies the threshold-based routing criteria (which items meet the ARR and account-count bar to go to the product backlog?), formats the handoff record using the minimum viable format (below), and submits it to the PM team via the agreed product tool.

Who it works for: teams with 50-200 accounts, an active RevOps or CS Ops function, and a PM team that has complained about noisy or unformatted CS feedback. This pattern requires RevOps maturity but produces the cleanest, best-formatted inputs to the product backlog of any pattern short of bidirectional sync.

Pattern 4: Bidirectional status sync. The CS platform and product tool stay in sync bidirectionally. When a PM updates a ticket status (in review / on roadmap / declined / shipped), the CS platform account record reflects that status. When a CSM logs a new feature request, it automatically creates a ticket in the product tool.

Who it works for: teams with mature RevOps, a data engineering resource who can maintain the sync, and 200+ accounts where manual review at any step creates bottlenecks. This is the gold standard. It's also the rarest implementation at mid-market, because a bidirectional sync needs ongoing engineering attention whenever either tool changes its API or data model.

Most mid-market teams are at Pattern 1 or Pattern 2, want to be at Pattern 3, and have someone on the team advocating for Pattern 4 before the team is ready for it. Before any pattern works reliably, both teams need to agree on what data actually crosses the seam.

Rework Analysis: Based on industry benchmarks, the most common integration failure is not tool selection. It's taxonomy mismatch between CS and product. Teams that establish a shared five-category taxonomy before configuring any integration tool reduce feature request graveyard rates (requests that enter the backlog but are never reviewed or closed) by roughly half compared to teams that rely on each system's default labels. The minimum viable handoff record (six fields: feature request statement, account count, ARR at stake, verbatim quote, current workaround, submitting CSM) is the single structural change that most improves signal quality across all four integration patterns.

What Data Should Actually Cross the Seam

Before configuring any integration pattern, agree on the minimum viable handoff record. This is the set of fields every feature request must have before it leaves the CS platform and enters the product backlog. The VoC pipeline that feeds product defines the upstream structure that generates these records. The handoff format works best when the intake process is already disciplined.

The 6-field minimum viable handoff record:

| Field | Description | Why it matters |
|---|---|---|
| Feature request statement | One clear sentence describing what the customer needs (in job terms, not solution terms) | Gives PM context to evaluate without calling the CSM |
| Account count | Number of distinct accounts that have raised this issue | Signals pattern vs. one-off |
| ARR at stake | Total ARR of those accounts | Turns account count into business weight |
| Verbatim quote | At least one direct quote from a customer (exact words, not paraphrase) | Connects PM to actual customer language |
| Current workaround | What accounts are doing today to compensate | Signals urgency and adoption risk |
| Submitting CSM | Named CSM, reachable if PM has questions | Closes the loop for follow-up |
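One way to enforce the six fields is to model the handoff record as a typed structure and refuse to submit anything incomplete. This is a minimal sketch; the class name, the attribute names, and the sample values are assumptions made for illustration, not a schema from any CS or product tool.

```python
from dataclasses import dataclass, fields


@dataclass
class HandoffRecord:
    """The six-field minimum viable handoff record (attribute names are illustrative)."""
    request_statement: str
    account_count: int
    arr_at_stake: int
    verbatim_quote: str
    current_workaround: str
    submitting_csm: str

    def missing_fields(self) -> list[str]:
        """Return the names of fields that are blank (text) or not positive (numbers)."""
        missing = []
        for f in fields(self):
            value = getattr(self, f.name)
            if isinstance(value, str) and not value.strip():
                missing.append(f.name)
            elif isinstance(value, int) and value <= 0:
                missing.append(f.name)
        return missing


# Hypothetical record with the workaround left blank.
record = HandoffRecord(
    request_statement="Finance teams need to export invoices in bulk at month end",
    account_count=4,
    arr_at_stake=210_000,
    verbatim_quote="We download them one by one, every single month.",
    current_workaround="   ",
    submitting_csm="J. Rivera",
)
gaps = record.missing_fields()
```

Here `gaps` is `["current_workaround"]`, so the record would be held in the CS platform until the CSM fills it in.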

What does NOT belong in the backlog: health scores, renewal dates, CSM sentiment ratings, NPS scores, support ticket counts. These are CS-internal data points. They're meaningful in the CS platform where they have account context. In Jira, stripped of that context, they create noise and privacy exposure without improving PM decision quality.
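A simple way to keep CS-internal data out of the backlog is an allowlist: only the six handoff fields pass, and everything else is dropped by default. The sketch below assumes the CS record is available as a dictionary; the key names and sample values are hypothetical.

```python
# Only these keys may cross into the product tool (names are illustrative).
HANDOFF_FIELDS = {
    "request_statement", "account_count", "arr_at_stake",
    "verbatim_quote", "current_workaround", "submitting_csm",
}


def to_backlog_payload(cs_record: dict) -> tuple[dict, list[str]]:
    """Return the allowed fields plus a sorted list of what was left behind."""
    payload = {k: v for k, v in cs_record.items() if k in HANDOFF_FIELDS}
    dropped = sorted(k for k in cs_record if k not in HANDOFF_FIELDS)
    return payload, dropped


# Hypothetical CS platform record mixing handoff fields with CS-internal data.
cs_record = {
    "request_statement": "Admins need audit logs they can filter by user",
    "account_count": 3,
    "arr_at_stake": 160_000,
    "health_score": 62,
    "nps_score": 7,
}
payload, dropped = to_backlog_payload(cs_record)
```

An allowlist is chosen here over a blocklist because a new CS-internal field added next quarter stays out of Jira without anyone remembering to update the filter.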

CS Platform Notes by Tool

Gainsight has the most mature native integration capabilities among the major CS platforms. The CTA-to-Jira path works well when CTA templates are configured to require the six fields above before the CTA can be submitted. The Feedback module adds a dedicated intake layer, but it requires discipline to avoid it becoming a feature request graveyard inside Gainsight before anything reaches Jira. What RevOps usually builds on top: a weekly automation that exports the Feedback queue above a defined ARR threshold to a formatted CSV that CS Ops reviews before routing to product.

ChurnZero connects to Jira, Trello, and other tools primarily via Zapier or Make, not a native integration. PlayBooks can trigger feature request logging workflows. But the Zapier path requires careful field mapping: the default setup passes very little structured data. ChurnZero teams that run Pattern 3 (CS Ops middleware) get better handoff quality than those relying on the Zapier automation, because CS Ops applies the minimum viable format before submission.

Catalyst and Vitally are lighter-weight CS platforms that have fewer native integration options. Both typically operate via CSV export or Slack-to-Jira routing at this stage of their product maturity. This isn't a limitation for teams under 100 accounts. A weekly Slack message with a formatted handoff record, routed to a dedicated PM Slack channel, works. It's Pattern 1 with a Slack delivery layer. For larger account volumes, teams on Catalyst or Vitally typically run Pattern 3.

All four CS platforms share one architectural characteristic: they track feedback at the account level, not the feature level. The translation from account-level records to feature-level backlog tickets is always a manual or custom-built step. No CS platform today produces feature-centric output natively.

Product Backlog Tool Notes by Tool

Productboard has the strongest native CS-to-product intake capability of the group. The Insights portal accepts feedback in free text with account tagging, and the feedback-to-feature linkage allows PMs to connect multiple customer inputs to a single feature record. For CS teams that can direct CSMs to log requests directly into Productboard's Insights portal (rather than the CS platform), this is the cleanest integration path. For teams where CS Ops does the routing, Productboard's API supports formatted submissions.

Jira is flexible but requires intentional configuration. The default Jira ticket fields don't include ARR, account count, or verbatim quote. These require custom fields. And custom fields only produce value if they're required and maintained. A Jira integration built without the custom field requirements enforced at submission will degrade to empty fields within 90 days as CSMs or automation tools stop populating them. Jira works well for teams that invest in the custom field configuration upfront and have CS Ops enforce the minimum viable format.

Linear is minimal by design. It's built for fast-moving engineering teams and doesn't have an intake or feedback aggregation layer. Using Linear as the product destination for CS feedback requires a CS Ops routing layer upstream that formats and batches requests before they enter Linear. Pattern 3 (CS Ops middleware) is almost always the right choice for Linear-using teams.

Aha! is strong at roadmap visualization and strategic planning. CS feedback usually enters Aha! via the Ideas portal (where customers can submit directly) or via API from CS Ops. The Ideas portal is useful for structured feedback collection but requires customers to have access and motivation to submit. That works for mature enterprise customer advisory boards but less well for mid-market day-to-day feedback.

The Taxonomy Problem (and How to Fix It)

The single biggest integration failure, across all patterns and all tool combinations, is a taxonomy mismatch between CS and product. Gartner's Critical Capabilities research for CS platforms identifies shared taxonomy and feedback routing as the two capabilities that most differentiate high-performing CS teams from the rest. CS tags a feature request as "reporting." Product has a Jira label called "analytics." These are the same thing. They never get linked. The pattern across 15 accounts disappears in the tag inconsistency. This is closely related to the pattern recognition problem across CSMs, where the same disconnected tagging that hides patterns within a CS team also hides them at the CS-to-product seam.

Building a shared tagging schema is a prerequisite for any integration pattern above Pattern 1. It's owned by CS Ops and a designated PM, not by the tool vendor's default labels.
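The "reporting" versus "analytics" mismatch can be illustrated with a small alias table that maps each team's labels onto one shared tag before anything is counted. The table contents, the `UNMAPPED` bucket, and the sample tag counts below are assumptions for illustration only.

```python
from collections import Counter

# Hypothetical alias table agreed between CS Ops and the designated PM.
SHARED_TAG = {"reporting": "analytics", "analytics": "analytics"}


def count_by_shared_tag(raw_tags: list[str]) -> Counter:
    """Count requests per shared tag; unknown tags are surfaced, not guessed."""
    counts: Counter = Counter()
    for tag in raw_tags:
        counts[SHARED_TAG.get(tag.strip().lower(), "UNMAPPED")] += 1
    return counts


# Nine requests tagged by CS, six labeled by product, one stray label.
raw = ["reporting"] * 9 + ["Analytics"] * 6 + ["exports"]
counts = count_by_shared_tag(raw)
```

Counted under the shared tag, the 15 requests appear as one pattern instead of a 9 and a 6 living in separate systems. The `UNMAPPED` bucket gives the quarterly taxonomy review a concrete list of labels to resolve.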

Five categories cover 80% of CS feedback in most mid-market SaaS products:

- Feature gap: the product doesn't have a capability customers need

- Workflow friction: the capability exists but is too difficult to use in the customer's actual workflow

- Missing integration: customers need the product to connect to another tool in their stack

- Performance or reliability: speed, uptime, or consistency issues affecting customer outcomes

- Documentation or training: customers can't figure out how to use what exists

These five categories apply across all CS platforms and all product tools. When CS Ops tags every incoming request with one of these five categories before routing, and when product uses the same five categories in their backlog labels, the pattern data survives the handoff.
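If both teams want the five categories enforced rather than merely documented, the taxonomy can be expressed as a closed enumeration that rejects anything else. A minimal sketch, with the enum and function names chosen for illustration:

```python
from enum import Enum


class Category(Enum):
    """The five shared feedback categories."""
    FEATURE_GAP = "feature gap"
    WORKFLOW_FRICTION = "workflow friction"
    MISSING_INTEGRATION = "missing integration"
    PERFORMANCE_RELIABILITY = "performance or reliability"
    DOCS_TRAINING = "documentation or training"


def parse_category(label: str) -> Category:
    """Accept a label only if it matches one of the five categories.

    Case and extra whitespace are ignored; any other label raises ValueError.
    """
    normalized = " ".join(label.lower().split())
    return Category(normalized)


tagged = parse_category("  Workflow   Friction ")
```

A free-form label such as `"reporting"` raises `ValueError` here, which forces the tagging decision to happen at intake instead of being deferred to whoever reads the backlog later.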

Taxonomy governance: CS Ops and a designated PM review the taxonomy quarterly. Questions to evaluate: are there requests showing up in "feature gap" that are actually "workflow friction"? Is "documentation or training" being used as a catch-all for things that are actually "workflow friction"? The taxonomy should stay stable. Resist adding new categories without removing or merging old ones.

Routing Logic: What Triggers a Handoff

Not every CS platform record should reach the product backlog. Routing criteria determine what crosses the seam.

Threshold-based routing criteria (illustrative; adjust to your ARR profile):

- Account count: three or more accounts raised the same issue

- ARR at stake: $150K+ in combined ARR

- Severity: any single-account issue where churn risk is flagged or the customer raised it in a QBR

Items meeting any of these criteria go to the backlog. Items below all three stay in the CS platform for monitoring, not routing.
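The three criteria combine with a logical OR, which is easy to get wrong when it lives only in someone's head. Here is a minimal sketch using the illustrative thresholds above; the class and attribute names are assumptions, and the numbers should be adjusted to your own ARR profile.

```python
from dataclasses import dataclass

MIN_ACCOUNTS = 3            # illustrative threshold from the list above
MIN_COMBINED_ARR = 150_000  # illustrative threshold, in dollars


@dataclass
class Candidate:
    """A feature request being considered for routing (names are illustrative)."""
    account_count: int
    combined_arr: int
    churn_risk_flagged: bool = False
    raised_in_qbr: bool = False


def meets_routing_bar(c: Candidate) -> bool:
    """True if the request meets ANY one of the three routing criteria."""
    return (
        c.account_count >= MIN_ACCOUNTS
        or c.combined_arr >= MIN_COMBINED_ARR
        # Severity: applies even when only a single account raised the issue.
        or c.churn_risk_flagged
        or c.raised_in_qbr
    )


stays_in_cs = Candidate(account_count=2, combined_arr=90_000)
goes_to_backlog = Candidate(account_count=1, combined_arr=20_000, churn_risk_flagged=True)
```

The first candidate misses all three bars and stays in the CS platform for monitoring. The second is a single small account, but the churn flag alone is enough to route it.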

Urgent path vs. batch path. The urgent path handles items where a customer has escalated, churn risk is flagged, or a C-level executive raised the issue. These get routed to the PM directly (Slack message + formatted ticket) within 24 hours. The batch path handles the regular queue: items meeting the threshold criteria but not escalated. These accumulate weekly and are reviewed in the CS-PM 1:1 cadence or submitted as a weekly batch to the backlog.
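The two paths can be sketched as a small decision function plus a deadline. The function names are hypothetical, and the seven-day window for the batch path is an assumption standing in for "the next weekly batch"; only the 24-hour urgent window comes from the text above.

```python
from datetime import datetime, timedelta
from enum import Enum


class Path(Enum):
    URGENT = "urgent"
    BATCH = "batch"


def choose_path(customer_escalated: bool, churn_risk_flagged: bool,
                raised_by_c_level: bool) -> Path:
    """Pick the path for an item that has already met the routing thresholds."""
    if customer_escalated or churn_risk_flagged or raised_by_c_level:
        return Path.URGENT
    return Path.BATCH


def pm_deadline(path: Path, logged_at: datetime) -> datetime:
    """Latest time the item should reach the PM.

    Urgent items: within 24 hours. Batch items: within 7 days, an assumed
    upper bound for a weekly batch.
    """
    window = timedelta(hours=24) if path is Path.URGENT else timedelta(days=7)
    return logged_at + window


logged = datetime(2025, 1, 7, 9, 0)
path = choose_path(customer_escalated=False, churn_risk_flagged=False,
                   raised_by_c_level=True)
deadline = pm_deadline(path, logged)
```

A request raised by a C-level executive on the morning of January 7 needs to be in front of the PM by the morning of January 8. The same request without any escalation signal would wait for the weekly batch.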

Who reviews the queue: a designated PM liaison is the cleanest model at mid-market. One PM owns the CS feedback intake queue and routes within the PM team. Rotating PM ownership works at smaller scale but creates accountability gaps when the rotating PM is deep in a sprint. CS Ops gating (CS Ops reviews before anything hits the PM queue) works best in Pattern 3.

Closing the Loop: Getting Status Back to CS

The return path (PM decisions flowing back to CS so CSMs can update customers) is harder than the intake path, and it fails more often. McKinsey's research on customer-centric B2B organizations shows that the highest-impact change companies make is building bidirectional communication channels: not just from customer to product, but from product back to the field. Closing the feedback loop with customers requires deliberate mechanics on the CS side. The integration patterns here cover the internal handoff, but the customer-facing loop is a separate discipline.

The gap between what "shipped" means to a PM and what it means to a CSM preparing for a QBR is real. A PM marks a ticket "shipped" when the feature is deployed to production. The CSM needs to know: is it available to all accounts? Is it behind a feature flag? Does it require migration? Is there documentation? "Shipped" without answers to these questions doesn't help a CSM close the loop with the customer who raised the request eight months ago.

Minimum viable status update: four states CS needs to know, communicated by PM in the agreed-upon format:

- In review: PM is evaluating; no timeline yet

- On roadmap: committed for a future quarter; indicate which one if possible

- Declined: not planned; include the reason (out of scope, too small, duplicate of existing feature, etc.)

- Shipped: deployed; include the rollout scope and any account action required
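The four states, and the detail each one owes to CS, can be written down as a small validation. This sketch assumes a single free-text `detail` field; the class names and the wording of the messages are illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    IN_REVIEW = "in review"
    ON_ROADMAP = "on roadmap"
    DECLINED = "declined"
    SHIPPED = "shipped"


@dataclass
class StatusUpdate:
    status: Status
    detail: str = ""  # target quarter, decline reason, or rollout scope


def problems(update: StatusUpdate) -> list[str]:
    """List what is missing before the update is useful to a CSM."""
    has_detail = bool(update.detail.strip())
    if update.status is Status.DECLINED and not has_detail:
        return ["declined: add the reason"]
    if update.status is Status.SHIPPED and not has_detail:
        return ["shipped: add rollout scope and any account action required"]
    # In review needs no detail; the quarter for on roadmap is optional.
    return []


bare_shipped = problems(StatusUpdate(Status.SHIPPED))
```

A bare "shipped" fails the check, while "on roadmap" passes without a quarter because the text above asks for one only if possible.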

The mechanism for this return path depends on the integration pattern. In Pattern 4 (bidirectional sync), the CS platform updates automatically when the PM updates the ticket. In Patterns 1-3, PM either updates the shared template/CS platform directly, or CS Ops pulls a weekly status update from the product tool and reflects it back into CS platform account records. The quarterly customer feedback review is where the status of the full feature request queue gets reviewed at a higher level, but individual account updates can't wait for the quarterly session.
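For Patterns 1-3, the weekly status pull is the 1-to-many problem in reverse: one ticket status has to fan out to every account that raised the request. A minimal sketch, assuming CS Ops keeps a ticket-to-accounts lookup; the ticket IDs, account names, and function name are hypothetical.

```python
def fan_out(ticket_status: dict[str, str],
            ticket_accounts: dict[str, list[str]]) -> dict[str, dict[str, str]]:
    """Turn ticket -> status into account -> {ticket: status}."""
    by_account: dict[str, dict[str, str]] = {}
    for ticket, status in ticket_status.items():
        # Tickets with no linked accounts are skipped rather than guessed at.
        for account in ticket_accounts.get(ticket, []):
            by_account.setdefault(account, {})[ticket] = status
    return by_account


# Hypothetical weekly pull from the product tool.
ticket_status = {"FR-1": "on roadmap", "FR-2": "declined"}
# Hypothetical lookup maintained by CS Ops at handoff time.
ticket_accounts = {"FR-1": ["Acme", "Globex"], "FR-2": ["Acme"]}

updates = fan_out(ticket_status, ticket_accounts)
```

The result gives each CSM a per-account view: Acme has two open answers, Globex has one. Without the lookup recorded at handoff time, this step turns into a search through old Slack threads.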

The 30-Day Integration Audit

Before implementing or changing your integration pattern, document how a single feature request actually travels today. Walk it:

Day 1-3: Pick one feature request that a CSM raised in the past 30 days. Trace it from the CS platform record to wherever it is now in the product tool (or find that it never made it). Count the handoff points. Who touched it? What format did it take at each step? What was lost along the way?

Day 4-7: Interview the CSM who raised it and the PM who (should have) received it. Ask the CSM: do you know what happened to this request? Ask the PM: do you have this in your backlog? If yes, can you find it? If not, where did it go?

Day 8-14: Map the current state in a single diagram. Handoff points, data loss points, latency at each step. Present this to VP CS, Head of Product, and RevOps lead.

Day 15-21: Based on the audit, select the pattern (1-4) that fits your current tool maturity and RevOps capacity. Draft the minimum viable handoff record fields. Propose the shared taxonomy (five categories).

Day 22-30: Implement Pattern 1 or Pattern 2, whichever is achievable in the remaining time. Run it for the next quarter before evaluating whether to move to a more complex pattern.
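The trace from Days 1-14 is easier to present if it is recorded as data rather than as a narrative. The sketch below assumes each step is written down as a location, an arrival date, and the set of fields still present; the steps, dates, and field names are invented for illustration.

```python
from datetime import date

# Hypothetical trace of one request: (location, arrival date, fields still present).
trace = [
    ("CS platform record", date(2025, 3, 3),
     {"quote", "arr", "account_count", "workaround"}),
    ("Slack message to CS lead", date(2025, 3, 6), {"quote", "arr"}),
    ("Jira ticket", date(2025, 3, 20), {"quote"}),
]


def audit(steps: list[tuple[str, date, set[str]]]) -> list[dict]:
    """For each handoff, report latency in days and which fields were lost."""
    rows = []
    for (_, prev_date, prev_fields), (name, arrived, present) in zip(steps, steps[1:]):
        rows.append({
            "handoff_to": name,
            "latency_days": (arrived - prev_date).days,
            "fields_lost": sorted(prev_fields - present),
        })
    return rows


report = audit(trace)
```

For this invented trace, the report shows two handoffs: three days and two lost fields on the way to Slack, then fourteen days and the ARR figure lost on the way to Jira. That is the shape of finding the Day 8-14 diagram is meant to surface.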

The audit almost always reveals that the problem is simpler than it appeared. A shared taxonomy and a minimum viable format fix more than any technical integration. The feature request graveyard problem is a workflow problem, not a tool selection problem. What closes that graveyard for good is getting status back to CS so CSMs can close the loop with customers.

Frequently Asked Questions

What is the 4-Pattern Integration Model for CS-to-product backlog?

The 4-Pattern Integration Model classifies CS-to-product backlog connections by automation level and RevOps maturity: Pattern 1 (manual-with-structure, a shared template with weekly routing), Pattern 2 (native connector, using tools like Gainsight's Jira integration or Zapier), Pattern 3 (CS Ops-owned middleware, where a human routing function applies threshold-based criteria before anything hits the PM queue), and Pattern 4 (bidirectional status sync, requiring a data engineer to maintain real-time parity between both systems). Most mid-market teams operate at Pattern 1 or 2. Pattern 3 produces the cleanest backlog inputs without requiring engineering resources.

Why do CS-to-product integrations fail even when the tools are connected?

The most common failure is taxonomy mismatch, not tool failure. CS tags a feature request as "reporting." Product has a Jira label called "analytics." The pattern across 15 accounts disappears in that tag inconsistency. Gartner's Critical Capabilities research for CS platforms identifies shared taxonomy and feedback routing as the two capabilities that most differentiate high-performing CS teams. A shared five-category taxonomy (feature gap, workflow friction, missing integration, performance or reliability, documentation or training) resolves this before any integration tool is configured.

What six fields must every CS-to-product handoff record include?

The minimum viable handoff record for a feature request crossing from the CS platform to the product backlog includes: (1) a feature request statement in job terms rather than solution terms, (2) account count of distinct accounts that raised the issue, (3) ARR at stake across those accounts, (4) at least one verbatim customer quote in the customer's exact words, (5) the current workaround the account is using today, and (6) the submitting CSM's name for follow-up. What does not belong in the handoff record: health scores, renewal dates, CSM sentiment ratings, or NPS scores. These carry meaning in the CS platform with account context but create noise when stripped of it in Jira or Productboard.

How does routing logic determine what crosses from CS to the product backlog?

Threshold-based routing criteria define what triggers a handoff. Illustrative thresholds for a mid-market team: three or more accounts raised the same issue, $150K or more in combined ARR at stake, or any single-account issue where churn risk is flagged or the customer raised it in a QBR. Items meeting any criterion go to the backlog. Items below all three stay in the CS platform for monitoring. A separate urgent path routes escalations to the PM within 24 hours: customer escalations, churn-flagged accounts, or issues raised by a C-level executive. The batch path handles the regular queue, which accumulates weekly and is reviewed in the CS-PM 1:1 or submitted as a weekly batch.

What data should not be included in the CS-to-product handoff record?

Health scores, renewal dates, CSM sentiment ratings, NPS scores, and support ticket counts belong in the CS platform where they have account context. In a Jira or Productboard ticket, stripped of that context, they add noise without improving PM decision quality. They also create a privacy exposure risk when account-level sentiment data sits in a product tool accessed by a broader engineering and design team. The handoff record should contain only the information a PM needs to evaluate the request, route it, and reach the submitting CSM for clarification.

How does bidirectional status sync work, and who needs it?

Bidirectional sync keeps the CS platform and product backlog tool in real-time agreement: when a PM updates a ticket status (in review, on roadmap, declined, shipped), the corresponding CS platform account record updates automatically. When a CSM logs a new feature request, it automatically creates a ticket in the product tool. This is Pattern 4: the gold standard and the rarest at mid-market. It requires a data engineering resource to build and maintain the sync, plus API stability from both tools. Most mid-market teams achieve the same outcome operationally through Pattern 3 (CS Ops middleware) combined with a weekly PM status update to CS Ops, which takes more human time but zero engineering maintenance.

