
Claude vs ChatGPT vs Gemini: Which LLM Fits Your Business in 2026

Mar 17, 2026

You're evaluating which large language model to standardize on for your team of 50 to 500 people. Your IT lead wants to know about data privacy. Your Head of Marketing wants to know which one writes better. Your CFO wants a number. And you'd like to actually make a decision before the next board meeting.

The problem isn't a shortage of reviews. It's that most comparisons are written for developers or power users who already live in the tooling. This one is written for the operator: the CEO, COO, CRO, or Head of Ops who needs to allocate seats, manage adoption risk, and justify the cost line. Here's the decision through your lens.

TL;DR

| Dimension | Claude (Anthropic) | ChatGPT (OpenAI) | Gemini (Google) |
|---|---|---|---|
| Best for | Long-form reasoning, analysis, policy writing, careful output | Breadth of tasks, widest ecosystem, coding, integrations | Teams inside Google Workspace; multimodal data work |
| Model lineup | Opus 4, Sonnet 4, Haiku (speed/cost tiers) | GPT-5, GPT-4o, o3-mini (speed/cost tiers) | Gemini 2.5 Pro, Gemini 2.5 Flash (speed/cost tiers), embedded in Workspace |
| Primary strength | Nuanced writing quality and instruction-following | Custom GPTs, widest tool integrations, built-in DALL-E image generation | Native Google Docs/Sheets/Drive, multimodal reasoning |
| Enterprise data privacy | Strong: no training on business (Team, Enterprise, API) data by default | Strong: no training on business (Team, Enterprise, API) data by default; more admin controls on Enterprise | Strong on Workspace Business/Enterprise tiers |
| Weakest area | Smaller third-party integration ecosystem | Can be verbose; output requires more editing on long tasks | Weaker standalone writing quality vs. Claude; Google lock-in risk |
| Pricing model | Per-seat (Team) or API usage + Enterprise custom | Per-seat (Team) or API usage + Enterprise custom | Per-seat via Google Workspace add-on or API |
| Ideal buyer | COOs and CMOs who prioritize output quality | CIOs and CTOs who prioritize ecosystem breadth | CIOs already on Google Workspace |

What Each LLM Is Actually Built For

Claude (Anthropic)

Anthropic's design philosophy centers on "Constitutional AI," a training approach meant to make the model helpful, harmless, and honest by design rather than only through after-the-fact safety filters. Anthropic's research on Constitutional AI explains the training approach in detail. That philosophy shapes the output. Claude tends to be more careful and more nuanced, and it is better at following complex multi-step instructions over long documents.

In practical terms, this shows up in:

- Legal, compliance, and policy document review where ambiguity matters

- Long-form content where maintaining consistent tone and structure across 5,000 words is required

- Analysis tasks where the model needs to hold multiple constraints in memory simultaneously

- Customer-facing copy where "AI-sounding" phrases hurt brand perception

Marketing teams evaluating AI writing tools specifically should also see Jasper vs Copy.ai vs Writer. That piece compares purpose-built content tools, several of which run on these same LLMs.

Claude's three tiers (Haiku for speed, Sonnet 4 for balance, Opus 4 for highest capability) let teams match cost to task complexity.
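
Here is a minimal sketch of what "match cost to task complexity" can look like as a routing rule. The `pick_tier` helper and its thresholds are hypothetical illustrations, not Anthropic guidance. The thresholds are 5,000 words of input, a count of constraints, and a customer-facing flag. In practice you would calibrate them against your own pilot results.

```python
from enum import Enum


class Tier(Enum):
    # Tier names follow the article; which tasks map to which tier is an assumption.
    HAIKU = "Haiku"
    SONNET = "Sonnet 4"
    OPUS = "Opus 4"


def pick_tier(input_words: int, constraint_count: int, customer_facing: bool) -> Tier:
    """Route a task to the cheapest tier that plausibly handles it (illustrative rules only)."""
    # Long inputs with many simultaneous constraints go to the most capable tier.
    if input_words > 5_000 and constraint_count >= 5:
        return Tier.OPUS
    # Customer-facing or moderately constrained work gets the balanced tier.
    if customer_facing or constraint_count >= 2:
        return Tier.SONNET
    # Short, simple, internal tasks (quick summaries, tagging) go to the fast tier.
    return Tier.HAIKU


if __name__ == "__main__":
    print(pick_tier(200, 1, customer_facing=False).value)
    print(pick_tier(1_500, 3, customer_facing=True).value)
    print(pick_tier(12_000, 6, customer_facing=False).value)
```

The rule defaults down, not up. A task reaches Opus only when both conditions hold. That reserves the most expensive tier for the long, constraint-heavy work this section associates with it.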

ChatGPT (OpenAI)

OpenAI's GPT models are the most widely deployed LLMs in the world, which means the widest ecosystem: custom GPTs, integrations, and the largest community of prompt engineers and shared workflows. OpenAI's product overview covers the full model lineup and team plans. GPT-5 pushes the capability ceiling higher, while o3-mini trades raw capability for speed and cost efficiency on structured reasoning tasks.

ChatGPT's practical advantages show up in:

- Technical writing and code generation (strongest coding benchmark results)

- Workflows that need connections to external tools (it has the broadest set of integrations, connectors, and custom GPTs)

- Teams where someone already knows how to use it (training cost is lower when everyone's seen it)

- DALL-E image generation built directly into the interface

Gemini (Google)

Gemini 2.5 Pro is Google's strongest reasoning model, competitive with the others on most benchmarks. Google's Gemini for Google Workspace overview shows how it integrates with Docs, Sheets, and Gmail. But Gemini's real differentiator isn't the model: it's the distribution. If your team runs on Google Workspace (Docs, Sheets, Drive, Meet, Gmail), Gemini is already embedded in those tools as a first-party experience.

Gemini's practical advantages:

- Native "Help me write" and "Summarize" actions directly inside Google Docs and Gmail

- Gemini Advanced for Workspace means no separate tool to onboard

- Multimodal: can reason over images, PDFs, spreadsheets, and video natively

- Strong data analysis in Google Sheets with natural language prompts

| Capability | Claude | ChatGPT | Gemini |
|---|---|---|---|
| Long-document instruction following | Excellent | Good | Good |
| Code generation | Good | Excellent | Good |
| Writing quality (editorial) | Excellent | Good | Moderate |
| Reasoning over data/spreadsheets | Good | Good | Excellent (in Sheets) |
| Image generation | No (reads images and PDFs; text output only) | Yes (DALL-E 3) | Yes (Imagen) |
| Video understanding | No | No | Yes (Gemini 2.5 Pro) |
| Web search | Yes (claude.ai) | Yes (ChatGPT Search) | Yes (Google Search grounding) |
| Native Workspace integration | No | Partial (Microsoft 365 Copilot uses GPT) | Yes (Google Workspace) |

Decision by Business Goal

This is the frame that actually matters. Find the row that matches your primary business goal going into the evaluation.

| Business Goal | Best Fit | Runner-Up | Why |
|---|---|---|---|
| Cut time-to-first-draft on written deliverables by 40%+ | Claude | ChatGPT | Claude's instruction-following produces cleaner drafts with fewer editing cycles |
| Give the dev team an AI coding assistant that ships faster | ChatGPT | Gemini | GPT-5 and o3-mini lead on code benchmarks; largest coding community |
| Standardize AI across the company without a new tool rollout | Gemini | ChatGPT | Gemini inside Workspace means no new login and no new tool. It's already there |
| Build internal AI workflows and automations via API | ChatGPT | Claude | OpenAI has the largest API ecosystem and most mature developer tooling |
| Improve legal, compliance, or policy document review quality | Claude | ChatGPT | Constitutional AI training makes Claude better at nuance and constraint-following |
| Get board-ready ROI inside 90 days | Gemini (if on Workspace) | ChatGPT | Fastest time-to-adoption with no change management if Workspace is already standard |
| Reduce vendor dependence on a single Big Tech provider | Claude | None | Of these three, Anthropic is the only vendor that is neither Google-owned nor primarily partnered with Microsoft |
| Support a multilingual team across 5+ languages | ChatGPT | Claude | GPT-4o has the strongest multilingual benchmark coverage |

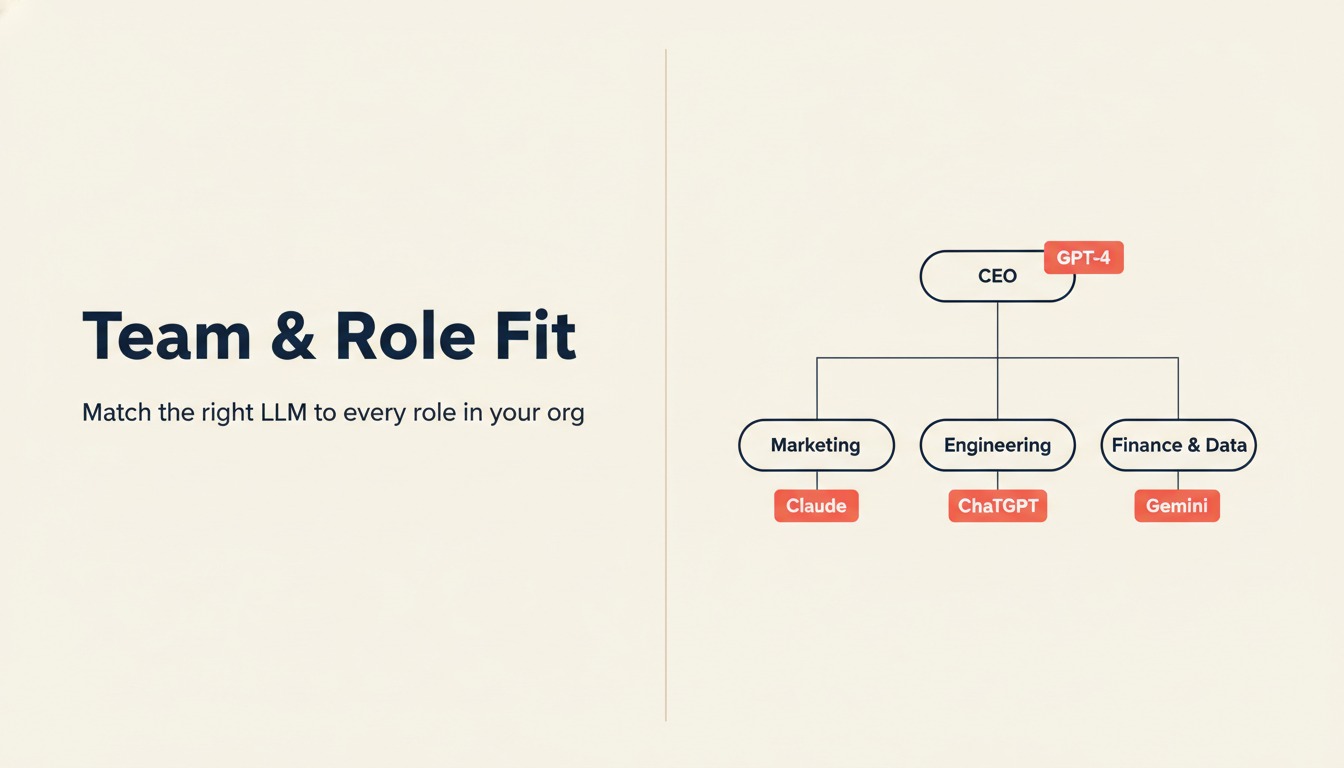

Team and Role Fit

| Role | Recommended Tool | Primary Use Case |
|---|---|---|
| CEO / Founder | Claude | Board prep, strategy memos, speech drafts, long-form thinking |
| COO / Head of Ops | Claude or ChatGPT | SOPs, process documentation, cross-team communication |
| CRO / VP Sales | ChatGPT | Sales email sequences, call prep, objection handling, proposals |
| CMO / Head of Marketing | Claude | Content strategy, brand voice consistency, campaign briefs, editorial |
| CFO / Finance | Gemini | Spreadsheet analysis, financial narrative, Google Slides summaries |
| CIO / Head of IT | ChatGPT | API integration planning, code review, infrastructure documentation |
| Head of Legal / Compliance | Claude | Contract analysis, policy review, regulatory summary |
| People Ops / HR | Gemini or Claude | Job descriptions, onboarding docs, Workspace-native workflows |
| Data / RevOps | Gemini | Google Sheets automation, data narrative, dashboard commentary |
| Marketing writers | Claude | Blog drafts, ad copy, landing pages, long-form SEO content |

Enterprise Features

Before buying seats at scale, verify these with your vendor. The table reflects plans as of Q1 2026; pricing and feature tiers change.

| Feature | Claude Team / Enterprise | ChatGPT Team / Enterprise | Gemini Business / Enterprise |
|---|---|---|---|
| SSO (SAML/OIDC) | Enterprise only | Enterprise only | Yes (Google Workspace SSO) |
| Admin console | Enterprise only | Enterprise only | Via Google Admin Console |
| Usage analytics per user | Enterprise only | Enterprise only | Via Workspace Admin |
| No training on your data | Team and Enterprise by default | Team and Enterprise by default | Business and Enterprise by default |
| Data residency options | Enterprise (limited regions) | Enterprise (EU available) | Enterprise (multiple regions) |
| Private deployment / VPC | Enterprise (select customers) | Enterprise | Not standard |
| API access | Yes (via api.anthropic.com) | Yes (via api.openai.com) | Yes (via Google AI Studio / Vertex AI) |
| Priority support | Enterprise | Enterprise | Enterprise |
| Audit logs | Enterprise | Enterprise | Via Google Workspace |
| Custom retention policies | Enterprise | Enterprise | Via Google Workspace |

If you have 10 to 50 seats and aren't on Enterprise, you'll use Team plans. Team plans give you basic admin controls and commercial data terms (no training on your data by default). They do not include SSO, audit logs, or custom data residency. For most mid-size companies evaluating general productivity use cases, Team is fine. For use cases touching customer data, legal documents, or financial records, Enterprise controls are non-negotiable.

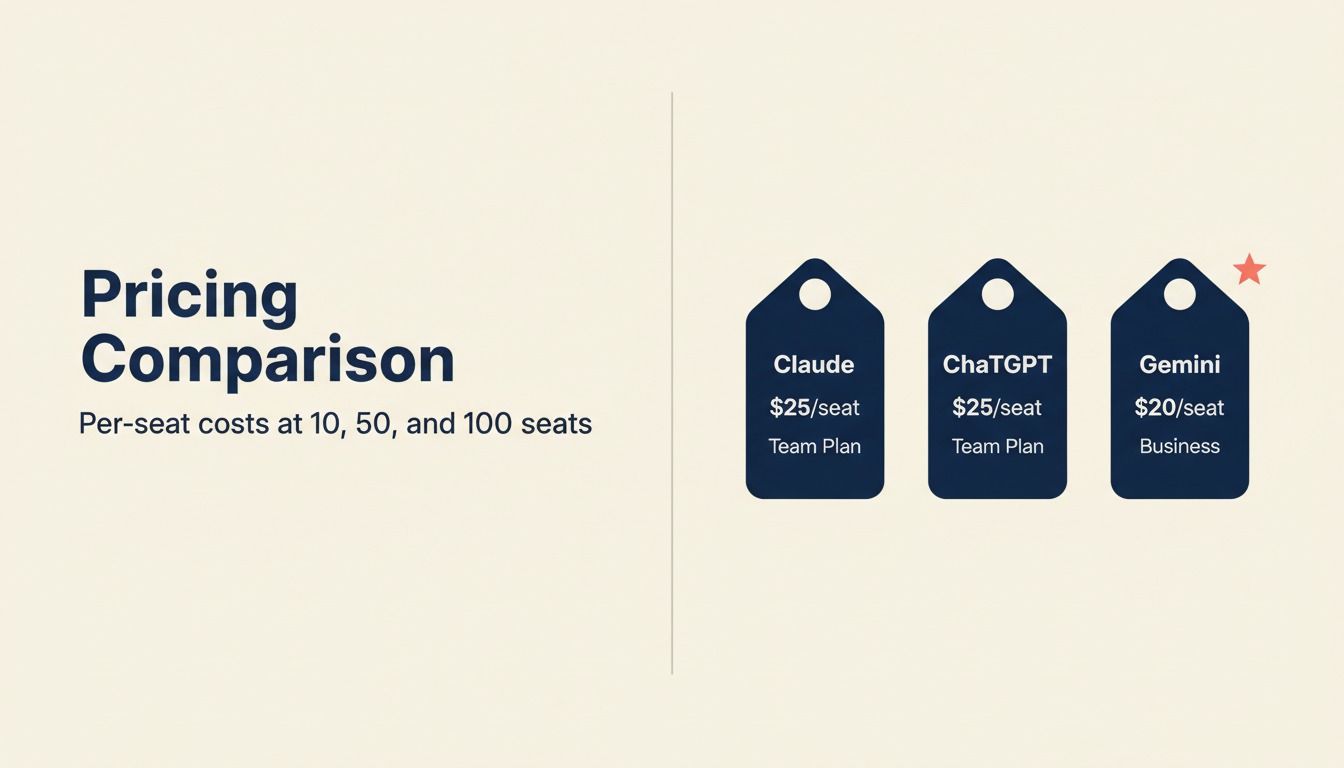

Pricing at Real Team Sizes

Pricing as of Q1 2026. Verify current rates on vendor pricing pages before budgeting.

| Plan | Per-Seat / Month (annual billing) | 10 seats / year | 50 seats / year | 100 seats / year |
|---|---|---|---|---|
| ChatGPT Team | $25/user/month | $3,000 | $15,000 | $30,000 |
| ChatGPT Enterprise | Contact sales (est. $30-60/user/month) | ~$3,600-$7,200 | ~$18,000-$36,000 | ~$36,000-$72,000 |
| Claude Team | $25/user/month | $3,000 | $15,000 | $30,000 |
| Claude Enterprise | Contact sales (est. $30-60/user/month) | ~$3,600-$7,200 | ~$18,000-$36,000 | ~$36,000-$72,000 |
| Gemini Business (Workspace add-on) | $20/user/month | $2,400 | $12,000 | $24,000 |
| Gemini Enterprise (Workspace add-on) | $30/user/month | $3,600 | $18,000 | $36,000 |
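
Each annual figure is the per-seat monthly rate × 12 months × seats. Below is a minimal sketch of that arithmetic using the Q1 2026 rates above. The Enterprise rows use the article's $30-60 estimate rather than a quoted price, so they come out as ranges. The `Plan` class and `fmt` helper are illustrative names, not part of any vendor tool.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    low_per_seat_month: float   # USD per user per month, annual billing
    high_per_seat_month: float  # same as low when the list price is fixed

    def annual_cost(self, seats: int) -> tuple[float, float]:
        """Return the (low, high) annual cost: rate x 12 months x seats."""
        return (self.low_per_seat_month * 12 * seats,
                self.high_per_seat_month * 12 * seats)


# Rates from the Q1 2026 table; Enterprise rows use the $30-60 estimate.
PLANS = [
    Plan("ChatGPT Team", 25, 25),
    Plan("ChatGPT Enterprise (est.)", 30, 60),
    Plan("Claude Team", 25, 25),
    Plan("Claude Enterprise (est.)", 30, 60),
    Plan("Gemini Business", 20, 20),
    Plan("Gemini Enterprise", 30, 30),
]


def fmt(low: float, high: float) -> str:
    """Format a cost as a single figure or a low-high range."""
    return f"${low:,.0f}" if low == high else f"${low:,.0f}-${high:,.0f}"


if __name__ == "__main__":
    for plan in PLANS:
        cells = [fmt(*plan.annual_cost(seats)) for seats in (10, 50, 100)]
        print(f"{plan.name:<28}" + " | ".join(cells))
```

Once you have a negotiated Enterprise rate, replace the estimate in the matching `Plan` entry. That is a one-line change.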

Key points for the CFO:

- Gemini is cheaper if you're already paying for Google Workspace: you're adding an AI layer on top of existing licenses, not buying a net-new tool budget line

- Claude and ChatGPT Team are identically priced at $25/seat: the decision is capability and fit, not cost

- Enterprise pricing is opaque for all three: budget conservatively at 2x the Team rate for initial modeling, then negotiate volume discounts

- API usage costs are separate: if you plan to build internal automations or integrations, add an API budget line. Costs depend heavily on usage volume and model tier selected (Haiku/Flash are 5-10x cheaper per token than Pro/Opus)
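
To size that API line, multiply the token cost of one request by the request volume. The sketch below uses a hypothetical workload: 2,000 requests a day, each with 3,000 input and 500 output tokens, over 22 working days. The prices are placeholders with a 10x gap between a small and a top tier. They are not any vendor's actual rates, so substitute each vendor's current per-million-token prices.

```python
def monthly_api_cost(requests_per_day: int,
                     input_tokens: int,
                     output_tokens: int,
                     input_price_per_m: float,
                     output_price_per_m: float,
                     working_days: int = 22) -> float:
    """Estimate monthly API spend in USD from per-million-token prices."""
    cost_per_request = (input_tokens * input_price_per_m
                        + output_tokens * output_price_per_m) / 1_000_000
    return cost_per_request * requests_per_day * working_days


# Hypothetical placeholder prices (USD per million input/output tokens), chosen
# only to illustrate a 10x gap between a small tier and a top tier.
SMALL_TIER = {"input_price_per_m": 1.00, "output_price_per_m": 5.00}
TOP_TIER = {"input_price_per_m": 10.00, "output_price_per_m": 50.00}

# Hypothetical workload: 2,000 requests a day, 3,000 input and 500 output tokens each.
WORKLOAD = {"requests_per_day": 2_000, "input_tokens": 3_000, "output_tokens": 500}

if __name__ == "__main__":
    small = monthly_api_cost(**WORKLOAD, **SMALL_TIER)
    top = monthly_api_cost(**WORKLOAD, **TOP_TIER)
    print(f"Small tier: ${small:,.2f}/month")
    print(f"Top tier:   ${top:,.2f}/month")
```

The estimate is linear in both price and volume, so tier selection moves the budget line as much as usage does. Here the same workload costs about $242 a month on the small tier and about $2,420 on the top tier.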

Implementation and Change Management

This is where most AI rollouts underperform. The tool isn't the bottleneck. Adoption is. McKinsey's research on AI adoption in the enterprise consistently shows that change management, not technology selection, determines whether AI investments pay off.

| Factor | Claude | ChatGPT | Gemini |
|---|---|---|---|
| Time to first productive user | 1-2 days (familiar chat UI) | 1-2 days (most teams have used it before) | Hours if team is on Workspace (it's already there) |
| Learning curve | Low for general use; moderate for API/advanced prompting | Low; widest existing familiarity | Very low for Workspace users |
| Who needs training | Everyone; focus on prompt quality | Everyone; focus on prompt quality | Minimal for basic use; moderate for Gemini Advanced |
| Internal champion needed | Yes: someone to define use cases and share prompts | Yes: without direction, teams revert to shallow use | Helpful but less critical given Workspace embedding |
| Integration with existing stack | Via API or Claude.ai | Via API, Zapier, native integrations, or ChatGPT.com | Native in Google Workspace; also via API |
| Governance setup time | 1-2 weeks (Enterprise controls) | 1-2 weeks (Enterprise controls) | Faster if Workspace admin is already configured |

The fastest ROI path: pick one team (Marketing or Ops), define three specific use cases, run a 30-day pilot, measure output quality and time saved, then expand. Don't try to roll out to 200 people before you have one team who can teach the others.
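
Below is a minimal sketch of a pilot scorecard. It assumes you log daily active users and the time each deliverable takes, both before and during the pilot. The `PilotResult` class and its default go/no-go thresholds (60% adoption, 20% time saved) are hypothetical. Agree on your own bar before the pilot starts so the decision isn't made after the fact.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotResult:
    seats: int
    daily_active_users: list[int]   # one entry per working day of the pilot
    baseline_minutes: list[float]   # time per deliverable before the pilot
    pilot_minutes: list[float]      # time per deliverable with the AI tool

    def adoption_rate(self) -> float:
        """Average share of licensed seats in use on a given day."""
        return mean(self.daily_active_users) / self.seats

    def time_saved_pct(self) -> float:
        """Percent reduction in average time per deliverable."""
        before = mean(self.baseline_minutes)
        return (before - mean(self.pilot_minutes)) / before * 100

    def ready_to_expand(self, min_adoption: float = 0.6, min_saved_pct: float = 20.0) -> bool:
        """Hypothetical go/no-go rule: both thresholds must be met."""
        return self.adoption_rate() >= min_adoption and self.time_saved_pct() >= min_saved_pct


if __name__ == "__main__":
    marketing = PilotResult(
        seats=10,
        daily_active_users=[6, 8, 7, 7],
        baseline_minutes=[120, 100, 80],
        pilot_minutes=[60, 70, 50],
    )
    print(f"Adoption: {marketing.adoption_rate():.0%}")
    print(f"Time saved per deliverable: {marketing.time_saved_pct():.0f}%")
    print("Expand" if marketing.ready_to_expand() else "Keep piloting")
```

With these sample numbers, seven of ten seats are active on an average day. A deliverable that used to take 100 minutes now takes 60, a 40% reduction, so the rule says expand.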

Risk and Governance

This is the section your CIO and legal team will ask about.

| Risk Dimension | Claude | ChatGPT | Gemini |
|---|---|---|---|
| Training on your data | No: Anthropic does not train on Team, Enterprise, or API data by default | No for Team or Enterprise by default | No for Business/Enterprise Workspace tiers |
| Data processing location | US by default; EU option on Enterprise | US by default; EU option on Enterprise | Multiple regions; Google Cloud standard |
| Third-party subprocessors | Limited; Anthropic publishes DPA | Multiple (standard for SaaS) | Google's existing subprocessor list |
| Vendor concentration risk | Independent (Amazon and Google are investors; neither owns it) | Microsoft partnership (not owned) | Google-owned; lock-in risk is real |
| Model availability SLA | Enterprise SLA available | Enterprise SLA available | Google Workspace SLA (99.9%) |
| Hallucination controls | Better on factual constraints; still hallucinates | Still hallucinates; web search grounding helps | Still hallucinates; search grounding helps |
| Audit trail for outputs | Enterprise only | Enterprise only | Via Google Vault |
| Regulatory frameworks supported | SOC 2; GDPR; HIPAA (Enterprise, BAA required) | SOC 2; GDPR; HIPAA (Enterprise, BAA required) | SOC 2; GDPR; HIPAA; ISO 27001 (Workspace standards) |

On vendor lock-in: Gemini carries the highest lock-in risk of the three because its strongest value is embedded inside Google's own products. If your company ever moves off Google Workspace, you lose the native integration advantage. Claude and ChatGPT are infrastructure-agnostic and work regardless of which productivity suite you use.

On hallucination: all three still generate confident wrong answers. Any use case that requires factual accuracy (financial analysis, legal research, technical specs) needs a human review step. Web-search grounding helps but doesn't eliminate the problem. For teams that need real-time web research specifically, see Perplexity vs ChatGPT Search vs Gemini for how the search-grounded versions compare as research tools.

When Each Is the Right Call

Choose Claude when:

- Your primary use cases are writing, editing, summarizing, and document analysis (not coding or automation)

- You need the model to follow complex, multi-constraint instructions without drifting (legal review, compliance writing, brand voice guidelines)

- Your team produces a lot of long-form content (proposals, reports, policy docs) where editing time is the cost you're trying to reduce

- You want an AI vendor that isn't owned by Google or primarily partnered with Microsoft (relevant if you have board-level concerns about Big Tech concentration)

- Your CIO cares about an independent DPA with clear data processing commitments

Choose ChatGPT when:

- Your developers are the primary users and need the strongest coding and API tooling

- You're building internal automations or need the broadest integration ecosystem

- Your team already has the most experience with ChatGPT, and retraining on a new interface has hidden costs

- You want image generation built into the same chat interface as text (DALL-E 3 ships inside ChatGPT)

- You need multilingual output at scale across more than five languages

Choose Gemini when:

- Your entire company runs on Google Workspace and you want AI with zero new-tool friction

- Your Finance and Data teams live in Google Sheets and want natural-language data analysis

- You want the fastest time to value: embedding AI in the tools people already use beats any other rollout path

- Your IT team already manages Google Admin Console and wants AI governance under the same infrastructure

- Cost efficiency at scale matters and you're willing to accept some writing quality tradeoff

Decision Framework

| Pick this | If this describes your situation |
|---|---|
| Claude | Writing quality is your primary metric; your team produces long-form documents; you need complex instruction-following |
| Claude | You want an independent AI vendor with a clear Constitutional AI safety model |
| ChatGPT | Developer productivity is the top priority; broadest ecosystem and coding performance matters |
| ChatGPT | Your team already uses it; switching costs outweigh capability differences |
| ChatGPT | You need image generation and multilingual depth in one tool |
| Gemini | You're fully on Google Workspace and want AI that's already inside the tools your team uses every day |
| Gemini | Finance and data teams are the primary use case; Sheets integration is the wedge |
| Gemini | Fastest rollout timeline with lowest change management overhead is the success metric |
| Start with a pilot, then decide | Your team has genuinely split use cases. Give Claude to Marketing, ChatGPT to Engineering, Gemini to Finance for 30 days and let the data decide |

What to Do Next

Don't buy seats before you know your use cases. The most common mistake is purchasing 100 seats companywide before a single team has defined what they're actually trying to accomplish.

A better path:

Pick one team and three specific use cases. Marketing, for instance: first draft of blog posts, campaign briefs, email copy. Or Ops: SOP rewrites, meeting summaries, vendor communication.

Run a 30-day pilot with five to ten users. Measure three things:

- Output quality: does it reduce editing time?
- Adoption rate: are people actually using it daily?
- One business outcome: for example, did campaign output volume increase?

Compare across tools if you're undecided. Give the same prompt to Claude, ChatGPT, and Gemini and evaluate the output for your specific use case. Benchmark comparison tables mean less than whether the output fits your brand voice and workflow.

Then buy seats. Starting with Team plans for the pilot team, then expanding after a defined pilot period, avoids the sunk-cost trap of a company-wide rollout that doesn't stick.

Set governance before you scale. Before sensitive documents start flowing through any of these tools, get your CIO or Head of IT to confirm how data is handled. On Team plans, that means checking retention and training settings. On Enterprise, it means a DPA review.
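
During the same-prompt comparison, reviewers can end up scoring the brand rather than the output. One way to prevent that is to hide tool names until scoring is done. This is a minimal sketch under two assumptions: outputs are collected by hand, so no vendor APIs are called, and reviewers rate each anonymized output from 1 to 5. The `blind_labels` and `unblind_scores` helpers are hypothetical.

```python
import random
from collections import defaultdict
from statistics import mean


def blind_labels(tools: list[str], seed: int = 0) -> dict[str, str]:
    """Assign anonymous labels ("A", "B", ...) to tools in a shuffled order."""
    shuffled = list(tools)
    random.Random(seed).shuffle(shuffled)
    return {chr(ord("A") + i): tool for i, tool in enumerate(shuffled)}


def unblind_scores(label_to_tool: dict[str, str],
                   reviewer_scores: list[dict[str, int]]) -> dict[str, float]:
    """Average each tool's 1-5 scores across reviewers after revealing the labels."""
    by_tool = defaultdict(list)
    for scores in reviewer_scores:
        for label, score in scores.items():
            by_tool[label_to_tool[label]].append(score)
    return {tool: mean(values) for tool, values in by_tool.items()}


if __name__ == "__main__":
    labels = blind_labels(["Claude", "ChatGPT", "Gemini"], seed=42)
    # Reviewers see only "Output A/B/C"; each scores every output from 1 to 5.
    reviewer_scores = [
        {"A": 4, "B": 3, "C": 5},
        {"A": 5, "B": 3, "C": 4},
    ]
    for tool, avg in sorted(unblind_scores(labels, reviewer_scores).items()):
        print(f"{tool}: {avg:.1f}")
```

A fixed seed lets whoever runs the comparison reproduce the label assignment. Reviewers only ever see A, B, and C.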

The right LLM is the one your team actually uses, applied to a workflow where AI output measurably reduces time or improves quality. That's a 30-day experiment, not a features spreadsheet. If your engineering team's use case is the primary driver, see Cursor vs GitHub Copilot vs Windsurf for the coding-specific comparison. And for a broader take on how AI copilots and agents are evolving inside business workflows, see AI copilots vs agents.

Senior Customer Retention Strategist
